Analytics Engineer | Modern Data Stack | DataOps & FinOps Advocate

I build enterprise-grade analytics platforms that transform raw data into reliable, governed business insights.

My work focuses on:

- 🏗️ Analytics Engineering: Designing Kimball Star Schemas & Medallion architectures using Snowflake + dbt Core.

- 📊 Governed BI: Building version-controlled (PBIP/TMDL) Power BI semantic models optimized for sub-second rendering.

- ⚙️ DataOps & Quality: Enforcing strict data contracts and CI pipelines (GitHub Actions) for zero-break deployments.

- 🐍 Root-Cause Diagnostics: Writing memory-optimized (PyArrow/Pandera) Python pipelines to mathematically isolate operational bottlenecks.

- 💡 ROI Focus: I don't just write SQL; I deliver business insights, not just dashboards.

I focus on tools that enable scalable, version-controlled, and tested data platforms.

| Domain | Technologies |
|---|---|
| ☁️ Cloud & Data Warehousing | |
| 🔄 Data Transformation | |
| 📊 Business Intelligence | |
| 🐍 Python & Analytics | |
| ⚙️ DataOps & CI/CD | |
| 🤖 AI, Tooling & Docs | |

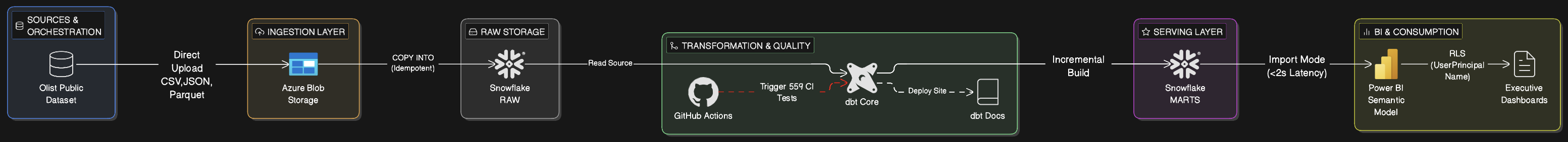

An enterprise-grade, governed analytics platform built on 1.55M+ Brazilian e-commerce records. Designed to replace ad-hoc SQL chaos with a single, highly performant Source of Truth.

- Cloud Data Architecture: Engineered a Kimball dimensional data warehouse (Star Schema) in Snowflake using dbt, applying a "Shift-Left" compute strategy for optimal downstream BI performance.

- DataOps & Zero-Break CI/CD: Built a rigorous pipeline via GitHub Actions with ephemeral PR schemas, enforcing 100% test coverage (559 tests), SQL linting, and strict dbt Data Contracts.

- Governed Semantic Layer: Deployed a version-controlled Power BI semantic model (PBIP/TMDL) featuring 50+ pre-certified DAX measures and dynamic Row-Level Security (RLS) via a decoupled Bridge Table.

- "Trust, Don't Trash" Quality Framework: Designed a dual-metric data quality engine that flags dirty data upstream rather than deleting it, maintaining perfect financial alignment.

- ⚡ 93% Faster Queries: Reduced reporting latency from 45+ seconds (Direct SQL) to <1.2s dashboard rendering (VertiPaq Import).

- 💰 42% Cost Reduction: Slashed Snowflake compute costs via `unique_key` incremental materializations and strict warehouse auto-suspension.

- 🛡️ 100% Financial Reconciliation: Achieved penny-perfect alignment with source systems while actively surfacing R$ 1.13M in at-risk revenue for the operations team.
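The "flag, don't delete" idea behind the quality framework can be illustrated with a small, standard-library-only sketch. The record fields (`order_id`, `amount`, `delivered_at`) and the verification rule are assumptions made up for this example; they are not taken from the project's actual models.

```python
from decimal import Decimal

# Hypothetical order records; all field names and values are illustrative.
orders = [
    {"order_id": "A1", "amount": Decimal("120.50"), "delivered_at": "2018-01-05"},
    {"order_id": "A2", "amount": Decimal("80.00"), "delivered_at": None},
    {"order_id": "A3", "amount": Decimal("45.25"), "delivered_at": "2018-01-09"},
]


def flag_records(records):
    """Return copies of the records with an is_verified flag; no row is dropped."""
    flagged = []
    for record in records:
        # Assumed rule for this sketch: a record counts as verified
        # when it carries a delivery date.
        is_verified = 1 if record["delivered_at"] is not None else 0
        flagged.append({**record, "is_verified": is_verified})
    return flagged


flagged = flag_records(orders)

# Two metrics side by side: the full total still reconciles with the source,
# while the verified total shows how much revenue sits on clean records.
total = sum(r["amount"] for r in flagged)
verified_total = sum(r["amount"] for r in flagged if r["is_verified"] == 1)
at_risk_total = total - verified_total
```

Because every row survives, `total` matches the source system exactly, and `at_risk_total` gives a direct measure of the revenue attached to flagged records.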

A fail-loud, memory-optimized Python diagnostic pipeline designed to mathematically isolate a critical SLA failure hidden within a 110K+ row One-Big-Table (OBT).

- MemoryOps & FinOps Architecture: Engineered a zero-credit EDA pipeline by caching a Snowflake OBT locally. Slashed local memory usage by 44% utilizing PyArrow columnar storage and three-pass data downcasting.

- Fail-Loud Data Contracts: Implemented a 4-layer data quality gate using Pandera runtime validation to enforce schema integrity, seamlessly isolating 1,600+ "ghost deliveries" without dropping the audit trail.

- Vectorized Performance: Enforced a strict zero `.apply()` policy via CI linting (Ruff). Replaced slow row-by-row iteration with 100% vectorized Pandas operations, achieving up to 100x faster transformations.

- Modular DRY Engineering: Replaced 300+ lines of messy notebook wrangling with a modular, 1,400-line typed Python library (`src/`). Ensured 100% environment reproducibility across machines using `uv.lock`.
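As a rough illustration of what integer downcasting buys, here is a minimal sketch using only the standard-library `array` module as a stand-in for the Pandas/PyArrow dtypes used in the project. The function name `downcast_ints` and the `delay_days` column are hypothetical, and this shows a single downcasting pass, not the project's three-pass routine.

```python
from array import array

# Signed integer typecodes, ordered from narrowest to widest.
_INT_TYPECODES = ("b", "h", "i", "q")


def downcast_ints(values):
    """Store integers in the narrowest signed array type that can hold them all."""
    if not values:
        return array("b")
    lo, hi = min(values), max(values)
    for code in _INT_TYPECODES:
        # Derive the representable range from the platform's actual item size.
        bits = 8 * array(code).itemsize
        if -(2 ** (bits - 1)) <= lo and hi <= 2 ** (bits - 1) - 1:
            return array(code, values)
    raise OverflowError("values do not fit in a 64-bit signed integer")


# Hypothetical column: delivery delays in days.
delay_days = [3, 12, 0, 45, 7]
wide = array("q", delay_days)        # 8 bytes per value
narrow = downcast_ints(delay_days)   # 1 byte per value for this range

wide_bytes = wide.itemsize * len(wide)
narrow_bytes = narrow.itemsize * len(narrow)
```

The values are unchanged; only the storage width shrinks, which is the same trade that narrower Pandas or PyArrow integer types make at scale.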

- 🎯 Mathematical Precision: Reconciled 4 North Star KPIs across DAX, SQL, and Pandas with zero discrepancies, proving absolute cross-platform governance.

- 🛑 False-Signal Debunking: Disproved a false AI-generated signal, shifting executive focus away from an anomaly (n=3) toward the true structural bottleneck in RJ/SP.

- 💡 Quantified Actionable ROI: Delivered the statistical proof needed for operations to trigger automated CRM rescue workflows, actively de-risking R$ 1.13M in vulnerable revenue.

I validate my technical expertise through rigorous, industry-recognised certifications across the Modern Data Stack.

💡 Note: Every tool listed above is actively demonstrated in production-grade code within my pinned repositories.

I treat data as software. My projects are governed by four core architectural principles:

- 🛡️ Trust, Don't Trash: I never silently drop bad data. I use upstream boolean flags (e.g., `is_verified = 1`) to isolate dirty records. This guarantees 100% financial reconciliation with source systems while quantifying the exact "Cost of Poor Quality" for business operations.

- 🏎️ Shift-Left Compute: Complex math (date logic, string parsing, window functions) belongs in the Data Warehouse (dbt/Snowflake). BI tools (Power BI/DAX) should only perform light aggregations and visual rendering. This is the secret to sub-second dashboards.

- 🧱 Data Contracts over Hope: Schemas must fail fast. I use explicit column selection in Power Query and strict data type enforcement in dbt YAML to ensure upstream changes trigger pipeline errors, preventing silent dashboard corruption.

- 💰 DataFinOps by Default: I optimize ruthlessly for cloud compute costs. I favor incremental materializations (`unique_key`), columnar memory pruning (PyArrow), and X-Small warehouse auto-suspension over brute-force full refreshes.
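The fail-fast principle can be sketched in plain Python. This is an illustrative stand-in, not the dbt YAML or Power Query configuration itself; the `CONTRACT` mapping, its column names, and the `ContractViolation` exception are all hypothetical.

```python
# Hypothetical contract: column names and expected types are illustrative.
CONTRACT = {"order_id": str, "amount": float, "is_verified": int}


class ContractViolation(Exception):
    """Raised when a row does not match the agreed schema."""


def enforce_contract(rows, contract=CONTRACT):
    """Return the rows unchanged, or raise on the first schema mismatch."""
    for index, row in enumerate(rows):
        missing = contract.keys() - row.keys()
        unexpected = row.keys() - contract.keys()
        if missing or unexpected:
            # A renamed or dropped upstream column stops the run here
            # instead of flowing silently into a dashboard.
            raise ContractViolation(
                f"row {index}: missing={sorted(missing)}, "
                f"unexpected={sorted(unexpected)}"
            )
        for column, expected_type in contract.items():
            if not isinstance(row[column], expected_type):
                raise ContractViolation(
                    f"row {index}: column {column!r} expected "
                    f"{expected_type.__name__}, got {type(row[column]).__name__}"
                )
    return rows
```

For example, if an upstream change renames `amount` to `total`, `enforce_contract` raises `ContractViolation` on the first affected row, which is the behavior the principle asks for: a loud pipeline error rather than a quietly wrong report.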

I am currently exploring Data & Analytics Engineering opportunities. Whether your team needs to untangle a legacy SQL codebase, optimize Snowflake compute costs, or build a governed Power BI semantic model from scratch—let's talk.

⚡ Architecture built to scale. Diagnostics built to recover revenue. Thanks for stopping by.
