Added propagation of plugin broker failing error #11344

sleshchenko merged 3 commits into eclipse-che:master

Conversation

garagatyi

left a comment


Looks good. I have commented on some stuff, please, take a look at inlined comments.

I would LOVE to see unit tests in the PR too! I know that it is me who didn't put them in place in the first place, but the code is getting complicated. I started adding unit tests in my recent PRs and would really love some assistance :-)

    if (pods.size() > 1) {
      throw new InternalInfrastructureException(
          "There are multiply pods in plugin broker environment");


WDYT if we change two checks to just one? Something like:

Plugin brokering supports one pod only. Workspace 'ID' contains 'N' pods


Good idea.

Changed to

    format("Plugin broker environment must have only one pod. Workspace %s contains %s pods.", workspaceId, pods.size())

Do you think it is good enough?
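As an illustration of the merged check, here is a minimal sketch. It assumes the two original checks reduce to `pods.size() != 1`; the class `BrokerPodCheck`, the method `requireSinglePod`, and the simplified exception class are hypothetical stand-ins, not the code from this PR.

```java
import java.util.Arrays;
import java.util.List;

/** Hypothetical stand-in for Che's InternalInfrastructureException. */
class InternalInfrastructureException extends Exception {
  InternalInfrastructureException(String message) {
    super(message);
  }
}

final class BrokerPodCheck {
  /** Returns the only pod name, or throws if the environment does not hold exactly one pod. */
  static String requireSinglePod(String workspaceId, List<String> pods)
      throws InternalInfrastructureException {
    // Assumption: a single size check replaces the separate "no pods" and "too many pods" checks.
    if (pods.size() != 1) {
      throw new InternalInfrastructureException(
          String.format(
              "Plugin broker environment must have only one pod. Workspace %s contains %s pods.",
              workspaceId, pods.size()));
    }
    return pods.get(0);
  }

  public static void main(String[] args) {
    try {
      requireSinglePod("workspace123", Arrays.asList("broker-a", "broker-b"));
    } catch (InternalInfrastructureException e) {
      System.out.println(e.getMessage());
    }
  }
}
```

A single check keeps the message uniform for both the empty and the multi-pod case, at the cost of not distinguishing them in the code path.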

    String encodedTooling = event.getTooling();
    if (event.getStatus() == null
        || event.getWorkspaceId() == null
        || (event.getError() == null && event.getTooling() == null)) {


Makes sense. I've added a check before the future is completed, so the whole plugin broker process fails if a DONE event is published but the plugins are null (4858738).
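The check described here could look roughly like the sketch below. `BrokerEvent`, `BrokerEventHandler`, and the use of `IllegalStateException` are hypothetical simplifications for illustration; the actual event and exception types in the PR differ.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;

/** Hypothetical, simplified stand-in for the broker status event. */
final class BrokerEvent {
  enum Status { STARTED, DONE, FAILED }

  final Status status;
  final String workspaceId;
  final List<String> tooling; // null when the broker reported no plugins

  BrokerEvent(Status status, String workspaceId, List<String> tooling) {
    this.status = status;
    this.workspaceId = workspaceId;
    this.tooling = tooling;
  }
}

final class BrokerEventHandler {
  /** Completes the future on DONE, failing it when the plugin list is missing. */
  static void handle(BrokerEvent event, CompletableFuture<List<String>> finishFuture) {
    if (event.status != BrokerEvent.Status.DONE) {
      return; // other statuses are left out of this sketch
    }
    if (event.tooling == null) {
      // A DONE event without plugins is treated as a failure of the whole broker process.
      finishFuture.completeExceptionally(
          new IllegalStateException(
              "Broker for workspace " + event.workspaceId + " reported DONE without plugins"));
    } else {
      finishFuture.complete(event.tooling);
    }
  }

  public static void main(String[] args) {
    CompletableFuture<List<String>> future = new CompletableFuture<>();
    handle(new BrokerEvent(BrokerEvent.Status.DONE, "workspace123", null), future);
    System.out.println(future.isCompletedExceptionally());
  }
}
```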

    - finishFuture.completeExceptionally(
    -     new InfrastructureException("Broker process failed with error: " + event.getError()));
    + InfrastructureException ex =
    +     new InfrastructureException("Broker process failed with error: " + event.getError());


Can you also include the workspace ID in the message? Just in case.
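One way to act on this suggestion is sketched below. The message wording, the helper names `failureMessage` and `fail`, and the simplified `InfrastructureException` class are assumptions made for illustration, not the change that was merged.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CompletionException;

/** Hypothetical stand-in for Che's InfrastructureException. */
class InfrastructureException extends Exception {
  InfrastructureException(String message) {
    super(message);
  }
}

final class BrokerFailureReporter {
  /** Builds an error message that names the affected workspace. */
  static String failureMessage(String workspaceId, String error) {
    return String.format(
        "Broker process for workspace %s failed with error: %s", workspaceId, error);
  }

  /** Fails the given future with an exception carrying the workspace ID. */
  static void fail(CompletableFuture<?> finishFuture, String workspaceId, String error) {
    finishFuture.completeExceptionally(
        new InfrastructureException(failureMessage(workspaceId, error)));
  }

  public static void main(String[] args) {
    CompletableFuture<Void> future = new CompletableFuture<>();
    fail(future, "workspace123", "image pull failed");
    try {
      future.join();
    } catch (CompletionException e) {
      // join() wraps the original exception, so the message is on the cause.
      System.out.println(e.getCause().getMessage());
    }
  }
}
```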

    - DeployBroker deployBroker = getDeployBrokerPhase(kubernetesNamespace, brokerEnvironment);
    + DeployBroker deployBroker =
    +     getDeployBrokerPhase(
    +         runtimeID.getWorkspaceId(), kubernetesNamespace, brokerEnvironment, startFuture);


I would rather set WS_ID in the BrokerEnvironmentFactory, WDYT?


It was done in the same way for ListenBrokerEvents and PrepareStorage.

Sorry, I'm not sure that I fully understand your idea. Do you propose storing WS_ID in the KubernetesEnvironment that is created by brokerEnvironmentFactory and then fetching this value from there in the phases?


Oh, I see that it is not in the K8sEnv. Sorry for the confusion.

@garagatyi I've added a few more test classes; please let me know if you think that any other components need to be covered with tests.

ci-test

Results of automated E2E tests of Eclipse Che Multiuser on OCP:

ibuziuk

left a comment


+1, but I have only reviewed the bits related to Unrecoverable events.

ci-test

Results of automated E2E tests of Eclipse Che Multiuser on OCP:

343c99a to f829da2

ci-test

Results of automated E2E tests of Eclipse Che Multiuser on OCP:

The Selenium tests did not reveal any regression in the following report: #11344 (comment)

f829da2 to adcbe9e

What does this PR do?

It adds propagation of plugin broker failing error and instead of

Plugins installation process timed out.Changes are separate into different commits:

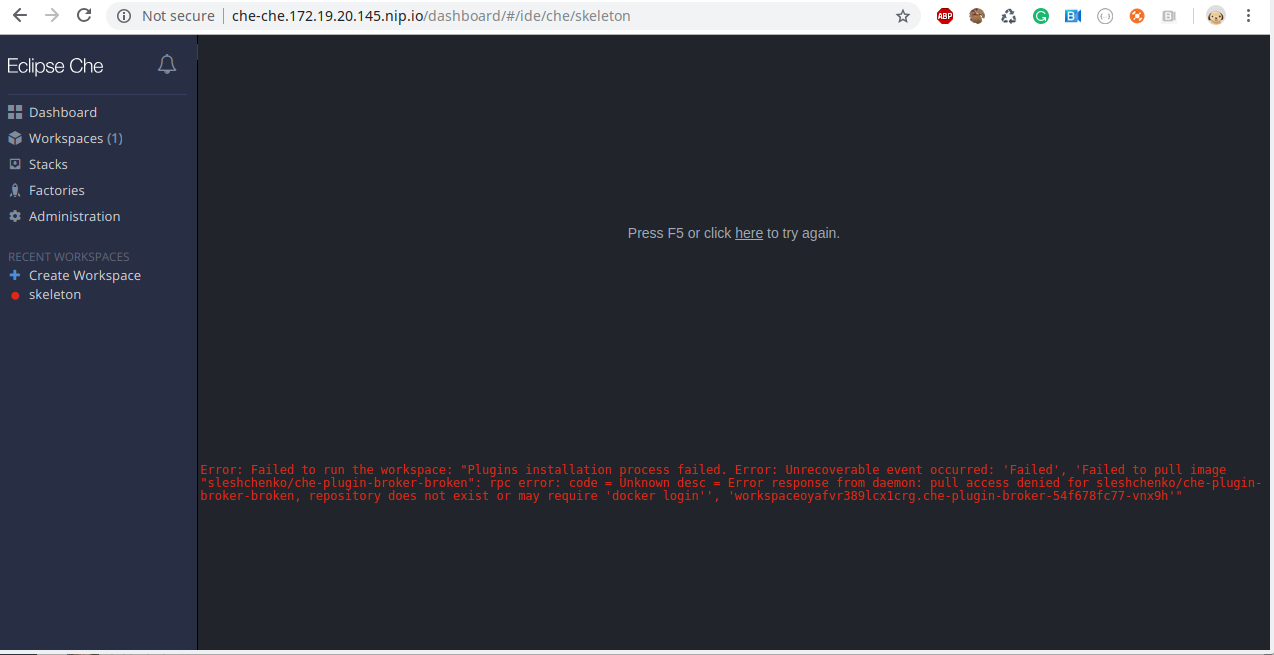

Restart policyisAlwaysbecause of creating pods viaDeploymentsand Pod status is alwaysRunningwhile a particular container may be failed and restarted with some period.BrokerResultEventtoBrokerStatusChangedEventand removes check that error or plugin list is required.Example what a user sees when plugin broker fails to start because of non-existing image

What issues does this PR fix or reference?

#11070

#11068

Release Notes

N/A

Docs PR

N/A