agent: floor context-size estimate with server-reported prompt_tokens #315444

Merged


The local tokenizer undercounts certain content kinds (notably Anthropic
thinking blocks: the visible "text" is a small summary of much larger
reasoning, signature bytes, etc.) and ignores per-block JSON envelope
tokens. As a result, the agent's compaction triggers can sit at ratio
~0.7 while the server's actual prompt_tokens is over the budget, so
neither the pre-render nor the post-render kick-off fires.

Use the server's reported usage as a floor:

- Rename AnthropicTokenUsageMetadata to TurnTokenUsageMetadata; record
  it for every provider on every successful fetch (drop the
  isAnthropicFamily guard). All providers populate usage.prompt_tokens.
- In AgentIntentInvocation.buildPrompt, walk the conversation turns
  with findLast to pick up the most recent turn that actually fetched
  (covers both within-turn iterations and the
  iteration-1-of-a-fresh-turn case where the current turn has no
  metadata yet).
- Math.max the local estimate with that server-reported promptTokens
  for contextRatio (telemetry only) and postRenderRatio (gates
  background compaction kick-off).
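The flooring step described in the list above can be sketched roughly as follows. This is a minimal illustration, not the code from the PR: `TurnLike`, `TurnTokenUsage`, `flooredRatio`, and the `budget` parameter are hypothetical names, and the local `findLast` helper stands in for whichever `findLast` the real code uses.

```typescript
// Hypothetical shapes for illustration only; the real classes live in the extension source.
interface TurnTokenUsage {
	promptTokens: number;
	outputTokens: number;
}

interface TurnLike {
	// Undefined until the turn has completed at least one successful fetch.
	tokenUsage?: TurnTokenUsage;
}

// Local backward search so the sketch does not depend on ES2023 Array.prototype.findLast.
function findLast<T>(items: readonly T[], predicate: (item: T) => boolean): T | undefined {
	for (let i = items.length - 1; i >= 0; i--) {
		const item = items[i] as T;
		if (predicate(item)) {
			return item;
		}
	}
	return undefined;
}

// Ratio of context use to budget, where the local estimate is never allowed
// to drop below the prompt token count the server last reported.
function flooredRatio(localEstimate: number, turns: readonly TurnLike[], budget: number): number {
	const lastFetched = findLast(turns, turn => turn.tokenUsage !== undefined);
	const serverFloor = lastFetched?.tokenUsage?.promptTokens ?? 0;
	return Math.max(localEstimate, serverFloor) / budget;
}
```

With a local estimate of 70,000 tokens against an assumed budget of 100,000, the unfloored ratio is 0.7; if the last fetched turn reported 105,000 prompt tokens, the floored ratio becomes 1.05 even when the current turn has no metadata yet.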

Post-compaction stays safe: the only behavioral consumer is
postRenderRatio, which is already guarded by the
"didSummarizeThisIteration" check, so a stale pre-compaction floor
cannot trigger a redundant kick-off in the same iteration.
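A minimal sketch of that guard, under stated assumptions: `KickOffInputs`, `shouldKickOffBackgroundCompaction`, the `kickOffThreshold` field, and the `>=` comparison are all hypothetical; only `postRenderRatio` and `didSummarizeThisIteration` are named in the description above.

```typescript
// Hypothetical input shape; only postRenderRatio and didSummarizeThisIteration
// are names taken from the PR description.
interface KickOffInputs {
	postRenderRatio: number;            // already floored with the server-reported prompt tokens
	kickOffThreshold: number;           // assumed threshold, not a value from the PR
	didSummarizeThisIteration: boolean; // true when compaction already ran in this iteration
}

function shouldKickOffBackgroundCompaction(inputs: KickOffInputs): boolean {
	// A floor taken before compaction is stale once summarization has run,
	// so it must not trigger a second kick-off in the same iteration.
	if (inputs.didSummarizeThisIteration) {
		return false;
	}
	return inputs.postRenderRatio >= inputs.kickOffThreshold;
}
```

The guard is checked before the ratio, so a stale floor above the threshold is ignored whenever summarization has already happened in the iteration.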

Snapshot drift in defaultIntentRequestHandler.spec.ts.snap is benign:
mocks return usage:{0,0}, so the now-unconditional metadata ends up as
{promptTokens:0, outputTokens:0} on six previously-non-Anthropic
fixtures.
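To see why the snapshots change, here is a rough sketch of the unconditional recording step. `FetchUsage`, `TurnTokenUsage`, and `toTurnTokenUsage` are hypothetical names, and the `completion_tokens` field is an assumption; the description only names `usage.prompt_tokens` on the input side.

```typescript
// Assumed wire shape of the usage object; completion_tokens is an assumption.
interface FetchUsage {
	prompt_tokens: number;
	completion_tokens: number;
}

// Mirrors the {promptTokens, outputTokens} shape seen in the snapshots.
interface TurnTokenUsage {
	promptTokens: number;
	outputTokens: number;
}

// No provider check here: every successful fetch yields metadata,
// including a mock that reports zero usage.
function toTurnTokenUsage(usage: FetchUsage): TurnTokenUsage {
	return {
		promptTokens: usage.prompt_tokens,
		outputTokens: usage.completion_tokens,
	};
}
```

A mock reporting zero for both counts therefore produces `{ promptTokens: 0, outputTokens: 0 }`, which is the new entry that shows up in the affected fixtures.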

b3238d7 to cb7fbe2


Pull request overview

This PR improves agent-mode summarization triggering by flooring the local context-size estimate with server-reported usage.prompt_tokens from the most recent successful fetch, addressing cases where the local tokenizer undercounts certain content kinds and compaction never kicks in despite being over budget.

Changes:

- Introduces `TurnTokenUsageMetadata` (renamed/generalized from `AnthropicTokenUsageMetadata`) and records it for successful fetches across providers.
- Updates agent intent logic to `findLast` turn token usage and use it as a floor when computing context ratios for compaction/summarization decisions.
- Wires token-usage metadata into the returned `ChatResult.metadata` and updates snapshots accordingly.

Show a summary per file

| File | Description |
|---|---|
| extensions/copilot/src/extension/prompt/node/test/snapshots/defaultIntentRequestHandler.spec.ts.snap | Updates expected result metadata to include promptTokens/outputTokens. |
| extensions/copilot/src/extension/prompt/node/defaultIntentRequestHandler.ts | Switches result-metadata aggregation to include TurnTokenUsageMetadata. |
| extensions/copilot/src/extension/prompt/node/chatParticipantRequestHandler.ts | Rehydrates token usage metadata from persisted chat history using TurnTokenUsageMetadata. |
| extensions/copilot/src/extension/prompt/common/conversation.ts | Renames/generalizes token-usage metadata class and updates documentation. |
| extensions/copilot/src/extension/intents/node/toolCallingLoop.ts | Records per-turn token usage metadata from fetchResult.usage on success (all providers). |
| extensions/copilot/src/extension/intents/node/agentIntent.ts | Floors local token estimates with latest server prompt-token usage when computing summarization ratios. |

Copilot's findings

- Files reviewed: 6/6 changed files

- Comments generated: 1

dmitrivMS approved these changes on May 9, 2026

Contributor

blocks-ci screenshots changedReplace the contents of Updated blocks-ci-screenshots.md<!-- auto-generated by CI — do not edit manually -->

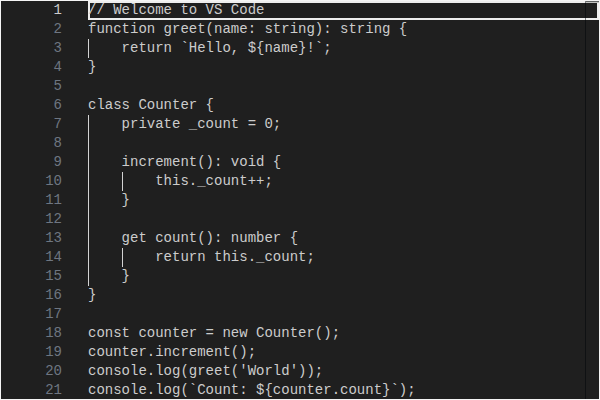

#### editor/codeEditor/CodeEditor/Dark

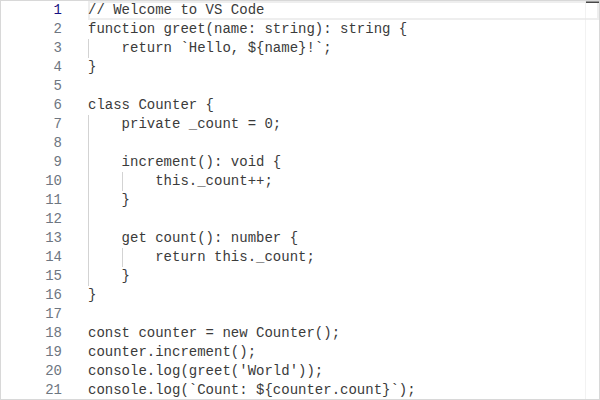

#### editor/codeEditor/CodeEditor/Light

#### editor/inlineChatZoneWidget/InlineChatZoneWidget/Dark

#### editor/inlineChatZoneWidget/InlineChatZoneWidget/Light

#### editor/inlineChatZoneWidget/InlineChatZoneWidgetTerminated/Dark

#### editor/inlineChatZoneWidget/InlineChatZoneWidgetTerminated/Light

|


The local tokenizer undercounts a few content kinds (Anthropic thinking
blocks, per-block JSON envelopes), so compaction can sit at ratio ~0.7
while server `prompt_tokens` is already over budget and kick-off never
fires.

Floor the local estimate with the previous fetch's
`usage.prompt_tokens` via a new `TurnTokenUsageMetadata` (renamed from
`AnthropicTokenUsageMetadata`, now recorded for all providers).
`findLast` walks turns to cover both within-turn iterations and
iteration 1 of a fresh user turn.