
Update sdp guards for performance #87241



🔗 Helpful Links: 🧪 See artifacts and rendered test results at hud.pytorch.org/pr/87241

Note: Links to docs will display an error until the docs builds have been completed.

✅ No Failures, 5 Pending as of commit 013511a. This comment was automatically generated by Dr. CI and updates every 15 minutes.

    int64_t offset_constant = (tensor_offsets[1] - tensor_offsets[0]) /
        tensor_size_ptr[0] * tensor_stride_ptr[0];

    for (int64_t i = 2; i < n_tensors; i++) {


Could probably roll this into the previous loop, but this uses the stride matrix and size matrix to compute numel, so it relies on the first check for descending ordering having already passed.
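For readers following the thread, here is a minimal sketch of the two-step idea described in the comment above. It is not the PR's code: it uses plain `std::vector` instead of ATen tensors, and the names `Matrix`, `strides_descending`, and `offsets_constant_multiple` are hypothetical. It assumes each constituent tensor is dense, so that once the strides are known to be in descending order, `size[0] * stride[0]` equals the element count. The exact-divisibility check is an extra assumption added for the sketch.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// One row per constituent tensor, one column per dimension (hypothetical layout).
using Matrix = std::vector<std::vector<int64_t>>;

// Step 1: every row of strides must be non-increasing from the first
// dimension to the last.
bool strides_descending(const Matrix& strides) {
  for (const auto& row : strides) {
    for (std::size_t d = 1; d < row.size(); ++d) {
      if (row[d - 1] < row[d]) {
        return false;
      }
    }
  }
  return true;
}

// Step 2: the gap between neighbouring offsets, divided by the previous
// tensor's numel, must be the same constant for every pair.
bool offsets_constant_multiple(const Matrix& sizes,
                               const Matrix& strides,
                               const std::vector<int64_t>& offsets) {
  const std::size_t n = offsets.size();
  if (n < 2) {
    return true;  // nothing to compare
  }
  if (sizes.size() < n - 1 || strides.size() < n - 1) {
    return false;  // malformed input: missing metadata rows
  }
  if (!strides_descending(strides)) {
    return false;  // the numel shortcut below is only valid after this check
  }
  int64_t expected = 0;
  for (std::size_t i = 1; i < n; ++i) {
    if (sizes[i - 1].empty() || strides[i - 1].empty()) {
      return false;
    }
    // Dense tensor with descending strides: numel == size[0] * stride[0].
    const int64_t numel = sizes[i - 1][0] * strides[i - 1][0];
    if (numel <= 0) {
      return false;  // empty constituents are not handled in this sketch
    }
    const int64_t gap = offsets[i] - offsets[i - 1];
    if (gap % numel != 0) {
      return false;  // assumption of this sketch: require an exact multiple
    }
    const int64_t ratio = gap / numel;
    if (i == 1) {
      expected = ratio;
    } else if (ratio != expected) {
      return false;
    }
  }
  return true;
}
```

Because step 2 derives numel from the first size and stride only, it cannot be trusted unless step 1 has passed, which is why the sketch calls `strides_descending` before entering the loop.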

@pytorchbot merge -l

Merge started. Your change will be merged once all checks on your PR pass (ETA 0-4 Hours). Learn more about merging in the wiki. Questions? Feedback? Please reach out to the PyTorch DevX Team.


Summary

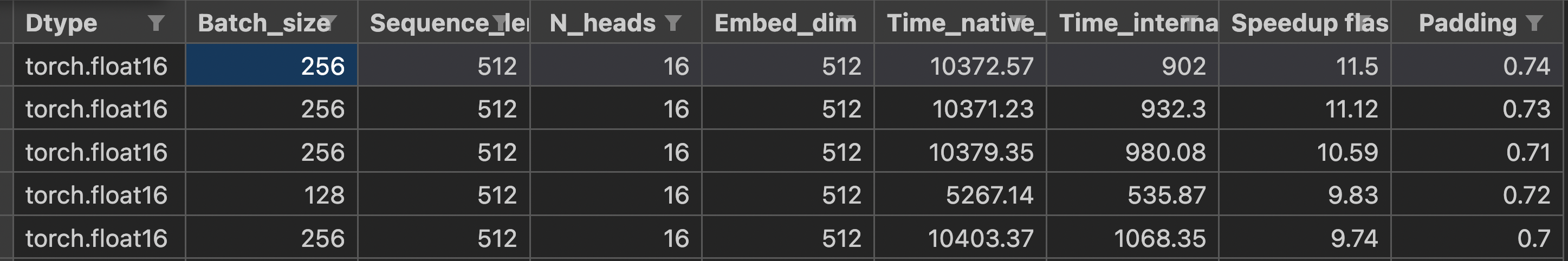

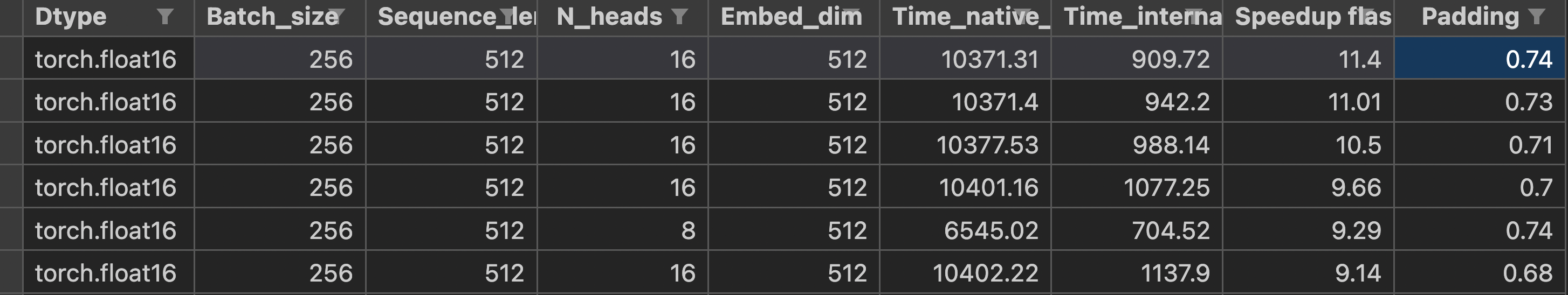

Makes the contiguity check for the nested tensor (nt) input stricter and more correct, and makes some performance improvements to the checks.