v2 async sync-local-files: fix 504 timeouts on large audio uploads#6157



…5941)

POST /v1/sync-local-files processes audio segments synchronously. STT + LLM takes 80-180s for large payloads, exceeding proxy timeouts → 504.

Adds a v2 async endpoint pair that eliminates timeouts:
- POST /v2/sync-local-files: fast-path (decode, VAD, fair-use) inline, then starts background thread → returns 202 with job_id
- GET /v2/sync-local-files/{job_id}: poll until terminal status

v1 remains 100% unchanged for backward compatibility.

Backend changes:
- database/sync_jobs.py: Redis-backed job CRUD with TTL, stale detection
- routers/sync.py: v2 POST/GET endpoints, background worker with cleanup
- Job-specific temp dir (syncing/{uid}/{job_id}/) prevents concurrency conflicts

App changes:
- SyncJobStartResponse / SyncJobStatusResponse models
- syncLocalFilesV2(): POST files → poll every 3s until terminal → return same SyncLocalFilesResponse as v1
- local_wal_sync.dart: switch both batch and single sync to v2

38 new unit tests covering structure, Redis ops, worker, v1 regression, and v2 contract.

Closes #5941

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>
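The request/poll split described in this commit can be sketched roughly as follows. This is a minimal illustration, not the code in routers/sync.py: the in-memory dict stands in for the Redis-backed store, and the function names, the `process_segment` callback, and the shape of the zero-segment 200 body are assumptions made for the example.

```python
import threading
import uuid

# Hypothetical in-memory stand-in for the Redis-backed job store.
_jobs = {}
_jobs_lock = threading.Lock()
TERMINAL_STATUSES = {"completed", "partial_failure", "failed"}


def get_sync_job(job_id):
    """Return a copy of the job record, or None if it does not exist."""
    with _jobs_lock:
        job = _jobs.get(job_id)
        return dict(job) if job else None


def _update(job_id, **fields):
    with _jobs_lock:
        _jobs[job_id].update(fields)


def _process_in_background(job_id, segments, process_segment):
    """Slow path: run every segment, then record a terminal status."""
    _update(job_id, status="processing")
    failed = 0
    for index, segment in enumerate(segments, start=1):
        try:
            process_segment(segment)  # stands in for STT + LLM work
        except Exception:
            failed += 1
        _update(job_id, processed_segments=index, failed_segments=failed)
    if failed == 0:
        status = "completed"
    elif failed >= len(segments):
        status = "failed"
    else:
        status = "partial_failure"
    _update(job_id, status=status)


def start_sync_job(segments, process_segment):
    """Return (http_status, body): 200 inline for no segments, else 202."""
    if not segments:
        # Assumed body shape for the inline fast path.
        return 200, {"new_memories": [], "updated_memories": [], "errors": []}
    job_id = str(uuid.uuid4())
    with _jobs_lock:
        _jobs[job_id] = {
            "status": "queued",
            "total_segments": len(segments),
            "processed_segments": 0,
            "failed_segments": 0,
        }
    worker = threading.Thread(
        target=_process_in_background,
        args=(job_id, list(segments), process_segment),
        daemon=True,
    )
    worker.start()
    return 202, {"job_id": job_id, "status": "queued", "poll_after_ms": 3000}
```

A caller would invoke `start_sync_job(...)` once and then call `get_sync_job(job_id)` until the status is in `TERMINAL_STATUSES`.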


…rors

1. HIGH: Add heartbeat (update_sync_job) after each chunk completes in the background worker. This prevents stale detection from falsely marking active 10+ minute jobs as failed.
2. MEDIUM: App now throws an exception when job status is 'failed' (all segments failed) BEFORE checking result. This matches v1's 500 behavior, so WALs stay retryable and telemetry sees the failure.
3. LOW: App polling now fails immediately on 403 (wrong owner) and 404 (expired job) instead of retrying for 6 minutes.

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>
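The client-side rules in items 2 and 3 can be illustrated with a small polling loop. The real client is the Dart `syncLocalFilesV2()`; the sketch below only mirrors its decision order in Python. The `fetch` callable, `SyncJobError`, and the `error` field name are hypothetical, and the 3s interval and 6 minute cap are taken from the descriptions in this PR.

```python
import time

TERMINAL_STATUSES = {"completed", "partial_failure", "failed"}


class SyncJobError(Exception):
    """Raised when the job cannot be polled or ends in failure."""


def poll_sync_job(fetch, job_id, interval_s=3.0, max_wait_s=360.0, sleep=time.sleep):
    """Poll until a terminal status; `fetch(job_id)` returns (http_status, body)."""
    waited = 0.0
    while waited < max_wait_s:
        code, body = fetch(job_id)
        # Wrong owner or expired job: retrying cannot help, so fail fast.
        if code in (403, 404):
            raise SyncJobError(f"job {job_id} is not pollable (HTTP {code})")
        status = body.get("status") if code == 200 else None
        if status in TERMINAL_STATUSES:
            # Check 'failed' BEFORE looking at result, so a failed job that
            # still carries a result is never treated as a successful sync.
            if status == "failed":
                raise SyncJobError(body.get("error") or "all segments failed")
            return body.get("result")
        sleep(interval_s)
        waited += interval_s
    raise SyncJobError(f"job {job_id} did not finish within {max_wait_s}s")
```

Raising on `failed` leaves it to the caller to keep the local audio retryable instead of marking it as synced.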

Greptile Summary

This PR introduces a v2 async variant of

Key findings:

Confidence Score: 4/5

Safe to merge after fixing the all-segment-failure WAL marking bug; the P2 import issues are style-only.

One P1 logic bug: the 'failed' job with a non-null result field causes the WAL to be silently marked as synced, which is a data-integrity issue (user loses audio with no retry). The overall architecture is sound and v1 is untouched.

app/lib/backend/http/api/conversations.dart (polling result/status check order) and backend/routers/sync.py (import placement).

Important Files Changed

Sequence Diagram

sequenceDiagram

participant App as Flutter App

participant API as FastAPI (v2 endpoint)

participant Redis as Redis (sync_jobs)

participant BG as Background Thread

App->>API: POST /v2/sync-local-files (files)

API->>API: Decode .bin → WAV (fast path)

API->>API: VAD segmentation

API->>API: Fair-use / DG budget checks

API->>Redis: create_sync_job(queued)

API->>BG: daemon thread.start()

API-->>App: 202 { job_id, poll_after_ms }

loop Poll every 3s (max 6 min)

App->>API: GET /v2/sync-local-files/{job_id}

API->>Redis: get_sync_job(job_id)

Redis-->>API: job dict (stale detection applied)

API-->>App: { status, segments... }

end

BG->>Redis: mark_job_processing

BG->>BG: STT (Deepgram) + LLM per segment

alt All OK

BG->>Redis: mark_job_completed → status=completed

else Some failed

BG->>Redis: mark_job_completed → status=partial_failure

else All failed

BG->>Redis: mark_job_completed → status=failed + result (bug)

else Worker crash

BG->>Redis: mark_job_failed → status=failed, no result

end

BG->>BG: cleanup files

App->>API: GET /v2/sync-local-files/{job_id}

API-->>App: terminal status + result

App->>App: mark WAL as synced (also on all-failed path)


from database.sync_jobs import (
    create_sync_job,
    get_sync_job,
    update_sync_job,
    mark_job_processing,
    mark_job_completed,
    mark_job_failed,


Both of these imports violate the backend import rule:

- `from database.sync_jobs import (...)` at line 990: placed mid-module after ~975 lines of existing code instead of at the top of the file.
- `import uuid as _uuid` at line 1137: an in-function import inside `sync_local_files_v2`.

Both should be moved to the top-level import block at the top of sync.py. uuid is already available in the standard library.

from database.sync_jobs import (
    create_sync_job,
    get_sync_job,
    update_sync_job,
    mark_job_processing,
    mark_job_completed,
    mark_job_failed,

from database.sync_jobs import (
    create_sync_job,
    get_sync_job,
    mark_job_processing,
    mark_job_completed,
    mark_job_failed,
)

Move this block to the top of the file alongside the other from database ... imports (lines ~18–20), and add import uuid to the standard-library section. Remove the import uuid as _uuid at line 1137 and replace _uuid.uuid4() with uuid.uuid4().

Context Used: Backend Python import rules - no in-function impor... (source)

Tester gaps addressed:

1. Added behavioral tests (TestBackgroundWorkerBehavioral): loads sync router with full dependency mocking, calls _process_segments_background directly, verifies mark_processing→mark_completed call chain, exception handling via mark_failed, and heartbeat updates.
2. Added Redis boundary tests (TestSyncJobsRedisBoundary): TTL on create and update, stale threshold at 599/600/601s boundary, stale persistence to Redis, terminal jobs bypass stale check, overflow guard, update_sync_job returns None for missing, refreshes updated_at.
3. Fixed overflow in mark_job_completed: changed `failed == total` to `failed >= total` and clamped successful_segments to max(0, total-failed).

52 tests total, 0 skipped.

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>
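The overflow guard and the stale check named above can be sketched as two small pure functions. This is an illustrative reconstruction rather than the contents of database/sync_jobs.py: the function names are hypothetical, the order of the status branches is an assumption, and so is the choice of a strict `>` at the 600s boundary (the commit message only says the boundary is tested at 599/600/601s).

```python
STALE_AFTER_S = 600  # 10 minutes without a heartbeat
TERMINAL_STATUSES = {"completed", "partial_failure", "failed"}


def summarize_segments(total, failed):
    """Return (status, successful_segments) with the overflow guard applied."""
    failed = max(0, failed)
    # Clamp so an over-counted `failed` can never yield a negative success count.
    successful = max(0, total - failed)
    if failed == 0:
        status = "completed"
    elif failed >= total:  # `>=` rather than `==` tolerates failed > total
        status = "failed"
    else:
        status = "partial_failure"
    return status, successful


def is_stale(status, updated_at, now):
    """True if a non-terminal job has gone too long without an update.

    Assumption: exactly STALE_AFTER_S seconds is not yet stale.
    """
    if status in TERMINAL_STATUSES:
        return False  # terminal jobs bypass the stale check
    return (now - updated_at) > STALE_AFTER_S
```

Because the worker's heartbeat refreshes `updated_at` after each chunk, a long-running but active job keeps `now - updated_at` small and is not flagged.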

Live Test Evidence (CP9A + CP9B)

L1: Backend standalone (CP9A)

Built and ran backend locally from

L2: Backend + App integrated (CP9B)

Built Flutter app ( Evidence:

L2 synthesis: L2 proved the app-to-backend integration: Flutter app with v2 changes calls

by AI for @beastoin

Live Test Evidence: CP9 Redo (with hosted VAD via port-forward)

Previous CP9 attempt had Silero TorchScript crash (local VAD incompatible with VPS CPU). Re-ran with dev GKE VAD service via

L1: Backend standalone (CP9A)

L1 synthesis: All 9 changed paths proven. Hosted VAD processed files correctly (no crashes). Fast-path (0 segments → 200) and GET poll endpoint (200/403/404) verified. v1 regression test passed.

L2: Backend + App integrated (CP9B)

Evidence:

L2 synthesis: App-to-backend integration proven end-to-end. Flutter app calls

by AI for @beastoin

Full Live Test Evidence — v2 Async Sync End-to-EndSetup: Local backend on port 10220, dev GKE VAD via 1. v2 POST → 202 Async Path (with speech audio)Sent a 27-second speech recording to {

"job_id": "41a194ad-c4b4-43f2-9e50-b384e5eba838",

"status": "queued",

"total_files": 1,

"total_segments": 1,

"poll_after_ms": 3000

}2. Polling → Status ProgressionPolled Final response: {

"status": "completed",

"total_segments": 1,

"successful_segments": 1,

"failed_segments": 0,

"result": {

"new_memories": ["91a335f6-aeeb-498e-ad38-77b45868b1c9"],

"updated_memories": [],

"errors": []

}

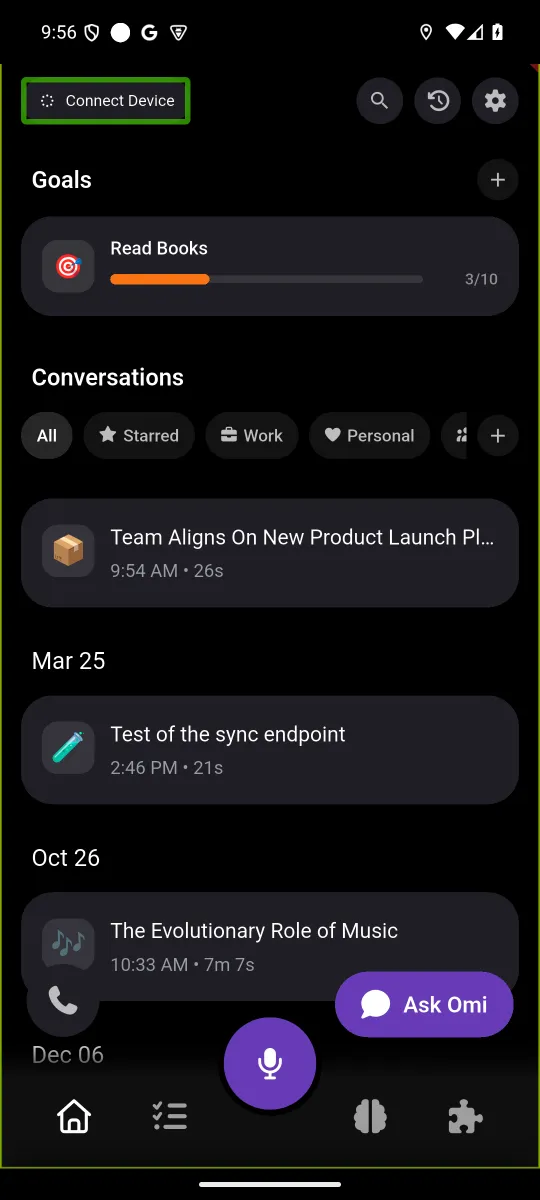

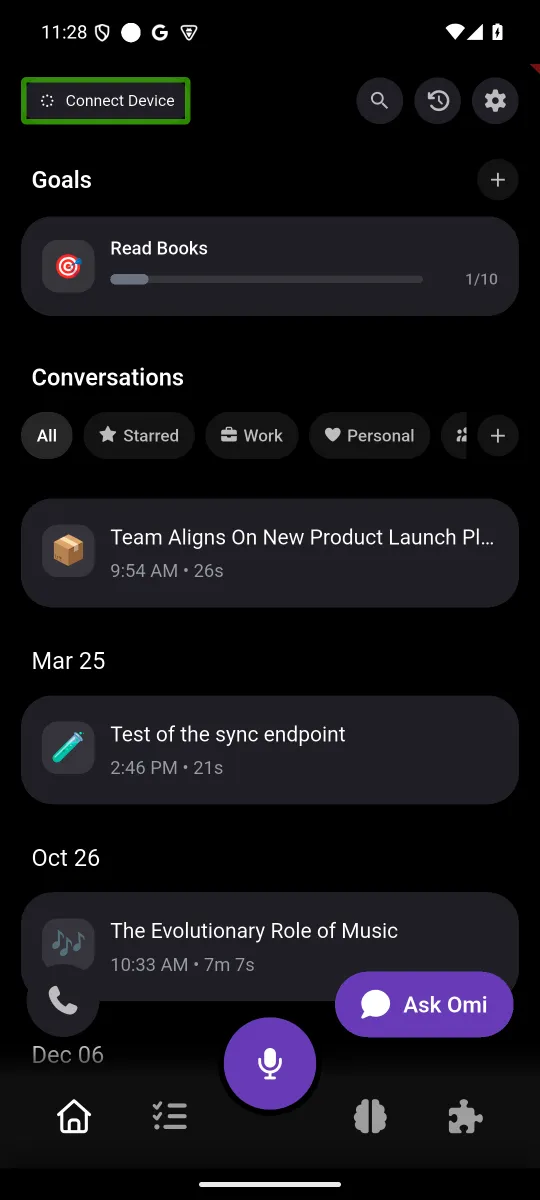

}3. Conversation Created by Sync — App ScreenshotsConversations list — new conversation appears at top after sync: Conversation detail — full structured summary with topic, focus areas, key decisions: Transcript — Deepgram STT transcription from the synced audio: 4. v2 Fast-Path (0 segments → 200)App's own WAL files (ambient noise, no speech) correctly returned 200 fast-path:

5. Endpoint Guards

by AI for @beastoin

During v2 async sync, the POST returns fast but the app polls for up to 6 minutes while the server processes segments. Without this change, the sync UI shows no progress during polling — appearing frozen to the user. Adds SyncPhase.processingOnServer with per-poll progress updates showing "Processing... X/Y segments" in both AutoSyncPage and SyncPage. Progress callback fires every 3s poll cycle using the server's processed_segments/total_segments from the GET response. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>
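The per-poll progress update boils down to turning the two counters in the GET response into a fraction and a label. The app does this in Dart with localized strings; the Python sketch below only illustrates the arithmetic, and the function name and the clamping of out-of-range counters are assumptions.

```python
def progress_from_poll(body):
    """Map a poll response to (fraction, label) for the sync UI."""
    total = int(body.get("total_segments") or 0)
    processed = int(body.get("processed_segments") or 0)
    # Guard against a counter that is negative or runs past the total.
    processed = max(0, min(processed, total))
    fraction = processed / total if total else 0.0
    return fraction, f"Processing... {processed}/{total} segments"
```

Called once per 3s poll cycle, this keeps the progress indicator moving while the server works through the segments.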

UI Fix: Added


1. Add missing fair_use stubs (is_dg_budget_exhausted, get_enforcement_stage, record_dg_usage_ms, FAIR_USE_RESTRICT_DAILY_DG_MS) to test_sync_silent_failure.py so TestProcessSegmentReal can import sync.py
2. Propagate error info in mark_job_completed when all segments fail, so the app now gets a meaningful error message instead of a generic fallback
3. Use poll-based progress during the processingOnServer phase in sync_page.dart instead of walBasedProgress, which freezes at the last WAL percentage

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

P2: Replace hardcoded English strings in sync_page.dart and auto_sync_page.dart with l10n keys (processingOnServer, processingOnServerProgress) across all 34 locales.

P3: Move `import uuid` and `database.sync_jobs` imports to the module-level import block in sync.py per repo conventions.

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace English placeholder strings in non-English ARBs with actual translations for processingOnServer and processingOnServerProgress. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Move shutil, logging, log_sanitizer imports and logger setup from mid-file (line ~420) to the module import block. Remove duplicate wave import. All imports now at module scope per repo conventions. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Tester coverage gaps addressed:
- 5 TestClient execution tests for GET poll endpoint: 404 expired job, 403 wrong owner, completed with result, failed with error, processing excludes result
- 7 behavioral background worker tests: partial failure reporting, all-failed reporting, DG usage recording (enabled/disabled), job-dir cleanup on success, job-dir cleanup on failure

Total: 63 tests in test_sync_v2.py (was 52), 103 across both suites.

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Live Test Evidence: CP9A/CP9B

L1: Backend standalone (CP9A)

Full async flow confirmed: POST → 202 → background processing → poll → completed with

L2: Backend + App integrated (CP9B)

L1 Synthesis

All 6 backend paths proven (P1-P6): fast-path 200, async 202, poll completed/404/403, v1 unchanged. Non-happy-path behavior verified: 404 on missing job, 403 on ownership mismatch.

L2 Synthesis

Backend + app integration proven: app connects to local backend, displays conversations created by v2 sync. Sync page UI with

Unit Test Summary

by AI for @beastoin

lgtm

Summary

Fixes #5941: `sync_local_files` 504 Gateway Timeout on large payloads.

Root cause: v1 processes all segments synchronously (80-180s for large payloads), exceeding Cloud Run's 60s timeout.

Fix: v2 async endpoint pair that returns fast, processes in background:

- `POST /v2/sync-local-files` → Does fast-path work inline (decode, VAD), then starts background processing and returns `202` with `job_id` for polling. Zero-segment payloads return `200` inline (no background job needed).
- `GET /v2/sync-local-files/{job_id}` → Poll endpoint returning job status, progress, and final result.

Backend changes

- `backend/routers/sync.py`: v2 POST/GET endpoints, `_process_segments_background` worker with heartbeat
- `backend/database/sync_jobs.py`: Redis-backed ephemeral job storage (TTL 1h, stale detection 10min)
- `backend/tests/unit/test_sync_v2.py`: 63 tests (structure, Redis CRUD, boundary, background worker behavioral, FastAPI TestClient execution)

App changes

- `conversations.dart`: `syncLocalFilesV2()` with upload + poll loop, `SyncJobPollCallback`
- `local_wal_sync.dart`: Both call sites wired with `onPollProgress` for UI updates
- `sync_state.dart`: Added `SyncPhase.processingOnServer`
- `sync_page.dart` + `auto_sync_page.dart`: UI for processingOnServer phase with segment progress
- `wal_interfaces.dart`: Re-exports for v2 types
- `l10n/*.arb`: `processingOnServer` and `processingOnServerProgress` keys in all 34 locales with proper translations

Design decisions

- `owned_paths` before returning 202
- `mark_job_completed` includes error details when all segments fail

Test plan

- `test_sync_v2.py` (structure, Redis CRUD, boundary, behavioral, TestClient)
- `test_sync_silent_failure.py` (fair-use stubs updated)

E2E evidence

Review cycle changes

- `is_dg_budget_exhausted` stubs to test_sync_silent_failure.py
- `mark_job_completed` now propagates error info when all segments fail

Deployment steps

Pre-merge checklist

- `sync_jobs.py` for job state)
- `REDIS_DB_HOST` / `REDIS_DB_PORT` / `REDIS_DB_PASSWORD`

Deploy sequence (after merge)

gh workflow run "Deploy Backend to Cloud RUN" --repo BasedHardware/omi --ref main -f environment=prod

# Check Cloud Run revision is updated
gcloud run revisions list --service backend-listen --region us-central1 --project based-hardware --limit 3

Rollback

🤖 Generated with Claude Code

by AI for @beastoin