pip install torch

pip install numpy

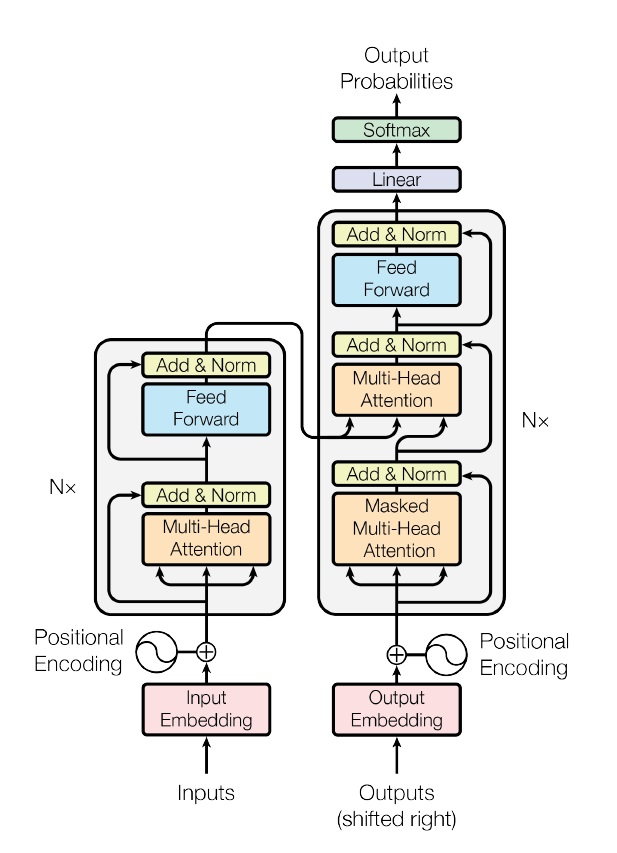

Implemented with NumPy and PyTorch, with:

- Multi-head self-attention

- Basic positional embedding (linearly increasing position indices)

- Feed-forward layer

- Add and normalize (residual connections)
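The components listed above could fit together roughly as in the following sketch. It is not the repository's actual code: the class names `TransformerBlock` and `TinyLM`, all layer sizes, the learned position table, the use of layer normalization, and the causal mask are assumptions chosen for illustration.

```python
import torch
import torch.nn as nn


class TransformerBlock(nn.Module):
    """Hypothetical block: self-attention and feed-forward, each followed by add & norm."""

    def __init__(self, d_model=64, n_heads=4, d_ff=256):
        super().__init__()
        self.attn = nn.MultiheadAttention(d_model, n_heads, batch_first=True)
        self.ff = nn.Sequential(
            nn.Linear(d_model, d_ff), nn.ReLU(), nn.Linear(d_ff, d_model)
        )
        # Assumption: "normalization" means layer normalization.
        self.norm1 = nn.LayerNorm(d_model)
        self.norm2 = nn.LayerNorm(d_model)

    def forward(self, x):
        seq_len = x.size(1)
        # Assumed causal mask for generation: True marks positions that may NOT be attended to.
        mask = torch.triu(
            torch.ones(seq_len, seq_len, dtype=torch.bool, device=x.device), diagonal=1
        )
        attn_out, _ = self.attn(x, x, x, attn_mask=mask, need_weights=False)
        x = self.norm1(x + attn_out)    # residual connection, then normalization
        x = self.norm2(x + self.ff(x))  # same pattern around the feed-forward layer
        return x


class TinyLM(nn.Module):
    """Hypothetical language model wrapping a single block."""

    def __init__(self, vocab_size=100, d_model=64, max_len=128):
        super().__init__()
        self.tok = nn.Embedding(vocab_size, d_model)
        # Assumption: positions 0, 1, 2, ... are looked up in a learned table.
        self.pos = nn.Embedding(max_len, d_model)
        self.block = TransformerBlock(d_model)
        self.head = nn.Linear(d_model, vocab_size)

    def forward(self, ids):
        positions = torch.arange(ids.size(1), device=ids.device)
        x = self.tok(ids) + self.pos(positions)  # position vectors broadcast over the batch
        return self.head(self.block(x))          # (batch, seq_len, vocab_size) logits
```

Calling `TinyLM()(torch.randint(0, 100, (2, 10)))` returns logits of shape `(2, 10, 100)`, one score per vocabulary entry at each position.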

The model is used for text generation. Because it was trained on financial discourse, the generated text stays within that domain.

We evaluate model performance with an intrinsic metric: perplexity.
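Perplexity is the exponential of the average negative log-likelihood per token, so lower values mean the model is less "surprised" by the text. A minimal sketch of the computation is shown below; the function name `perplexity` and the tensor shapes are assumptions, not the repository's own evaluation code.

```python
import math

import torch
import torch.nn.functional as F


def perplexity(logits, targets):
    """Perplexity from next-token logits.

    logits:  (batch, seq_len, vocab_size) unnormalized scores
    targets: (batch, seq_len) integer ids of the true next tokens
    """
    # cross_entropy averages the negative log-likelihood over all tokens.
    nll = F.cross_entropy(logits.reshape(-1, logits.size(-1)), targets.reshape(-1))
    return math.exp(nll.item())
```

As a sanity check, a model that assigns equal probability to every token in a vocabulary of size V has perplexity V.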