This is the GitHub repository for the Applied Machine Learning Project: Feather in Focus! This project is associated with a private Kaggle competition, and you can find more details about the competition here.

The goal of the challenge is to classify each bird image by species! The dataset contains 200 bird species, and the aim is to predict them as accurately as possible!

BIRDeep's model aims for greater robustness by decoupling each image's foreground from its background. This is achieved through background removal, complemented by other accuracy-enhancing techniques:

- Data balancing
- Data augmentation
- Hyperparameter tuning
- Pre-trained models

*Noise or Signal: The Role of Image Backgrounds in Object Recognition* (Xiao et al., 2020) presents several ways of removing or replacing image backgrounds. BIRDeep uses the Only-FG variant shown in Figure 1, in which the background is removed (set to black). This eliminates background interactions, so the model can concentrate solely on bird characteristics.

Figure 1: Obtained from Xiao, K., Engstrom, L., Ilyas, A., & Madry, A. (2020). Noise or signal: The role of image backgrounds in object recognition. arXiv preprint arXiv:2006.09994.

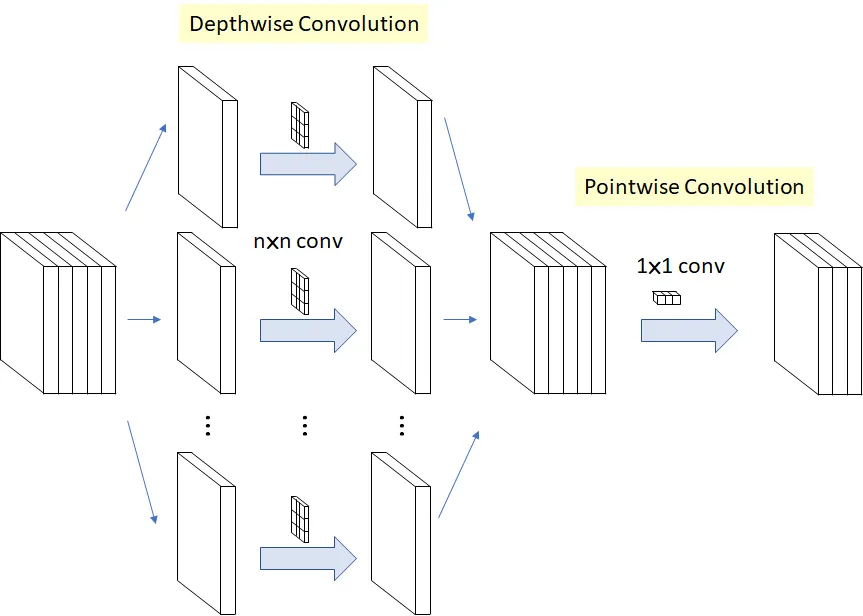

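The featured pre-trained model is Xception, which is built from depthwise separable convolutions: a convolution factored into a depthwise step (an n×n spatial convolution applied to each channel separately) and a pointwise step (a 1x1 convolution that mixes channels). The Xception paper motivates this from an "extreme" Inception module that applies the pointwise convolution first; common implementations such as Keras' `SeparableConv2D` apply the depthwise convolution first, and the paper argues the order is unimportant when such layers are stacked.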

Figure 2: Xception convolution procedure: a 1x1 pointwise convolution followed by n×n depthwise convolutions.
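To make the factorization concrete, here is a minimal NumPy sketch of a depthwise separable convolution (no padding, stride 1, no bias, one kernel per channel). It is not Xception's or Keras' actual implementation, and the helper names are hypothetical. It applies the depthwise step first, as Keras' `SeparableConv2D` does, and also counts parameters to show why the factorization is cheap.

```python
import numpy as np


def depthwise_conv2d(x: np.ndarray, kernels: np.ndarray) -> np.ndarray:
    """'Valid' cross-correlation with one k x k kernel per channel.

    x: (H, W, C) input; kernels: (k, k, C). Channels never mix in this step.
    """
    k = kernels.shape[0]
    h, w, c = x.shape
    oh, ow = h - k + 1, w - k + 1
    out = np.zeros((oh, ow, c), dtype=np.float64)
    for i in range(k):
        for j in range(k):
            # Shifted window times the (i, j) tap of every channel's kernel.
            out += x[i:i + oh, j:j + ow, :] * kernels[i, j, :]
    return out


def pointwise_conv2d(x: np.ndarray, weights: np.ndarray) -> np.ndarray:
    """1x1 convolution: x (H, W, C_in) @ weights (C_in, C_out) mixes channels per pixel."""
    return x @ weights


def separable_conv2d(x, dw_kernels, pw_weights):
    # Spatial filtering per channel first, then channel mixing.
    return pointwise_conv2d(depthwise_conv2d(x, dw_kernels), pw_weights)


def param_counts(k: int, c_in: int, c_out: int) -> tuple:
    """Weights in a standard k x k convolution vs. its depthwise separable factorization."""
    standard = k * k * c_in * c_out
    separable = k * k * c_in + c_in * c_out
    return standard, separable
```

For a 3x3 layer mapping 64 to 128 channels, `param_counts(3, 64, 128)` gives 73,728 weights for a standard convolution versus 8,768 for the separable one.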

To emulate the Only-FG image format, BIRDeep segments the foreground with GrabCut. GrabCut is designed as an interactive method in which a user draws a rectangle around the object; BIRDeep instead uses a fixed rectangle (50, 50, 200, 200) over its 299x299 pixel images, as illustrated in Figure 3. This keeps the pipeline simple, but the predefined cutting area occasionally yields suboptimal results.

Figure 3: BIRDeep’s foreground and background extraction process based on GrabCut masking.

Each foreground-only image is added to the training set together with four augmented copies:

- Horizontal flip
- 90-degree rotation
- Contrast increased by 50%
- Contrast decreased by 50%

Figure 4: BIRDeep’s data augmentation processes applied to a single image.
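The four augmentations can be sketched in NumPy as below. This assumes 8-bit H x W x C arrays, interprets "contrast increased/decreased by 50%" as scaling each pixel's distance from the image mean by 1.5 and 0.5, and rotates counter-clockwise; these choices and the helper names are assumptions rather than the notebooks' exact code.

```python
import numpy as np


def adjust_contrast(img: np.ndarray, factor: float) -> np.ndarray:
    """Scale each pixel's distance from the global image mean by `factor` (assumed definition)."""
    img_f = img.astype(np.float32)
    mean = img_f.mean()
    out = mean + factor * (img_f - mean)
    # Clip back into the valid 8-bit range before converting.
    return np.clip(out, 0, 255).astype(np.uint8)


def augment(img: np.ndarray) -> list[np.ndarray]:
    """Return the four augmented copies of one H x W x C uint8 image."""
    return [
        img[:, ::-1].copy(),        # horizontal flip (mirror left-right)
        np.rot90(img).copy(),       # 90-degree counter-clockwise rotation
        adjust_contrast(img, 1.5),  # contrast increased by 50%
        adjust_contrast(img, 0.5),  # contrast decreased by 50%
    ]
```

Together with the original, each foreground-only image therefore contributes five training samples.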

The Baseline Model achieved a test accuracy of 58%.

The Mixed Model approach can be found here and involves three Jupyter notebooks:

- Generating Subfolder - Generates the folder structure needed for the Mixed Model
- Data Balancing - Balances the dataset by limiting each class to 27 files
- Mixed Model - Achieved a test accuracy of 60%, training mainly on augmented Only-FG images together with some raw images
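A sketch of the balancing step, assuming the 27-file limit applies per class and that balancing is done by random undersampling of each class subfolder (both assumptions; `balance_classes` is a hypothetical helper). Classes with fewer than 27 images keep all of them.

```python
import random
from pathlib import Path


def balance_classes(root, per_class: int = 27, seed: int = 0) -> dict:
    """Pick at most `per_class` files from every class subfolder of `root`.

    Returns {class_name: sorted list of selected file paths}. The seed makes
    the random selection reproducible.
    """
    rng = random.Random(seed)
    selected = {}
    for class_dir in sorted(p for p in Path(root).iterdir() if p.is_dir()):
        files = sorted(f for f in class_dir.iterdir() if f.is_file())
        k = min(per_class, len(files))
        selected[class_dir.name] = sorted(rng.sample(files, k))
    return selected
```

Fixing the seed keeps the selected subset identical across machines such as Colab and Snellius.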

All other branches were created for testing purposes and can be ignored for the Applied Machine Learning submission.

| Name | Email |
|---|---|
| Alina Baciu | alina.baciu@student.uva.nl |
| Thomas Erhard | thomas.erhard@student.uva.nl |
| Jaime Pons | jaime.pons@student.uva.nl |
| Leonardo Provenzano | leonardo.provenzano@student.uva.nl |

Project Link: https://github.com/Jaime47/BIRDeep

To load images efficiently, we wrote a function that creates a subfolder for each class and sorts the images into it. To move the dataset easily between machines such as Colab and Snellius, we uploaded the directory containing both the train and test images to Roboflow, whose export feature let us transfer the images to any destination whenever needed.
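A sketch of such a function, assuming the labels are available as a filename-to-class mapping (for instance parsed from the competition's label file; the mapping and the name `organize_by_class` are hypothetical). It produces the one-subfolder-per-class layout that directory-based loaders such as Keras' `image_dataset_from_directory` expect.

```python
import shutil
from pathlib import Path


def organize_by_class(src_dir, dst_dir, labels: dict) -> list:
    """Copy each image into dst_dir/<label>/ and return the sorted class folder names.

    labels: hypothetical mapping {image filename: class label}.
    """
    src, dst = Path(src_dir), Path(dst_dir)
    for filename, label in labels.items():
        class_dir = dst / str(label)
        class_dir.mkdir(parents=True, exist_ok=True)
        # copy2 keeps file metadata; the originals stay in src_dir.
        shutil.copy2(src / filename, class_dir / filename)
    return sorted(p.name for p in dst.iterdir() if p.is_dir())
```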