
Description

1. Quick Debug Checklist

- Are you running on an Ubuntu 18.04 node? yes
- Are you running Kubernetes v1.13+? yes
- Are you running Docker (>= 18.06) or CRIO (>= 1.13+)? no
- Do you have `i2c_core` and `ipmi_msghandler` loaded on the nodes? yes
- Did you apply the CRD (`kubectl describe clusterpolicies --all-namespaces`)? yes

2. Issue or feature description

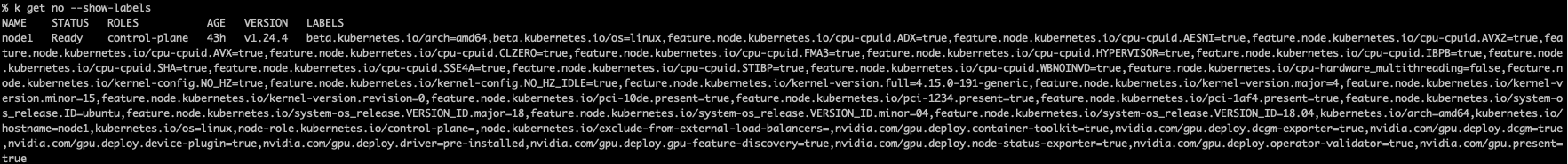

On a fresh install of Kubernetes with kubespray and default values for gpu-operator, the nodes are always labeled with nvidia.com/gpu.deploy.driver=pre-installed even though no NVIDIA drivers are installed. This causes all gpu-operator pods aside from node-feature-discovery to be stuck in Init.

    % k get no --show-labels
    NAME    STATUS   ROLES           AGE   VERSION   LABELS
    node1   Ready    control-plane   43h   v1.24.4   beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,feature.node.kubernetes.io/cpu-cpuid.ADX=true,feature.node.kubernetes.io/cpu-cpuid.AESNI=true,feature.node.kubernetes.io/cpu-cpuid.AVX2=true,feature.node.kubernetes.io/cpu-cpuid.AVX=true,feature.node.kubernetes.io/cpu-cpuid.CLZERO=true,feature.node.kubernetes.io/cpu-cpuid.FMA3=true,feature.node.kubernetes.io/cpu-cpuid.HYPERVISOR=true,feature.node.kubernetes.io/cpu-cpuid.IBPB=true,feature.node.kubernetes.io/cpu-cpuid.SHA=true,feature.node.kubernetes.io/cpu-cpuid.SSE4A=true,feature.node.kubernetes.io/cpu-cpuid.STIBP=true,feature.node.kubernetes.io/cpu-cpuid.WBNOINVD=true,feature.node.kubernetes.io/cpu-hardware_multithreading=false,feature.node.kubernetes.io/kernel-config.NO_HZ=true,feature.node.kubernetes.io/kernel-config.NO_HZ_IDLE=true,feature.node.kubernetes.io/kernel-version.full=4.15.0-191-generic,feature.node.kubernetes.io/kernel-version.major=4,feature.node.kubernetes.io/kernel-version.minor=15,feature.node.kubernetes.io/kernel-version.revision=0,feature.node.kubernetes.io/pci-10de.present=true,feature.node.kubernetes.io/pci-1234.present=true,feature.node.kubernetes.io/pci-1af4.present=true,feature.node.kubernetes.io/system-os_release.ID=ubuntu,feature.node.kubernetes.io/system-os_release.VERSION_ID.major=18,feature.node.kubernetes.io/system-os_release.VERSION_ID.minor=04,feature.node.kubernetes.io/system-os_release.VERSION_ID=18.04,kubernetes.io/arch=amd64,kubernetes.io/hostname=node1,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers=,nvidia.com/gpu.deploy.container-toolkit=true,nvidia.com/gpu.deploy.dcgm-exporter=true,nvidia.com/gpu.deploy.dcgm=true,nvidia.com/gpu.deploy.device-plugin=true,nvidia.com/gpu.deploy.driver=pre-installed,nvidia.com/gpu.deploy.gpu-feature-discovery=true,nvidia.com/gpu.deploy.node-status-exporter=true,nvidia.com/gpu.deploy.operator-validator=true,nvidia.com/gpu.present=true
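The driver label is easy to miss in a label list this long. As a rough sketch, the value of a single label can be pulled out of the comma-separated string with standard tools. This assumes a POSIX shell with `tr` and `awk`; `label_value` is a hypothetical helper name, and the `labels` string is a shortened copy of the output above.

```shell
# Hypothetical helper: print the value of one label from a comma-separated
# "key=value" list, in the form printed by `kubectl get nodes --show-labels`.
#   $1: label key
#   $2: comma-separated label list
label_value() {
  printf '%s\n' "$2" | tr ',' '\n' | awk -F= -v k="$1" '$1 == k { print $2 }'
}

# Shortened sample taken from the node1 labels in this report.
labels='kubernetes.io/os=linux,nvidia.com/gpu.deploy.driver=pre-installed,nvidia.com/gpu.present=true'

label_value nvidia.com/gpu.deploy.driver "$labels"   # prints: pre-installed
```

Against a live cluster, `kubectl get nodes -L nvidia.com/gpu.deploy.driver` shows the same label as an extra column.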

3. Information to attach (optional if deemed irrelevant)

- kubernetes pods status:

kubectl get pods -n gpu-operator

    % kubectl get pods -n gpu-operator
    NAME                                                          READY   STATUS     RESTARTS   AGE
    gpu-feature-discovery-5ldkw                                   0/1     Init:0/1   0          6m2s
    gpu-feature-discovery-m879f                                   0/1     Init:0/1   0          6m2s
    gpu-feature-discovery-rwf7k                                   0/1     Init:0/1   0          6m2s
    gpu-operator-569d9c8cb-g2qsb                                  1/1     Running    0          6m24s
    gpu-operator-node-feature-discovery-master-84c7c7c6cf-5xkqn   1/1     Running    0          6m24s
    gpu-operator-node-feature-discovery-worker-dmtvv              1/1     Running    0          6m24s
    gpu-operator-node-feature-discovery-worker-szjrf              1/1     Running    0          6m24s
    gpu-operator-node-feature-discovery-worker-wsr55              1/1     Running    0          6m24s
    nvidia-container-toolkit-daemonset-k7qrd                      0/1     Init:0/1   0          6m2s
    nvidia-container-toolkit-daemonset-qxr5j                      0/1     Init:0/1   0          6m2s
    nvidia-container-toolkit-daemonset-rkf5b                      0/1     Init:0/1   0          6m2s
    nvidia-dcgm-exporter-9rbsx                                    0/1     Init:0/1   0          6m2s
    nvidia-dcgm-exporter-cmql4                                    0/1     Init:0/1   0          6m2s
    nvidia-dcgm-exporter-q48vb                                    0/1     Init:0/1   0          6m2s
    nvidia-device-plugin-daemonset-fbz7l                          0/1     Init:0/1   0          6m2s
    nvidia-device-plugin-daemonset-qmq4t                          0/1     Init:0/1   0          6m2s
    nvidia-device-plugin-daemonset-x6s68                          0/1     Init:0/1   0          6m2s
    nvidia-operator-validator-kc7km                               0/1     Init:0/4   0          6m2s
    nvidia-operator-validator-lb4mw                               0/1     Init:0/4   0          6m2s
    nvidia-operator-validator-wf7vv                               0/1     Init:0/4   0          6m2s
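For anyone reproducing this, a small filter can tally how many pods are still waiting on init containers. This is only a sketch: `count_init_pods` is a hypothetical helper, and it assumes the default `kubectl get pods` column layout, with STATUS as the third column and a header on the first line.

```shell
# Hypothetical helper: count pods whose STATUS column starts with "Init:".
# Reads `kubectl get pods` output on stdin and skips the header line.
count_init_pods() {
  awk 'NR > 1 && $3 ~ /^Init:/ { n++ } END { print n + 0 }'
}

# Usage against a cluster (not run here):
#   kubectl get pods -n gpu-operator | count_init_pods
```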

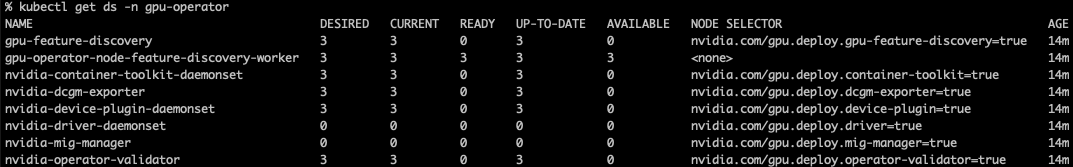

- kubernetes daemonset status:

kubectl get ds -n gpu-operator

    kubectl get ds -n gpu-operator
    NAME                                         DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR                                      AGE
    gpu-feature-discovery                        3         3         0       3            0           nvidia.com/gpu.deploy.gpu-feature-discovery=true   6m38s
    gpu-operator-node-feature-discovery-worker   3         3         3       3            3           <none>                                             7m
    nvidia-container-toolkit-daemonset           3         3         0       3            0           nvidia.com/gpu.deploy.container-toolkit=true       6m38s
    nvidia-dcgm-exporter                         3         3         0       3            0           nvidia.com/gpu.deploy.dcgm-exporter=true           6m38s
    nvidia-device-plugin-daemonset               3         3         0       3            0           nvidia.com/gpu.deploy.device-plugin=true           6m38s
    nvidia-driver-daemonset                      0         0         0       0            0           nvidia.com/gpu.deploy.driver=true                  6m38s
    nvidia-mig-manager                           0         0         0       0            0           nvidia.com/gpu.deploy.mig-manager=true             6m38s
    nvidia-operator-validator                    3         3         0       3            0           nvidia.com/gpu.deploy.operator-validator=true      6m38s
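The DaemonSet listing is consistent with the label problem: nvidia-driver-daemonset selects nodes labeled nvidia.com/gpu.deploy.driver=true, while the nodes carry the value pre-installed, so its DESIRED count is 0. The sketch below illustrates that mismatch with a plain string comparison, assuming a simple equality-based node selector. `selector_matches` is a hypothetical helper, not part of kubectl or gpu-operator, and `labels` is a shortened copy of the node1 labels from this report.

```shell
# Hypothetical helper: succeed (exit 0) if the "key=value" selector appears
# verbatim in a comma-separated label list, fail (exit 1) otherwise.
#   $1: selector, e.g. nvidia.com/gpu.deploy.driver=true
#   $2: comma-separated label list
selector_matches() {
  case ",$2," in
    *",$1,"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Shortened sample taken from the node1 labels in this report.
labels='nvidia.com/gpu.deploy.device-plugin=true,nvidia.com/gpu.deploy.driver=pre-installed,nvidia.com/gpu.present=true'

if selector_matches 'nvidia.com/gpu.deploy.driver=true' "$labels"; then
  echo "driver DaemonSet would select this node"
else
  echo "driver DaemonSet would skip this node"
fi
```

With the sample labels the second branch runs, which matches the 0 desired pods reported for nvidia-driver-daemonset.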

- If a pod/ds is in an error state or pending state

kubectl describe pod -n NAMESPACE POD_NAME

n/a

- If a pod/ds is in an error state or pending state

kubectl logs -n NAMESPACE POD_NAME

n/a

- NVIDIA shared directory:

ls -la /run/nvidia

    ls -la /run/nvidia
    total 0
    drwxr-xr-x  4 root root   80 Aug 25 17:09 .
    drwxr-xr-x 33 root root 1080 Aug 25 17:09 ..
    drwxr-xr-x  2 root root   40 Aug 25 17:09 driver
    drwxr-xr-x  2 root root   40 Aug 25 17:09 validations

- NVIDIA packages directory:

ls -la /usr/local/nvidia/toolkit

    ls -la /usr/local/nvidia/toolkit
    ls: cannot access '/usr/local/nvidia/toolkit': No such file or directory

- NVIDIA driver directory:

ls -la /run/nvidia/driver

    ls -la /run/nvidia/driver
    total 0
    drwxr-xr-x 2 root root 40 Aug 25 17:09 .
    drwxr-xr-x 4 root root 80 Aug 25 17:09 ..

- kubelet logs

journalctl -u kubelet > kubelet.logs

kubelet.logs.txt