[BEAM-2991] Sets a TTL on BigQueryIO.read().fromQuery() temp dataset #3883

jkff wants to merge 1 commit into apache:master

9b1aaa5 to d06d4b2

Rebased to fix compilation error. PTAL.

retest this please

Also fixes a bug where we start the query job twice - once to extract the files, once to get schema. Luckily it doesn't actually run twice, because inserting the same job a second time gives an ignorable error, but it was still icky. Also adds some logging.

reuvenlax

left a comment


However, please change the description to link to the appropriate JIRA.

|

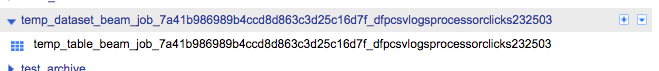

@jkff - Has Dataflow always created Datasets/Tables in BigQuery? I've never seen it do that before, and I always through it exported tables/queries to GCS for reading into the pipeline. The issue we are now seeing is that our Dataflow pipelines share the same project id as our BigQuery users. So, these temp datasets/tables are now showing up in their web UI. Our BigQuery users know nothing about Dataflow and the pipelines we run behind the scenes for them - they just ETL the data in and out of BigQuery. It's abstracted from the BigQuery users. We've had several users contact us asking why they suddenly see these weird looking datasets and tables in the BigQuery Web UI e.g: This is not a good experience for our BQ users. Also, what if a batch pipeline takes more than 24hrs? We've had batch pipelines run close to that due to sheer volume of data its ingesting and writing. Cheers, |

In reply to Graham Polley's comment of Thu, Oct 12, 2017, 9:53 PM:

Yes, I believe Dataflow has always created temporary datasets when reading from a query (except perhaps in some versions before 2.0? I'm not sure, but I don't think so, because BigQuery doesn't have a feature to directly export the results of a large query to files). Query results are exported to a dataset, then the dataset is exported to files and the dataset is deleted, then the job processes the files, and the files are deleted at some point.
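The read flow described in the reply can be sketched as a toy in-memory model. This is only an illustration, not Beam's or BigQuery's code: `TempDataset`, `read_from_query`, the dataset name, and the 24-hour TTL are all assumptions made for the example (the thread mentions 24 hours only in a question). It shows why a TTL would act as a safety net when the explicit delete step never runs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List


@dataclass
class TempDataset:
    """Hypothetical stand-in for the temporary dataset; not a BigQuery client object."""
    dataset_id: str
    created_at: datetime
    ttl: timedelta  # assumed value, chosen only for illustration
    deleted: bool = False

    def is_gone(self, now: datetime) -> bool:
        # Gone if it was deleted explicitly or if its TTL has elapsed.
        return self.deleted or now >= self.created_at + self.ttl


def read_from_query(dataset: TempDataset, cleanup_succeeds: bool) -> List[str]:
    """Walk through the steps in the order the reply describes; return them as a log."""
    steps = [
        f"run query, write results to {dataset.dataset_id}",
        f"export {dataset.dataset_id} to files",
    ]
    if cleanup_succeeds:
        dataset.deleted = True
        steps.append(f"delete {dataset.dataset_id}")
    steps += ["process files", "delete files"]
    return steps


start = datetime(2017, 10, 12, tzinfo=timezone.utc)
leaked = TempDataset("temp_dataset_example", start, ttl=timedelta(hours=24))
read_from_query(leaked, cleanup_succeeds=False)  # simulate a job that dies before cleanup

print(leaked.is_gone(start + timedelta(hours=1)))   # False: leftover dataset still visible
print(leaked.is_gone(start + timedelta(hours=25)))  # True: the TTL has expired
```

In this model, a dataset whose cleanup step is skipped stays visible only until the TTL elapses, whereas without a TTL it would linger indefinitely.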


R: @reuvenlax