[Improve Doc]: Modify the disadvantages of the lazyLoadOnStart feature. #10608

jihoonson merged 2 commits into apache:master


I will keep digging and try to make another PR to fix this problem with lazyOnStart.


@jihoonson @nishantmonu51 @capistrant Thanks for your review and merge!

I just made a PR (#10650) to solve the problem described in the quote and tested it on a Druid cluster.


@zhangyue19921010 I guess this doc should be modified again according to #10688. Corrupted segments no longer need manual deletion.


Hi @kaijianding. Thanks for the reminder. I will make a further PR to modify the docs again today.


Done. #10778

…re. (apache#10608) * modify docs * modify docs Co-authored-by: yuezhang <yuezhang@freewheel.tv>

Description

Conclusion

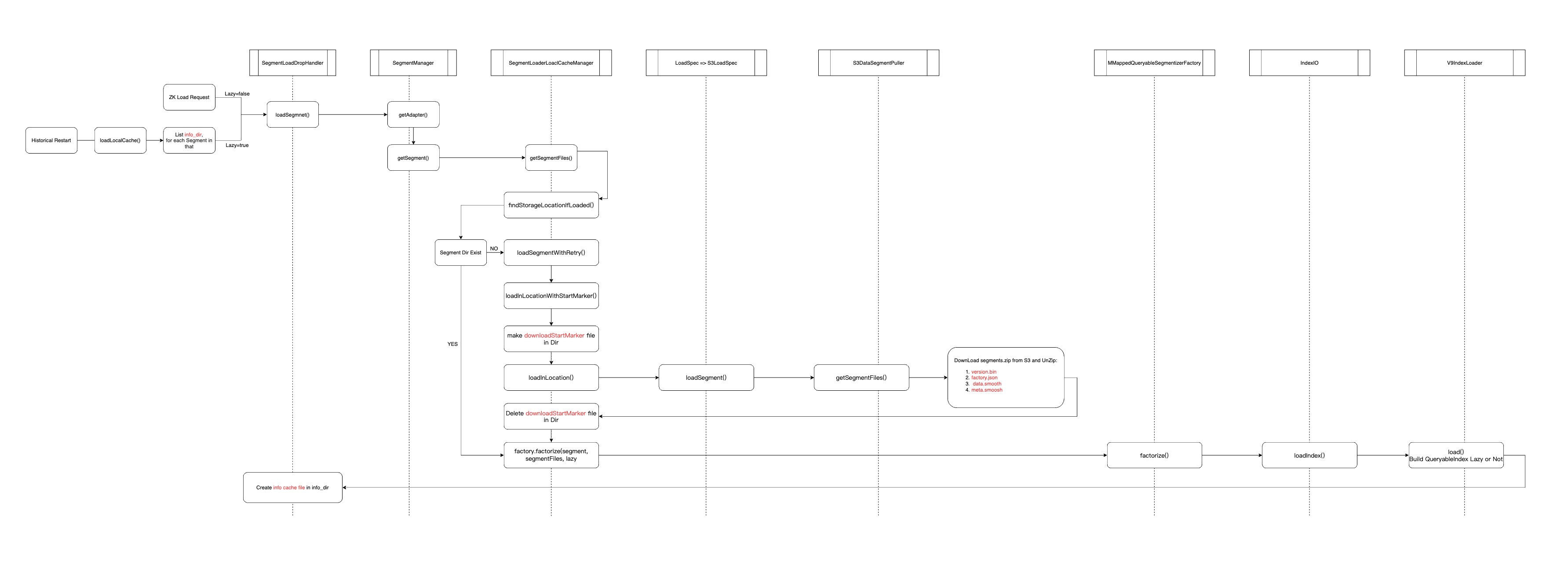

If a served segment is damaged, for example by maliciously corrupting `meta.smoosh`, a Historical node with lazyOnStart enabled will start up successfully, but queries using that segment will fail at runtime.

Here is the workflow of a Historical server loading segments.

Condition 1: When a Historical server restarts, it tries to load the locally cached segments listed in `info_dir`.

Condition 2: After downloading/creating the segment files successfully, the Historical creates a file in `info_dir` named after the corresponding segment ID.

If the Historical crashes while downloading/creating segment files, before the `info_dir` file is created, it will not try to load this segment lazily at startup. Instead, loading of this segment is triggered by ZKCurator, which is a non-lazy action. The non-lazy load throws an exception because of the damaged segment files and deletes all the damaged files.

If the Historical crashes after the `info_dir` file is created, all of the segment's files are complete.

What's more!

If a served segment is damaged, for example by maliciously corrupting `meta.smoosh`, a Historical node with lazyOnStart enabled will start up successfully, but queries using that segment will fail at runtime.

I manually deleted `video_cro_network_name,0,23837261,23910951` from `meta.smoosh` and restarted the Historical node (with lazyOnStart enabled). The Historical server started up successfully, but queries failed.
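The two behaviours described above can be sketched in a few lines of Java. This is only an illustration under stated assumptions, not Druid's implementation: `SegmentCacheSketch`, `download`, `segmentsToLoadOnStart` and `loadLazily` are hypothetical names, and `meta.smoosh` is modelled simply as text lines of the form `name,fileNum,start,end`, like the entry deleted in the experiment.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;
import java.util.function.Supplier;
import java.util.stream.Stream;

// Hypothetical illustration only; these are not Druid's actual classes.
class SegmentCacheSketch {
    private final Path infoDir;    // one marker file per completely downloaded segment
    private final Path segmentDir; // one subdirectory of segment files per segment

    SegmentCacheSketch(Path infoDir, Path segmentDir) {
        this.infoDir = infoDir;
        this.segmentDir = segmentDir;
    }

    // Condition 2: the marker is created only after the segment files are written.
    void download(String segmentId, String meta, boolean crashBeforeMarker) throws IOException {
        Path dir = Files.createDirectories(segmentDir.resolve(segmentId));
        Files.write(dir.resolve("meta.smoosh"), meta.getBytes(StandardCharsets.UTF_8));
        if (crashBeforeMarker) {
            return; // simulated crash: files exist, but info_dir has no marker
        }
        Files.createFile(infoDir.resolve(segmentId));
    }

    // Condition 1: on restart, only segments with a marker in info_dir are loaded.
    List<String> segmentsToLoadOnStart() throws IOException {
        List<String> ids = new ArrayList<>();
        try (Stream<Path> markers = Files.list(infoDir)) {
            markers.forEach(p -> ids.add(p.getFileName().toString()));
        }
        return ids;
    }

    // Lazy load (assumed model): meta.smoosh is not inspected until a query calls get().
    Supplier<String> loadLazily(String segmentId, String requiredColumn) {
        Path meta = segmentDir.resolve(segmentId).resolve("meta.smoosh");
        return () -> {
            try {
                for (String line : Files.readAllLines(meta)) {
                    if (line.startsWith(requiredColumn + ",")) {
                        return line;
                    }
                }
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
            throw new IllegalStateException("missing meta.smoosh entry for " + requiredColumn);
        };
    }
}
```

In this model, a segment whose download crashed before the marker was written is never returned by `segmentsToLoadOnStart()`. A segment whose `meta.smoosh` has lost an entry is still handed out by `loadLazily(...)` without error, and the failure appears only when `get()` is called, which mirrors a startup that succeeds followed by a query that fails.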

This PR has:

Key changed/added classes in this PR

index.md