disable broker parallel merge for Java versions greater than 8 and less than 20 due to apparent bug #14130


…ss than 20 due to apparent bug with how we are using fork join pool


I guess this should probably just be disabled by default instead of completely, to allow risk takers to still enable it for these versions. I'll modify this PR in a bit so it is just off by default, and log a warning if it detects the config as enabled on these Java versions, so that things can be monitored more carefully to ensure the brokers are OK.
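A minimal sketch of the off-by-default behavior described in the comment above, assuming the setting is a nullable Boolean where null means "not configured". The class and method names are hypothetical, and java.util.logging stands in for whatever logger the broker actually uses; this is not the PR's code.

```java
import java.util.logging.Logger;

// Hypothetical sketch, not Druid's actual config class.
final class ParallelMergeConfigSketch {
  private static final Logger LOG = Logger.getLogger(ParallelMergeConfigSketch.class.getName());

  // Java 9 through 19 are the versions where the pool-blocking behavior was observed.
  static boolean isAffectedJavaVersion(int majorVersion) {
    return majorVersion >= 9 && majorVersion < 20;
  }

  /**
   * @param explicitSetting the operator's setting, or null if it was not configured
   * @param majorVersion    the running JVM's major version
   */
  static boolean useParallelMergePool(Boolean explicitSetting, int majorVersion) {
    if (!isAffectedJavaVersion(majorVersion)) {
      // Unaffected versions keep parallel merging enabled unless explicitly turned off.
      return explicitSetting == null || explicitSetting;
    }
    if (explicitSetting == null) {
      return false; // affected versions: off by default
    }
    if (explicitSetting) {
      // Risk takers may still opt in, but the brokers should be watched closely.
      LOG.warning("Parallel merge pool explicitly enabled on Java " + majorVersion
          + "; monitor broker merge pool threads closely.");
    }
    return explicitSetting;
  }
}
```

On Java 10 and later, the running JVM's major version could be read with Runtime.version().feature(); the code under review calls JvmUtils.majorVersion() instead.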


    public boolean useParallelMergePool()
    {
      if (JvmUtils.majorVersion() < 20 && JvmUtils.majorVersion() >= 9) {


A comment here explaining the reasoning would be nice.

    if (hasTimeout) {
      final long thisTimeoutNanos = timeoutAtNanos - System.nanoTime();
      if (thisTimeoutNanos < 0) {
        item = null;


What's this line (and similar ones like it) for?


These changes were just me being cautious, to help ensure that isReleasable can always return true if an exception is thrown. I'm not sure whether these spots are actually problematic or whether the changes fix any problems, but from reading the fork join pool code and how it does managed blocking, there were a handful of places where isReleasable is checked in a loop, which made me a bit nervous, so I wanted to minimize the chances of anything getting stuck.

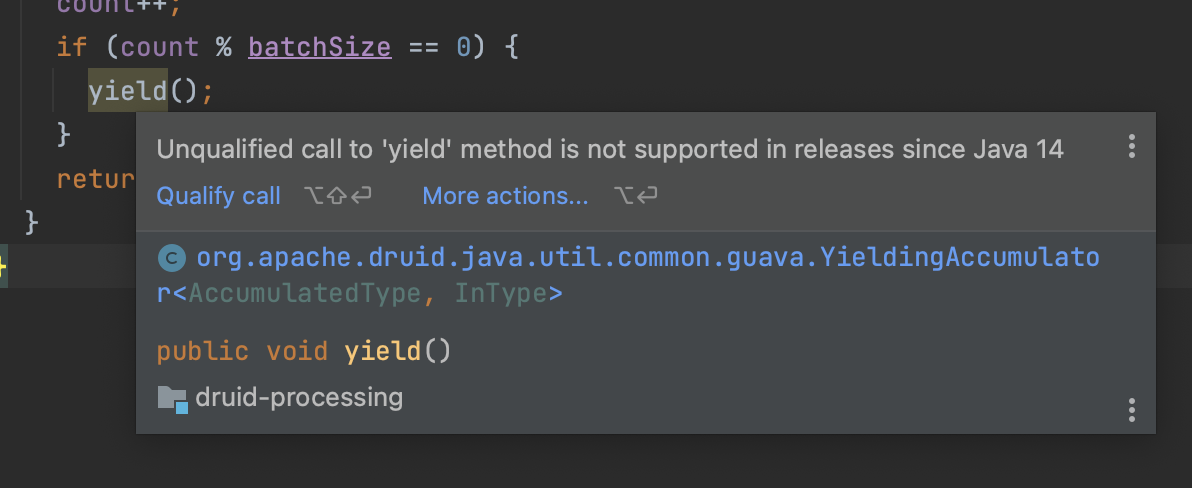
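To illustrate the kind of caution described above, here is a minimal sketch of a ForkJoinPool.ManagedBlocker that waits for one item from a queue with a deadline, mirroring the `item = null` on timeout from the diff. TimedQueueTaker and takeWithTimeout are hypothetical names, not Druid's implementation. The point is the finally block: once block() has run, isReleasable() returns true even if the wait timed out or threw, so a caller that re-checks isReleasable() in a loop cannot spin on this blocker forever.

```java
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.TimeUnit;

// Hypothetical ManagedBlocker, not the class under review.
final class TimedQueueTaker<E> implements ForkJoinPool.ManagedBlocker {
  private final BlockingQueue<E> queue;
  private final long timeoutAtNanos;
  private volatile E item;
  private volatile boolean finished; // set once block() has run, however it exited

  TimedQueueTaker(BlockingQueue<E> queue, long timeout, TimeUnit unit) {
    this.queue = queue;
    this.timeoutAtNanos = System.nanoTime() + unit.toNanos(timeout);
  }

  @Override
  public boolean block() throws InterruptedException {
    try {
      final long remainingNanos = timeoutAtNanos - System.nanoTime();
      if (remainingNanos < 0) {
        item = null; // deadline already passed: give up without waiting
      } else {
        item = queue.poll(remainingNanos, TimeUnit.NANOSECONDS); // null on timeout
      }
    } finally {
      finished = true; // cautious: a timeout or exception still releases the blocker
    }
    return true;
  }

  @Override
  public boolean isReleasable() {
    // ForkJoinPool may call this repeatedly, so it must not stay false forever
    return finished || item != null || (item = queue.poll()) != null;
  }

  E getItem() {
    return item;
  }

  /** Waits via ForkJoinPool.managedBlock; returns the item, or null on timeout or interrupt. */
  static <T> T takeWithTimeout(BlockingQueue<T> queue, long timeout, TimeUnit unit) {
    TimedQueueTaker<T> taker = new TimedQueueTaker<>(queue, timeout, unit);
    try {
      ForkJoinPool.managedBlock(taker); // a pool may add a spare worker while this blocks
    } catch (InterruptedException e) {
      Thread.currentThread().interrupt();
      return null;
    }
    return taker.getItem();
  }
}
```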

     count++;
     if (count % batchSize == 0) {
    -  yield();
    +  this.yield();


Is this change cosmetic or does it change behavior?
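One plausible reading, assuming the motivation was compatibility with newer compilers (the thread does not say): since Java 14, `yield` is a restricted identifier, so javac rejects an unqualified call to a method named `yield`, while a qualified call such as `this.yield()` still compiles and invokes the same method. Under that assumption the change is cosmetic at runtime. BatchingLoop is a hypothetical stand-in, not the class under review.

```java
// Hypothetical stand-in for a loop that periodically calls its own yield() method.
final class BatchingLoop {
  private int yields = 0;

  // Declaring a method named yield is still legal; only unqualified calls are restricted.
  private void yield() {
    yields++;
  }

  /** Runs over {@code rows} rows and returns how many times yield() was called. */
  int run(int rows, int batchSize) {
    int count = 0;
    for (int i = 0; i < rows; i++) {
      count++;
      if (count % batchSize == 0) {
        // A bare `yield();` here is a compile error on javac 14+; the qualified form is not.
        this.yield();
      }
    }
    return yields;
  }
}
```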

     public boolean isReleasable()
     {
    -  return resultBatch != null && !resultBatch.isDrained();
    +  return yielder.isDone() || (resultBatch != null && !resultBatch.isDrained());


Same comment as above: this is just about being overly cautious.

    -assertException(input);
    +Throwable t = Assert.assertThrows(RuntimeException.class, () -> assertException(input));
    +Assert.assertEquals("exploded", t.getMessage());
    +Assert.assertTrue(pool.awaitQuiescence(1, TimeUnit.SECONDS));


Suggestion: put the wait-for-quiescence check in an @After method.
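A stdlib-only sketch of the shared check that suggestion implies: every test would verify that the pool goes idle without repeating the assertion in each case. PoolQuiescenceCheck is a hypothetical name; ForkJoinPool.awaitQuiescence returns false if pool work is still active when the timeout elapses.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.TimeUnit;

// Hypothetical helper; a JUnit @After method would call it once per test.
final class PoolQuiescenceCheck {
  static void assertPoolQuiescent(ForkJoinPool pool, long timeout, TimeUnit unit) {
    // false means some pool thread is still busy (for example, stuck) after the timeout
    if (!pool.awaitQuiescence(timeout, unit)) {
      throw new AssertionError(
          "pool did not become idle; active threads: " + pool.getActiveThreadCount());
    }
  }
}
```

In a JUnit 4 test class this might look like `@After public void tearDown() { PoolQuiescenceCheck.assertPoolQuiescent(pool, 1, TimeUnit.SECONDS); }`, which keeps the idle-pool check from being forgotten in new test cases.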


FYI, I am still trying to prove this is actually real and not an artifact of how the test is written.


@clintropolis I took a look at this, and I think there is an assumption that is broken by the testing configuration, in a way that could also occur in the real world. It's late here, so I'll share my notes and wrap up. From the user's perspective, the query is canceled. However, on the system side, (I think) the yielder keeps reading data at least until it can load a batch of data. In short, I think the two-layer yielders do not propagate cancellation when the exception occurs, so the one started on the fjpool keeps running. If I extend the timeout, capture a stack trace, and add a whole ton of println statements, I get the stdout, in which we can see:

I haven't quite gotten to the bottom of it, but there's something here. I think it's vaguely in this area: In the test:


Description

Through a bit of luck, I stumbled into a possible bug with broker parallel merging on certain Java versions, which can eventually result in all worker threads becoming completely blocked, effectively blocking queries.

I have adjusted ParallelMergeCombiningSequenceTest to check that the pool becomes idle after a short period whenever an exception occurs. The adjusted tests fail pretty consistently for me with Java 11 and 17, but can repeat indefinitely on Java 8 and Java 20 on my laptop. Some of these timeout tests are still ever so slightly racy, since the exception message can vary depending on which part of the code triggers the timeout exception, but the important part is the previously missing check that the pool becomes idle.

I still haven't determined why this happens: perhaps a Java bug, or at least some issue with how we are configuring and using the pool on these versions. It could also just be an artifact of how the test is written and not a real issue; I'm not really sure yet.

Release note

Broker parallel merge is now disabled for Java versions greater than 8 and less than 20 due to a bug in how this mechanism operates on these versions, which can result in all pool threads becoming blocked, effectively halting the ability to process most queries. Java 8 and Java 20 do not appear to exhibit these symptoms, so they still have parallel result merging enabled by default.

This PR has: