Re-retry failing flaky tests from CI pipeline #2367

Thanks for working on improving the Helix tests. Overall, though, I don't like this kind of change.

Thanks a lot @jiajunwang for the review and the valuable feedback! I agree that tests should be retried only when it makes sense, but right now our CI is unstable, and it is painful for a contributor to submit a PR, wait for the results, and then, if any tests fail, verify them locally (where they pass most of the time). This is an attempt to reduce that pain. I understand that it might not resolve the issue, but I can try :) That is why I have not published this PR yet: I will first run this CI a few times, and if the results are consistently positive, I will submit it for review :)

I guess it makes sense: when a test fails today, people just retry it manually, so this avoids that bit of human toil. That said, one thing I believe is necessary is to count the retried tests as unstable tests as well. We don't want to lose track of the unstable-test signal, even as we make people's lives easier.

The current approach to retrying failed tests is not working, and a few suggested solutions say to pass this flag at the mvn clean stage as well. Trying that.

18f35e0 to 2708225

@qqu0127 can I get your feedback/review on this, please?

qqu0127

left a comment

One question, overall LGTM.

Ready to merge, approved by @qqu0127!

Add rerun option for failing flaky tests from CI pipeline.

Issues

Fixes #2375

Description

Problem:

Currently there are many flaky tests (~10) that fail at least once in 10 runs. Analysis of the failures suggests that many of them are caused by conditions outside the test's control, such as interruption by a stale zk client from a previous test, or callbacks. These failures can be avoided if the tests are re-run independently. Today, contributors have to re-run the failing tests manually on their local machines and, once they pass, show the result as proof.

Solution:

This fix proposes using the Surefire plugin to re-run failing tests. The plugin re-runs each failing test independently within the same CI pipeline run.
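
For reference, a minimal sketch of what such an invocation might look like. It assumes the rerun option is Surefire's `rerunFailingTestsCount` user property, which this description does not name. The retry count of 3 and the `test` goal are illustrative only and are not taken from this PR.

```shell
# Assumption: the "rerun option" is Surefire's rerunFailingTestsCount property.
# The value 3 is a hypothetical retry count, not the one used in this PR.
# -B runs Maven in batch (non-interactive) mode, which suits CI logs.
mvn -B test -Dsurefire.rerunFailingTestsCount=3
```

With this property set, Surefire reports a test that fails and then passes on a rerun as a flake in its summary, so the instability signal is not lost. Support depends on the test provider and the Surefire version, so whether it takes effect for the tests in this repository should be checked against the Surefire documentation.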

Tests

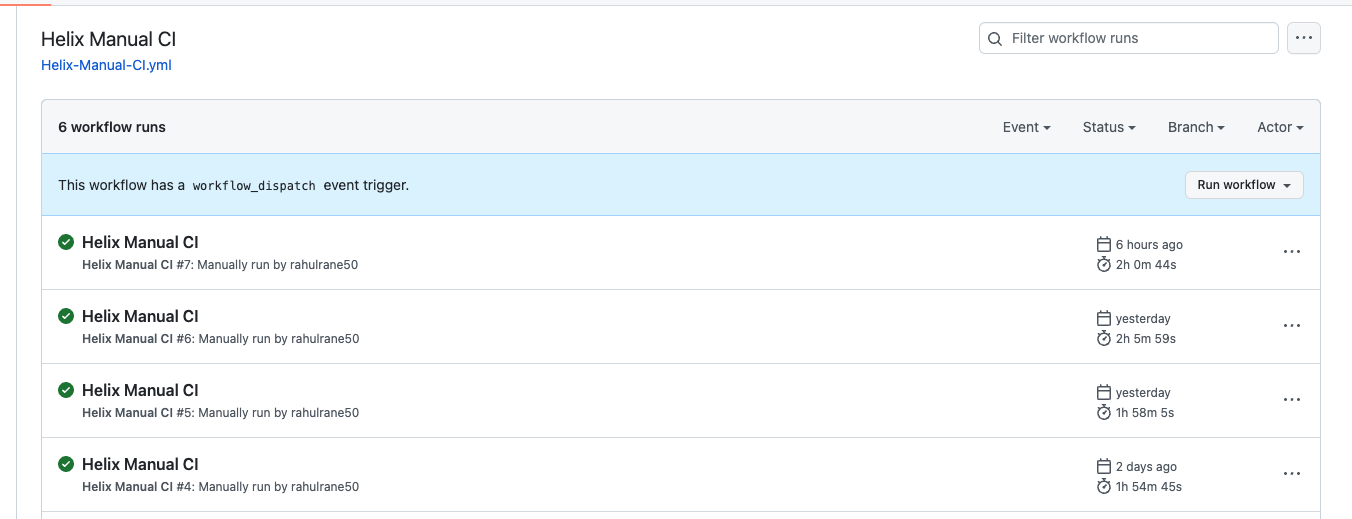

I verified this option on my repo 4 times, and the CI run was successful all 4 times. This doesn't guarantee that CI will never fail due to flaky tests, but it gives us enough confidence to try this option in our CI pipelines.

YAML files validated.