
Closed

Labels

area:sql (SQL interfaces), priority:high (Significant impact; potential bugs)

Description

The environment is CDH 6.3.2 with Hudi 0.11.1.

I want to test DELETE in Spark SQL.

The table has 4 records, with vehicle_model_id values [100, 101, 102, 105].

When I run this in Spark SQL:

delete from zone_test.hudi_spark_table0719_0101 where vehicle_model_id = '102';

it works fine, and 3 records remain in the table.

But when I run :

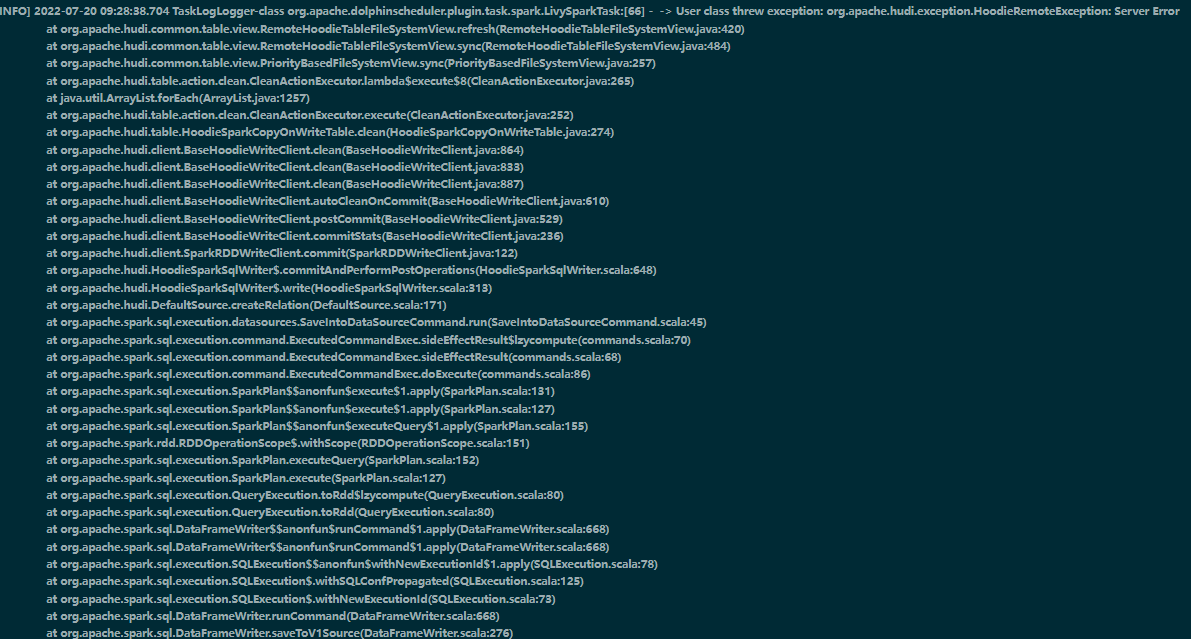

delete from zone_test.hudi_spark_table0719_0101 where vehicle_model_id = '109'; 109 does‘t exists in the table。

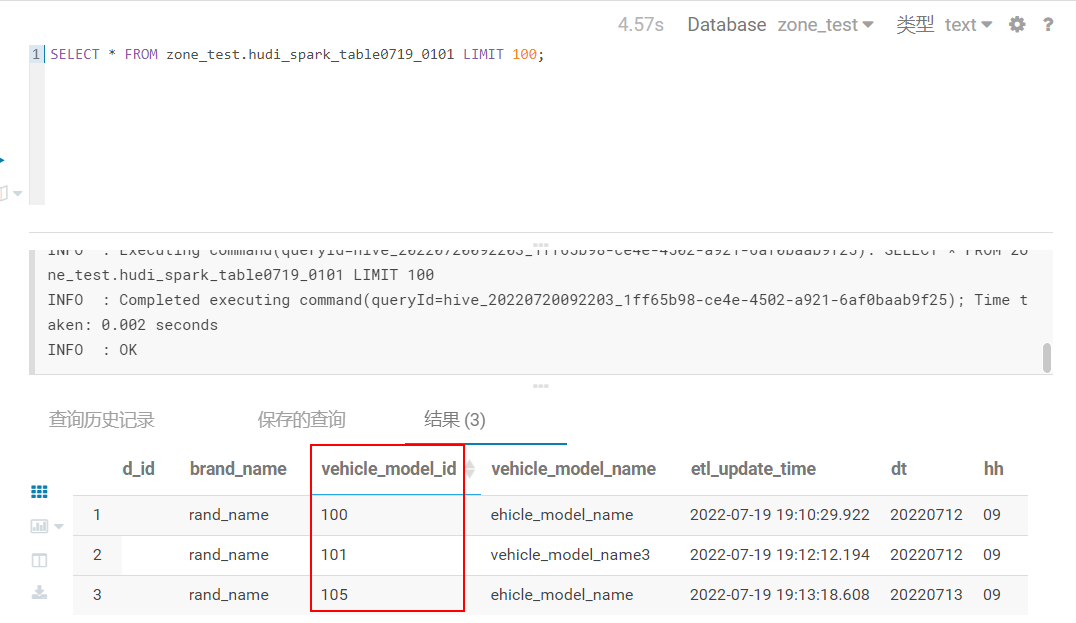

and i query by hive

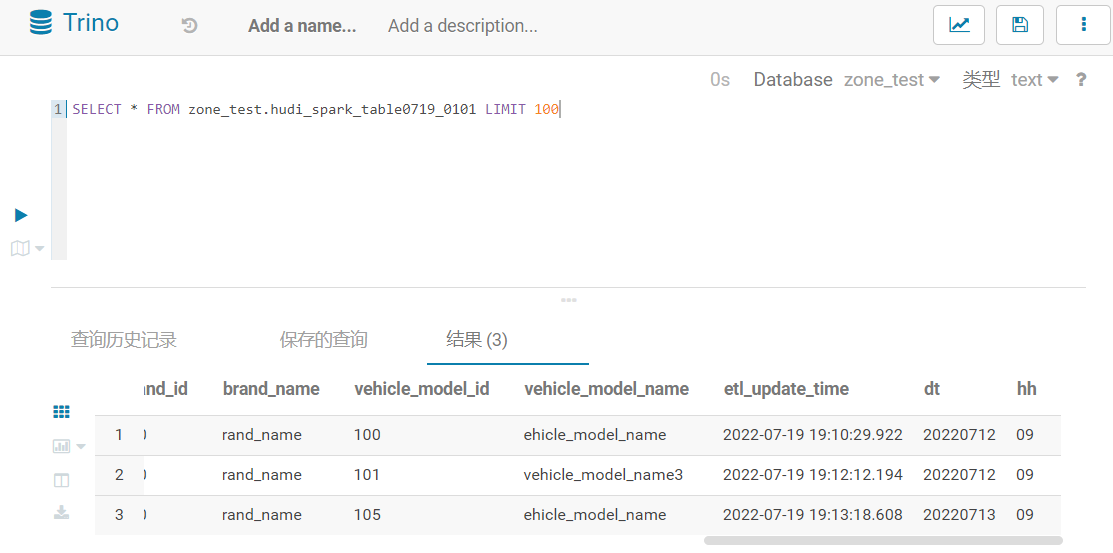

and i query by trino ,it query normal。

is hudi can't delete not exsits record?
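The steps above can be condensed into a reproduction sketch like the one below. Only the table name, the `vehicle_model_id` column, and its four values come from this report. The column type (string, inferred from the quoted literals), the extra `model_name` and `ts` columns, and the table properties (including the copy-on-write table type) are assumptions for illustration, not the actual table definition.

```sql
-- Hypothetical schema: only vehicle_model_id is taken from the report.
-- model_name, ts, and all tblproperties values are assumed.
create table zone_test.hudi_spark_table0719_0101 (
  vehicle_model_id string,
  model_name       string,
  ts               bigint
) using hudi
tblproperties (
  type = 'cow',
  primaryKey = 'vehicle_model_id',
  preCombineField = 'ts'
);

-- Four records, matching the ids listed in the report.
insert into zone_test.hudi_spark_table0719_0101 values
  ('100', 'a', 1),
  ('101', 'b', 1),
  ('102', 'c', 1),
  ('105', 'd', 1);

-- Case 1: the key exists. Per the report, 3 records remain afterwards.
delete from zone_test.hudi_spark_table0719_0101 where vehicle_model_id = '102';
select vehicle_model_id from zone_test.hudi_spark_table0719_0101;

-- Case 2: the key does not exist. This is the statement that leads to the problem.
delete from zone_test.hudi_spark_table0719_0101 where vehicle_model_id = '109';
select vehicle_model_id from zone_test.hudi_spark_table0719_0101;
```

Running the final `select` from Spark SQL, Hive, and Trino after case 2 should show whether the behavior differs by query engine, as described above.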
