[MINOR] Change MINI_BATCH_SIZE to 2048 #4862

Merged

danny0405 merged 1 commit into apache:master on Feb 28, 2022

Merged

Conversation

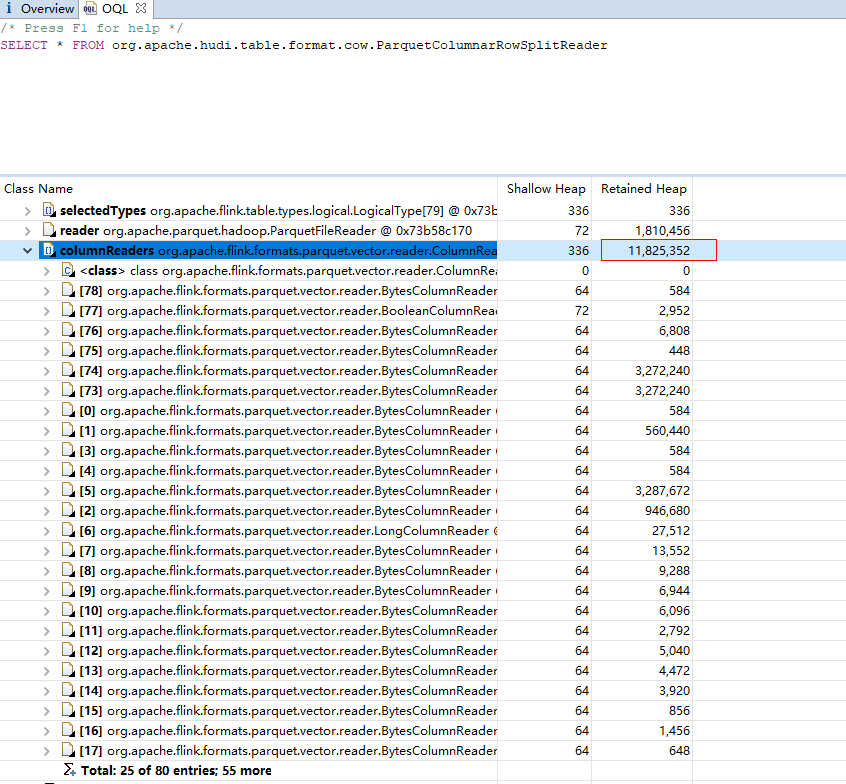

ParquetColumnarRowSplitReader#batchSize is 2048, so changing MINI_BATCH_SIZE to 2048 as well should reduce the amount of data cached in memory.

Contributor

Author

@hudi-bot run azure


Contributor

Author

@danny0405 pls review :)

danny0405 reviewed on Feb 22, 2022

    - private static final int MINI_BATCH_SIZE = 1000;
    + private static final int MINI_BATCH_SIZE = 2048;

Contributor


I see your comment:

ParquetColumnarRowSplitReader#batchSize is 2048, so Changing MINI_BATCH_SIZE to 2048 will reduce memory cache

Thanks, do we have any metrics to illustrate the benefit?

Contributor

Author


I don't have any metrics right now, but judging from the code, the time that data is stored in memory should be reduced, which helps it avoid being promoted to the Old Generation.

Contributor

Author


In our production environment, Flink Hudi jobs require a lot of memory, and I'm checking memory usage.
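The alignment argument above can be made concrete with a little arithmetic. The sketch below is not Hudi code: it assumes a reader that materializes rows in fixed-size batches and a consumer that drains rows in fixed-size mini-batches, and it counts how many consumer mini-batches a single reader batch can overlap. The class and method names are hypothetical, and the sketch says nothing about actual heap usage, which would still need the metrics requested in the review.

```java
public class MiniBatchAlignment {

    /**
     * Hypothetical helper: returns the largest number of consumer mini-batches
     * that any single reader batch overlaps, checking the first
     * {@code readerBatches} reader batches of a continuous row stream.
     */
    static int maxCyclesPerReaderBatch(int readerBatchSize, int miniBatchSize, int readerBatches) {
        int max = 0;
        for (int b = 0; b < readerBatches; b++) {
            long firstRow = (long) b * readerBatchSize;    // global index of the batch's first row
            long lastRow = firstRow + readerBatchSize - 1; // global index of the batch's last row
            // Mini-batch index of a row is rowIndex / miniBatchSize (integer division).
            int cycles = (int) (lastRow / miniBatchSize - firstRow / miniBatchSize + 1);
            max = Math.max(max, cycles);
        }
        return max;
    }

    public static void main(String[] args) {
        int readerBatchSize = 2048; // value quoted for ParquetColumnarRowSplitReader#batchSize
        System.out.println("mini-batch 1000: "
            + maxCyclesPerReaderBatch(readerBatchSize, 1000, 100));
        System.out.println("mini-batch 2048: "
            + maxCyclesPerReaderBatch(readerBatchSize, 2048, 100));
    }
}
```

Under these assumptions, a 2048-row reader batch can straddle up to four 1000-row mini-batches, whereas with a 2048-row mini-batch each reader batch is drained within a single one. That is consistent with the claim that rows stay referenced for less time, but it is only an illustration of the reasoning, not a measurement.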

rkkalluri pushed a commit to rkkalluri/hudi that referenced this pull request on Mar 6, 2022

ParquetColumnarRowSplitReader#batchSize is 2048, so Changing MINI_BATCH_SIZE to 2048 will reduce memory cache.

vingov pushed a commit to vingov/hudi that referenced this pull request on Apr 3, 2022

ParquetColumnarRowSplitReader#batchSize is 2048, so Changing MINI_BATCH_SIZE to 2048 will reduce memory cache.

stayrascal pushed a commit to stayrascal/hudi that referenced this pull request on Apr 12, 2022

ParquetColumnarRowSplitReader#batchSize is 2048, so Changing MINI_BATCH_SIZE to 2048 will reduce memory cache.
