[HUDI-4338] resolve the data skew when using flink datastream write hudi#5997

[HUDI-4338] resolve the data skew when using flink datastream write hudi#5997liufangqi wants to merge 1 commit intoapache:masterfrom

Conversation

|

@hudi-bot run azure |

| bucketDataStream = conf.getOptional(FlinkOptions.BUCKET_ASSIGN_TASKS).orElse(defaultParallelism) == | ||

| conf.getInteger(FlinkOptions.WRITE_TASKS) ? bucketDataStream : bucketDataStream | ||

| // shuffle by fileId(bucket id) | ||

| .keyBy(record -> record.getCurrentLocation().getFileId()); |

There was a problem hiding this comment.

Different keys may be assigned the same fileid in different tasks, Is there a concurrency problem if you do not repartition by fileid?

There was a problem hiding this comment.

Thanks for your review, actually, the bucket data stream is a keyed stream that repartition by hoodie record key.

If the bucket assgin opeator 's task num equals the write operator's, we don't need the repartition which repartition by location.

There was a problem hiding this comment.

There will not be the differrent tasks that hold the same fileid. Cause the bucket assign operator task get the keyed stream that repatitioned by hoodie key which means that every bucket assign operator task hold the unique file id, and wirte operator task : bucket assgin operator task is 1 : 1 which is the preconditions of the improvement.

There was a problem hiding this comment.

Thanks, I see what you mean, when repartition, each task has decided to write only one fileid.

There was a problem hiding this comment.

Actually, I think we can build a map-only data stream when we don't need update or delete. I can create a new issue to discuss this for avoid confict.

There was a problem hiding this comment.

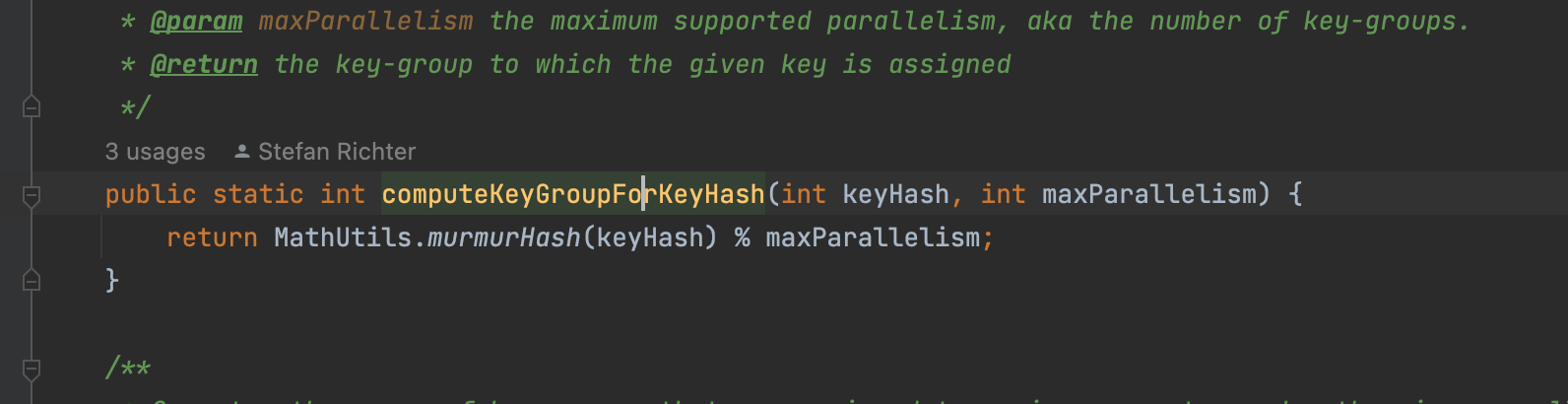

Then why not try to promote the hash algorithm, say the murmur hash ?

@danny0405

The hash algorithm what flink use is the murmur hash.

The point is that the bucket num is small that equals to the downstream task num.

See this example code:

int bucketNum = 10;

int taskNum = 10;

int [] map = new int[taskNum];

for (int i = 0; i < bucketNum ; i++) {

map[Math.abs(murmurHash(UUID.randomUUID().hashCode())) % taskNum] += 1;

}

for (int num:

map) {

System.out.println(num);

}

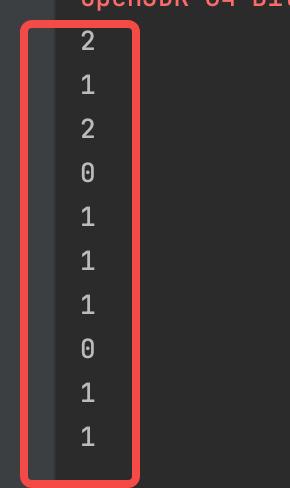

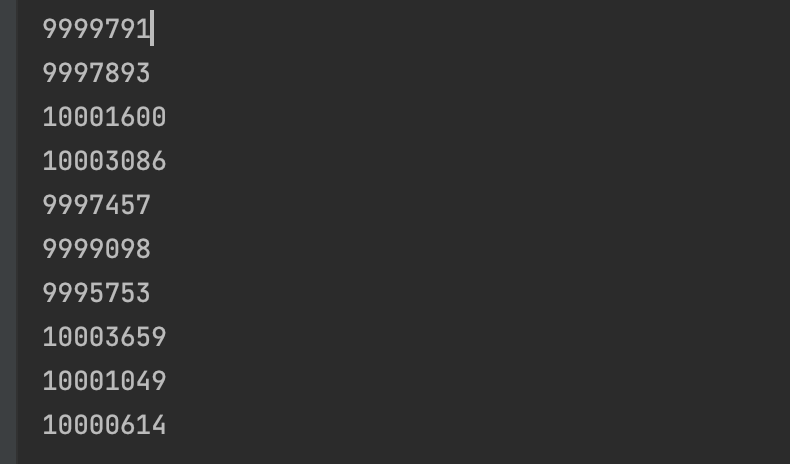

if we just generate 10 buckets for 10 tasks, we can get like this:

if we generate more buckets for 10 tasks, it seems like more balance:

There was a problem hiding this comment.

But we should figure out more random algorithm here instead of just fix the case when bucket assign and write task have the same parallelism, how about the bucket assign has parallelism 4 and write task has parallelism 6 here ?

There was a problem hiding this comment.

But we should figure out more random algorithm here instead of just fix the case when bucket assign and write task have the same parallelism, how about the bucket assign has parallelism 4 and write task has parallelism 6 here ?

@danny0405 Yeah, I approval this. We do need to resolve the problem completely. I will think about a better idea later.

But this pr can help resolve the network overhead and the data skew in some case. I think it should be a improvment not bug fix.

There was a problem hiding this comment.

It is a fix but the limitation is too strict here, so not that valid from my side.

There was a problem hiding this comment.

It is a fix but the limitation is too strict here, so not that valid from my side.

Okay, I get you. Thanks for your reply whatever.

yihua

left a comment

yihua

left a comment

There was a problem hiding this comment.

Closing the PR given that we should improve the hash algorithm as a general enhancement. Closing this PR.

Tips

What is the purpose of the pull request

https://issues.apache.org/jira/browse/HUDI-4338

Brief change log

(for example:)

Verify this pull request

(Please pick either of the following options)

This pull request is a trivial rework / code cleanup without any test coverage.

(or)

This pull request is already covered by existing tests, such as (please describe tests).

(or)

This change added tests and can be verified as follows:

(example:)

Committer checklist

Has a corresponding JIRA in PR title & commit

Commit message is descriptive of the change

CI is green

Necessary doc changes done or have another open PR

For large changes, please consider breaking it into sub-tasks under an umbrella JIRA.