
samperson1997

left a comment


Hi, thanks for your contribution! Everything looks good to me.

Having read the User Guide documents you edited and seen your demo based on a real production environment, I believe this is a fabulous and useful connector. Below are some suggestions that do not affect functionality or user interaction, so you may choose to address them in future work.

I'm really looking forward to the connector tools for Hadoop 3.x and Hive 3.x!

```diff
    * @return the index of blockLocation or -1 if no block could be found
    */
-  private int getBlockLocationIndex(BlockLocation[] blockLocations, long middle) {
+  private static int getBlockLocationIndex(BlockLocation[] blockLocations, long middle, Logger logger) {
```


Why pass `Logger logger` into the method instead of using the class's own logger? The same question applies to other places in this file.


Different callers have different loggers. To log under the right logger, I need to pass the caller's logger to the function.
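The reply above can be sketched as follows. This is a minimal illustration under stated assumptions, not the connector's code: `java.util.logging.Logger` stands in for the SLF4J logger so the block runs without dependencies, plain offset/length arrays stand in for Hadoop's `BlockLocation[]`, the linear scan is an assumed lookup strategy, and the class names `InputFormatCaller` and `BlockIndexLookup` are hypothetical. The shared static helper receives the caller's logger, so its warning is reported under the caller's logger name.

```java
import java.util.logging.Logger;

// Hypothetical caller: keeps its own logger and hands it to the shared helper.
class InputFormatCaller {
  private static final Logger logger = Logger.getLogger(InputFormatCaller.class.getName());

  static int locate(long middle) {
    // Hypothetical block layout: [0, 128), [128, 256), [256, 320)
    long[] offsets = {0L, 128L, 256L};
    long[] lengths = {128L, 128L, 64L};
    return BlockIndexLookup.getBlockLocationIndex(offsets, lengths, middle, logger);
  }

  public static void main(String[] args) {
    System.out.println(locate(130L)); // 1
    System.out.println(locate(400L)); // -1, with a warning logged under InputFormatCaller
  }
}

// Hypothetical stateless helper shared by several callers.
final class BlockIndexLookup {

  private BlockIndexLookup() {}

  /**
   * Returns the index of the block whose [offset, offset + length) range contains
   * {@code middle}, or -1 if none does. The warning goes to the caller's logger.
   */
  static int getBlockLocationIndex(long[] offsets, long[] lengths, long middle, Logger logger) {
    for (int i = 0; i < offsets.length; i++) {
      if (offsets[i] <= middle && middle < offsets[i] + lengths[i]) {
        return i;
      }
    }
    logger.warning("Cannot find a block containing offset " + middle);
    return -1;
  }
}
```

Because the helper is `static` and stateless, several input formats can reuse it while each keeps its own log category.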

```diff
  * <code>Mapper</code> task.
  */
-public class TSFInputSplit extends InputSplit implements Writable {
+public class TSFInputSplit extends FileSplit implements Writable, org.apache.hadoop.mapred.InputSplit {
```


How about adding `import org.apache.hadoop.mapred.InputSplit;` at the top of this file?

```diff
-public class TSFInputSplit extends FileSplit implements Writable, org.apache.hadoop.mapred.InputSplit {
+public class TSFInputSplit extends FileSplit implements Writable, InputSplit {
```
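Java permits only one single-type import per simple name, so this suggestion compiles only if the file does not also import `org.apache.hadoop.mapreduce.InputSplit` (the old `extends InputSplit` line suggests it once did; now that the class extends `FileSplit`, that import may no longer be needed). The hypothetical JDK-only sketch below shows the same rule, with `java.util.Date` and `java.sql.Date` standing in for the two `InputSplit` types.

```java
import java.util.Date; // "Date" now refers to java.util.Date in this file

class ImportConflictDemo {

  // Adding "import java.sql.Date;" as well would not compile, because
  // "Date" is already taken by the import above. The second type stays
  // fully qualified instead.
  static java.sql.Date toSqlDate(Date utilDate) {
    return new java.sql.Date(utilDate.getTime());
  }

  public static void main(String[] args) {
    java.sql.Date sqlDate = toSqlDate(new Date(0L));
    System.out.println(sqlDate.getTime()); // 0
  }
}
```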

```diff
@@ -0,0 +1,51 @@
+/**
```


Following this PR, we use block-comment style (`/* ... */`) for the Apache License header instead of Javadoc-style comments (`/** ... */`). Could you change the license header in all the new files in your next PR? Otherwise we may run into problems when generating JavaDoc.
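For reference, a header in the block-comment style looks like the fragment below. It opens with `/*` rather than the Javadoc opener `/**`, so the javadoc tool does not read it as a documentation comment. The text assumes the standard ASF source header, and the package line is only illustrative.

```java
/*
 * Licensed to the Apache Software Foundation (ASF) under one
 * or more contributor license agreements.  See the NOTICE file
 * distributed with this work for additional information
 * regarding copyright ownership.  The ASF licenses this file
 * to you under the Apache License, Version 2.0 (the
 * "License"); you may not use this file except in compliance
 * with the License.  You may obtain a copy of the License at
 *
 *     http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing,
 * software distributed under the License is distributed on an
 * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY
 * KIND, either express or implied.  See the License for the
 * specific language governing permissions and limitations
 * under the License.
 */
package org.apache.iotdb.hive;
```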

```java
import java.util.Objects;

public class TsFileDeserializer {
  private static final Logger LOG = LoggerFactory.getLogger(TsFileDeserializer.class);
```


Use `logger` instead of `LOG` to be consistent with other files.

```java
      continue;
    }
    if (columnType.getCategory() != ObjectInspector.Category.PRIMITIVE) {
      throw new TsFileSerDeException("Unknown TypeInfo: " + columnType.getCategory());
```


Maybe you could add `logger.error` here so that users can see exactly where the error occurred. The same applies to the other exceptions below.

```diff
-      throw new TsFileSerDeException("Unknown TypeInfo: " + columnType.getCategory());
+      logger.error("Unknown TypeInfo: {}", columnType.getCategory());
```
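A minimal sketch of the log-then-throw pattern suggested above, assuming the exception should still be thrown after it is logged (the suggestion snippet only shows the `logger.error` line). `TsFileSerDeException` and Hive's `ObjectInspector.Category` are replaced by hypothetical stand-ins, and `java.util.logging` replaces SLF4J, so the block runs without Hive on the classpath.

```java
import java.util.logging.Logger;

class ColumnTypeChecker {

  // Hypothetical stand-in for Hive's ObjectInspector.Category.
  enum Category { PRIMITIVE, LIST, MAP, STRUCT, UNION }

  // Hypothetical stand-in for the connector's TsFileSerDeException.
  static class SerDeException extends Exception {
    SerDeException(String message) {
      super(message);
    }
  }

  private static final Logger logger = Logger.getLogger(ColumnTypeChecker.class.getName());

  static void checkPrimitive(Category category) throws SerDeException {
    if (category != Category.PRIMITIVE) {
      String message = "Unknown TypeInfo: " + category;
      // Log first so the failure position shows up in the log,
      // then throw so the caller still sees the error.
      logger.severe(message);
      throw new SerDeException(message);
    }
  }

  public static void main(String[] args) {
    try {
      checkPrimitive(Category.MAP);
    } catch (SerDeException e) {
      System.out.println(e.getMessage()); // Unknown TypeInfo: MAP
    }
  }
}
```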

```java
    try {
      writer.write(((HDFSTSRecord)writable).convertToTSRecord());
    } catch (WriteProcessException e) {
      throw new IOException(String.format("Write tsfile record error %s", e));
```


Maybe you could add `logger.error` here so that users can see exactly where the error occurred. The same applies to the other exceptions.

```diff
-      throw new IOException(String.format("Write tsfile record error %s", e));
+      logger.error("Write tsfile record error: {}", e);
```
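Formatting the exception into the message with `String.format` keeps only `e.toString()`, so the original stack trace is lost. A minimal sketch of an alternative, assuming the write error should still propagate as an `IOException`: log with the exception as the throwable argument, then chain it as the cause through the `IOException(String, Throwable)` constructor. `WriteProcessException` and the writer call are hypothetical stand-ins, and `java.util.logging` replaces SLF4J; with SLF4J the corresponding call is `logger.error("Write tsfile record error", e)`.

```java
import java.io.IOException;
import java.util.logging.Level;
import java.util.logging.Logger;

class RecordWriterSketch {

  // Hypothetical stand-in for TsFile's WriteProcessException.
  static class WriteProcessException extends Exception {
    WriteProcessException(String message) {
      super(message);
    }
  }

  private static final Logger logger = Logger.getLogger(RecordWriterSketch.class.getName());

  // Hypothetical stand-in for writer.write(...); fails when asked to.
  static void writeRecord(boolean fail) throws WriteProcessException {
    if (fail) {
      throw new WriteProcessException("simulated write failure");
    }
  }

  static void write(boolean fail) throws IOException {
    try {
      writeRecord(fail);
    } catch (WriteProcessException e) {
      // Passing e as the Throwable argument logs its full stack trace.
      logger.log(Level.SEVERE, "Write tsfile record error", e);
      // Chaining e as the cause keeps the original stack trace for the caller.
      throw new IOException("Write tsfile record error", e);
    }
  }

  public static void main(String[] args) {
    try {
      write(true);
    } catch (IOException e) {
      System.out.println(e.getCause().getMessage()); // simulated write failure
    }
  }
}
```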

```java
 * One example for reading TsFile with MapReduce.
 * This MR Job is used to get the result of sum("device_1.sensor_3") in the tsfile.
 * The source of tsfile can be generated by <code>TsFileHelper</code>.
 * @author Yuan Tian
```


Don't forget to remove the author tag here ;)

hive-connector/src/main/java/org/apache/iotdb/hive/TSFHiveInputFormat.java (outdated, resolved)

hive-connector/src/main/java/org/apache/iotdb/hive/TSFHiveOutputFormat.java (outdated, resolved)

hive-connector/src/main/java/org/apache/iotdb/hive/TSFHiveOutputFormat.java (resolved)

hive-connector/src/main/java/org/apache/iotdb/hive/TSFHiveRecordReader.java (outdated, resolved)

hive-connector/src/main/java/org/apache/iotdb/hive/TSFHiveRecordReader.java (outdated, resolved)

hive-connector/src/main/java/org/apache/iotdb/hive/TsFileDeserializer.java (resolved)

LeiRui

left a comment


I followed the user guide and checked all of the instructions in it. Everything works on my setup (Hadoop 2.7.7 and Hive 2.3.6).


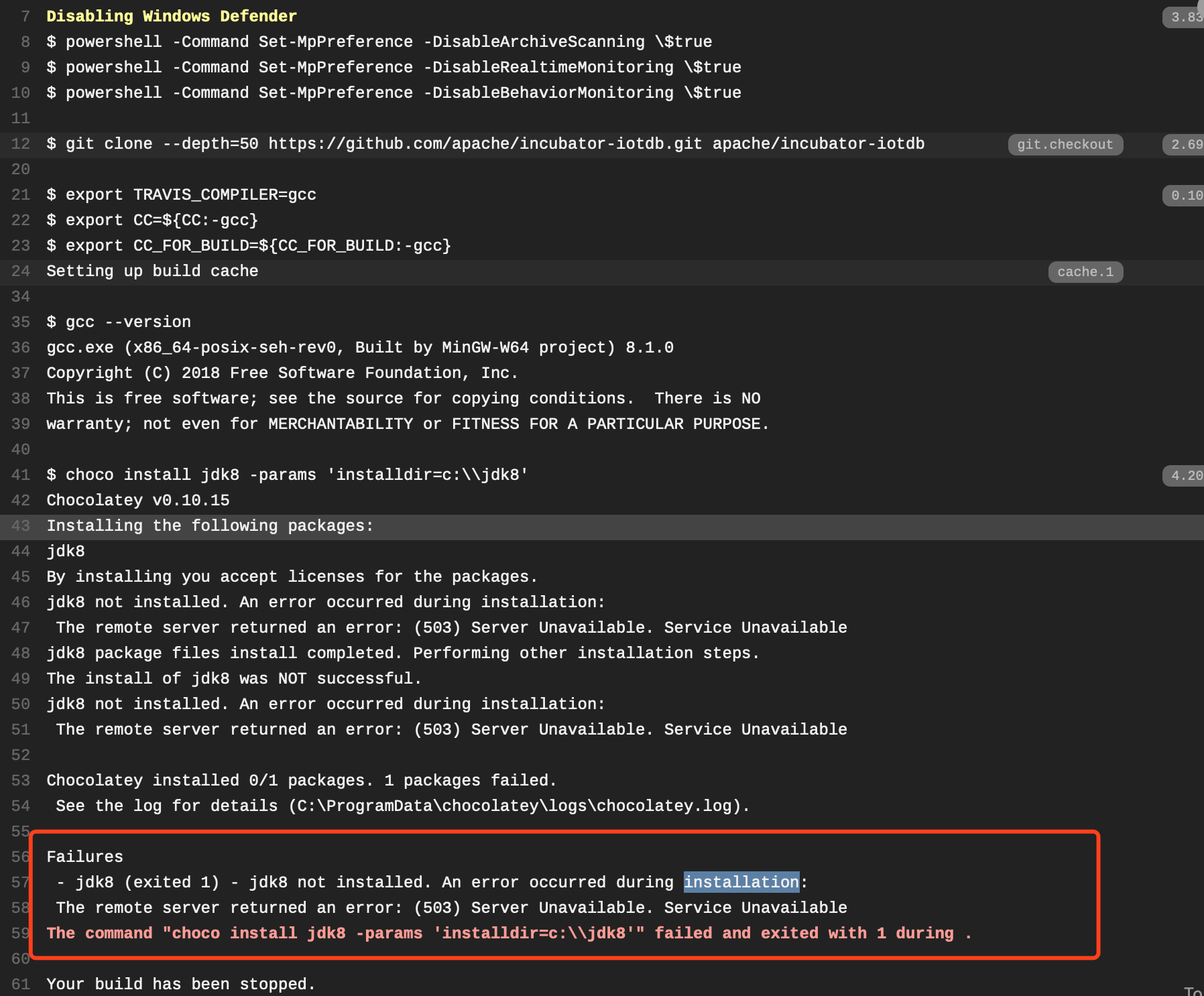

The Travis CI build failed. Try merging master into your PR to fix it.
