NIFI-7886: FetchAzureBlobStorage, FetchS3Object, and FetchGCSObject processors should be able to fetch ranges#4576

NIFI-7886: FetchAzureBlobStorage, FetchS3Object, and FetchGCSObject processors should be able to fetch ranges#4576pkelly-nifi wants to merge 9 commits intoapache:mainfrom pkelly-nifi:nifi7886

Conversation

…rocessors should be able to fetch ranges

…rocessors should be able to fetch ranges

|

Is it also supported for the recently added ADLS Gen2 processor? If yes, would be nice to add it as well. |

|

Yes, ADLS Gen2 does support pulling by range. Good suggestion. I just committed an update for that one as well. |

| request = new GetObjectRequest(bucket, key, versionId); | ||

| } | ||

| request.setRequesterPays(requesterPays); | ||

| if(rangeLength != null) { |

There was a problem hiding this comment.

If rangeLength is 0, won't this result in a returned range of 1 byte?

There was a problem hiding this comment.

It doesn't seem to return a range of 1. If rangeLength is 0, S3 throws an error about it being an invalid range and the file routes to the Failure queue. A rangeLength of 1B downloads 1 byte as expected. Am I missing something? I'll update the validator for rangeLength to use createDataSizeBoundsValidator(1, Long.MAX_VALUE) so that we don't ever get to the zero length error condition.

There was a problem hiding this comment.

I'm trying to test from your recent changes on the branch and ran into an issue when I set these property values for FetchS3Object

- Range Start = 1

- Range Length = 1

The processor can't validate with that setting as it produces this validation warning:

- 's3-object-range-start' validated against '1' is invalid because Must be of format where is a non-negative integer and is a supported Data Unit, such as: B, KB, MB, GB, TB

- Same validation error for 's3-object-range-length'.

To clarify my original concern, should require Range Length be > 0 if Range Start is set to a value.

Since range requests start at 0 byte, It should be valid to have

- Range Start = 0

- Range Length = 1

with a result indicating that this is requesting just the first byte from the resource.

There was a problem hiding this comment.

As a follow up, if I change

- Range Start = 1B

- Range Length = 1B

What currently gets returned is bytes 1 and 2, which is returning 1 more byte then requested.

There was a problem hiding this comment.

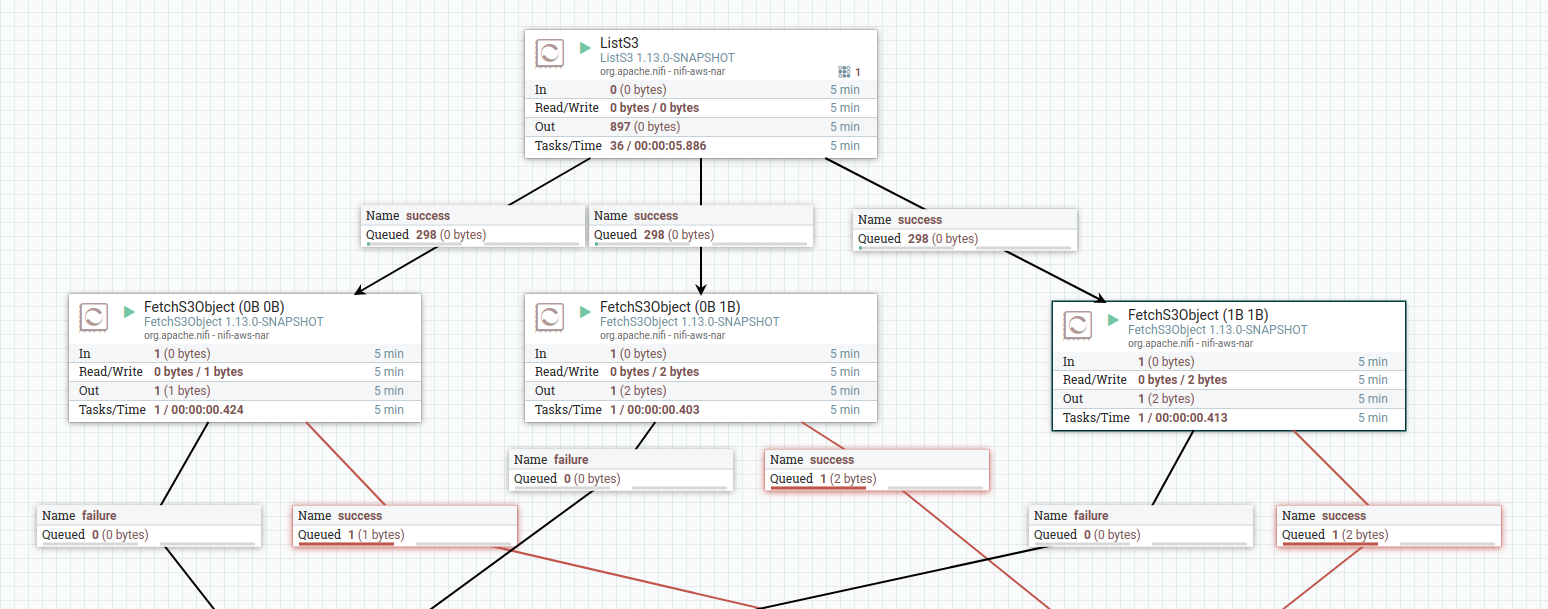

At time of writing, im testing this with a small file in an s3 bucket, and with three different FetchS3Object calls on the same,

See this image of a flow depicting the three different FetchS3Object processors, which only differ in what range start and range length is requested

With the following hex representation of a file that is the source being retrieved ...

0x00000000 5B 2E 53 68 65 6C 6C 43 6C 61 73 73 49 6E 66 6F [.ShellClassInfo

0x00000010 5D 0D 0A 43 4C 53 49 44 3D 7B 36 34 35 46 46 30 ]..CLSID={645FF0

0x00000020 34 30 2D 35 30 38 31 2D 31 30 31 42 2D 39 46 30 40-5081-101B-9F0

0x00000030 38 2D 30 30 41 41 30 30 32 46 39 35 34 45 7D 0D 8-00AA002F954E}.

0x00000040 0A 4C 6F 63 61 6C 69 7A 65 64 52 65 73 6F 75 72 .LocalizedResour

0x00000050 63 65 4E 61 6D 65 3D 40 25 53 79 73 74 65 6D 52 ceName=@%SystemR

0x00000060 6F 6F 74 25 5C 73 79 73 74 65 6D 33 32 5C 73 68 oot%\system32\sh

0x00000070 65 6C 6C 33 32 2E 64 6C 6C 2C 2D 38 39 36 34 0D ell32.dll,-8964.

0x00000080 0A .

from left to right ...

- start 0, length 0 ... yields 1 byte ... viewing queue in nifi, content in hex mode has

0x00000000 5B 10 [.- Im not sure whether this should error (returning nothing), or return the entire file?

- start 0, length 1 ... yields 2 bytes .. viewing queue in nifi, content in hex mode has

0x00000000 5B 2E [.- I was expecting this to return

5B

- I was expecting this to return

- start 1, length 1 ... yields 2 bytes .. viewing queue in nifi, content in hex mode has

0x00000000 2E 53 .S- I was expecting this to return

2E

- I was expecting this to return

There was a problem hiding this comment.

Maybe change

request.setRange(rangeStart, rangeStart + rangeLength);

to

request.setRange(rangeStart, rangeStart + rangeLength - 1);

There was a problem hiding this comment.

Thanks for your feedback. I just tested it again against real AWS S3 and you are absolutely right. This is apparently a bug in a third party product we've been using. I will update with the -1 tomorrow.

As for the validation errors you mentioned, this is using DataSize validation rather than pure long values, which allows shortcuts such as Range start: 0B, Range Length: 1GB, followed by Range start: 1GB, Range length: 1GB. So to use plain bytes, it requires adding a 'B' to the end. It seemed slightly cleaner than requiring someone to calculate out to the exact byte. Do you have a strong opinion against this syntax? Or a suggestion for making it clearer in the documentation? I'd be happy to update it.

There was a problem hiding this comment.

I just committed changes with the -1 for the range length calculation and changed the validators to require a length of at least 1B if it is specified. I don't think range length should be required when range start is specified because sometimes we have a need to read to the end of the file from a starting offset, and reading to the end is the default behavior if range length is not specified. Please let me know your thoughts.

There was a problem hiding this comment.

Personally I'm ok with using 0B, though I'd defer to others when it comes to standards for that. If its kept that way, I agree, it should have documentation guidance improved. I plan to take a look at this in the next 24 hours and will report back.

There was a problem hiding this comment.

My test results using a file with the following contents as input

0x00000000 5B 2E 53 68 65 6C 6C 43 6C 61 73 73 49 6E 66 6F [.ShellClassInfo

0x00000010 5D 0D 0A 43 4C 53 49 44 3D 7B 36 34 35 46 46 30 ]..CLSID={645FF0

0x00000020 34 30 2D 35 30 38 31 2D 31 30 31 42 2D 39 46 30 40-5081-101B-9F0

0x00000030 38 2D 30 30 41 41 30 30 32 46 39 35 34 45 7D 0D 8-00AA002F954E}.

0x00000040 0A 4C 6F 63 61 6C 69 7A 65 64 52 65 73 6F 75 72 .LocalizedResour

0x00000050 63 65 4E 61 6D 65 3D 40 25 53 79 73 74 65 6D 52 ceName=@%SystemR

0x00000060 6F 6F 74 25 5C 73 79 73 74 65 6D 33 32 5C 73 68 oot%\system32\sh

0x00000070 65 6C 6C 33 32 2E 64 6C 6C 2C 2D 38 39 36 34 0D ell32.dll,-8964.

0x00000080 0A .

| Range Start | Range Length | Result | Notes |

|---|---|---|---|

| 0B | 0B | PASS | 0B no longer allowed for length, must be set to empty string/unset, and validation picks this up |

| 0B | 1B | PASS | 1 byte returned, hex viewer shows 5B 10 (ok, see note below) |

| 1B | 1B | PASS | 1 byte returned, hex viewer shows 2E 10 (ok, see note below) |

| 0B | 2B | PASS | 2 bytes returned, hex viewer shows 5B 2E |

| 120B | 120B | PASS | 9 bytes returned, hex viewer showes 6C 2C 2D 38 39 36 34 0D 0A, the final 9 bytes of the file |

| 0B | unset | PASS | 129 bytes returned, hex viewer shows full contents of file |

| 120B | unset | PASS | 9 bytes returned, hex viewer showes 6C 2C 2D 38 39 36 34 0D 0A, the final 9 bytes of the file |

Known Issues

- There is some bug in the hex viewer that shows an extra x10 character when the content is only 1 byte. This isn't introduced by this capability.

The above testing was done manually, so this is something that could be applied in a unit test to automate

…h validator to require a minimum of 1 byte

|

@pvillard31 do you have any other recommendations for this pull request? |

|

No, I just don't have the time to make any tests on my side with all the cloud providers right now (it seems this has been tested extensively on AWS, but we need to make sure this works well on ADLS/BlobStorage/GCS as well). If any other committer can give a +1, I'm good with this. |

|

@lucasmoten @pvillard31 I'll review and do some testing on this. |

jfrazee

left a comment

jfrazee

left a comment

There was a problem hiding this comment.

@pkelly-nifi Hey, I looked this over and tested it thoroughly on Azure. The implementation looks good and it's working as expected.

I have some tiny suggestions about making the property names consistent with the pre-existing code, and I think it'd be useful to add tests to the ITs, where they exist. What do you think?

To help with my testing, I went ahead and created some ITs for Blob and ADLS (see here). If you end up including them as part of this PR feel free to grab these, if you want to do something different that's cool too.

| final String containerName = context.getProperty(AzureStorageUtils.CONTAINER).evaluateAttributeExpressions(flowFile).getValue(); | ||

| final String blobPath = context.getProperty(BLOB).evaluateAttributeExpressions(flowFile).getValue(); | ||

| final long rangeStart = (context.getProperty(RANGE_START).isSet() ? context.getProperty(RANGE_START).evaluateAttributeExpressions(flowFile).asDataSize(DataUnit.B).longValue() : 0L); | ||

| final Long rangeLength = (context.getProperty(RANGE_LENGTH).isSet() ? context.getProperty(RANGE_LENGTH).evaluateAttributeExpressions(flowFile).asDataSize(DataUnit.B).longValue() : null); |

There was a problem hiding this comment.

I have a question about the semantics of the azure.length attribute. It now won't match the length of the retrieved object, but instead the length of the remote object. What should be the behavior here? If we keep it as the length of the actual object, then I think we need to document that clearly.

There was a problem hiding this comment.

Hi @jfrazee,

Thank you for reviewing this PR and for your input. I very much appreciate it. I think it might be useful to keep the azure.length attribute as the original remote length since it could be used to verify the final file size after reassembly, if that is what is ultimately desired. I'm open to suggestions if you disagree.

There was a problem hiding this comment.

Thanks also for the ITs. I will grab yours and include it and try to add some for the other services. Thanks for your help.

...processors/src/main/java/org/apache/nifi/processors/azure/storage/FetchAzureBlobStorage.java

Outdated

Show resolved

Hide resolved

...processors/src/main/java/org/apache/nifi/processors/azure/storage/FetchAzureBlobStorage.java

Outdated

Show resolved

Hide resolved

...essors/src/main/java/org/apache/nifi/processors/azure/storage/FetchAzureDataLakeStorage.java

Outdated

Show resolved

Hide resolved

...essors/src/main/java/org/apache/nifi/processors/azure/storage/FetchAzureDataLakeStorage.java

Outdated

Show resolved

Hide resolved

...nifi-gcp-processors/src/main/java/org/apache/nifi/processors/gcp/storage/FetchGCSObject.java

Outdated

Show resolved

Hide resolved

...essors/src/main/java/org/apache/nifi/processors/azure/storage/FetchAzureDataLakeStorage.java

Outdated

Show resolved

Hide resolved

...undle/nifi-aws-processors/src/main/java/org/apache/nifi/processors/aws/s3/FetchS3Object.java

Outdated

Show resolved

Hide resolved

...undle/nifi-aws-processors/src/main/java/org/apache/nifi/processors/aws/s3/FetchS3Object.java

Outdated

Show resolved

Hide resolved

...undle/nifi-aws-processors/src/main/java/org/apache/nifi/processors/aws/s3/FetchS3Object.java

Outdated

Show resolved

Hide resolved

Standardizing some variable names and spacing as suggested by jfrazee Co-authored-by: Joey <joey.frazee@icloud.com>

|

@jfrazee - are there still requested changes on this pull request? |

I haven't had a chance yet to add any tests, so we're still waiting on that. If someone else wants to kick in some tests that'd be much appreciated. |

|

@pvillard31 @pkelly-nifi The ITs are a nice to have but we can also handle those separately. The testing on GCS is more important. I'll look into that again today. |

|

@pkelly-nifi I tested Right now, if you provide a range start that is larger than the content, it routes a zero-length string to AFAICT, this isn't a quirk of the So question, do we allow different behavior since the cloud providers are different and this apparently isn't an issue for GCS, or do we enforce some consistency and check against the length in the blob metadata? I think it should be the latter. |

…tchGCSObject to prevent setting the start range to beyond the end of the object, and add ITs for FetchAzure processors.

|

@jfrazee I committed an update that accounts for the scenario you described for FetchGCSObject where start range > object length. Please let me know your thoughts. I also included your ITs for Azure. Thank you. |

...nifi-gcp-processors/src/main/java/org/apache/nifi/processors/gcp/storage/FetchGCSObject.java

Outdated

Show resolved

Hide resolved

|

@pkelly-nifi I plan to spend some time on this tomorrow. Thanks for the updates! |

Co-authored-by: Joey <joey.frazee@icloud.com>

jfrazee

left a comment

jfrazee

left a comment

There was a problem hiding this comment.

+1

@pkelly-nifi Thanks for all the work on this!

This closes apache#4576 Co-authored-by: Joey Frazee <jfrazee@apache.org> Signed-off-by: Joey Frazee <jfrazee@apache.org>

Thank you for submitting a contribution to Apache NiFi.

Please provide a short description of the PR here:

Description of PR

Enables pulling objects and blobs by byte ranges for FetchAzureBlobStorage, FetchS3Object, and FetchGCSObject processors as described in NIFI-7886. Adds RANGE_START and RANGE_LENGTH parameters to all three processors and adjusts the API calls as necessary.

In order to streamline the review of the contribution we ask you

to ensure the following steps have been taken:

For all changes:

Is there a JIRA ticket associated with this PR? Is it referenced

in the commit message?

Does your PR title start with NIFI-XXXX where XXXX is the JIRA number you are trying to resolve? Pay particular attention to the hyphen "-" character.

Has your PR been rebased against the latest commit within the target branch (typically

main)?Is your initial contribution a single, squashed commit? Additional commits in response to PR reviewer feedback should be made on this branch and pushed to allow change tracking. Do not

squashor use--forcewhen pushing to allow for clean monitoring of changes.For code changes:

mvn -Pcontrib-check clean installat the rootnififolder?LICENSEfile, including the mainLICENSEfile undernifi-assembly?NOTICEfile, including the mainNOTICEfile found undernifi-assembly?.displayNamein addition to .name (programmatic access) for each of the new properties?For documentation related changes:

Note:

Please ensure that once the PR is submitted, you check GitHub Actions CI for build issues and submit an update to your PR as soon as possible.