[SPARK-39074][CI] Fail on upload, not download of missing test files#36413

[SPARK-39074][CI] Fail on upload, not download of missing test files#36413EnricoMi wants to merge 1 commit intoapache:masterfrom

Conversation

|

@HyukjinKwon @Yikun there are still errors in forks when syncing main branch. |

|

Can one of the admins verify this patch? |

| with: | ||

| name: test-results-${{ matrix.modules }}-${{ matrix.comment }}-${{ matrix.java }}-${{ matrix.hadoop }}-${{ matrix.hive }} | ||

| path: "**/target/test-reports/*.xml" | ||

| if-no-files-found: error |

There was a problem hiding this comment.

Hm, there's a case when *.xml are not generated time to time although when the tests passed. My only concern is that to make that case also failed after this change.

There was a problem hiding this comment.

You refer to case 1. in the description above?

There was a problem hiding this comment.

Oh no. I meant that there's a case when the tests actually run but XML files are not generated for some reasons I don't know IIRC.

There was a problem hiding this comment.

I think that is what I meant with 1.. I have reworded that to be more clear:

- The code has been build […] tests have been executed but no test files have been generated.

This is covered by if-no-files-found: error for actions/upload-artifact. The upload action is triggered when tests are run, so it expects files to upload.

There was a problem hiding this comment.

We can try and go ahead, I'm not against this. My only concern is that we'll now consider the build as failed in the case when the build is successful but it failed to generate XML test reports for an unknown reason - this happens often as far as I remember. Though, I agree that this PR can make such unknown cases onto the surface.

| steps: | ||

| - name: Download test results to report | ||

| uses: dawidd6/action-download-artifact@09385b76de790122f4da9c82b17bccf858b9557c # pin@v2 | ||

| continue-on-error: true |

|

Merged to master |

|

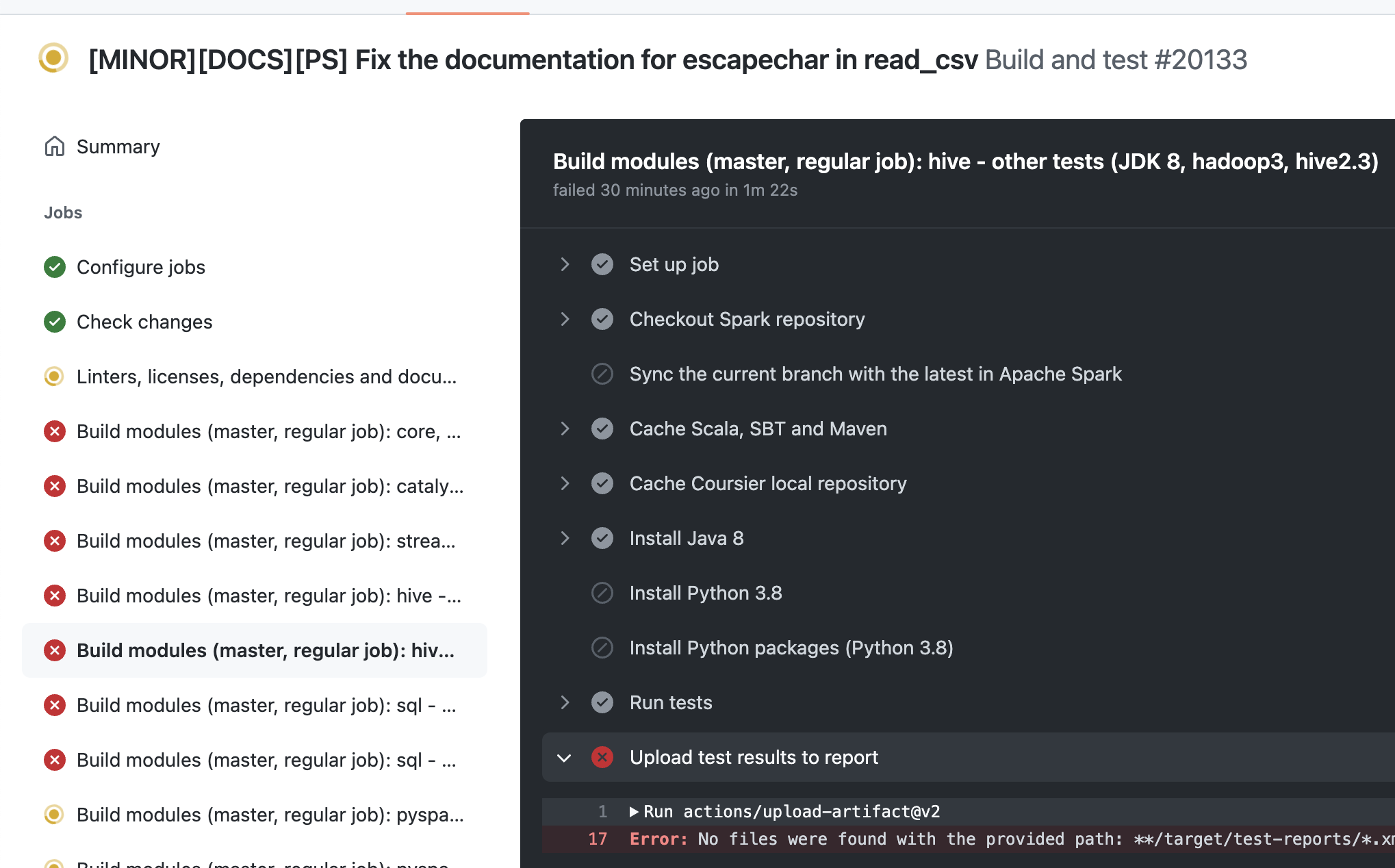

Seems like it fails in some cases such as 3b709eb The problem seems that https://github.com/apache/spark/blob/master/.github/workflows/build_and_test.yml#L134-L142 and actually testing skip logic are not matched. For example, here https://github.com/apache/spark/runs/6911661666?check_suite_focus=true#step:4:14 it says to build both PySpark and other builds too but later other builds were skipped at https://github.com/apache/spark/runs/6911690622?check_suite_focus=true#step:9:21 We should probably remove pyspark-, sparkr, etc at https://github.com/apache/spark/blob/master/.github/workflows/build_and_test.yml#L135 but I think it needs a closer look. Reverting this for now to avoid making other PRs failed. cc @EnricoMi mind taking a look please? cc @dongjoon-hyun too FYI |

|

Ah shoot OK thank you. You were right |

|

that's fine. i think it's good that we know there's a problem there 👍 |

|

Thank you for the investigation, @HyukjinKwon . |

|

Yes, it looks like the scope of the The This looks like a bigger change. |

What changes were proposed in this pull request?

The CI should not fail when there are no test result files to be downloaded.

Why are the changes needed?

The CI workflow "Report test results" fails when there are no artifacts to be downloaded from the triggering workflow. In some situations, the triggering workflow is not skipped, but all test jobs are skipped in case no code changes are detected.

Examples:

In that situation, no test files are uploaded, which makes the triggered workflow fail.

Downloading no test files can have two reasons:

You want to be notified in the first situation to fix the CI. Therefore, CI should fail when code is built and tests are run but no test result files are been found.

The

dawidd6/action-download-artifactaction cannot be configured to not fail when there are no files. But Github Actions can be configured (continue-on-error) to ignore that and still have the job succeed. However, if that action fails for a different reason, this will not be raised as an error any more.Does this PR introduce any user-facing change?

No

How was this patch tested?

Not tested