[SYSTEMDS-???] Parameterserver aggregation optimization #1211

Baunsgaard wants to merge 2 commits into apache:master


Thanks for following up on this. Instead of converting the vector back to dense (after it has been copied from dense to sparse), couldn't we directly do:

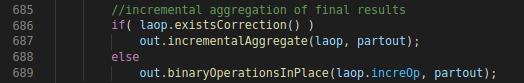

The aggregation of the final results would fall back to a generic aggregation if the two inputs were in different formats from each other. This commit changes the behavior to force a uniform format.

Furthermore, allocating the initial result in dense format improves performance slightly more, by about 1 second.

The change improves the performance of:

uack+ 59.179sec -> uack+ 9.0sec

on the CNN implementation using the parameterserver on MNIST.
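To illustrate the idea in the commit message, here is a minimal Python sketch, not the SystemDS code. It assumes a sparse vector is modelled as a `dict` of index to value and a dense vector as a `list`; the names `to_dense`, `add_dense`, and `aggregate` are hypothetical. The accumulator is allocated dense up front, and every incoming update is forced into the same format before a single dense add is applied.

```python
from typing import Dict, List, Union

# Hypothetical stand-ins for the two block formats (not SystemDS types).
Sparse = Dict[int, float]   # index -> non-zero value
Dense = List[float]


def to_dense(vec: Union[Sparse, Dense], length: int) -> Dense:
    """Return vec in dense form; dense inputs are returned unchanged."""
    if isinstance(vec, dict):
        out = [0.0] * length
        for i, v in vec.items():
            out[i] = v
        return out
    return vec


def add_dense(acc: Dense, update: Dense) -> None:
    """Single dense-dense kernel: add update into acc in place."""
    for i, v in enumerate(update):
        acc[i] += v


def aggregate(updates: List[Union[Sparse, Dense]], length: int) -> Dense:
    """Sum all updates into one dense accumulator."""
    acc = [0.0] * length  # allocate the initial result dense
    for u in updates:
        # Force a uniform format so only the dense kernel is ever used.
        add_dense(acc, to_dense(u, length))
    return acc


if __name__ == "__main__":
    print(aggregate([{1: 2.0}, [1.0, 1.0, 1.0], {0: 0.5, 2: 3.0}], 3))
```

The trade-off in this sketch is an extra conversion per sparse update in exchange for never mixing formats inside the add.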

I just tried it; we still encounter the case where the incoming values are sparse while our aggregate is dense. But I do get that it makes more sense to force the initial accumulator to be dense, since it most likely will be dense in the end, so I added the copy-to-dense part you suggested. Overall, this gives us an execution time that is one second better with batch size 32 and 1 epoch.

This PR contains two small commits.

The second part makes the execution of the CNN ParameterServer with batch size 32 on MNIST go from 260 sec to 200 sec on my laptop, with minimal changes (10 lines). I don't think this has any bad side effects, but I would like confirmation.