AI shopping agents that hunt resale markets so you don't have to.

Saved searches are broken. You tell Depop you want Saint Laurent boots, and twelve hours later you get a notification for a listing that sold eleven hours ago. Resale markets move in minutes, not hours. The best pieces — the grails, the underpriced gems, the rare sizes — are gone before passive tooling even wakes up.

Sniper is an agent layer meant to be built on top of Phia that shops resale continuously on your behalf. You describe what you want in plain language, flip a toggle, and an AI agent scrapes live listings, scores every result against your criteria, and surfaces matches the moment they appear. This is not a saved search. This hunts.

Resale platforms like The RealReal, Depop, eBay, and Grailed have passive tooling that doesn't match the real-time nature of resale markets. Saved searches notify users hours after a listing goes live — by which point coveted items are already sold.

There is also no tooling that bridges the gap between a vague aesthetic or inspiration (a Pinterest board, a trip coming up, a general vibe) and actual resale shopping. Existing platforms treat all shoppers the same regardless of how specific or exploratory their intent is.

Shopping intent is a spectrum. Sometimes you know exactly what you want. Sometimes you have a vibe and no idea what specific item would satisfy it. Sniper handles both ends.

You know the piece. Brand, size, condition, price ceiling — all of it. You create an agent with a precise brief:

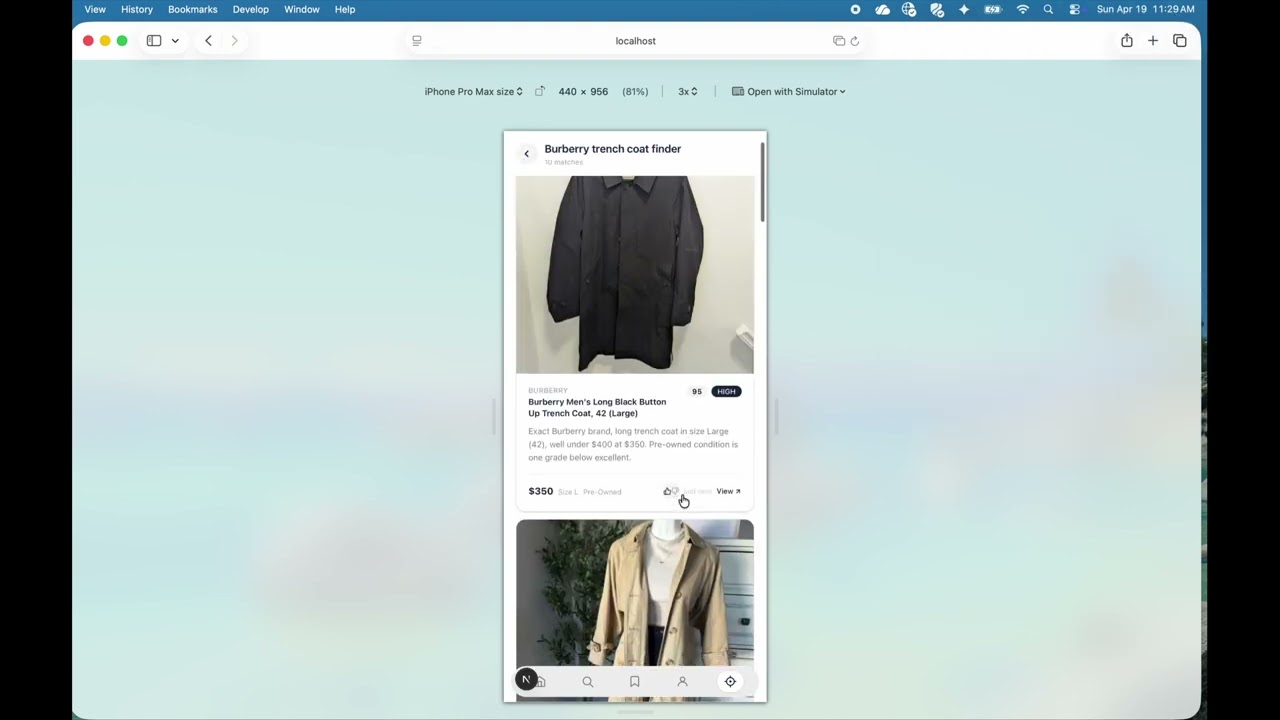

"Burberry long trench coat, size large, excellent condition, under $400"

The agent translates your brief into a search query, scrapes eBay for live listings, and runs every result through Claude with a detailed scoring rubric. Anything scoring 35 or above lands in your matches page, ranked and annotated with an explanation of why it matched. Anything scoring 80+ triggers a HIGH urgency notification. You see the match before most humans have even opened the app.

You don't have a specific item — you have a feeling. A trip coming up, a mood board, an aesthetic you can't quite name. You describe it in natural language, and optionally drop in a Pinterest board URL:

"Spending the summer in Europe, here is my Pinterest board, find me outfits: https://www.pinterest.com/essnutella/fashion/?request_params=%7B%221%22%3A%20130%2C%2[…]l_feed_title=fashion&view_parameter_type=3069&pins_display=3"

The agent scrapes the board for pin titles and descriptions, builds a rich understanding of your aesthetic, then continuously surfaces resale items that match the vibe — scored by how well they fit what you actually pinned.
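The hand-off from a vibe prompt to the scraper can be pictured with a minimal sketch. It assumes the URL is detected with a regular expression and that the scraped pins are appended to the brief as plain text; `findPinterestUrl`, `enrichPrompt`, the `Pin` shape, and the prompt layout are hypothetical names chosen for illustration, not the project's actual code.

```typescript
// Hypothetical helpers: the names, regex, and prompt layout are assumptions.

interface Pin {
  title: string;
  description: string;
}

// The README caps board scraping at 40 pins.
const MAX_PINS = 40;

/** Returns the first Pinterest URL in a prompt, or null when there is none. */
function findPinterestUrl(prompt: string): string | null {
  const match = prompt.match(/https?:\/\/(?:[\w-]+\.)?pinterest\.com\/\S*/i);
  if (!match) return null;
  // Drop punctuation that commonly trails a URL pasted into a sentence.
  return match[0].replace(/[.,;:!?)"']+$/, "");
}

/** Appends pin titles and descriptions to the brief as aesthetic context. */
function enrichPrompt(prompt: string, pins: Pin[]): string {
  const lines = pins
    .slice(0, MAX_PINS)
    .map((pin) =>
      [pin.title, pin.description]
        .map((text) => text.trim())
        .filter((text) => text.length > 0)
        .join(": ")
    )
    .filter((line) => line.length > 0);

  // With no usable pins the brief is passed through unchanged.
  if (lines.length === 0) return prompt;

  const context = lines.map((line) => `- ${line}`).join("\n");
  return `${prompt}\n\nPinterest board context:\n${context}`;
}
```

A sniper brief with no URL makes `findPinterestUrl` return `null`, so the scraping step can be skipped entirely and the brief goes to the model as written.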

You can run as many agents as you want simultaneously: for example, a sniper hunting a specific pair of vintage Levi's alongside a vibe agent building out a capsule wardrobe for an upcoming trip. Each agent has its own toggle, its own run history, and its own matches page.
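As a rough sketch of what "per-agent" means in data terms, the types below mirror a subset of the columns in the `agents` and `matches` tables from this README's schema. The helper functions `activeAgents` and `matchesByAgent` are hypothetical and only illustrate how a per-agent feed could be derived.

```typescript
// Field names follow the agents and matches tables in this README's schema.
// The helper functions are hypothetical.

interface Agent {
  id: string;
  name: string;
  prompt: string;
  is_active: boolean;
  last_run: string | null; // ISO timestamp of the last run, if any
  last_match_count: number;
}

interface Match {
  id: string;
  agent_id: string;
  title: string;
  match_score: number;
  created_at: string; // ISO timestamp
}

/** Agents whose toggle is currently on. */
function activeAgents(agents: Agent[]): Agent[] {
  return agents.filter((agent) => agent.is_active);
}

/** Groups matches by agent id, newest first within each agent. */
function matchesByAgent(matches: Match[]): Record<string, Match[]> {
  const grouped: Record<string, Match[]> = {};
  for (const match of matches) {
    if (!grouped[match.agent_id]) grouped[match.agent_id] = [];
    grouped[match.agent_id].push(match);
  }
  for (const agentId of Object.keys(grouped)) {
    grouped[agentId].sort(
      (a, b) => Date.parse(b.created_at) - Date.parse(a.created_at)
    );
  }
  return grouped;
}
```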

"Find me Saint Laurent leather ankle boots in black, size 8–8.5, under $600, excellent condition"

"Any The Row bag under $800 in good condition or better"

"Totême or Lemaire coat, size S, under $500, camel or neutral tones"

"Vintage Levi's 501 jeans, waist 26–27, any condition, under $150"

"Spending the summer in Europe, here is my Pinterest board, find me outfits: https://pinterest.com/..."

Claude extracts the search intent, Playwright fetches real listings, and Claude then scores them against the full prompt with nuance: condition hierarchy, size flexibility, brand synonyms (YSL = Saint Laurent), and price tolerance.

For the demo, we are scraping eBay for live listings.
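The extract, scrape, score sequence described above can be sketched as a small orchestration function. This is an illustration under stated assumptions, not the project's `run/route.ts`: `runAgent` and `PipelineSteps` are hypothetical names, and the three steps are injected as plain async functions so the sketch stays independent of the Anthropic SDK and Playwright. The listing fields are the ones this README gives for scraped results.

```typescript
// All function and interface names here are hypothetical stand-ins.

interface Listing {
  title: string;
  brand: string;
  price: number;
  size: string;
  condition: string;
  url: string;
  image_url: string;
}

interface ScoredListing extends Listing {
  match_score: number; // 0 to 100
  match_explanation: string;
}

interface PipelineSteps {
  // LLM call #1: turn the full brief into a short search query.
  extractQuery: (prompt: string) => Promise<string>;
  // Browser scraper: fetch live listings for that query.
  scrape: (query: string) => Promise<Listing[]>;
  // LLM call #2: score every listing against the full brief.
  score: (prompt: string, listings: Listing[]) => Promise<ScoredListing[]>;
}

/** Runs one agent pass and returns scored listings, best first. */
async function runAgent(
  prompt: string,
  steps: PipelineSteps
): Promise<ScoredListing[]> {
  const query = await steps.extractQuery(prompt);
  const listings = await steps.scrape(query);

  // Nothing scraped means nothing to score, so skip the second LLM call.
  if (listings.length === 0) return [];

  const scored = await steps.score(prompt, listings);
  // Copy before sorting so the caller's array is left untouched.
  return scored.slice().sort((a, b) => b.match_score - a.match_score);
}
```

Injecting the steps keeps the control flow testable with stubs, and it leaves room to swap the eBay scraper for another marketplace without touching the orchestration.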

    ┌─────────────────────────────────────────────────────────────┐
    │                       Next.js 16 PWA                        │
    │                                                             │
    │  /sniper → Agent Dashboard                                  │
    │    • Create agents (name + natural language)                │
    │    • Toggle each agent on/off                               │
    │    • Run agent → see scored matches                         │
    │    • /sniper/results: per-agent match feed                  │
    │    • Thumbs up/down on each match                           │
    └───────────────────────┬─────────────────────────────────────┘
                            │ POST /api/agent/run
                            ▼
    ┌─────────────────────────────────────────────────────────────┐
    │                   ★ Agent Run Pipeline ★                    │
    │                                                             │
    │  Step 0: Scrape Pinterest board (if URL in prompt)          │
    │  Step 1: Claude reads enriched prompt → eBay search query   │
    │  Step 2: kernel.js (Playwright Stealth) → scrapes eBay      │
    │  Step 3: Claude scores each listing against full prompt     │
    │          (0–100 rubric: brand, type, price, size, condition)│
    │  Step 4: Matches stored in Supabase + push notification     │
    └───────┬─────────────────────────────┬───────────────────────┘
            │                             │
            ▼                             ▼
    ┌──────────────────┐        ┌────────────────────┐
    │  scripts/        │        │  Supabase          │
    │  kernel.js       │        │                    │
    │  pinterest-      │        │  agents table      │
    │  scraper.js      │        │  matches table     │
    │                  │        │  push_subscriptions│
    │  Playwright      │        │                    │
    │  Stealth         │        │                    │
    │  scrapes eBay    │        └────────────────────┘
    │  + Pinterest     │
    └──────────────────┘

When an agent runs, POST /api/agent/run executes the following steps:

- `scrapePinterestBoard()`: Playwright (optional). If the agent prompt contains a Pinterest URL, `scripts/pinterest-scraper.js` launches a headless Chromium browser, navigates to the board, scrolls to load pins, and extracts up to 40 pin titles and descriptions. These are injected into the prompt as aesthetic context.

- `extractSearchQuery()`: Claude call #1. Sends the enriched prompt (original brief + Pinterest pins) to Claude Sonnet (`claude-sonnet-4-6`) and asks it to extract a 3–6 word eBay search query. A vibe prompt like "Mamma Mia energy, flowy linen" becomes a precise search term like `"flowy linen midi dress white"`.

- `scrapeProducts()`: Playwright. Shells out to `scripts/kernel.js` via `child_process.exec`. Kernel launches a headless Chromium browser, navigates to eBay search results, waits for client-side rendering, and scrapes up to 20 listings, each as `{ title, brand, price, size, condition, url, image_url }`. Runs with a 45-second timeout. Anti-detection: randomised user agents, disabled automation flags, `navigator.webdriver` masking.

- `filterWithClaude()`: Claude call #2. Sends the scraped listings back to Claude Sonnet with the full enriched prompt and a scoring rubric. Claude scores each listing 0–100 across five dimensions:

  | Dimension | Points |
  |---|---|
  | Brand match (exact, synonym, or partial) | 30 |
  | Item type match | 25 |
  | Price within budget | 20 |
  | Size match | 15 |
  | Condition match | 10 |

  Items below 35 are dropped. The rest are returned with a `match_explanation` and an urgency tier: HIGH (80+) · MEDIUM (55+) · LOW (35+).
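The rubric weights and tier thresholds above are easy to check in code. The sketch below uses the point values and cutoffs stated in this README; the function names, and the assumption that the model returns a per-dimension breakdown rather than only a total, are illustrative.

```typescript
// Weights and thresholds come from the rubric in this README.
// The names and the per-dimension breakdown are assumptions.

const RUBRIC = {
  brand: 30,
  itemType: 25,
  price: 20,
  size: 15,
  condition: 10,
} as const;

type Dimension = keyof typeof RUBRIC;
type DimensionScores = Record<Dimension, number>;
type Urgency = "HIGH" | "MEDIUM" | "LOW";

interface ScoredListing {
  title: string;
  match_score: number;
}

interface RankedMatch extends ScoredListing {
  notification_urgency: Urgency;
}

/** Sums per-dimension points, clamping each to the range [0, rubric maximum]. */
function totalScore(scores: DimensionScores): number {
  let total = 0;
  for (const dim of Object.keys(RUBRIC) as Dimension[]) {
    total += Math.min(Math.max(scores[dim], 0), RUBRIC[dim]);
  }
  return total;
}

/** Maps a 0 to 100 score to its urgency tier, or null when below the cutoff. */
function urgencyFor(score: number): Urgency | null {
  if (score >= 80) return "HIGH";
  if (score >= 55) return "MEDIUM";
  if (score >= 35) return "LOW";
  return null;
}

/** Drops listings under 35, tags the rest with a tier, and ranks best first. */
function keepMatches(items: ScoredListing[]): RankedMatch[] {
  const kept: RankedMatch[] = [];
  for (const item of items) {
    const urgency = urgencyFor(item.match_score);
    if (urgency !== null) {
      kept.push({ ...item, notification_urgency: urgency });
    }
  }
  return kept.sort((a, b) => b.match_score - a.match_score);
}
```

Clamping each dimension guards against a model response that awards more points than the rubric allows, so a total can never exceed 100.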

| Layer | Technology |
|---|---|
| Framework | Next.js 16 (App Router, Turbopack) |
| Styling | Tailwind CSS v4 |
| PWA | next-pwa + Web Push API (VAPID) |
| Database | Supabase (Postgres) |
| Scraping | Playwright Stealth (`scripts/kernel.js`, `scripts/pinterest-scraper.js`) |
| Agent LLM | Claude Sonnet 4.6 (Anthropic) |
| Notifications | Browser Notification API + Web Push |

    sniper/
    ├── src/
    │   ├── app/
    │   │   ├── layout.tsx                # iOS PWA meta, BottomNav, Cormorant font
    │   │   ├── globals.css               # Tailwind v4, Phia design tokens, animations
    │   │   ├── sniper/
    │   │   │   ├── page.tsx              # Agent dashboard (create, toggle, run, delete)
    │   │   │   └── results/page.tsx      # Per-agent match feed: scores, images, feedback
    │   │   └── api/agent/
    │   │       ├── create/route.ts       # POST: create or update an agent
    │   │       ├── toggle/route.ts       # POST: flip is_active on/off
    │   │       ├── delete/route.ts       # POST: delete agent and its matches
    │   │       ├── feedback/route.ts     # POST: record liked/disliked on a match
    │   │       └── run/route.ts          # ★ AI CORE: Claude × 2 + Playwright
    │   │                                 #   0.  Fetch feedback → build taste profile
    │   │                                 #   0b. Scrape Pinterest board (if URL present)
    │   │                                 #   1.  Claude extracts eBay search query
    │   │                                 #   2.  kernel.js scrapes live listings
    │   │                                 #   3.  Claude scores 0–100, assigns urgency
    │   ├── components/
    │   │   └── BottomNav.tsx             # 5-icon pill nav with Sniper crosshair icon
    │   └── lib/
    │       ├── supabase.ts               # Browser client
    │       ├── supabase-server.ts        # Server client
    │       └── push.ts                   # VAPID push subscription helper
    ├── scripts/
    │   ├── kernel.js                     # Playwright Stealth eBay scraper
    │   └── pinterest-scraper.js          # Playwright Pinterest board scraper
    ├── service-worker/
    │   └── index.js                      # Push event + notificationclick handler
    └── public/
        └── manifest.json                 # PWA manifest

    create table if not exists agents (
      id uuid primary key default gen_random_uuid(),
      name text not null,
      prompt text not null,
      is_active boolean default true,
      last_run timestamptz,
      last_match_count int default 0,
      created_at timestamptz default now()
    );

    create table if not exists matches (
      id uuid primary key default gen_random_uuid(),
      agent_id uuid references agents(id) on delete cascade,
      title text,
      brand text,
      price numeric,
      size text,
      condition text,
      url text,
      image_url text,
      match_explanation text,
      match_score int,
      notification_urgency text check (notification_urgency in ('HIGH', 'MEDIUM', 'LOW')),
      feedback text check (feedback in ('liked', 'disliked')),
      seen boolean default false,
      created_at timestamptz default now()
    );

    create table if not exists push_subscriptions (
      id uuid primary key default gen_random_uuid(),
      endpoint text unique not null,
      p256dh text,
      auth text,
      subscription_json jsonb,
      created_at timestamptz default now()
    );

    create index if not exists matches_agent_id_idx on matches(agent_id, created_at desc);

Install dependencies and the Chromium browser for Playwright:

    cd sniper
    npm install
    npx playwright install chromium

Create `.env.local`:

    NEXT_PUBLIC_SUPABASE_URL=
    NEXT_PUBLIC_SUPABASE_ANON_KEY=
    ANTHROPIC_API_KEY=
    NEXT_PUBLIC_VAPID_PUBLIC_KEY=
    VAPID_PRIVATE_KEY=
    VAPID_EMAIL=mailto:you@example.com
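A blank value in this file tends to surface later as an opaque API error, so it can help to check the variables at startup. The following is a minimal sketch, assuming all six variables listed above are required; `missingEnvVars` is a hypothetical helper, not something the project ships.

```typescript
// Variable names are the ones listed in .env.local above.
// Treating all six as required is an assumption.

const REQUIRED_ENV = [
  "NEXT_PUBLIC_SUPABASE_URL",
  "NEXT_PUBLIC_SUPABASE_ANON_KEY",
  "ANTHROPIC_API_KEY",
  "NEXT_PUBLIC_VAPID_PUBLIC_KEY",
  "VAPID_PRIVATE_KEY",
  "VAPID_EMAIL",
] as const;

type EnvSource = Record<string, string | undefined>;

/** Returns the names of required variables that are unset or blank. */
function missingEnvVars(env: EnvSource): string[] {
  return REQUIRED_ENV.filter((name) => (env[name] ?? "").trim() === "");
}

// Example use in server-side code (process.env is available in Node.js):
//   const missing = missingEnvVars(process.env);
//   if (missing.length > 0) {
//     throw new Error(`Missing environment variables: ${missing.join(", ")}`);
//   }
```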

Run the schema SQL in Supabase, then:

    npm run dev
    # open http://localhost:3000/sniper

To test the scrapers directly:

    node scripts/kernel.js "saint laurent boots"
    node scripts/pinterest-scraper.js "https://www.pinterest.com/username/board-name/"

Roadmap:

- Feedback loop: wire up the thumbs up/down UI to influence future runs by injecting liked/disliked history into the scoring prompt, so the agent learns taste over time
- Continuous looping: agents currently run once on demand; a background polling loop would make them truly continuous
- Multi-platform scraping: extend to The RealReal, Grailed, Depop, Vestiaire
- User accounts: isolated agent spaces and taste profiles per user

Every shopper deserves an agent that never sleeps.