A Sentinel-2 image (August 8, 2020) and a fully polarimetric ALOS-2 image (June 27, 2019), courtesy of the Japan Aerospace Exploration Agency (JAXA), were used in this project to derive land cover information.

All Sentinel-2 bands and the Touzi phase ϕαs1 component were resampled to 20 m.

Training polygons for the area were converted to a raster and stacked with the multispectral image.

The values of the label raster represent cover types that match Alberta Satellite Land Classification (ASLC) codes. For instance, a Black Spruce polygon has ASLC code 51, while Aspen has code 55.

The stack had 11 bands in total: ten features/predictors (nine Sentinel-2 bands and one Touzi phase ϕαs1 band) plus the raster of training data.

Next, we generated a regularly spaced point shapefile (figure 2), which we used to read the values of all 11 bands in the stack.
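The point sampling step can be sketched in plain Python. This is only an illustration under stated assumptions: the function names `regular_grid` and `sample_stack`, the toy two-band stack, the 40 m point spacing and the north-up pixel layout are all hypothetical and are not taken from the project, which used a shapefile and GIS tools.

```python
def regular_grid(xmin, ymin, xmax, ymax, spacing):
    """Return (x, y) points spaced `spacing` map units apart inside the extent."""
    points = []
    y = ymax - spacing / 2.0          # start half a step inside the top edge
    while y > ymin:
        x = xmin + spacing / 2.0      # start half a step inside the left edge
        while x < xmax:
            points.append((x, y))
            x += spacing
        y -= spacing
    return points


def sample_stack(stack, points, xmin, ymax, pixel_size):
    """Read every band value under each point of a north-up raster stack.

    `stack` is indexed as stack[band][row][col]; row 0 is the top row.
    """
    samples = []
    for x, y in points:
        col = int((x - xmin) // pixel_size)
        row = int((ymax - y) // pixel_size)
        samples.append([band[row][col] for band in stack])
    return samples


# Toy 2-band, 4x4-pixel stack with 20 m pixels; the values are made up.
band_a = [[r * 4 + c for c in range(4)] for r in range(4)]
band_b = [[100 + v for v in row] for row in band_a]
stack = [band_a, band_b]

points = regular_grid(0, 0, 80, 80, spacing=40)
samples = sample_stack(stack, points, xmin=0, ymax=80, pixel_size=20)
```

Each entry of `samples` holds one value per band for one point, which is the same row layout a point attribute table would have after the extraction.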

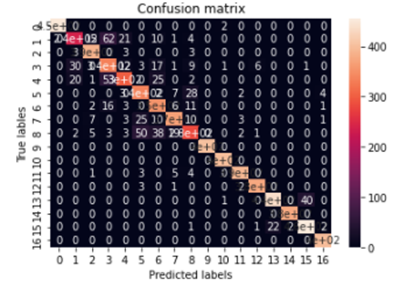

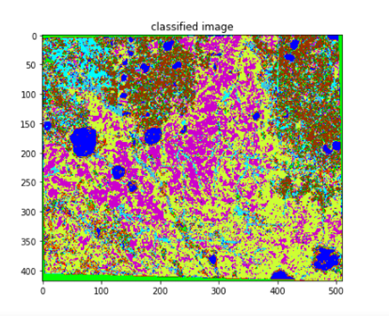

We had 17 different cover types (ASLC classes), including burns (41), pure coniferous stands (Sb 51, Pl 52, Sw 53), mixed conifer (54), deciduous (55), water (80), mixedwood and wetlands. This is therefore an example of multiclass image classification.

We then converted the regularly spaced point shapefile to a CSV file so that the input data could be easily manipulated in a Python environment.

Our training sites contained 17 different ASLC classes. Instead of using the regular ASLC codes (e.g. Sb is 51), we added another field, “ASLC_”, that orders them from 1 to 17. The reason is that the machine learning algorithm needs ordered, consecutive class ids rather than sparse codes such as 40, 51, 52, ... 522. For instance, Burns with ASLC code 40 was assigned id 1 in the “ASLC_” field, and the last class, young Pine with ASLC code 552, received id 17.
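A minimal sketch of this recoding, assuming the ids follow the numeric order of the codes (the text only confirms that code 40 maps to 1 and the last class to 17). The helper name `build_class_ids` and the short list of codes are illustrative, not the project's actual table.

```python
def build_class_ids(codes):
    """Map each distinct class code to a consecutive id starting at 1."""
    return {code: idx for idx, code in enumerate(sorted(set(codes)), start=1)}


# Illustrative subset of ASLC codes as they might appear in the point table.
sample_codes = [51, 40, 55, 51, 80, 52]

code_to_id = build_class_ids(sample_codes)
ids = [code_to_id[code] for code in sample_codes]

# Inverse lookup, useful for turning predicted ids back into ASLC codes.
id_to_code = {idx: code for code, idx in code_to_id.items()}
```

Keeping the inverse dictionary makes it straightforward to write the classified image back out with the original ASLC codes.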

Our predictors (features) are bands B2, B3, ..., B12 and the Touzi phase, while our response variable (label) is “ASLC_”.
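Splitting the CSV into predictors and label could look roughly like the sketch below. The column names are assumptions: the text gives only "B2, B3..B12" and the “ASLC_” field, so the nine band names listed here and the `touzi_phase` column are placeholders, and the two data rows are invented.

```python
import csv
import io

# Assumed column names; only "ASLC_" is named in the text.
FEATURES = ["B2", "B3", "B4", "B5", "B6", "B7", "B8A", "B11", "B12", "touzi_phase"]
LABEL = "ASLC_"


def load_samples(handle):
    """Split CSV rows into a feature matrix X and a label vector y."""
    X, y = [], []
    for row in csv.DictReader(handle):
        X.append([float(row[name]) for name in FEATURES])
        y.append(int(row[LABEL]))
    return X, y


# Two made-up rows standing in for the exported point CSV.
demo_csv = io.StringIO(
    "B2,B3,B4,B5,B6,B7,B8A,B11,B12,touzi_phase,ASLC_\n"
    "312,540,398,910,2105,2450,2700,1500,720,-12.5,2\n"
    "280,455,350,780,1800,2100,2300,1900,1100,30.0,6\n"
)
X, y = load_samples(demo_csv)
```

Dropping `touzi_phase` from `FEATURES` gives the Sentinel-2-only feature set, so the same loader can serve both experiments.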

Here are the results. Using Sentinel-2 data exclusively, accuracy after training was 82.40%. When the Touzi phase is added to the Sentinel-2 data, accuracy increases to 87% for the same model.
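For readers who want to reproduce this kind of figure, overall accuracy is the share of samples on the diagonal of the confusion matrix. The sketch below assumes class ids that start at 1, as in the “ASLC_” field; the function names and the tiny three-class label lists are illustrative and are not the project's results.

```python
def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are reference classes, columns are predicted classes (ids start at 1)."""
    matrix = [[0] * n_classes for _ in range(n_classes)]
    for truth, pred in zip(y_true, y_pred):
        matrix[truth - 1][pred - 1] += 1
    return matrix


def overall_accuracy(matrix):
    """Fraction of samples that fall on the diagonal of the confusion matrix."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


# Made-up reference and predicted ids for three classes.
y_true = [1, 1, 2, 2, 3, 3, 3, 1]
y_pred = [1, 2, 2, 2, 3, 3, 1, 1]

cm = confusion_matrix(y_true, y_pred, n_classes=3)
acc = overall_accuracy(cm)
```

Off-diagonal cells show which classes are confused with each other, which is often more informative than the single accuracy number when there are 17 classes.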

Confusion matrix:

Predicted classified image: