use setuptools_scm instead of setuptools-git-version #56

Merged


Member

Author

Why does the test fail...

Member

Author

Why did Travis-CI assign a machine with gcc=5.4...

Member

|

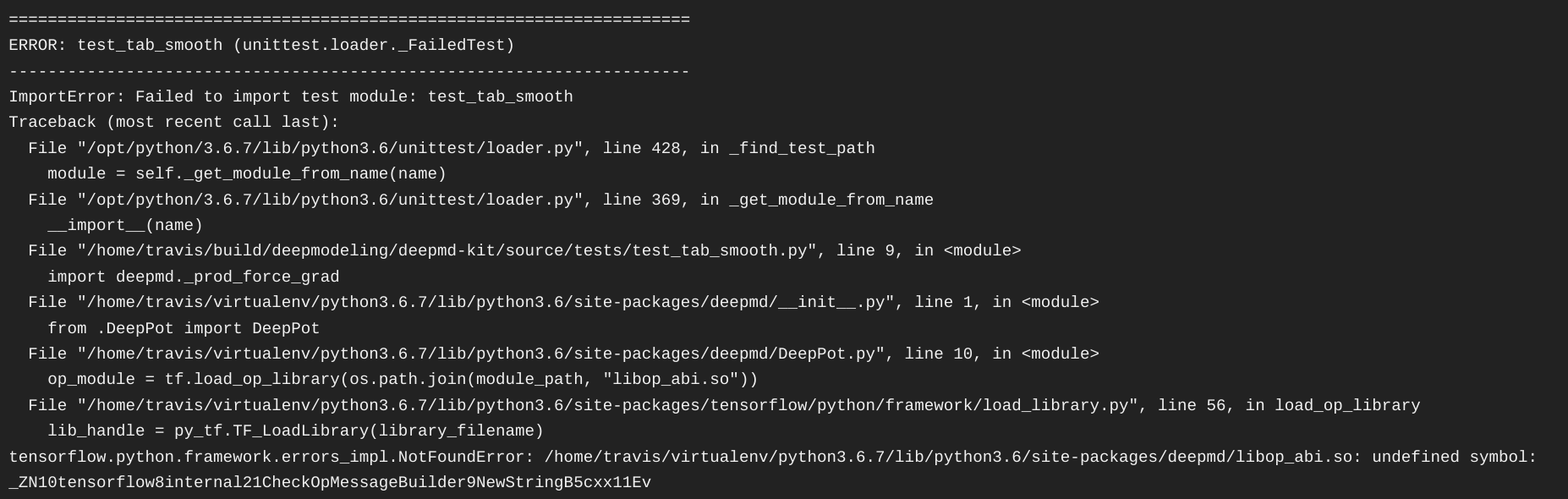

Why does the test fail on a machine with gcc==5.4? Could you paste the error log? |

Member

The official TensorFlow distribution was built with gcc==4.8, and deepmd-kit should be built with the same gcc...


njzjz-bot pushed a commit to njzjz-bot/deepmd-kit that referenced this pull request on May 8, 2026

Imported from jinzhezenggroup/computational-chemistry-agent-skills.

Upstream-Commit: jinzhezenggroup/computational-chemistry-agent-skills@3f03f72

Upstream-Paths:
- machine-learning-potentials/deepmd-finetune-dpa3
- machine-learning-potentials/deepmd-python-inference
- machine-learning-potentials/deepmd-train-dpa3
- machine-learning-potentials/deepmd-train-se-e2-a
- molecular-dynamics/lammps-deepmd


Then, we can use `python setup.py sdist` to package the source, and use `twine upload dist/*` to upload it to pypi.org. Users can use `pip install deepmd-kit` to install the Python interface directly.
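For readers unfamiliar with setuptools_scm, the following is a minimal sketch of how a `setup.py` might delegate versioning to it. It is not the project's actual `setup.py`: the helper names `build_setup_kwargs` and `run_setup` are hypothetical, and every argument other than the package name is an assumption made for illustration.

```python
"""Hypothetical minimal setup.py sketch; not the project's actual file."""


def build_setup_kwargs():
    """Return keyword arguments for setuptools.setup() (illustrative only)."""
    return {
        # Distribution name, matching `pip install deepmd-kit`.
        "name": "deepmd-kit",
        # Ask setuptools_scm to derive the version from git tags
        # instead of hard-coding a version string.
        "use_scm_version": True,
        # Make setuptools_scm available when the package is built.
        "setup_requires": ["setuptools_scm"],
    }


def run_setup():
    """Call setuptools.setup() with the arguments above."""
    # Imported lazily so the helper above can be inspected without setuptools.
    from setuptools import setup

    setup(**build_setup_kwargs())
```

In a real `setup.py`, `run_setup()` would be called at module level so that `python setup.py sdist` picks up the version from the latest git tag.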