Use only cc_half by default in resolution analysis #1492


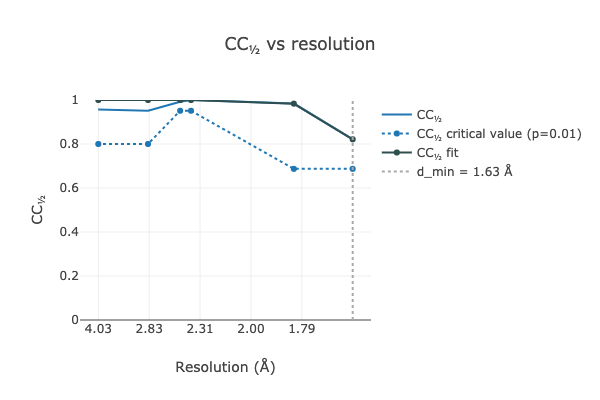

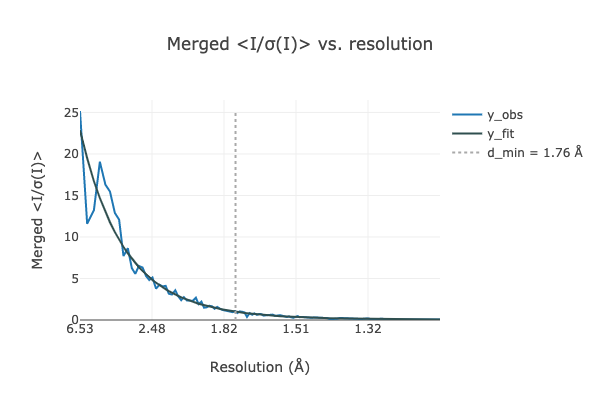

CC1/2 is now accepted as the most appropriate metric for estimating the resolution cutoff (other than paired refinement). I/sigI-based cutoffs can be unreliable because the sigmas depend on the error modelling in integration/scaling. This change makes the default consistent with xia2 default behaviour. See also xia2/xia2#374

Codecov Report

| Coverage Diff | master | #1492 |
|---|---|---|
| Coverage | 65.57% | 65.57% |
| Files | 614 | 614 |
| Lines | 68956 | 68956 |
| Branches | 9519 | 9519 |
| Hits | 45219 | 45219 |
| Misses | 21898 | 21898 |
| Partials | 1839 | 1839 |

graeme-winter

left a comment


Makes sense, though I wonder what will happen if $USER passes in a data set with multiplicity ~ 1? Overall, though, it is good that this enforces current best practice for consistency.

@@ -0,0 +1 @@

dials.estimate_resolution: use only cc_half by default in resolution analysis


Possibly "change default to only use cc_half in resolution analysis"?

It would only use those reflections with multiplicity > 1; how many of these there are will depend on the symmetry etc. The First scale a single narrow wedge of data: Run Using Using

dagewa

left a comment


It makes sense for this to be consistent with xia2.

The issue of what cutoff value to use has come up again recently in CCP4 land. 0.143 has been suggested as being better than 0.3, because a CC1/2 of 0.143 corresponds to a CC* of 0.5. But a CC* of 0.5 is also an arbitrary value. The right value of the cutoff is out of scope here, but I thought it might be worth noting the discussion.
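For reference, the correspondence between the two cutoffs mentioned above can be checked with a few lines of Python. This is a minimal sketch that assumes the usual Karplus and Diederichs relation, CC* = sqrt(2 CC1/2 / (1 + CC1/2)); the function name `cc_star` is made up for illustration and is not a DIALS or cctbx API.

```python
import math


def cc_star(cc_half):
    """Estimate CC* from CC1/2 (hypothetical helper, not part of DIALS).

    Assumes the relation CC* = sqrt(2 * CC1/2 / (1 + CC1/2)).
    """
    return math.sqrt(2.0 * cc_half / (1.0 + cc_half))


# Compare the two cutoff values discussed in this thread.
for cutoff in (0.143, 0.3):
    print(f"CC1/2 = {cutoff:.3f} -> CC* = {cc_star(cutoff):.3f}")
```

With this relation a CC1/2 of 0.143 gives a CC* of about 0.50, and a CC1/2 of 0.3 gives a CC* of about 0.68.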

jbeilstenedmands

left a comment


Makes sense to me too.

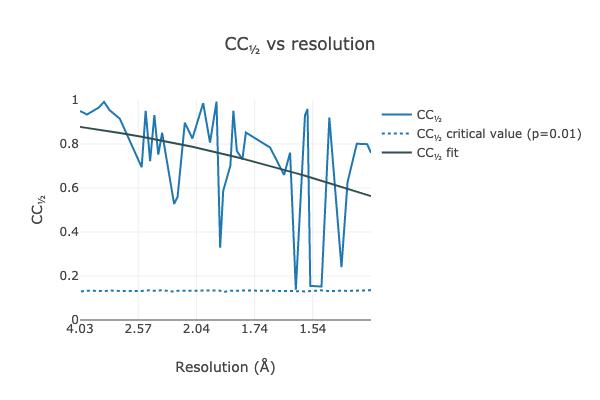

To test a really low-multiplicity dataset, you can do something like this:

$ dials.scale $(dials.data get -q l_cysteine_dials_output)/{11_integrated.expt,11_integrated.refl,23_integrated.refl,23_integrated.expt}

$ dials.estimate_resolution scaled.{expt,refl}

N.B. This fails both on master and this branch with a stacktrace, but this shouldn't be a blocker for this work:

merging_stats, metric, model, limit, sel=sel

File "/dials/modules/dials/util/resolution_analysis.py", line 143, in resolution_fit_from_merging_stats

return resolution_fit(d_star_sq, y_obs, model, limit, sel=sel)

File "/Users/whi10850/dials/modules/dials/util/resolution_analysis.py", line 178, in resolution_fit

raise RuntimeError("No reflections left for fitting")

RuntimeError: Please report this error to dials-support@lists.sourceforge.net: No reflections left for fitting


The issue here is that even with just 4 bins, there is only 1 reflection in each of 2 bins with a multiplicity > 1. Even the sigma-tau CC1/2 method requires more than one unique reflection with multiplicity > 1 in order to calculate a non-zero CC1/2 value. Granted, we should probably handle the resulting error gracefully, but as you say, that is outside the scope of this PR.
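To illustrate why a single multiply-measured reflection per bin is not enough, here is a simplified, pure-Python sketch of the sigma-tau idea. It is not the cctbx/DIALS implementation: the function name, the input layout (one list of intensities per unique reflection) and the choice to return `None` for undefined cases are all assumptions made for this example. The estimate is written in terms of the variance of the merged intensities across unique reflections (`var_y`) and the mean variance of each merged intensity (`var_e`), giving CC1/2 = (var_y - var_e) / (var_y + var_e).

```python
from statistics import mean, variance


def cc_half_sigma_tau(groups):
    """Simplified sigma-tau style CC1/2 for one resolution bin.

    groups: list of lists, the observed intensities of each unique
    reflection (hypothetical input layout for this sketch).
    Returns None when the estimate is undefined.
    """
    # Only reflections measured more than once carry error information.
    multi = [g for g in groups if len(g) > 1]
    # A variance across unique reflections needs at least two of them.
    if len(multi) < 2:
        return None
    merged = [mean(g) for g in multi]
    # Variance of the merged intensities across unique reflections.
    var_y = variance(merged)
    # Mean variance of the merged intensity (sample variance / n).
    var_e = mean([variance(g) / len(g) for g in multi])
    denom = var_y + var_e
    if denom == 0:
        return None
    return (var_y - var_e) / denom


# One reflection with multiplicity > 1 in the bin: no estimate possible.
print(cc_half_sigma_tau([[5.0], [6.0], [7.0, 7.5]]))
# Two or more such reflections: an estimate can be computed.
print(cc_half_sigma_tau([[10.0, 11.0], [20.0, 19.0], [30.0, 31.5]]))
```

In the low-multiplicity test above, each populated bin falls into the first case, which is consistent with no points being left for the resolution fit.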
