ONNX model inference issues with ML.Net #6475

Description

System Information (please complete the following information):

- OS & Version: Windows 10

- ML.NET Version: ML.NET v1.6, ML.OnnxRuntime v1.7

- .NET Version: .NET 6.0

Describe the bug

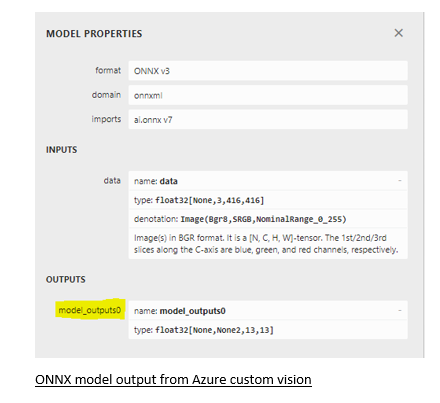

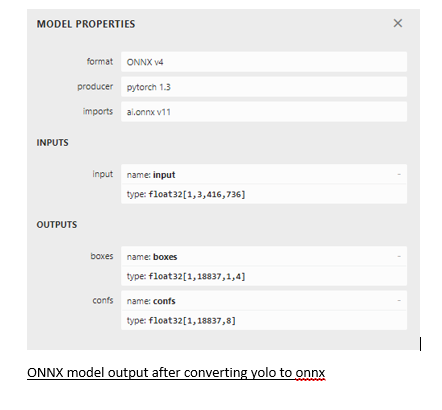

I have an ONNX model exported from Azure Custom Vision. It has a single output, and my ML.NET pipeline works fine with it. I also have a YOLO v4 model that I converted to ONNX, but the converted model has two outputs, and my ML.NET pipeline does not work with it. I checked the output details using the Netron tool.

I used this repository to convert the YOLO model to ONNX: https://github.com/Tianxiaomo/pytorch-YOLOv4

To Reproduce

Steps to reproduce the behavior:

Use ML.NET to run inference on an ONNX model that has two outputs.

Expected behavior

ML.NET should return inference results.

Screenshots, Code, Sample Projects

```csharp
var pipeline = mlContext.Transforms.ResizeImages(
        resizing: ImageResizingEstimator.ResizingKind.Fill,
        outputColumnName: "data",
        imageWidth: ImageSettings.imageWidth,
        imageHeight: ImageSettings.imageHeight,
        inputColumnName: nameof(ImageInput.Image))
    .Append(mlContext.Transforms.ExtractPixels(
        outputColumnName: "data",
        orderOfExtraction: ImagePixelExtractingEstimator.ColorsOrder.AGRB))
    .Append(mlContext.Transforms.ApplyOnnxModel(
        modelFile: modeltoInfer.ModelPath,
        outputColumnName: "model_outputs0",
        inputColumnName: "data",
        gpuDeviceId: gpuDeviceId,
        fallbackToCpu: fallbackToCpu));
```

Additional context

Is there any ML.NET code that can run inference on an ONNX model with two outputs?
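For reference, here is a rough sketch of what I would expect a two-output pipeline to look like, using the `ApplyOnnxModel` overload that takes arrays of column names. This is untested against my model. The tensor names `input`, `boxes`, and `confs` are assumptions and must be replaced with whatever Netron shows for the converted model. The 416x416 image size, the `YoloOutput` class, and the `DualOutputSketch` helper are hypothetical as well. `Bitmap` is used as the image type because that is what ML.NET 1.6 expects.

```csharp
using System.Collections.Generic;
using System.Drawing;
using Microsoft.ML;
using Microsoft.ML.Data;
using Microsoft.ML.Transforms.Image;

public class ImageInput
{
    // Assumed input size; use the size the model was exported with.
    [ImageType(416, 416)]
    public Bitmap Image { get; set; }
}

// Hypothetical output class: one property per ONNX output tensor.
public class YoloOutput
{
    [ColumnName("boxes")]   // assumed output name, check in Netron
    public float[] Boxes { get; set; }

    [ColumnName("confs")]   // assumed output name, check in Netron
    public float[] Confs { get; set; }
}

public static class DualOutputSketch
{
    public static PredictionEngine<ImageInput, YoloOutput> Build(
        string modelPath, int? gpuDeviceId = null, bool fallbackToCpu = true)
    {
        var mlContext = new MLContext();

        var pipeline = mlContext.Transforms.ResizeImages(
                outputColumnName: "input",   // assumed ONNX input name
                imageWidth: 416,
                imageHeight: 416,
                inputColumnName: nameof(ImageInput.Image),
                resizing: ImageResizingEstimator.ResizingKind.Fill)
            .Append(mlContext.Transforms.ExtractPixels(outputColumnName: "input"))
            // Array overload: every requested ONNX output becomes its own column.
            .Append(mlContext.Transforms.ApplyOnnxModel(
                outputColumnNames: new[] { "boxes", "confs" },
                inputColumnNames: new[] { "input" },
                modelFile: modelPath,
                gpuDeviceId: gpuDeviceId,
                fallbackToCpu: fallbackToCpu));

        // The pipeline has no trainable steps, so fitting on empty data
        // is only needed to produce a transformer.
        var empty = mlContext.Data.LoadFromEnumerable(new List<ImageInput>());
        var model = pipeline.Fit(empty);

        return mlContext.Model.CreatePredictionEngine<ImageInput, YoloOutput>(model);
    }
}
```

With this shape, `engine.Predict(new ImageInput { Image = bitmap })` should return both tensors flattened into `Boxes` and `Confs`. Pixel scaling and channel order are left at the `ExtractPixels` defaults here and probably need to match the preprocessing the YOLO model was trained with.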