Hey, this seems like the correct repository for this issue. If not, please let me know and I will move it.

Overview

Versions

hyper = "0.13.8"

rustls = "0.18.1"

tokio-rustls = "0.14"

tokio = "0.2.22"

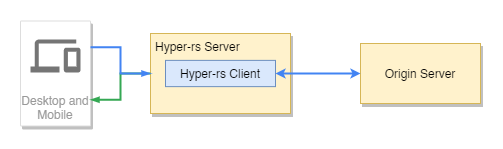

We use the hyper-rs server and client as the foundation for a special traffic-replay proxy (i.e. not a pass-through proxy). A client sends an HTTP/S request to our proxy, where it is received by our hyper server. Internally, a hyper client mutates the request, sends it to the origin server, and waits for the response. Finally, the server returns the (mutated) response to the original client.
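For readers unfamiliar with this kind of proxy, the request path can be modelled with plain std types. This is only an illustration, not our code. `Request`, `Response`, `mutate_request`, `mutate_response`, `handle` and the `x-replayed-by` header are hypothetical names, and the closure stands in for the hyper client call to the origin.

```rust
// Hypothetical, std-only model of the replay proxy's request path.
// None of these names come from hyper or from our actual proxy.

#[derive(Debug, Clone, PartialEq)]
struct Request {
    path: String,
    headers: Vec<(String, String)>,
}

#[derive(Debug, Clone, PartialEq)]
struct Response {
    status: u16,
    body: String,
}

/// Rewrite the inbound request before replaying it to the origin
/// (here: add a made-up `x-replayed-by` header).
fn mutate_request(mut req: Request) -> Request {
    req.headers.push(("x-replayed-by".to_string(), "proxy".to_string()));
    req
}

/// Rewrite the origin's response before returning it to the client.
fn mutate_response(mut resp: Response) -> Response {
    resp.body = format!("[replayed] {}", resp.body);
    resp
}

/// Server side: mutate, forward via the internal client, wait for the
/// origin's response, mutate it and hand it back to the original client.
fn handle<F>(req: Request, send_to_origin: F) -> Response
where
    F: Fn(&Request) -> Response,
{
    let outbound = mutate_request(req);
    let origin_resp = send_to_origin(&outbound);
    mutate_response(origin_resp)
}

fn main() {
    let req = Request { path: "/index.html".to_string(), headers: vec![] };
    // Stand-in for the hyper client request to the origin server.
    let fake_origin = |r: &Request| Response { status: 200, body: format!("origin saw {}", r.path) };
    let resp = handle(req, fake_origin);
    println!("{} {}", resp.status, resp.body);
}
```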

Issue

After the proxy has been running for anywhere between 8 and 48 hours, we consistently hit an issue where the hyper-rs server seemingly refuses to accept any connections. A couple of points on this:

- I cannot reproduce this issue in any way. I tried heavy load tests, soak tests lasting over 8 hours, and intermittent slow tests to see whether the problem comes from reusing stale connections.

- No errors related to this are logged at any point. I ran `sudo RUST_LOG="debug" ./proxy` hoping to gain some insight, but all we see are debug messages related to buffer reads.

- `tcpdump` shows connection attempts coming through, but no response from the server.
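One hypothesis fits these symptoms: no errors, connection attempts arriving, no responses. It concerns the setup under "Hyper Server + TLS Setup", where the TLS handshake is awaited inside `filter_map`.

Assuming that is the `futures` crate's `StreamExt::filter_map`, it drives one item's future to completion before pulling the next connection from the listener. A single client that opens a TCP connection and then goes silent would therefore stall the whole accept stream indefinitely, and nothing would be logged.

One restructuring worth trying is to accept raw `TcpStream`s in a loop and `tokio::spawn` one task per connection. That task wraps `acceptor.accept(stream)` in `tokio::time::timeout(...)` before handing the stream to `hyper::server::conn::Http::serve_connection`.

The std-only sketch below models that idea with threads. `handshake_with_timeout`, `simulated_handshake` and `accept_all` are hypothetical names, and the timeout values are arbitrary.

```rust
use std::sync::mpsc;
use std::thread;
use std::time::Duration;

/// Run `handshake` on its own thread and wait at most `timeout` for it.
/// Returns `None` if it did not finish in time. Thread-based stand-in for
/// wrapping the TLS accept future in a timeout.
fn handshake_with_timeout<T, F>(handshake: F, timeout: Duration) -> Option<T>
where
    F: FnOnce() -> T + Send + 'static,
    T: Send + 'static,
{
    let (tx, rx) = mpsc::channel();
    thread::spawn(move || {
        // The receiver is gone if we already timed out; ignore that error.
        let _ = tx.send(handshake());
    });
    rx.recv_timeout(timeout).ok()
}

/// Hypothetical peer: `Some(id)` finishes its handshake immediately,
/// `None` connects and then never sends anything.
fn simulated_handshake(peer: Option<u32>) -> u32 {
    match peer {
        Some(id) => id,
        None => loop {
            // Never completes, like a client that goes silent mid-handshake.
            thread::park();
        },
    }
}

/// Each connection gets its own waiter thread (like one task per connection)
/// with a bounded handshake, so a silent peer only costs its own slot.
fn accept_all(peers: Vec<Option<u32>>, timeout: Duration) -> Vec<u32> {
    let waiters: Vec<thread::JoinHandle<Option<u32>>> = peers
        .into_iter()
        .map(|peer| {
            thread::spawn(move || handshake_with_timeout(move || simulated_handshake(peer), timeout))
        })
        .collect();
    waiters
        .into_iter()
        .filter_map(|w| w.join().expect("waiter thread panicked"))
        .collect()
}

fn main() {
    // The `None` peer stalls. Handled one at a time without a timeout
    // (the `filter_map` pattern), peers 3 and 4 would never be served.
    let served = accept_all(vec![Some(1), None, Some(3), Some(4)], Duration::from_millis(200));
    println!("served: {:?}", served);
}
```

In async code the cleanup is better than in this thread model. When the timeout elapses, the timed-out `accept` future is dropped, and the `TcpStream` it owns is closed with it. Here, by contrast, the stalled thread is simply leaked.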

Miscellaneous Troubleshooting

Some of these points may not be entirely relevant, but I am adding them in case they help spark some ideas.

Hyper Server + TLS Setup

Note that the proxy needs to be TLS-aware in this scenario.

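```rust
// Body of the server's async fn; `config`, `services` and
// `on_request_handler` are defined elsewhere.
use std::convert::Infallible;
use std::net::SocketAddr;
use std::sync::Arc;

use futures::StreamExt; // async-closure `filter_map`
use hyper::service::{make_service_fn, service_fn};
use hyper::Server;
use log::error; // assumed: `log` + env_logger (hence RUST_LOG)
use tokio::net::TcpListener;
use tokio_rustls::TlsAcceptor;

let addr = SocketAddr::from((config.server.address, config.server.port));

let mut tls_config = rustls::ServerConfig::new(rustls::NoClientAuth::new());
tls_config.set_single_cert(...).expect("invalid key or certificate");

let tls_acceptor = TlsAcceptor::from(Arc::new(tls_config));
let arc_acceptor = Arc::new(tls_acceptor);

let mut listener = TcpListener::bind(&addr)
    .await
    .unwrap_or_else(|e| panic!("unable to bind to addr {:?}: {}", addr, e));
let incoming = listener.incoming();

// Each accepted TCP stream goes through the TLS handshake here, before hyper
// sees it. `filter_map` awaits each handshake before pulling the next connection.
let incoming = hyper::server::accept::from_stream(incoming.filter_map(|socket| async {
    match socket {
        Ok(stream) => match arc_acceptor.clone().accept(stream).await {
            Ok(val) => Some(Ok::<_, hyper::Error>(val)),
            Err(e) => {
                error!("tcp socket inner err: {}", e); // TLS handshake failed
                None
            }
        },
        Err(e) => {
            error!("tcp socket outer err: {}", e); // TCP accept failed
            None
        }
    }
}));

let http_tls_service = make_service_fn(move |_| {
    // arc clone some configuration
    async move {
        Ok::<_, Infallible>(service_fn(move |req| {
            // arc clone some configuration
            on_request_handler(req, config, services)
        }))
    }
});

let server = Server::builder(incoming).serve(http_tls_service);

// Run
if let Err(e) = server.await {
    error!("server error: {}", e);
}
```

Connections stuck in CLOSE_WAIT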

The only sign of something going wrong appears when the proxy hangs and we look at active TCP connections (`netstat -anp`): there is an abnormal number of connections stuck in `CLOSE_WAIT`.

```
tcp 367 0 182.211.74.112:443 35.173.69.86:49930 CLOSE_WAIT off (0.00/0/0)
tcp 291 0 182.211.74.112:443 138.246.253.24:45312 CLOSE_WAIT off (0.00/0/0)
tcp 367 0 182.211.74.112:443 35.173.69.86:58335 CLOSE_WAIT off (0.00/0/0)
tcp 367 0 182.211.74.112:443 52.42.49.200:58022 CLOSE_WAIT off (0.00/0/0)
tcp 367 0 182.211.74.112:443 35.173.69.86:9528 CLOSE_WAIT off (0.00/0/0)
```

In total, however, only around 120 connections are in this state, which by itself shouldn't hang the server. Note that these connections appear to be stuck on our (server) side. Their Recv-Q almost always holds 367 bytes (one entry above shows 291), and those bytes are never read.
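367 bytes is a plausible size for a TLS ClientHello. That would fit clients that sent their ClientHello and then gave up waiting for a reply, but it is an assumption.

One way to check is to look at the first bytes of such a connection in a `tcpdump` capture and test them against the TLS record header:

- content type `0x16` (handshake);
- major version `0x03`;
- a 2-byte record length;
- handshake type `0x01` (ClientHello).

`client_hello_record_len` and `is_single_client_hello` are hypothetical helpers, and the buffer in `main` is made up.

```rust
/// If `buf` starts like a TLS ClientHello, return the record length from
/// the 5-byte record header; otherwise `None`.
fn client_hello_record_len(buf: &[u8]) -> Option<usize> {
    if buf.len() < 6 {
        return None;
    }
    let is_handshake = buf[0] == 0x16; // record content type: handshake
    let is_tls_major = buf[1] == 0x03; // SSL3/TLS major version
    let is_client_hello = buf[5] == 0x01; // first handshake message type
    if is_handshake && is_tls_major && is_client_hello {
        Some(u16::from_be_bytes([buf[3], buf[4]]) as usize)
    } else {
        None
    }
}

/// True if `buf` is exactly one ClientHello record (header + body).
fn is_single_client_hello(buf: &[u8]) -> bool {
    client_hello_record_len(buf).map_or(false, |len| len + 5 == buf.len())
}

fn main() {
    // Made-up 367-byte buffer: record length 0x016A = 362, plus 5 header bytes.
    let mut buf = vec![0u8; 367];
    buf[..6].copy_from_slice(&[0x16, 0x03, 0x01, 0x01, 0x6A, 0x01]);
    println!("{:?} {}", client_hello_record_len(&buf), is_single_client_hello(&buf));
}
```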

After seeing this happen a couple of times, I have noticed that the `CLOSE_WAIT` connections come primarily from the same set of remote clients. This leads me to suspect that those clients close connections abruptly, and that this somehow triggers the problem. If there are any recommendations on how to systematically reduce this, I'm open to ideas.
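To check systematically whether the same peers keep showing up, the `netstat` output can be grouped by remote address. This is a minimal sketch and makes two assumptions:

- The column layout is the one in the lines above: Proto, Recv-Q, Send-Q, Local Address, Foreign Address, State, then anything else.
- `close_wait_by_peer` and `PeerStats` are hypothetical names.

```rust
use std::collections::BTreeMap;

#[derive(Debug, Default, PartialEq)]
struct PeerStats {
    connections: usize,
    unread_bytes: u64, // summed Recv-Q
}

/// Count CLOSE_WAIT sockets and their unread bytes per remote IP.
fn close_wait_by_peer(netstat_output: &str) -> BTreeMap<String, PeerStats> {
    let mut stats: BTreeMap<String, PeerStats> = BTreeMap::new();
    for line in netstat_output.lines() {
        let fields: Vec<&str> = line.split_whitespace().collect();
        // Expected: proto recv-q send-q local foreign state ...
        if fields.len() < 6 || !fields[0].starts_with("tcp") || fields[5] != "CLOSE_WAIT" {
            continue;
        }
        let recv_q: u64 = fields[1].parse().unwrap_or(0);
        // Strip the port from "ip:port".
        let peer_ip = fields[4].rsplit_once(':').map_or(fields[4], |(ip, _port)| ip);
        let entry = stats.entry(peer_ip.to_string()).or_default();
        entry.connections += 1;
        entry.unread_bytes += recv_q;
    }
    stats
}

fn main() {
    let sample = "
        tcp 367 0 182.211.74.112:443 35.173.69.86:49930 CLOSE_WAIT off (0.00/0/0)
        tcp 291 0 182.211.74.112:443 138.246.253.24:45312 CLOSE_WAIT off (0.00/0/0)
        tcp 367 0 182.211.74.112:443 35.173.69.86:58335 CLOSE_WAIT off (0.00/0/0)
        tcp 367 0 182.211.74.112:443 52.42.49.200:58022 CLOSE_WAIT off (0.00/0/0)
        tcp 367 0 182.211.74.112:443 35.173.69.86:9528 CLOSE_WAIT off (0.00/0/0)";
    let mut peers: Vec<_> = close_wait_by_peer(sample).into_iter().collect();
    // Busiest peers first.
    peers.sort_by(|a, b| b.1.connections.cmp(&a.1.connections));
    for (ip, s) in peers {
        println!("{:<16} {} conns, {} unread bytes", ip, s.connections, s.unread_bytes);
    }
}
```

Running this on snapshots taken at different times would show whether the count climbs gradually or jumps when the hang starts.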

It may also be important to note that a previous version of the proxy did not experience similar issues on this same server. The proxy is also accompanied by a nameserver module written in Go, which keeps running even after the proxy hangs. Any pointers would be much appreciated.
