- Return relevant information for a prompt using the OP (OpenAI + Pinecone) stack

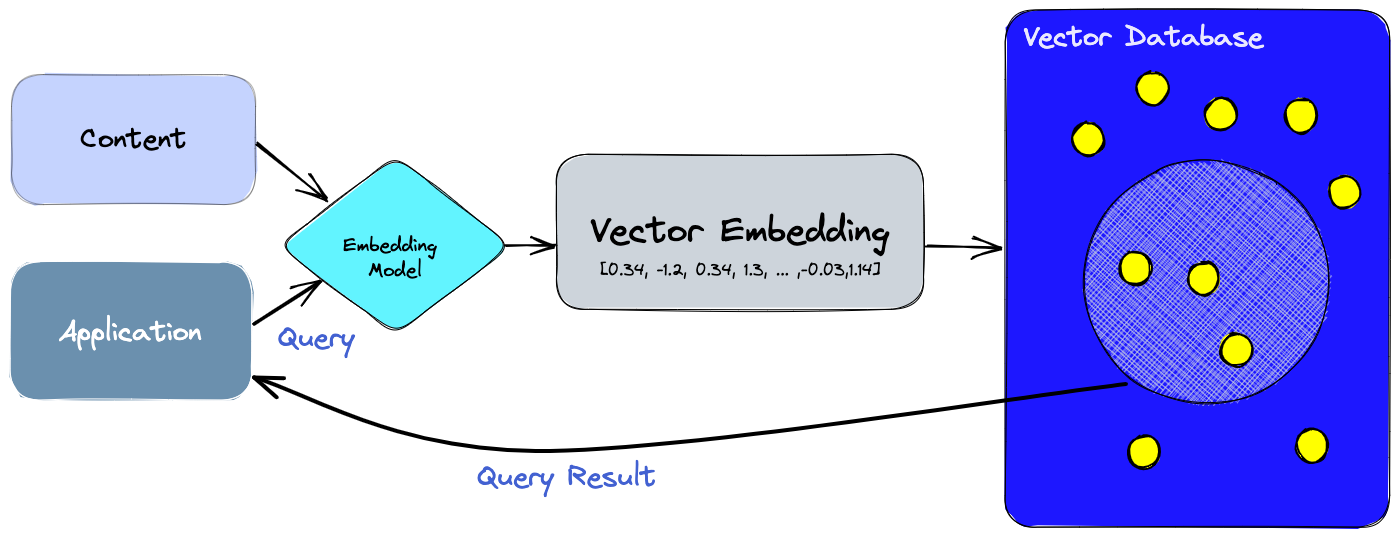

- Vector-queryable database with semantic search

- Use the Sentence Transformers library to generate embeddings

- Use the Pinecone database library to store and search embeddings

- Insert the top-k query results as context for the GPT-4 model

- Extract relevant information using chat completion

- Return the top result and the GPT response

- Fine-tune the GPT davinci model on policy manuals

- Use standard prompting to ask questions of the fine-tuned model

- React Native application

- Backend Flask server

- Integrate React and Flask

- Use Render for web hosting
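
The retrieval steps listed above (embed the text, search for the closest matches, insert the top-k results as context for a chat completion) can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: it assumes a toy word-count embedding in place of Sentence Transformers and a plain in-memory search in place of Pinecone, and it stops at building the chat messages rather than calling GPT-4. The function names (`embed`, `top_k`, `build_messages`) and the sample passages are hypothetical.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for a real embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query, passages, k=2):
    """In-memory stand-in for a vector database query: rank by similarity."""
    q = embed(query)
    ranked = sorted(passages, key=lambda p: cosine(q, embed(p)), reverse=True)
    return ranked[:k]


def build_messages(query, context):
    """Assemble chat-style messages with the retrieved passages as context."""
    joined = "\n".join(f"- {c}" for c in context)
    return [
        {"role": "system", "content": "Answer using only the context provided."},
        {"role": "user", "content": f"Context:\n{joined}\n\nQuestion: {query}"},
    ]


# Hypothetical sample data standing in for policy-manual passages.
passages = [
    "Employees accrue vacation days monthly.",
    "Expense reports are due within 30 days.",
    "Remote work requires manager approval.",
]
query = "When are expense reports due?"
context = top_k(query, passages, k=2)
messages = build_messages(query, context)
```

In a real deployment, `embed` would call the embedding model, `top_k` would query the vector index, and `messages` would be sent to the chat completion endpoint; the top retrieved passage and the model's answer would then be returned together.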