Replacing fileIO exceptions with error codes#4841

Conversation

|

✅ Build DirectXShaderCompiler 1.0.2339 completed (commit a1422abc60 by @harshpg) |

llvm-beanz

left a comment

llvm-beanz

left a comment

There was a problem hiding this comment.

Thank you @harshpg!

This is an awesome change. I have a general dislike for exceptions myself especially as an error reporting pattern.

Before we can merge this PR, you'll need to agree to the Microsoft CLA. The bot that commented in the PR has the text of the CLA in the comment and instructions for how to agree to the CLA.

Thanks!

|

Looks good, though I'm curious about the performance impact mentioned. Is this because ReadBinaryFile and WriteBinaryFile are being called directly from managed code, and exceptions are not handled in the code being called before crossing the managed code boundary? I ask because there are lot of other places where exceptions are used to indicate failure in this codebase, and I expect these should not cause performance problems when the API being called catches and handles the exceptions thrown (translating exceptions to |

|

Thank you for approving the changes! Yes, I also prefer to use error codes instead of exceptions whenever possible. I have requested approval from my company to be able to sign the license. Sorry about the delay. |

|

Thanks for approving the changes! Essentially, I made a DLL that interfaces with the DXC library to compile shaders. When compiling shaders, I pass in several include directories using -I, and the compiler searches for include files. In addition, I am compiling shaders using multiple threads so there were a ton of exceptions begin thrown from ReadBinaryFile. Once I added the above changes, I did not observe any other exception messages or performance drops so I don't have reason to believe exceptions in other parts of the code base are causing issues. |

I don’t know if @harshpg has profiled, but in general an exception throw is going to be at least an order of magnitude slower than returning an int. In most ABIs returning an int will result in a register passed result, and RAII cleanup at each level of the stack, but unlike exception throwing there is no stack unwinding. Even unwinding a stack one level is likely an order of magnitude slower than a return instruction, and it can’t be optimized by the compiler. Calls can be inlined, but the stack unwind can’t. I’ve certainly observed DXC’s file APIs throwing exceptions for recoverable and sometimes expected error propagation. Getting away from that likely has a measurable impact on performance. |

|

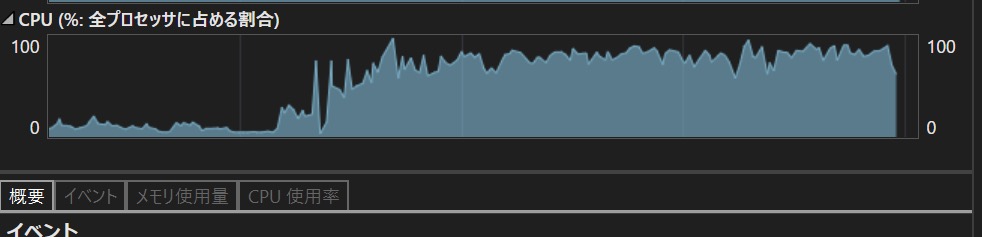

I didn't profile the code directly, but I looked at the general execution time to compile all shaders as well as CPU usage. Before, the CPU usage would spike and drop due to the exceptions being thrown. After changing the logic, the spikes were gone and there was a consistent 90%+ utilization. Due to the spikes and exception handling, compilation was really slow and probably would have taken at least 2 hours to finish. After my changes, compilation takes about 5 - 6 minutes. |

|

I reviewed my changes again, and noticed one mistake I made:

This should be:

Sorry about the issue. |

|

✅ Build DirectXShaderCompiler 1.0.2354 completed (commit 397e448a8a by @harshpg) |

|

@microsoft-github-policy-service agree company="PlatinumGames Inc." |

Every exception throw more or less results in worst-case cache misses. Most modern "zero-cost" exception models rely on looking up call stack entries in side tables. Those side tables are big, and pretty much always cold data. The drop in CPU utilization is likely the result of paging in the exception side tables as the unwinder tries to figure out how far to unwind the stack and which cleanup code to run.

The before and after here is more or less consistent with what I'd expect removing exceptions. Non-exception error handling is just returning an integer up the stack. That should just be a register-return value which is super fast. One of the other drawbacks of exception models (even "zero-cost" exceptions) is that they create boundaries the compiler can't optimize across. Eliminating the exceptions from the code should allow more aggressive inlining and other optimizations.

That's an awesome speedup! Is there any chance you can share (publicly or privately) more information about your shader(s) or the shader code itself? It would be very interesting for us to analyze the performance and see if there are other things we can do to help. Overall, this seems like a big win. Thank you so much for posting it! I'm going to run a couple tests with this change against some local testing changes I have and I'll aim to merge it in a few hours. |

|

Thank you! I have also attached the before and after CPU utilization images. Unfortunately, I can't publicly share any details, but I can ask my supervisor tomorrow if it would be possible to share more in private. Before After |

|

On a side note, I would be interested in seeing how much this change affects stutters in games that compile shaders at runtime. |

I don't know that we have a good way to measure that. A lot of games that ship shader source preprocess it first, so they are shipping a single header or they use a custom include handler to avoid disk IO performance. I would expect this change to have minimal impact on those usage patterns. |

|

Ah, yes that makes sense. Since we are on the topic of optimizations, I wonder how much of a gain can be made by using the likely / unlikely compiler attributes added in C++ 20. Also, thank you for merging the changes. |

The exceptions thrown by ReadBinaryFile and WriteBinaryFile give a noticeable performance impact, especially when being called by managed code which automatically tracks and logs exceptions called from native code. The exception logic can be replaced by error code return values. Adding this change greatly improved performance when calling the DXC API directly since exceptions were being thrown and reported all over the place while looking for include files.