
Closed

Description

Describe the bug

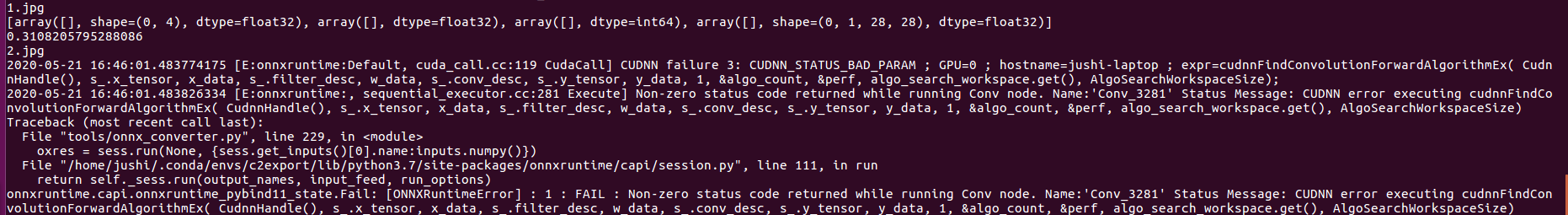

I trained a Mask R-CNN model and converted it to ONNX. When I run inference on the test images, it gives the correct output. But if I first run inference on a white image (all pixels 255) and then run inference on the test images, the test images are no longer recognized correctly with onnxruntime-gpu 1.3.0.

With onnxruntime 1.3.0 (the CPU package), this does not happen.

System information

- OS Platform and Distribution: Windows 10 (C++) and Linux Ubuntu 16.04 (Python 3.7)
- ONNX Runtime installed from: binary (pip install)
- ONNX Runtime version: 1.3.0
- Python version: 3.7
- Visual Studio version (if applicable): 2017
- GCC/Compiler version (if compiling from source): not applicable, installed from binary
- CUDA/cuDNN version: CUDA 10.1 and cuDNN 7.6.5
- GPU model and memory: 2080 Ti, 11 GB

To Reproduce

- Describe steps/code to reproduce the behavior.

- Attach the ONNX model to the issue (where applicable) to expedite investigation.

The ONNX model and images are here: https://drive.google.com/open?id=1c7GDq8AYgneP6mdqFPSH1GKl7Oggn8Mq

Run inference on a white image (all 255) first, then run inference on the test images.
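If the white image file is not at hand, an equivalent input can be built in memory. The following is a minimal sketch, not part of the original report: the helper name `make_white_image` and the default 800x800 size are assumptions chosen to match the NCHW float32 layout that the repro script feeds to the session.

```python
import numpy as np


def make_white_image(height=800, width=800):
    """Return an all-255 float32 tensor in NCHW layout (batch of one, 3 channels).

    The 800x800 default is an assumption; the size of the white image
    used in the report is not stated.
    """
    return np.full((1, 3, height, width), 255.0, dtype=np.float32)


white = make_white_image()
print(white.shape, white.dtype)
```

The resulting array can be passed to `sess.run` in place of `inputs.numpy()` in the script below.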

    import os

    import cv2
    import onnxruntime
    import torch  # 1.5.0
    import detectron2.data.transforms as T  # only used for resizing

    filelist = os.listdir('./')
    sess = onnxruntime.InferenceSession('./segmentation.onnx')
    # Resize so the shortest edge is 800 px and the longest edge is at most 1333 px
    transform_gen = T.ResizeShortestEdge([800, 800], 1333)

    for i in filelist:
        inputs = cv2.imread('./' + i)
        if inputs is None:  # skip files OpenCV cannot read as images
            continue
        inputs = transform_gen.get_transform(inputs).apply_image(inputs)
        # HWC -> CHW float32, then add a batch dimension
        inputs = torch.as_tensor(inputs.astype('float32').transpose(2, 0, 1))
        inputs = inputs.unsqueeze(0)
        res = sess.run(None, {sess.get_inputs()[0].name: inputs.numpy()})
        print(res)
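To check the order dependence programmatically rather than by reading printed outputs, one option is to run the same test image before and after the white image and compare the results. This is only a sketch under assumptions: `outputs_match` and `is_order_dependent` are hypothetical helper names, the tolerances are arbitrary, and `run` stands for any callable that maps an input array to a list of output arrays.

```python
import numpy as np


def outputs_match(before, after, rtol=1e-3, atol=1e-3):
    """Compare two lists of output arrays element by element."""
    if len(before) != len(after):
        return False
    for a, b in zip(before, after):
        a, b = np.asarray(a), np.asarray(b)
        # Detection outputs can change shape when the number of instances differs
        if a.shape != b.shape:
            return False
        if not np.allclose(a, b, rtol=rtol, atol=atol):
            return False
    return True


def is_order_dependent(run, test_input, white_input):
    """Return True if running white_input changes the result for test_input."""
    baseline = run(test_input)   # first pass on the test image
    run(white_input)             # the all-255 image from the report
    repeat = run(test_input)     # same test image again
    return not outputs_match(baseline, repeat)
```

With the session from the script above, `run` could be `lambda x: sess.run(None, {sess.get_inputs()[0].name: x})`. Calling `is_order_dependent` once with a session from the onnxruntime-gpu package and once with one from the onnxruntime package would show whether only the GPU build is affected.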

Screenshots

If applicable, add screenshots to help explain your problem.
