Gemm/Transpose fusion - additional pattern coverage #8941

Merged

ashbhandare reviewed on Sep 2, 2021

ashbhandare reviewed on Sep 3, 2021 (4 reviews)

ashbhandare previously approved these changes on Sep 3, 2021

ashbhandare approved these changes on Sep 3, 2021


Description: In the final optimized training graph of the ULRv5 model, one Transpose/Gemm pattern was not fused. This change adds logic to cover the case where a Transpose output is consumed by multiple Gemm nodes.

The new pattern only considers the case where all consumers of a Transpose are Gemm nodes. If any consumer is not a Gemm node, the fusion is skipped.
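The rule above can be sketched on a toy graph. This is only an illustration in Python, not the code from this PR: the `Node` class and `fuse_transpose_into_gemms` function are hypothetical. It assumes a 2-D Transpose with `perm = [1, 0]`, that the Transpose output is not itself a graph output, and that only the A and B inputs of a Gemm can absorb a transpose by flipping `transA` / `transB`.

```python
from dataclasses import dataclass, field


@dataclass(eq=False)  # compare nodes by identity, not by field values
class Node:
    """Toy graph node; a hypothetical stand-in, not an ONNX Runtime type."""
    op_type: str
    inputs: list
    output: str
    attrs: dict = field(default_factory=dict)


def fuse_transpose_into_gemms(nodes):
    """Fold a Transpose into its consumers only when all of them are Gemm nodes."""
    result = list(nodes)
    for t in nodes:
        # Assumption: only a 2-D transpose (perm == [1, 0]) is a candidate.
        if t.op_type != "Transpose" or t.attrs.get("perm") != [1, 0]:
            continue
        consumers = [n for n in result if t.output in n.inputs]
        # Skip when there is no consumer, or when any consumer is not a Gemm.
        if not consumers or any(n.op_type != "Gemm" for n in consumers):
            continue
        # Assumption: a transpose feeding the C input (index 2) cannot be folded.
        if any(t.output in n.inputs[2:] for n in consumers):
            continue
        for gemm in consumers:
            for idx, flag in ((0, "transA"), (1, "transB")):
                if gemm.inputs[idx] == t.output:
                    # Read the untransposed tensor and flip the matching flag.
                    gemm.inputs[idx] = t.inputs[0]
                    gemm.attrs[flag] = 1 - gemm.attrs.get(flag, 0)
        result.remove(t)
    return result


# One Transpose feeding two Gemm nodes: both absorb it and the Transpose goes away.
graph = [
    Node("Transpose", ["W"], "W_t", {"perm": [1, 0]}),
    Node("Gemm", ["X", "W_t"], "Y1"),
    Node("Gemm", ["W_t", "Z"], "Y2", {"transA": 1}),
]
fused = fuse_transpose_into_gemms(graph)
print([n.op_type for n in fused])  # ['Gemm', 'Gemm']
```

In this sketch, a Gemm that already has `transA = 1` ends up with `transA = 0`, because the two transposes cancel. If any consumer were, say, a Relu, the function would leave the graph untouched.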

Example:

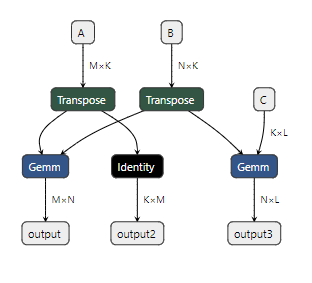

Before:

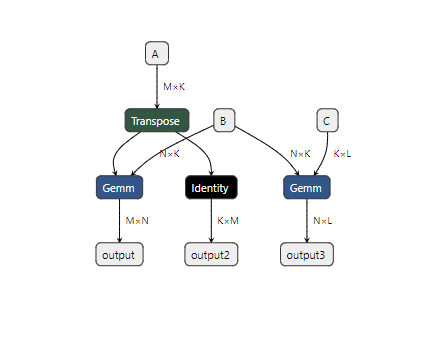

Expected results after transformation:

Motivation and Context