Change default for git.addAICoAuthor to off #313931


Co-authored-by: Copilot <copilot@github.com>

📬 CODENOTIFY: The following users are being notified based on files changed in this PR: @lszomoru. Matched files:

|

Screenshot ChangesBase: Changed (28)blocks-ci screenshots changedReplace the contents of Updated blocks-ci-screenshots.md<!-- auto-generated by CI — do not edit manually -->

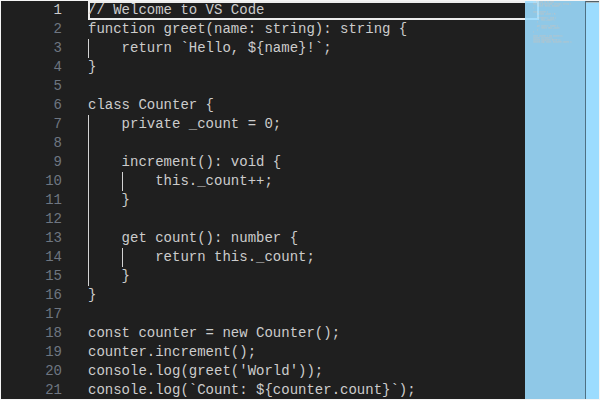

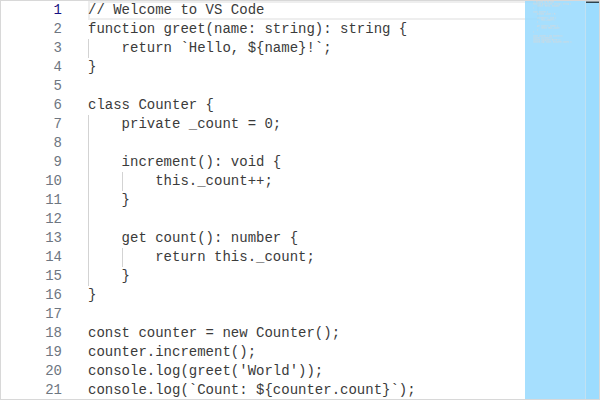

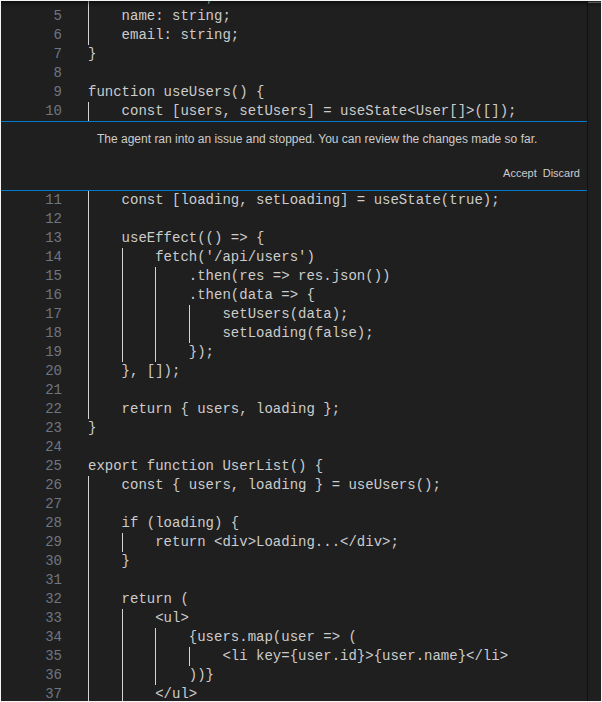

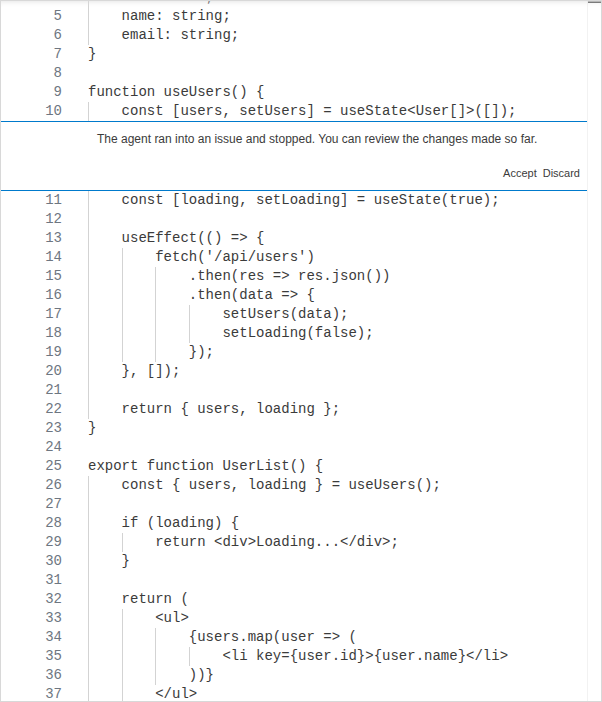

#### editor/codeEditor/CodeEditor/Dark

#### editor/codeEditor/CodeEditor/Light

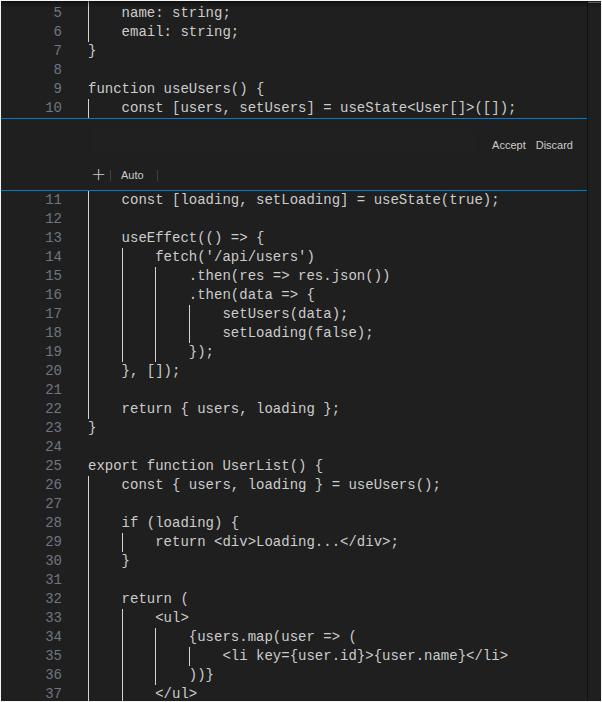

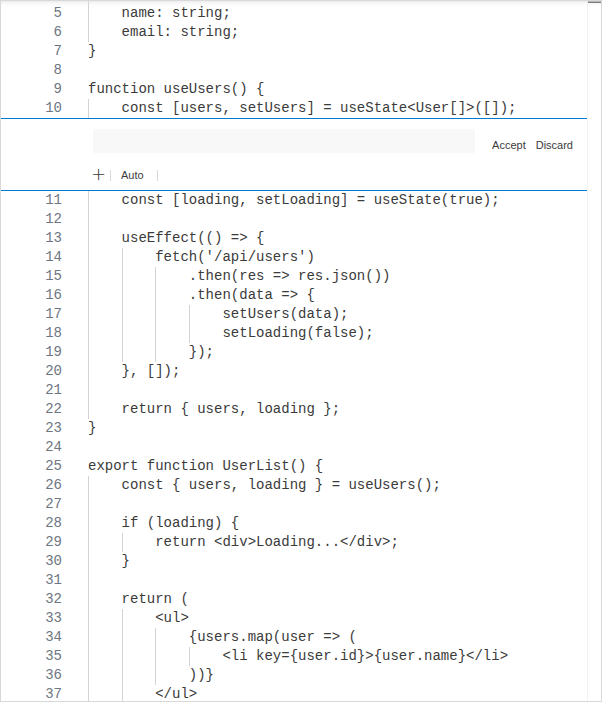

#### editor/inlineChatZoneWidget/InlineChatZoneWidget/Dark

#### editor/inlineChatZoneWidget/InlineChatZoneWidget/Light

#### editor/inlineChatZoneWidget/InlineChatZoneWidgetTerminated/Dark

#### editor/inlineChatZoneWidget/InlineChatZoneWidgetTerminated/Light

|

|

Thanks for listening to the feedback! |


Pull request overview

This PR changes Git’s AI co-author behavior to be opt-in by default and ensures AI contribution tracking does not run when built-in AI features are disabled.

Changes:

- Set the default for `git.addAICoAuthor` to `"off"` (schema + runtime default).
- Prevent AI contribution tracking (`AiContributionFeature`) from activating when AI features are disabled/hidden.
- Skip adding the AI co-author trailer when `chat.disableAIFeatures` is enabled.
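
As a rough illustration of the first and third bullets, the decision could be sketched as below. This is not the Git extension's actual code: `CommitContext`, `shouldAddCoAuthor`, `withCoAuthorTrailer`, and the `hasTrackedAIChanges` flag are hypothetical names, and treating every value other than `"off"` the same way is an assumption made for brevity. Only the setting names, the `"off"` default, and the trailer text come from this PR.

```typescript
// Sketch only: all names below are illustrative, not the Git extension's real code.
const CO_AUTHOR_TRAILER = "Co-authored-by: Copilot <copilot@github.com>";

interface CommitContext {
  /** Value of git.addAICoAuthor; undefined when the user has not set it. */
  addAICoAuthor?: string;
  /** Value of chat.disableAIFeatures. */
  disableAIFeatures: boolean;
  /** Hypothetical flag: did change tracking attribute any edits to AI? */
  hasTrackedAIChanges: boolean;
}

function shouldAddCoAuthor(ctx: CommitContext): boolean {
  // Runtime fallback mirrors the new schema default: unset means "off".
  const mode = ctx.addAICoAuthor ?? "off";
  if (mode === "off") {
    return false;
  }
  // Never attribute when AI features are disabled as a whole.
  if (ctx.disableAIFeatures) {
    return false;
  }
  // Assumption: every non-"off" mode still requires tracked AI changes.
  return ctx.hasTrackedAIChanges;
}

function withCoAuthorTrailer(message: string, ctx: CommitContext): string {
  if (!shouldAddCoAuthor(ctx) || message.indexOf(CO_AUTHOR_TRAILER) !== -1) {
    return message;
  }
  // Simplification: assumes the message has no existing trailer block.
  return `${message.replace(/\s+$/, "")}\n\n${CO_AUTHOR_TRAILER}`;
}
```

With this shape, a commit made with the setting left unset comes back unchanged, and so does one made with the setting on while `chat.disableAIFeatures` is enabled.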

Show a summary per file

| File | Description |
|---|---|
| `src/vs/workbench/contrib/editTelemetry/browser/editTelemetryContribution.ts` | Gates `AiContributionFeature` activation behind the entitlement “hidden” state (covers `chat.disableAIFeatures`). |
| `extensions/git/src/repository.ts` | Changes default `addAICoAuthor` fallback to `"off"` and avoids appending the trailer when AI features are disabled. |
| `extensions/git/package.json` | Updates the configuration schema default for `git.addAICoAuthor` to `"off"`. |
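
The activation gating described for `editTelemetryContribution.ts` might look something like the following minimal sketch. It does not use VS Code's real observable or entitlement APIs; `GatedFeature`, `Disposable`, and the `aiHidden` parameter are hypothetical stand-ins for "create the tracking feature only while AI features are not hidden, and tear it down when they become hidden".

```typescript
// Sketch only: hypothetical types, not VS Code's actual workbench APIs.
interface Disposable {
  dispose(): void;
}

class GatedFeature<T extends Disposable> {
  private instance: T | undefined;

  constructor(private readonly create: () => T) {}

  /** Call whenever the (assumed) "AI features hidden" state changes. */
  update(aiHidden: boolean): void {
    if (aiHidden) {
      // Tear down tracking so nothing is recorded while AI is disabled.
      this.instance?.dispose();
      this.instance = undefined;
    } else if (this.instance === undefined) {
      // Lazily create the feature the first time AI becomes visible.
      this.instance = this.create();
    }
  }

  get active(): boolean {
    return this.instance !== undefined;
  }
}
```

The point of the wrapper is that the tracked feature is never constructed while the hidden state is true, so change tracking has no chance to run in the background.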

Copilot's findings

- Files reviewed: 3/3 changed files

- Comments generated: 0

|

I would not mind it defaulting to on if it actually worked: not only for Copilot, reliably listing the actual model versions used, and differentiating between agentic creation and input completion. Being able to reference a session log would help with logging the evidence needed to get indemnification protection. And a commit message preview for the human. |

Agreed, model attribution is really needed here, as well as making sure it doesn't catch non-AI changes. |

|

Thank you! Saw your post on hackernews as well. Appreciate the honesty here. |

|

Does this still add the attribution if copilot was active, but not used? Does it still do it silently? |

|

Could we consider completely reverting this default back to |

|

no shot no one at microsoft told you this is a terrible idea XD |

That is exactly what this change is doing - default back to |

With this change, the default behavior is that there will be no attribution, whether Copilot was used or not. So by default it works as if this feature does not exist at all. When the setting is on, it will add attribution depending on the setting, and there is more work to do to make sure it works correctly when the setting is 'all' and to make it more visible to the user. |

Big Tech companies are so high on their supply that they'll push obviously awful changes just to please the investors. The worst part isn't that someone didn't think this was a terrible idea, the worst part is that the execs didn't listen to them. |

|

Can we expect more silent and invasive changes in the near future? Were there any similar recent improvements forced by management? I think this was the final straw for most users. Good job, everybody involved. Zed it is. |

|

I genuinely wonder. Why are you all using VSCode at all? Most modern editors have less than maybe 2 days of learning curve. How hot does the water need to be before you decide to jump out? |

You can't just say "most modern editors", and then continue on to not specify a single one lmao. If you want people to move off VSCode, you gotta give them a hint of what to move to. |

Zed. Open-source, Rust, no Electron. |

|

I've got mixed feelings about this PR. On one hand I'm glad that this got so much traction that micro$lop actually decided to revert this commit. On the other hand this is just going to happen again. They're going to try to force AI down our throat over and over again. Many such commits are probably going to remain unnoticed. I'm sick and tired of all this AI garbage. Can't wait for the bubble to pop. |

|

Your comment makes no sense. A functional version of this feature would make it transparent who uses LLMs for code generation, which is a good thing for everyone who isn’t a blind AI believer. The issue was that they released it undercooked, so it 1. misattributed changes to Copilot that weren’t made by Copilot and 2. flagged commits as “co-authored” by LLMs that only used minimal autocompletion. If you didn’t use LLMs at all, you didn’t run into this bug. |

|

Microslop strikes again! |

|

I love how the commit is also co-authored by copilot 😄 |

My reasoning being that this is so poorly executed that I think it's not beyond plausible that this is malice. Just so that micro$lop can claim "oh look at us, so many people are using copepilot to write parts of all their commits! It makes people so productive, use it now". I also find it hard to believe that neither testing nor the review caught this "bug". The commit changed six characters. Micro$lop just recently acted like they listened to the copepilot hate and now they're back at it again as if that never happened. Edit: clarification and addition |

|

you microslop nitwits should be ashamed of yourselves |

|

to add constructive feedback: how would this even detect copilot changes vs codex or claude code changes vs just having copilot active or clicking the "generate commit message" button? accurately tracking which exact changes were done in which exact way might be a useful feature to opt in to. but having it depend on just some flag does not seem correct in any way. |

Also making sure change tracking is disabled when AI features are disabled.

|

I think the default should definitely be for it to correctly track llm generated changes. The problem here was that it was sweeping up everyone even those not using llms to write their code. But if you ostensibly care about transparency, you should absolutely let your users know you're using copilot or whatever else. Why hide from it or make excuses about 'just auto-completing what I 100% was gonna do anyway'? |

|

They massacred my boy |

Of course, these people have no brains. Can't even do this without pushing their crap spyware... |

|

Can the VSCode dev team explain why the product manager, who almost never opens PRs, opened this one and got it merged quickly without testing? I don't know if I can trust VSCode as a Microsoft product anymore, but I could never trust it without the assurance that this will never happen again. |

|

I would ask the same question as @Dennis4720. I think there need to be some serious questions about why a product manager and not a developer is producing pull requests/writing code, not to mention some accountability. Both for them and the individual who approved and merged their changes. This would go a long way to rebuilding the trust that has been lost over this incident. I don't think this was a change made maliciously, contrary to what a lot of others are saying. Malice implies that the two individuals who wrote and approved the code acted with intent to cause harm. That's an extremely high threshold. I think this was, at worst, negligence. Gross negligence, probably. Between this and the other tickets that I've both seen and raised (#309947, #290825, #288397, and #264166) there appears to be a sharp drop in the quality of AI-related pull requests in VSCode. Bugs are routine and getting increasingly severe. This is something that should be addressed. Sooner rather than later for the health of the product. |

|

AI's mere existence has drastically degraded the brains of tech bros. Pushes this crap with zero discussion or thought, and uses AI to review a single line edit. They literally can't do anything themselves anymore... Even if this worked "correctly" the first time, why would you force-attribute any AI code specifically to Copilot anyway? There are a lot of slop agents out there that the code could be from. |

|

My use of Sublime text feels more and more validated every day. |

|

Neovim is worth the learning curve |

|

@dmitrivMS being a pompous ass isn't helping your misguided attempts at brown-nosing your boss. |

Totally agreed! Copilot should give the attribution for the project it stole the code from for the commit. Anything else would be absurd since an AI can't legally be the author of anything. |

I agree with this. The most charitable interpretation here would be that Microsoft wanted to ensure that anything assisted by AI is appropriately flagged. In a perfect world, that would be an unavoidable watermark in some fashion so that reviewers, managers, and public onlookers can make informed decisions. In reality, a trailing note in the commit message is about the best we can hope for. This original PR does not sufficiently accomplish that, nor does this one. The pessimistic interpretation is that someone has some copilot usage metrics to hit and there was not adequate (or any) consideration for the side effects or implications. That is why people are so upset. |

|

Just plain |

|

switching to pen and paper pretty sure Bic won't claim co authorship |

|

Constructive feedback: This situation should have never happened. Pull request should have never been created, should have never been approved, should have never been merged, and should have never been released. I was already annoyed at the switch to weekly releases. That is playing too fast and loose for what is an essential piece of software for many developers. A supposed benefit of fast releases is that issues get fixed quicker, but the downside is that undoubtedly less time is spent reviewing changes. On the code review side of things, I am not sure if this was actually rubber stamped or just appeared as such. It is very evident that not enough time was given for this, and this is a breakdown in the software engineering process going on here. I ask that you please go back to monthly stable releases, both to allow more review time, and prevent issues from making it to production so quickly. It does not really matter numbers-wise if the chances of regression are low if the impact of a single fault is far larger. I love VS Code. Been using it for a very long time (since at least 2018), previously using Atom. Seeing the quality of it diminish actually does hurt. |

|

Microslop's insistence on forcing Copilot into every single piece of software they make, user consent be damned, has broken my trust in them a long time ago, yet this is somehow a new low. Last year I uninstalled VS and VS Code. I've had a lot of issues with Linux, but I don't see any world in which I will use Windows 11 once I need to switch from Windows 10. At this point, I don't trust your promises to fix Windows 11. Your software is actively repulsive to me. |

|

I've been an EMACS enjoyer for most of my career. I have never thought I'd side with Vim people, but to all the young programmers - I don't give a crap if you'd use Vim, Sublime, EMACS or whatever the hell, just do NOT use this bloated garbage. MACROslop is just marcroSLOP-ing, don't use their products and don't depend on their crap "products", it's that simple. |

|

Closing comments on this to prevent further spam. |

|

FYI - we have posted an update on the subject with more details and analysis here: |
