Masking model key, fix CUDA model config transfer problem #16

Merged

pymumu merged 1 commit into modelbox-ai:main on Dec 30, 2021


Updated from 0790cdf to e8e9f1f.

pymumu reviewed on Dec 25, 2021

```cpp
auto drivers_ptr = GetBindDevice()->GetDeviceManager()->GetDrivers();
ModelDecryption engine_decrypt;
engine_decrypt.Init(params_.engine, drivers_ptr, config);
```

pymumu reviewed on Dec 25, 2021

```cpp
std::shared_ptr<uint8_t> modelBuf =
    engine_decrypt.GetModelSharedBuffer(model_len);
if (modelBuf == nullptr) {
  auto err_msg = "modelBuf is empty, the model file " + params_.engine;
```

pymumu reviewed on Dec 25, 2021

```cpp
if (modelBuf == nullptr) {
  auto err_msg = "modelBuf is empty, the model file " + params_.engine;
  MBLOG_ERROR << err_msg;
  return {modelbox::STATUS_FAULT, err_msg};
```
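The excerpt receives the decrypted model as a `std::shared_ptr<uint8_t>` and treats a null pointer as a fault. How `ModelDecryption` allocates that buffer is not shown, so the following is only a minimal sketch under the assumption that it is a heap array. It shows why such a pointer needs an explicit array deleter and how the null check and error message fit together. `MakeSharedBuffer`, `LoadModel`, and the `Status` struct are hypothetical stand-ins, not ModelBox APIs.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <memory>
#include <string>

// Hypothetical status type standing in for modelbox::Status in this sketch.
struct Status {
  bool ok;
  std::string message;
};

// Hypothetical loader: copies `len` bytes into a newly allocated buffer.
// std::shared_ptr<uint8_t> does not know it owns an array, so an explicit
// array deleter is required; the default `delete` on new[] memory is UB.
std::shared_ptr<uint8_t> MakeSharedBuffer(const uint8_t* src, std::size_t len) {
  if (src == nullptr || len == 0) {
    return nullptr;  // the caller must check, as the excerpt does
  }
  std::shared_ptr<uint8_t> buf(new uint8_t[len],
                               std::default_delete<uint8_t[]>());
  std::memcpy(buf.get(), src, len);
  return buf;
}

Status LoadModel(const uint8_t* src, std::size_t len, const std::string& path) {
  std::shared_ptr<uint8_t> model_buf = MakeSharedBuffer(src, len);
  if (model_buf == nullptr) {
    // Build the message once so a log line and the returned status agree.
    std::string err_msg = "model buffer is empty, model file: " + path;
    return {false, err_msg};
  }
  // In real code, model_buf.get() and len would go to the deserializer here.
  return {true, ""};
}
```

With C++17 or later, `std::shared_ptr<uint8_t[]>` expresses the array ownership in the type itself and makes the custom deleter unnecessary.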

pymumu reviewed on Dec 25, 2021

```cpp
}
engine_ = TensorRTInferObject(infer->deserializeCudaEngine(
    modelBuf.get(), model_len, plugin_factory_.get()));
} else if (engine_decrypt.GetModelState() ==
```

pymumu reviewed on Dec 25, 2021

```cpp
file.read(trtModelStream.data(), size);
file.close();

engine_ = TensorRTInferObject(infer->deserializeCudaEngine(
```

Contributor: Have the engine load the model file directly here instead of copying it through an intermediate buffer. The extra copy uses too much memory.

Contributor (Author): The EngineToModel method was already loading the model from memory before this change.
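The disagreement is about how many times the serialized engine is copied before deserialization. Here is a minimal sketch that reads the file straight into one correctly sized buffer, so the bytes are copied from disk once. `ReadWholeFile` is a hypothetical helper, not part of ModelBox. The sketch assumes that passing `buffer.data()` and `buffer.size()` to the deserializer matches the pointer-and-length `deserializeCudaEngine(...)` call in the excerpts.

```cpp
#include <cstddef>
#include <fstream>
#include <string>
#include <vector>

// Hypothetical helper: reads the whole file into a single buffer sized up
// front, so the data is copied from disk exactly once (no stringstream or
// temporary vector in between). Returns an empty vector on any failure.
std::vector<char> ReadWholeFile(const std::string& path) {
  // Open positioned at the end so tellg() reports the file size.
  std::ifstream file(path, std::ios::binary | std::ios::ate);
  if (!file) {
    return {};
  }
  const std::streamsize size = file.tellg();
  if (size <= 0) {
    return {};  // empty file or tellg() failure
  }
  file.seekg(0, std::ios::beg);
  std::vector<char> buffer(static_cast<std::size_t>(size));
  if (!file.read(buffer.data(), size)) {
    return {};
  }
  return buffer;  // moved (or elided) out, not copied
}
```

Because the vector is returned by value, it is moved or elided rather than copied, so the read itself is the only full copy of the model bytes.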

pymumu reviewed on Dec 25, 2021

```cpp
modelbox::Status PrePareInput(std::shared_ptr<modelbox::DataContext>& data_ctx,
                              std::vector<void*>& memory);
modelbox::Status PrePareOutput(
    std::shared_ptr<modelbox::DataContext>& data_ctx,
```

Contributor

There was a problem hiding this comment.

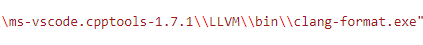

这些格式,确认下是否用的我们标准的clangformat

Contributor: Format it on Linux using the default VS Code configuration, without specifying any clang-format style file.

