Feature: Services #224

Todos:

Should the memory and Jupyter server(s) be services? It would increase consistency and would make for a nice, self-documenting feature, since "services" in the pipeline settings would already be populated with some entries. I am aware there might be some technical difficulties in making both of them into services, but it's worth reasoning about.

Assuming those are to be secrets (non-versioned), the naive solution would be to have services inherit the pipeline environment variables, but we run the risk of collisions, secrets leaking into services that do not need them, etc. The way services are currently declared might be at odds with what we want to do. We could use a slightly higher-level input form instead of having the user write JSON in CodeMirror, and take care behind the scenes of what should go in the pipeline definition JSON and what should be kept secret and written to the database.

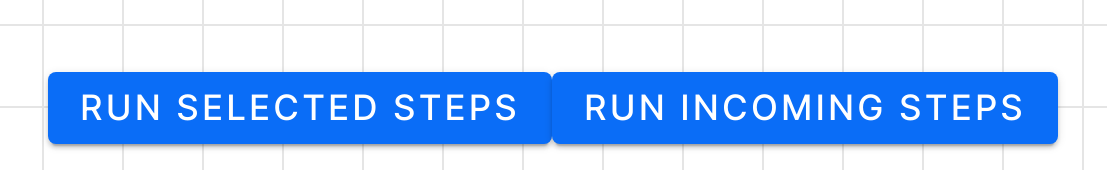

Environment variables are now passed, but only when explicitly requested:
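As a rough illustration of opt-in inheritance, the sketch below copies only the variables a service names explicitly. The function name `inherit_env`, the `requested` list, and the sample variables are all hypothetical and are not taken from the Orchest code base.

```python
def inherit_env(pipeline_env, requested):
    """Return only the pipeline variables a service explicitly asked for.

    Hypothetical helper: names that are requested but not defined at the
    pipeline level are skipped rather than raising an error.
    """
    return {name: pipeline_env[name] for name in requested if name in pipeline_env}


# Made-up pipeline-level variables, some of which are secrets.
pipeline_env = {
    "AWS_SECRET": "s3cr3t",
    "DB_PASSWORD": "hunter2",
    "LOG_LEVEL": "debug",
}

# The service opts in to a single variable; nothing else is passed along.
service_env = inherit_env(pipeline_env, requested=["DB_PASSWORD", "MISSING"])
print(service_env)
```

Requiring an explicit list avoids the collision and leakage concerns raised earlier, at the cost of a little more configuration per service.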

They are not optional, and their URL routing might differ from that of services, so I don't think they should be unified into services. I feel services should just be user-defined services. But they are indeed very similar internally. We should reuse code between services and JupyterLab/the memory server as much as possible. They already share some parts of the implementation internally (e.g. they're both assigned to

I think this is something we should iterate toward. Not necessarily for release v1, but I do agree it would be nicer. Secrets can now be passed using environment variable inheritance.

This is now handled by the Orchest SDK. It returns:

One pet peeve is the need to tweak container services to incorporate a base path. This is due to how the The structure is: Or broken into its components: I would like to do it in a 'cleaner' way, but this works reliably and lets you flexibly run services on any TCP port in the service container without requiring any changes to the way in which we currently host Orchest on AWS and how it runs locally.

Another potential issue is that we now pull the containers for services from DockerHub when we start the session. This could take a long time and could make the session start request time out. Should we bite the bullet and make the session start (POST) a polling operation?

I think so.
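A minimal sketch of the polling idea, assuming the POST returns immediately and the client then checks a status endpoint until the session is up. The status strings (`LAUNCHING`, `RUNNING`, `FAILED`) and the `get_status` callable are assumptions for illustration only; the real API may differ.

```python
import time


def wait_for_session(get_status, timeout=60.0, interval=1.0, sleep=time.sleep):
    """Poll get_status() until it reports RUNNING, fails, or the timeout elapses.

    get_status is a hypothetical callable that would wrap a GET request
    to the session endpoint and return a status string.
    """
    deadline = time.monotonic() + timeout
    while True:
        status = get_status()
        if status == "RUNNING":
            return status
        if status == "FAILED":
            raise RuntimeError("session failed to start")
        if time.monotonic() >= deadline:
            raise TimeoutError("session did not start in time")
        sleep(interval)


# Stand-in for the real API: reports LAUNCHING twice, then RUNNING.
_statuses = iter(["LAUNCHING", "LAUNCHING", "RUNNING"])
result = wait_for_session(lambda: next(_statuses), interval=0.0)
print(result)
```

With this shape, a slow image pull only lengthens the polling loop instead of holding a single HTTP request open until it times out.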

    "uuid": "pipeline-uuid",
    "settings": {},
    "parameters": {},
    "services": [],

Should not forget to also update the JSON schema in the docs.

On another note, didn't we change it to be a dictionary instead?

Note: we should not access the

    the inherited ones. Note that, while project and pipeline environment variables
    are considered as `secrets`, services environment variables aren't and will
    be persisted in the pipeline definition file.
    - **scope**: To specify if the service should be running in interactive mode, jobs, or both.

Naming the scope to be "jobs" instead of "non-interactive" makes a lot of sense to me and I am sure it is a lot more obvious for the users as well.

Should we define scope to be "pipeline editor" or "jobs" instead?

Makes sense to me, @ricklamers @joe-bell? (will need a small GUI tweak)
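To make the scope discussion concrete, here is a small sketch of how a scope could select which services start in a given context. It assumes services are stored as a dictionary keyed by name and that `scope` is a list containing `"interactive"` and/or `"jobs"`. Both the shape and the scope values are hypothetical, since the naming is still under discussion here.

```python
def services_for(services, mode):
    """Return the services whose scope includes the given mode.

    Hypothetical helper: a service without a "scope" entry is treated
    as not running in any mode.
    """
    return {
        name: spec
        for name, spec in services.items()
        if mode in spec.get("scope", [])
    }


# Made-up service definitions for illustration.
services = {
    "streamlit": {"image": "example/streamlit", "scope": ["interactive"]},
    "redis": {"image": "redis", "scope": ["interactive", "jobs"]},
}

job_services = services_for(services, "jobs")
print(sorted(job_services))
```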

Description

This PR adds the concept of "services" to Orchest. A service can be any container that runs as part of a session, e.g. a Streamlit app that the user interacts with.

Example service JSON configuration:

Todo