-

Notifications

You must be signed in to change notification settings - Fork 4

CS61B 2018 Lecture 19 Asymptotics III: Big O / Omega, Amortized Analysis #99

Description

Summary

-

Big O

- Formal Definition

- Big Theta vs. Big O

- Runtime Analysis Subtleties(Why Big O is Useful)

- The usefulness of Big O

-

Big Omega

-

Amortized Analysis (Intuitive Explanation)

-

Amortized Analysis (Rigorous Explanation)

- How to prove that the amortized (a.k.a. average) cost is constant?

-

IMPORTANT

- Big O does not mean "Worse case".

Big O

Formal Definition

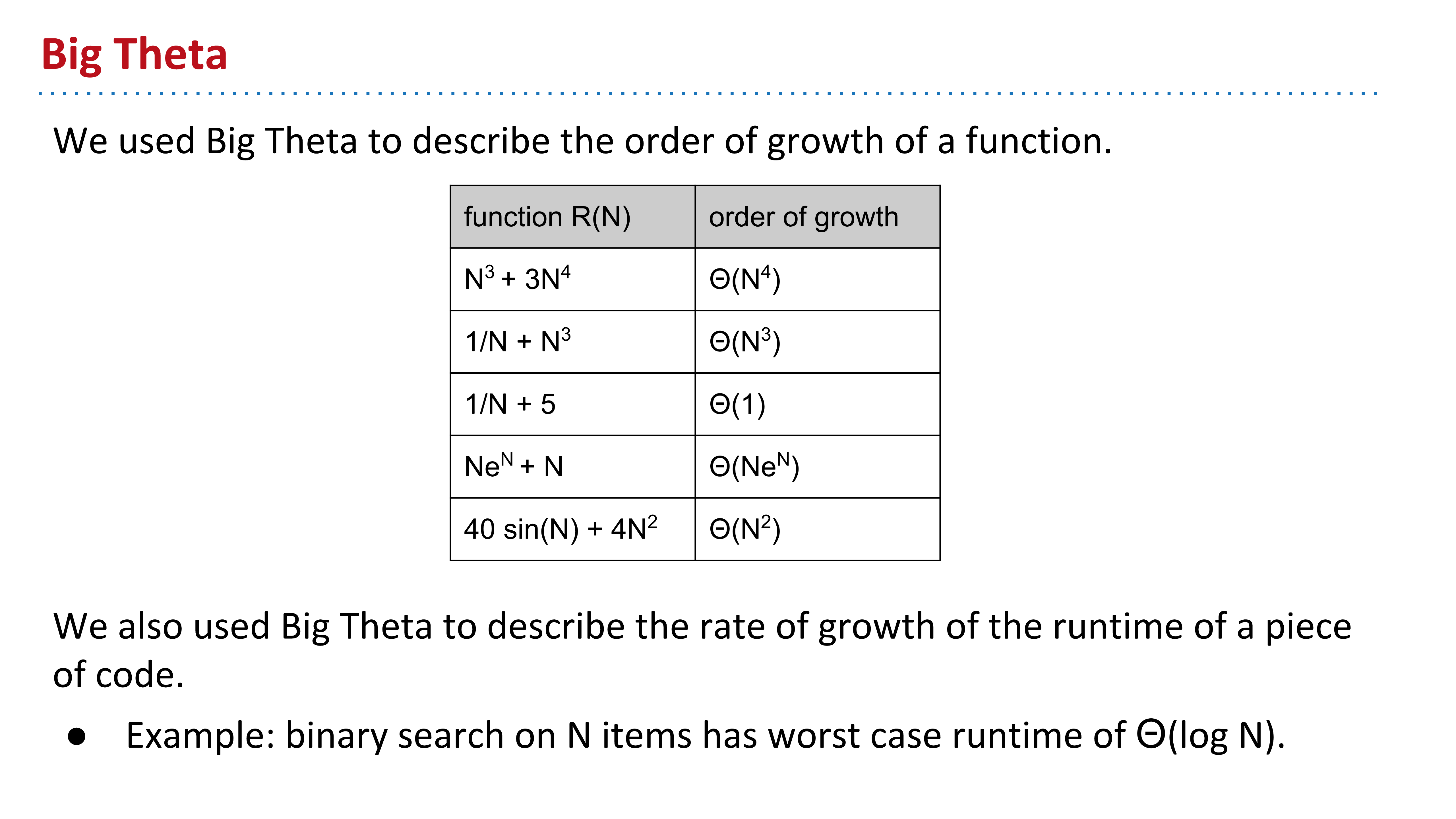

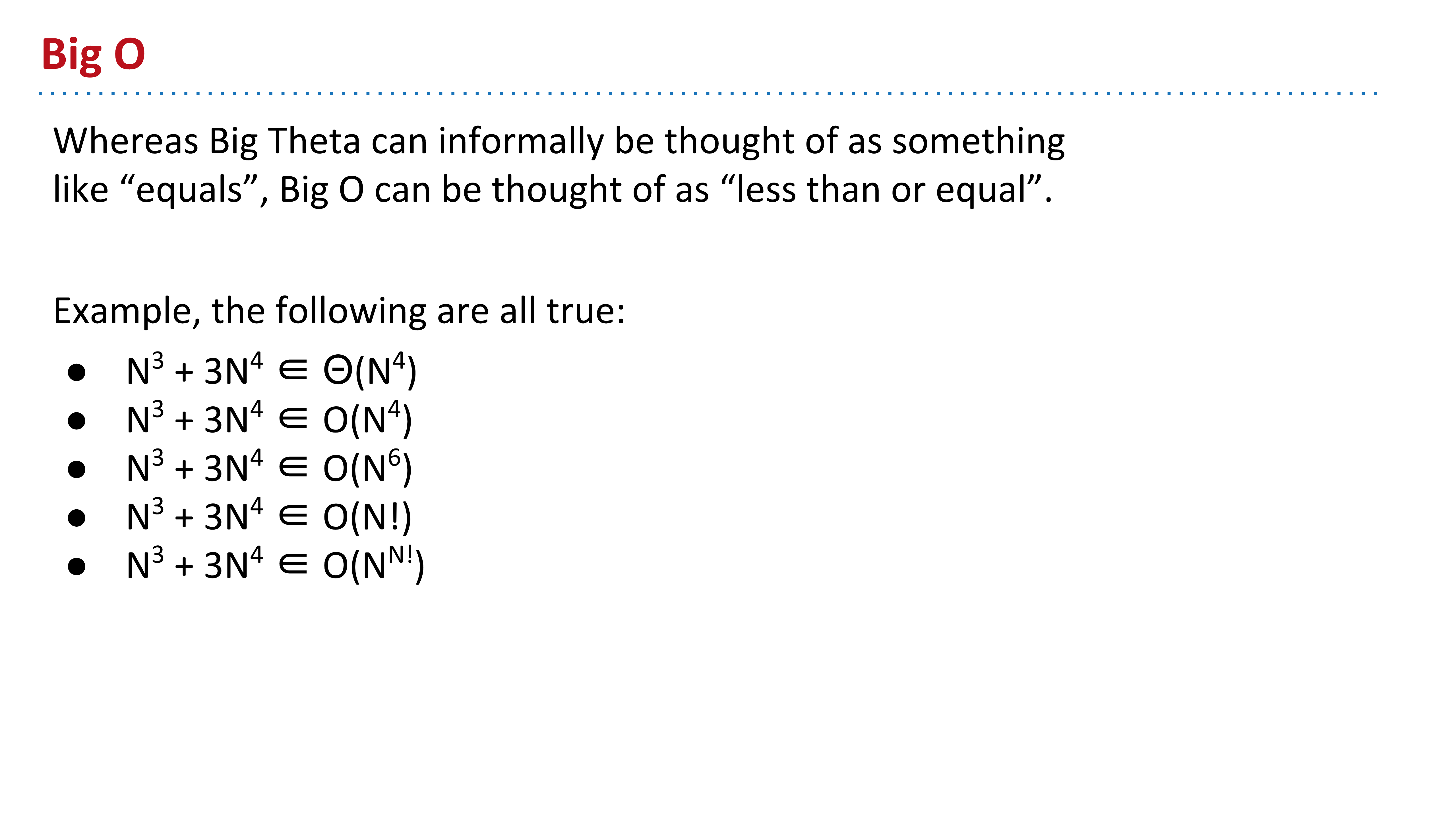

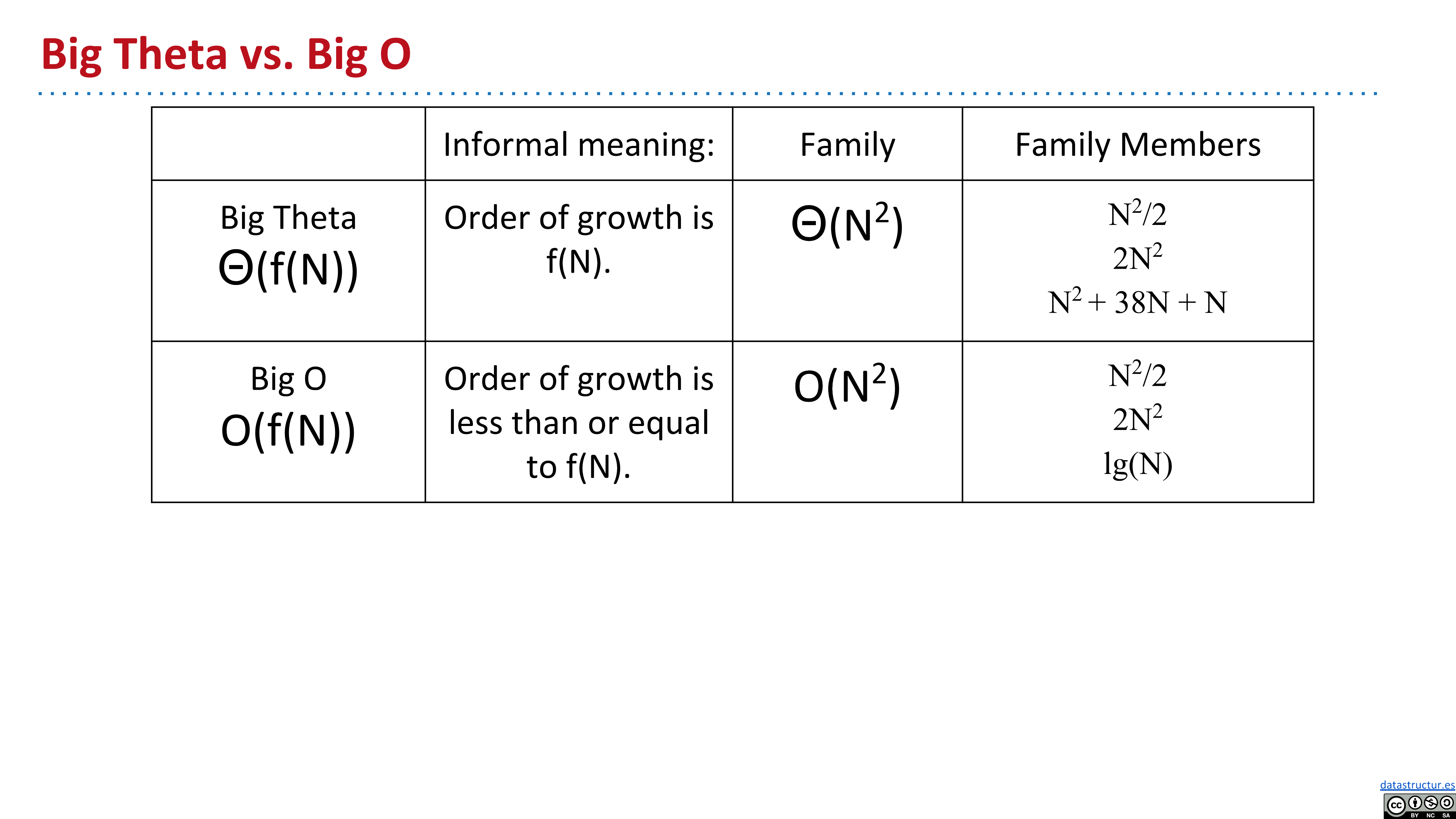

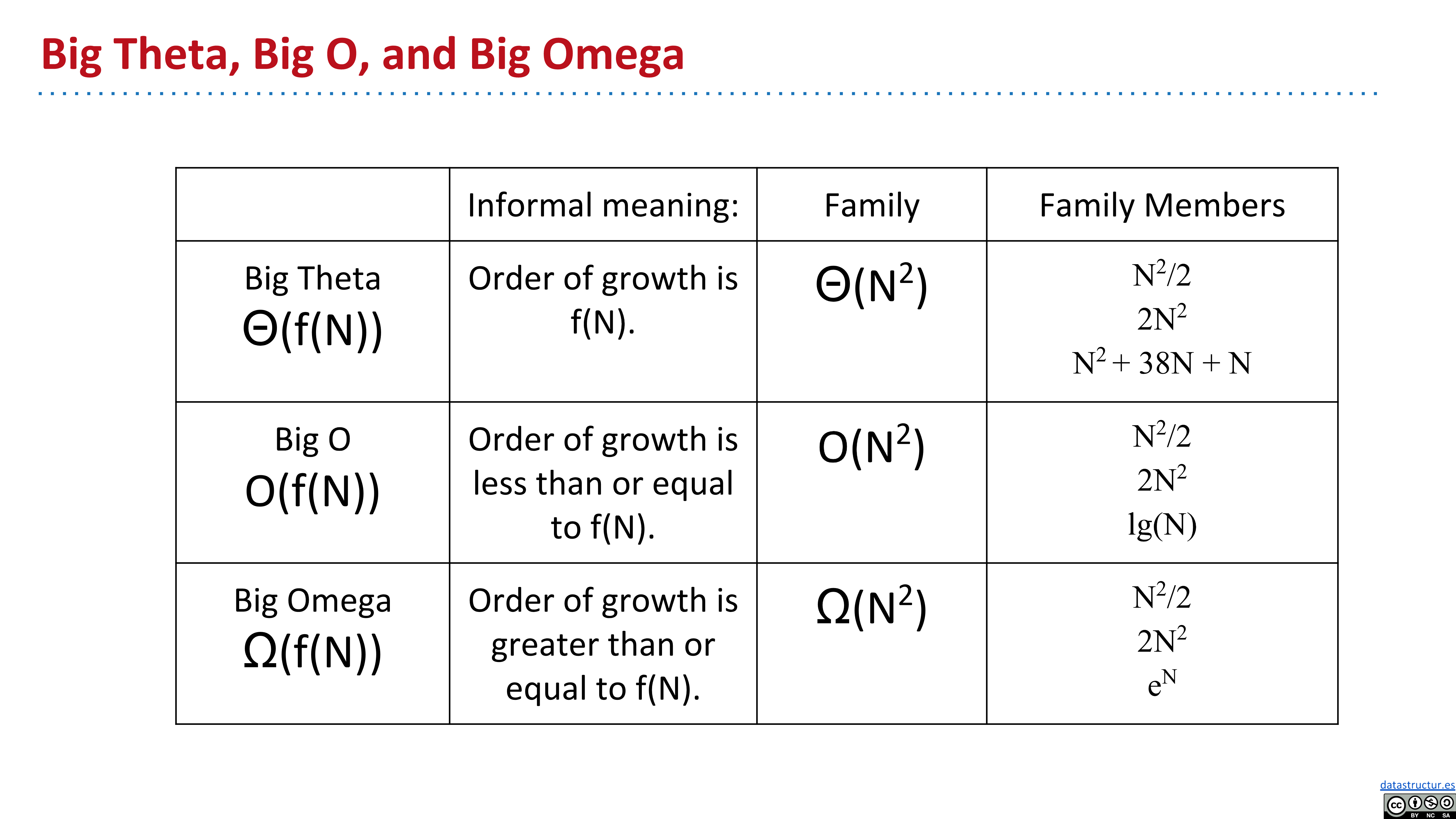

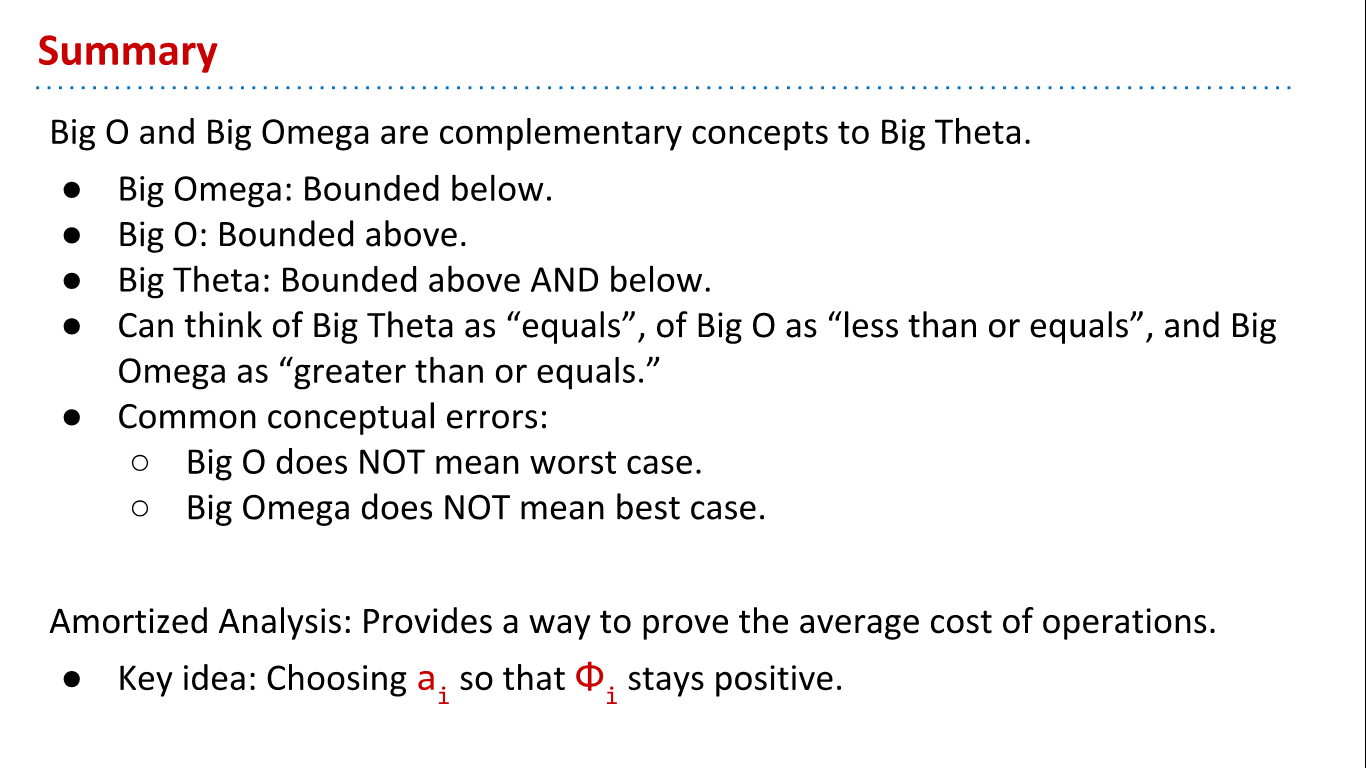

Observe that this is a looser condition than Big Theta since Big O does not care about the lower bound.

Big Theta vs. Big O

Runtime Analysis Subtleties(Why Big O is Useful)

To demonstrate why it is useful to use Big O, let's go back to our duplicate-finding functions consider the following exercises.

Answer:

R(N)∈Θ(1), it's constant time! That's because there's a bug in dup3: it always compares the first element with itself. In the very first iteration, i and j are both 0, so the function always immediately returns. Bummer!

Answer:

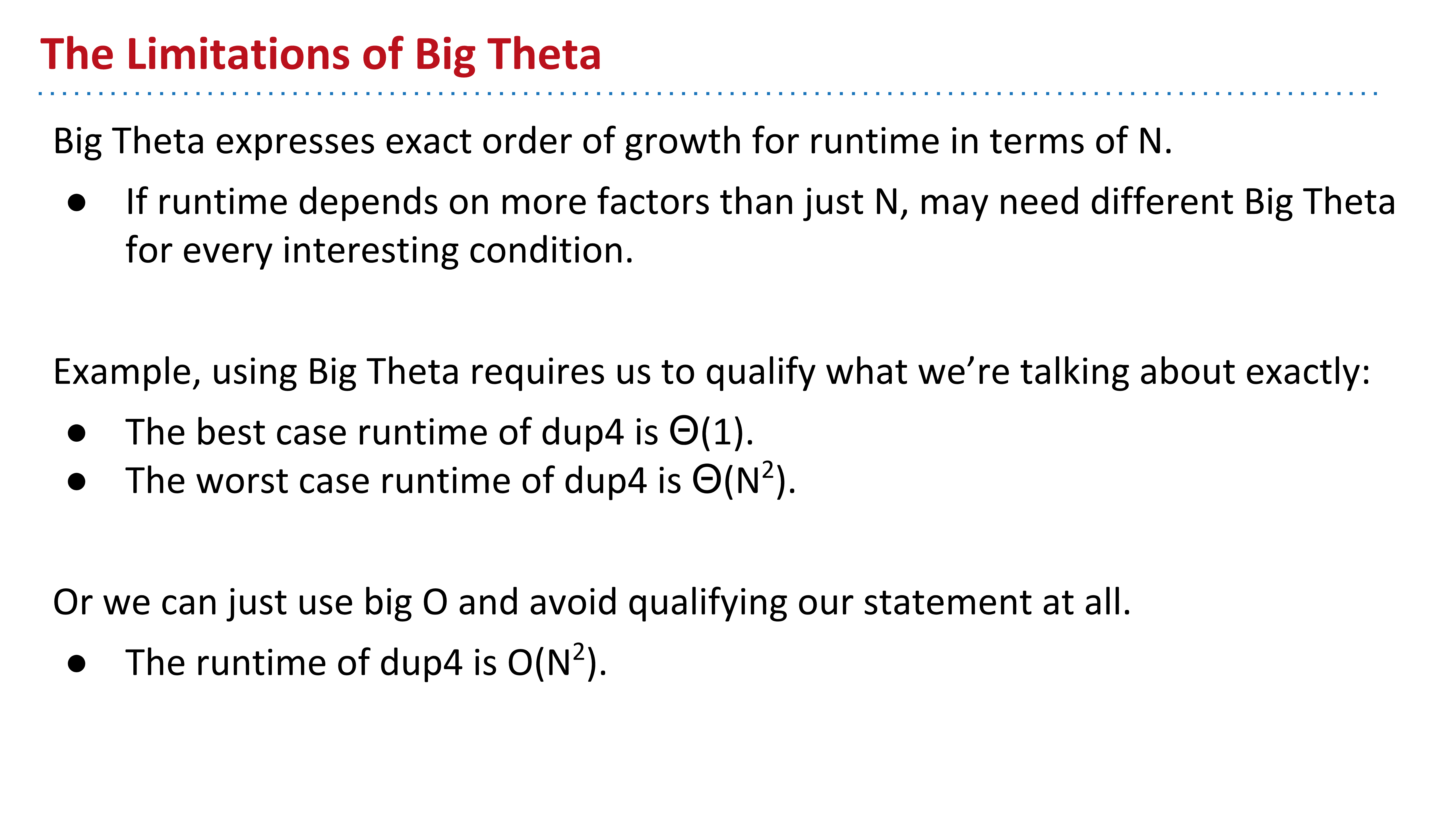

This time, the runtime depends on not only the length of the input, but also the array's contents. In the best case, R(N)∈Θ(1). If the input array contains all of the same element, then no matter how long it is, dup4 will return on the first iteration.

On the other hand, in the worst case, R(N)∈Θ(N^2). If the array has no duplicates, then dup4 will never return early, and the nested for loop will result in quadratic runtime.

This exercise highlights one limitation of Big Theta. Big Theta expresses the exact order of as a function of the input size. However, if the runtime depends on more than just the size of the input, then we must qualify our statements into different cases before using Big Theta. Big O does away with this annoyance. Rather than having to describe both the best and worse case, for the example above, we can simply say that the runtime of dup4 is O(N^2). Sometimes dup4 is faster, but it's at worst quadratic.

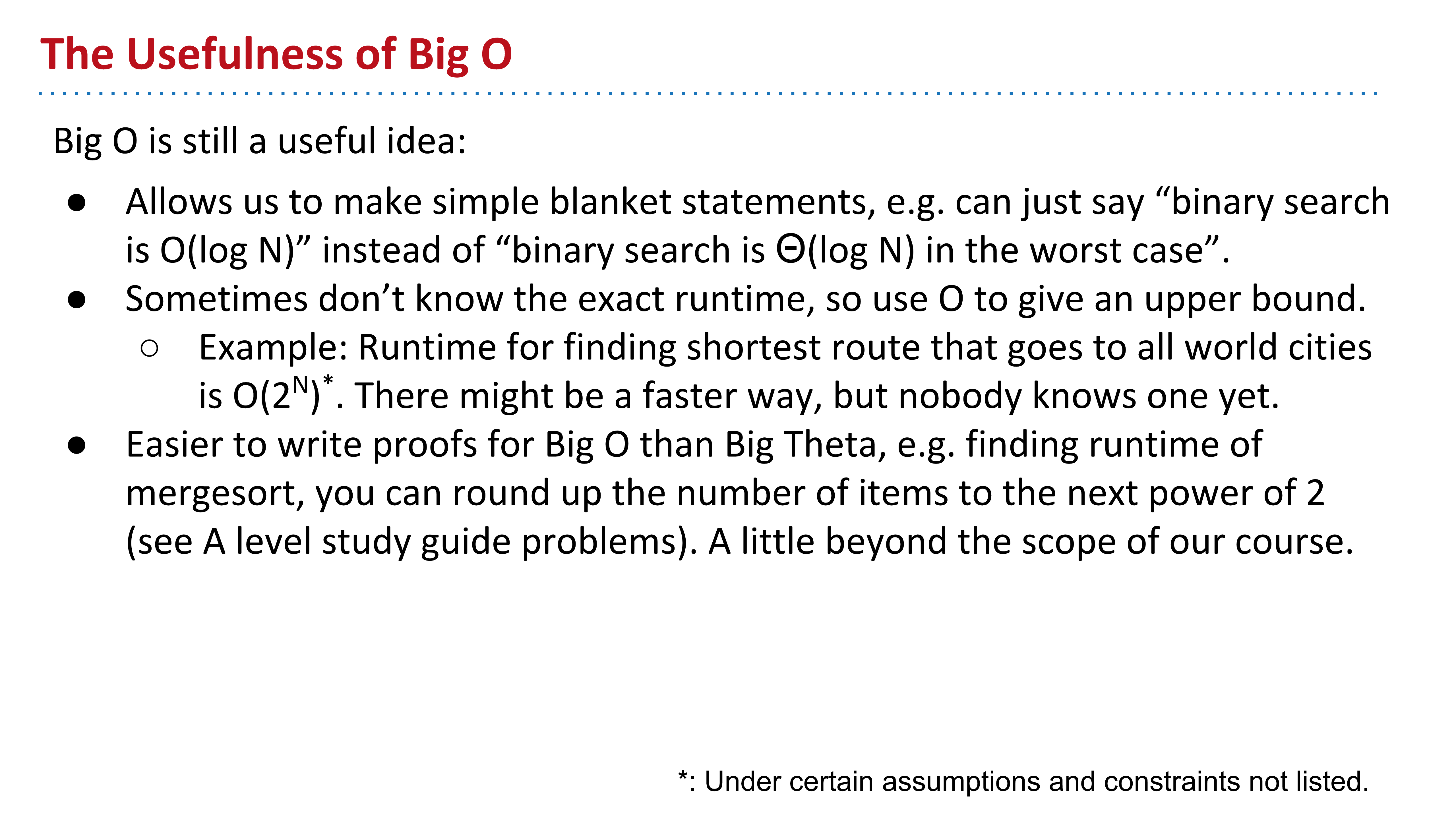

The usefulness of Big O

IMPORTANT: Big O does not mean "Worse case"

To summarize the usefulness of Big O:

- It allows us to make simple statements without case qualifications, in cases where the runtime is different for different inputs.

- Sometimes, for particularly tricky problems, we (the computer science community) don't know the exact runtime, so we may only state an upper bound.

- It's a lot easier to write proofs for Big O than Big Theta, like we saw in finding the runtime of mergesort in the previous chapter. This is beyond the scope of this course.

Big Omega

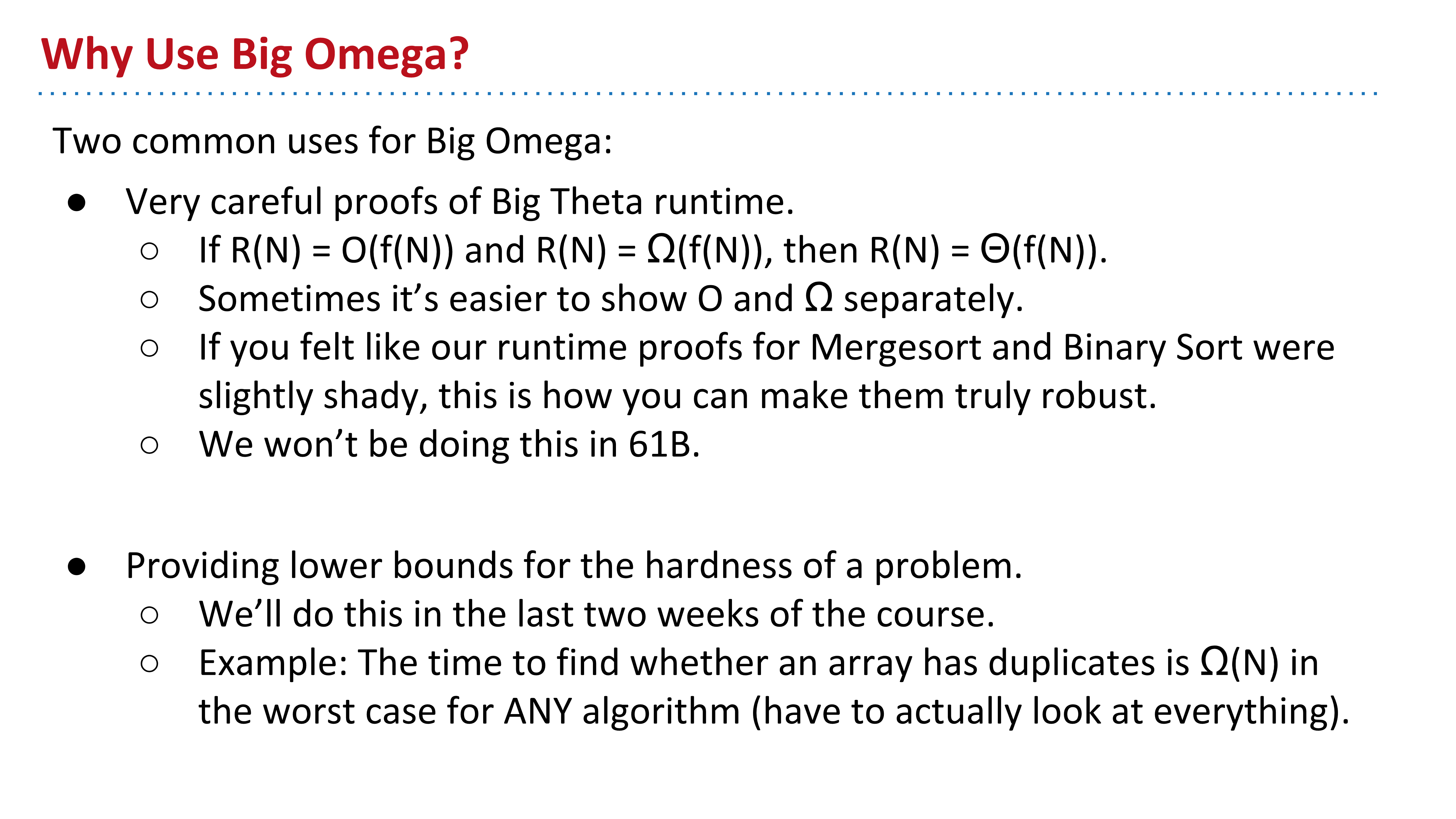

Why USe Big Omega

There's two common uses for Big Omega:

- It's used to prove Big Theta runtime. If R(N)=O(f(N)) and R(N)=Ω(f(N)), then R(N)=Θ(f(N)). Sometimes, it's easier to prove O and Ω separately. This is outside the scope of this course.

- It's used to prove the difficulty of a problem. For example, ANY duplicate-finding algorithm must be Ω(N), because the algorithm must at least look at each element.

Amortized Analysis (Intuitive Explanation)

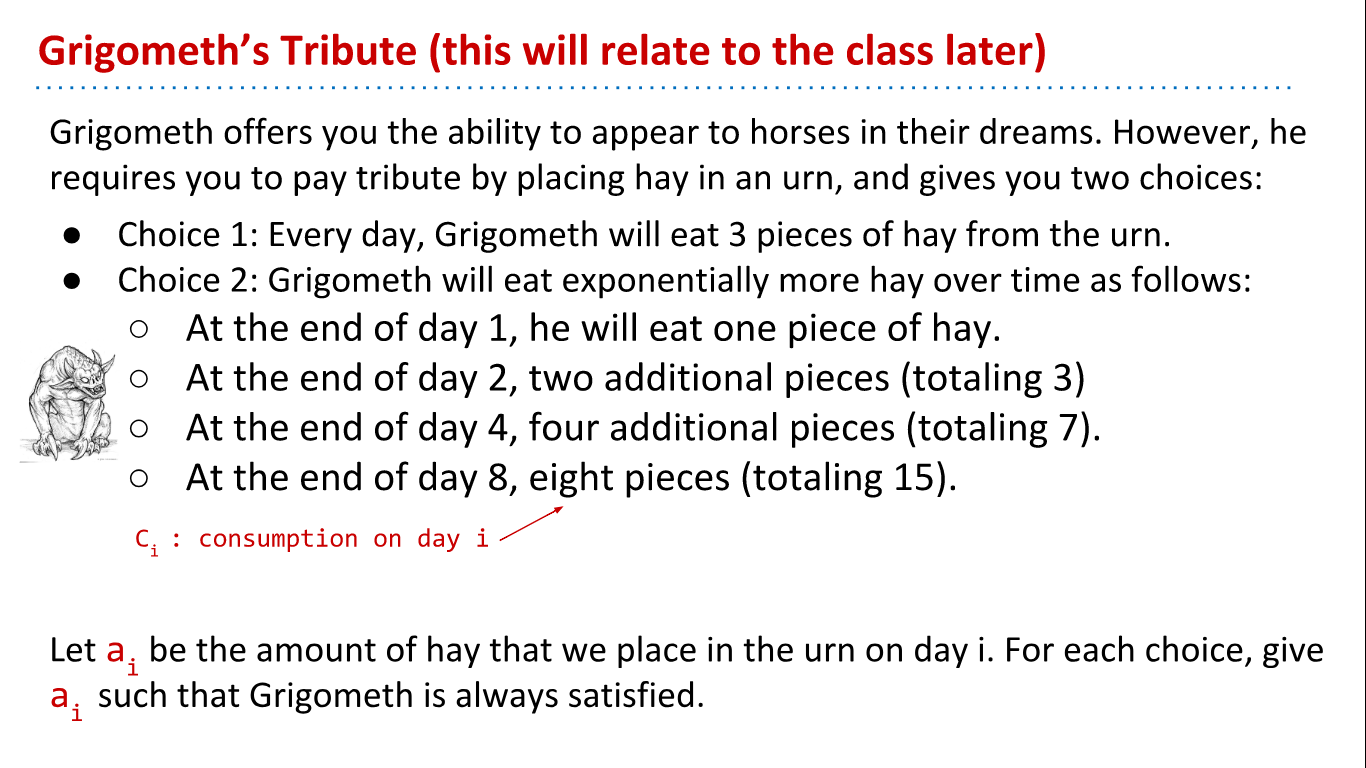

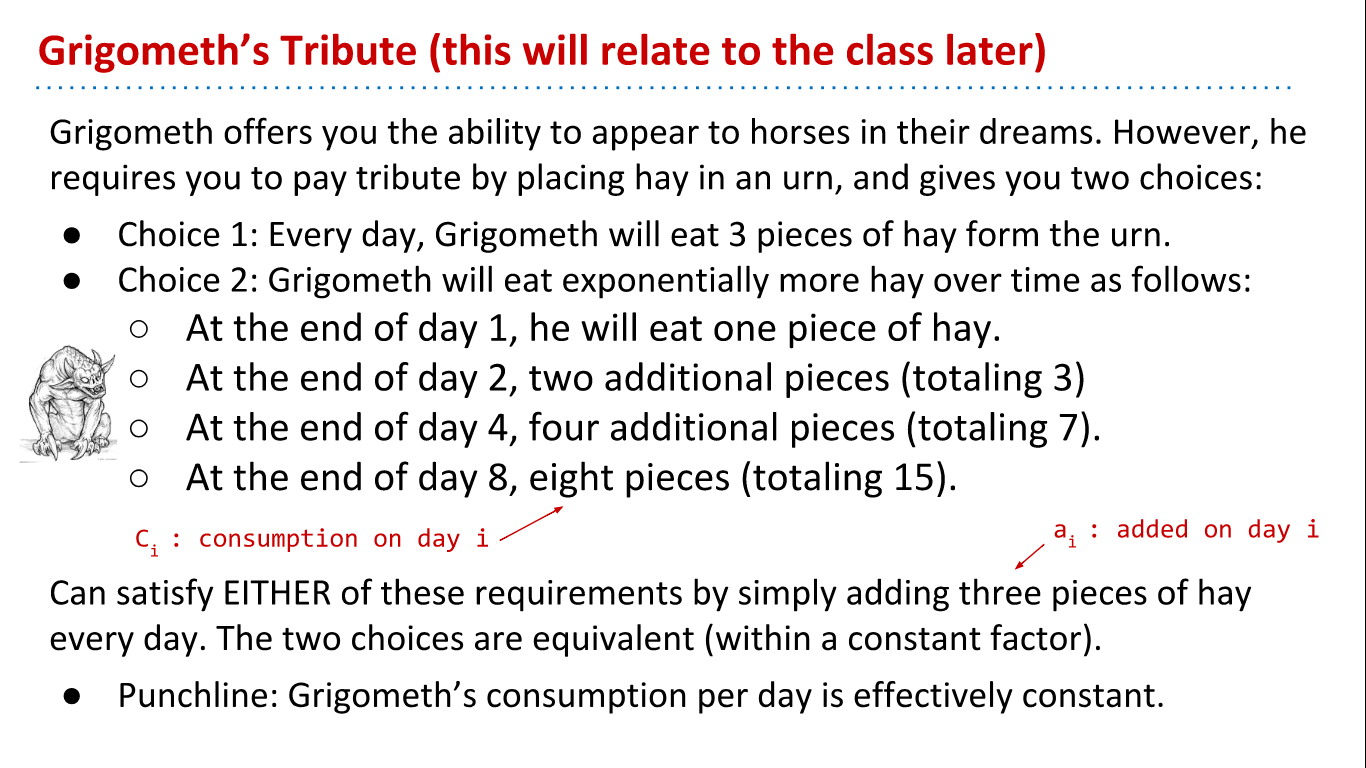

Grigometh's Urn

For the first choice, you'd have to put exactly 3 bushels of hay in the urn each day. However, the second choice actually turns out to be a a bit cheaper! You can get away with just putting in 2 bushels of hay in the urn each day. (Try to convince yourself why this is true -- one way to do this is the write out the total amount of hay you contribute after each day. You'll notice that whenever Grigometh comes for his hay snack, you'll always have one extra bushel in the urn after he takes his fill. Neat.)

The explanation will be shown below.

Bushels aside, notice here that Grigometh's hay consumption per day is effectively constant, and in choice 2, we can describe this situation as amortized constant hay consumption.

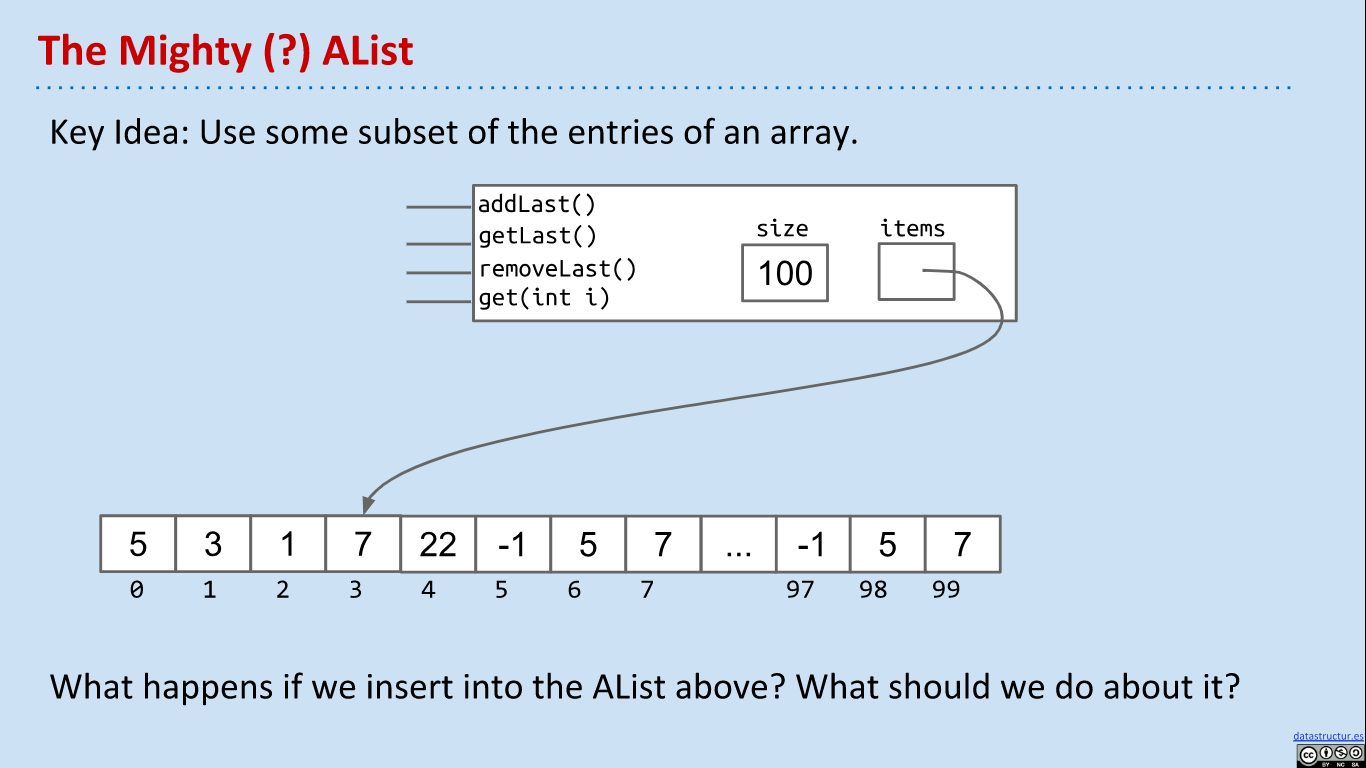

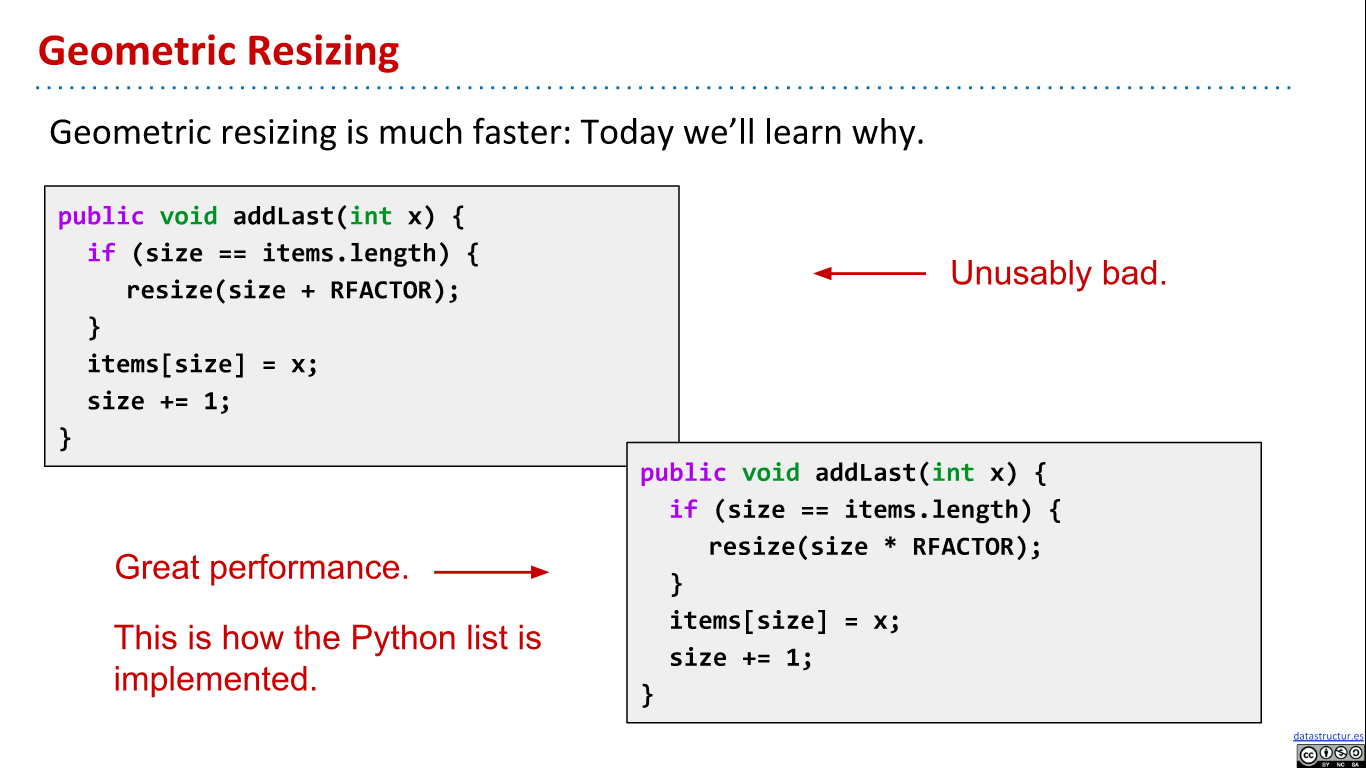

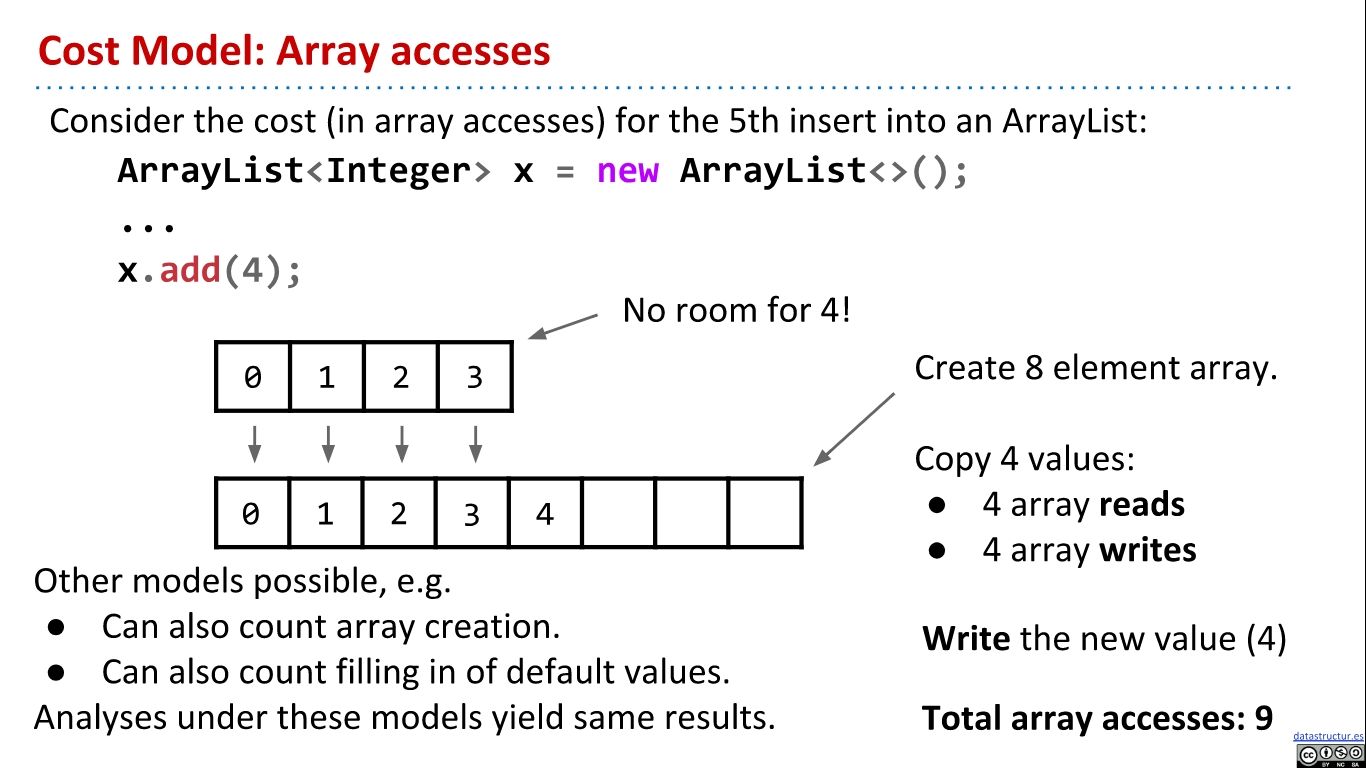

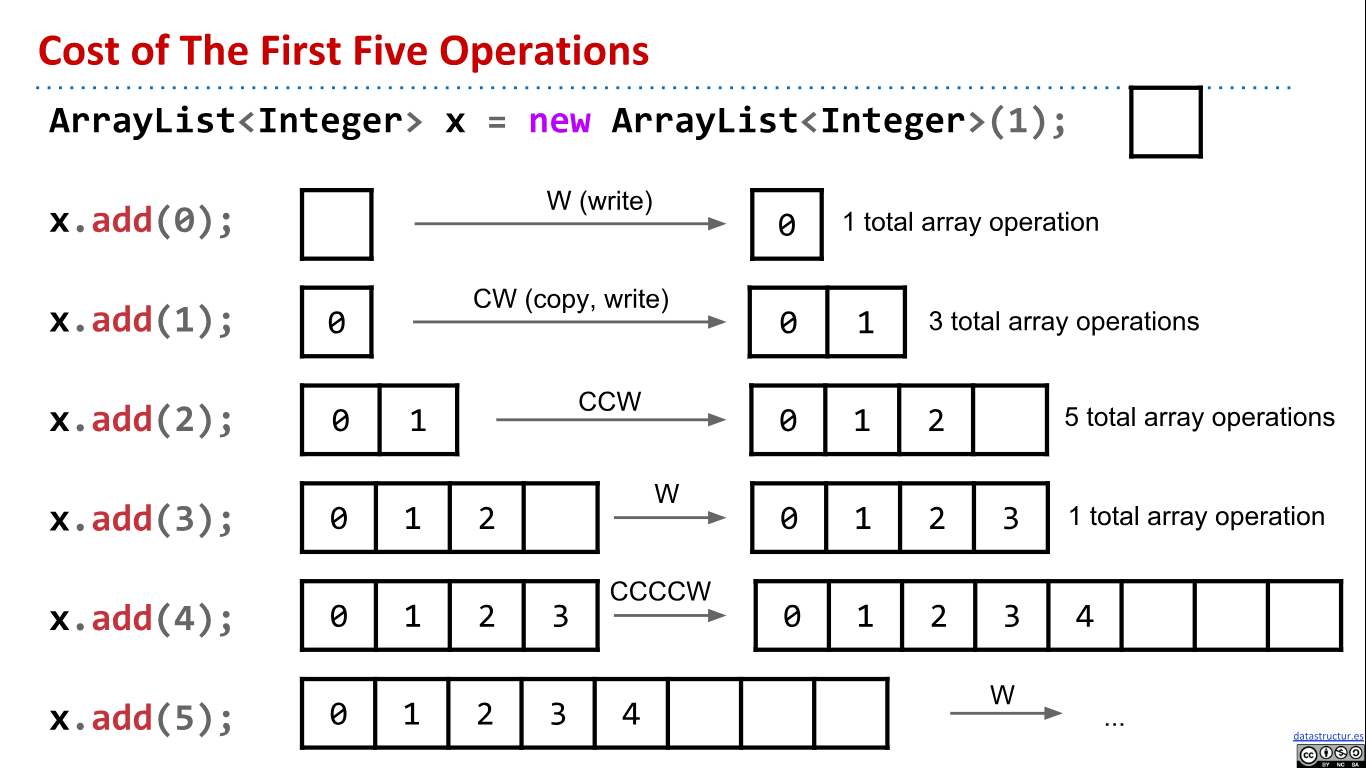

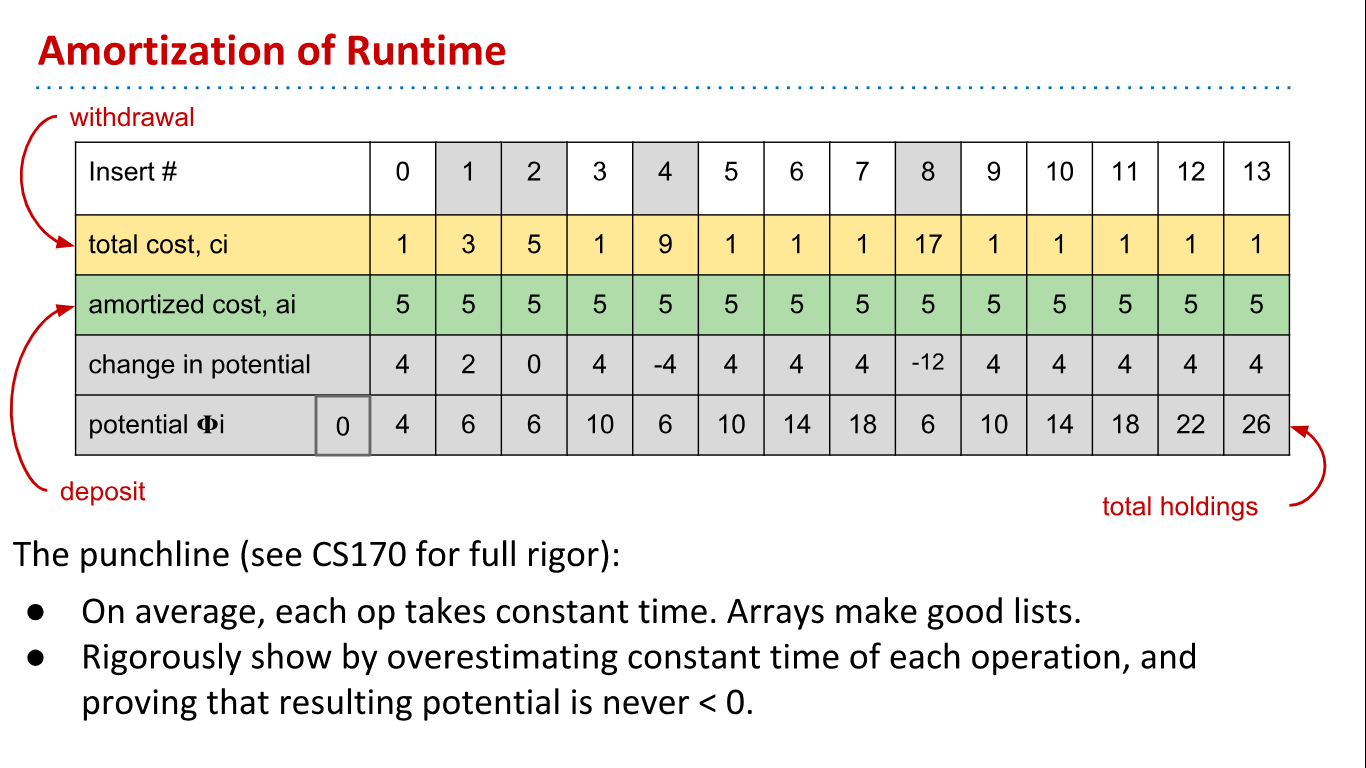

AList Resizing and Amortization

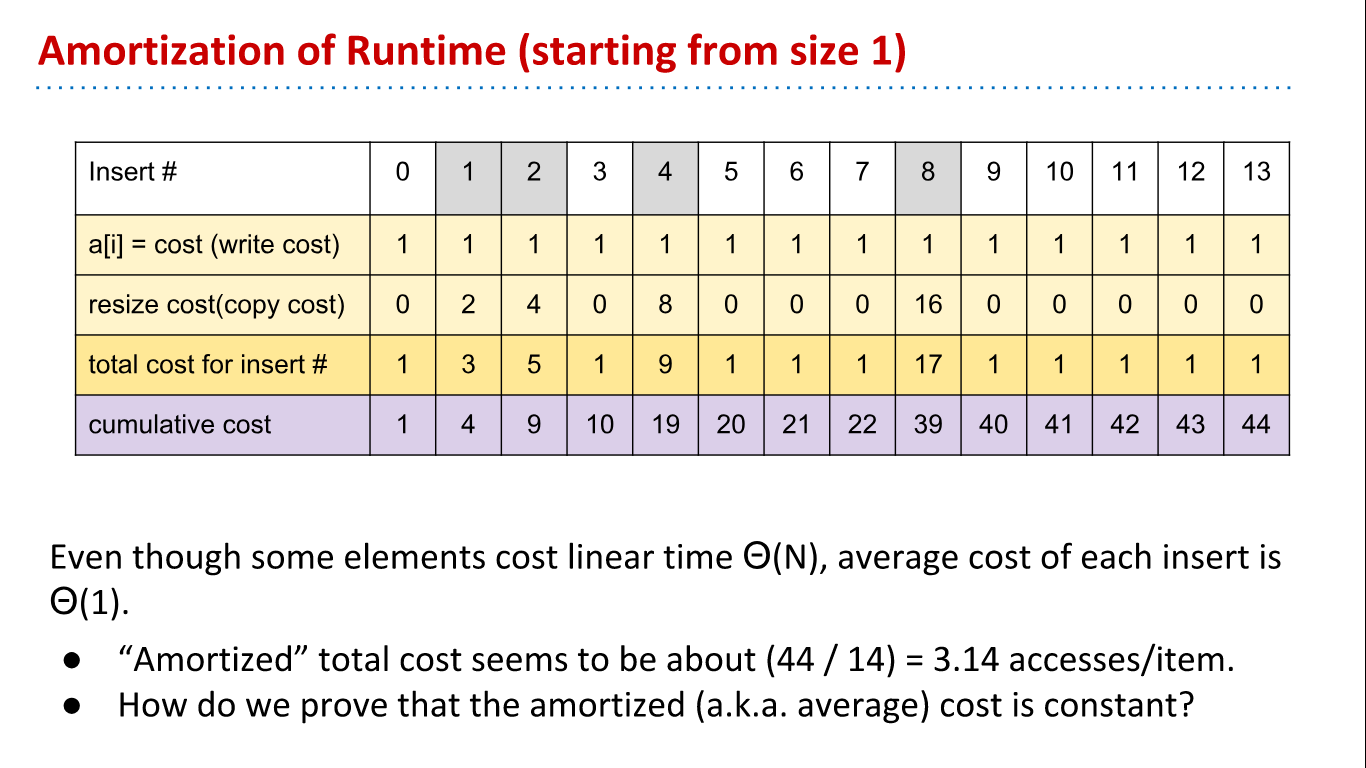

Let's look into the runtime of implementation of right one in more detail. Let RFACTOR be 2. When the array is full, resize doubles its size. Most add operations take Θ(1) time, but some are very expensive, and linear to the current size. However, if we average out the cost of expensive adds with resize over all the adds that are cheap, and given that expensive adds with happen half as frequently every time it happens, it turns out that on average, the runtime of add is Θ(1). We'll prove this in the next section.

Amortized Analysis (Rigorous Explanation)

A more rigorous examination of amortized analysis is done here, in three steps:

- Pick a cost model (like in regular runtime analysis)

- Compute the average cost of the i'th operation

- Show that this average (amortized) cost is bounded by a constant.

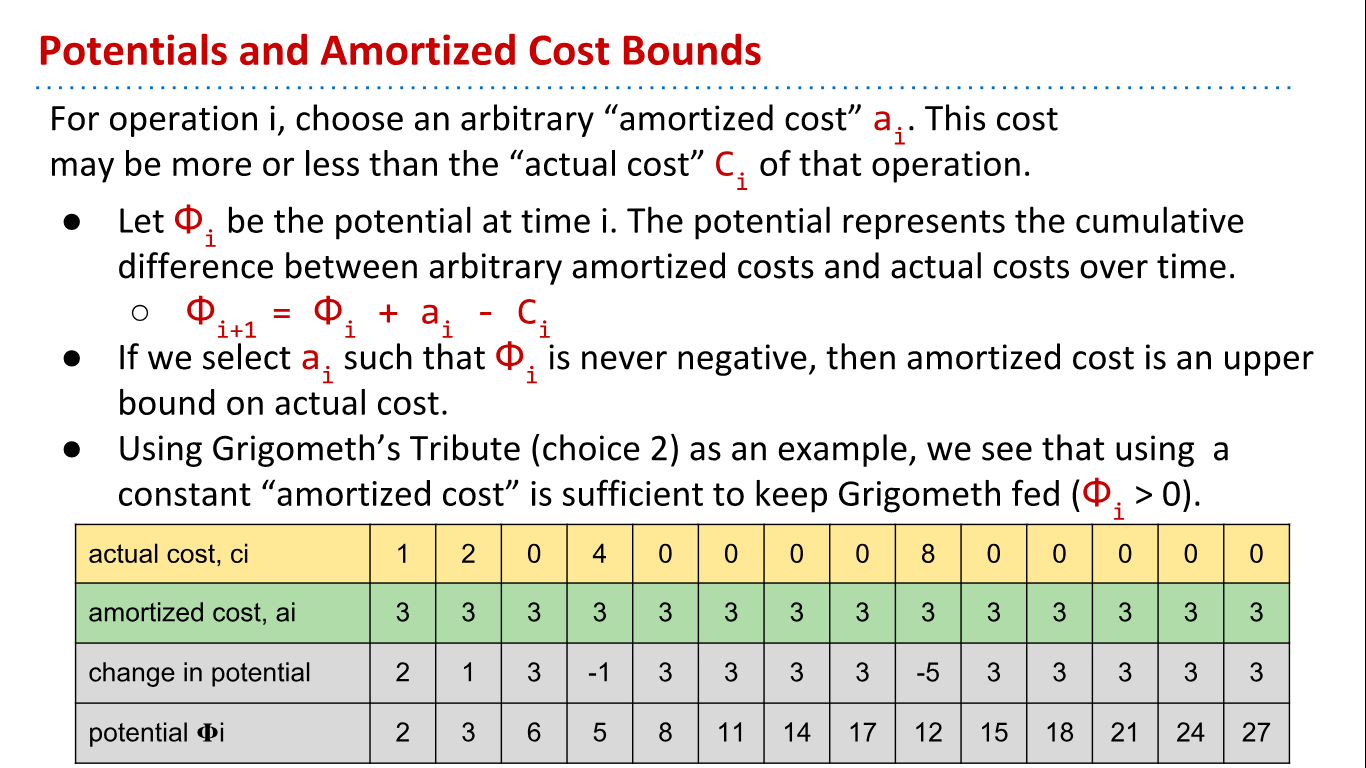

How to prove that the amortized (a.k.a. average) cost is constant?

ai is an arbitrary constant, meaning we can chose it. If we chose ai such that Φi is never negative and ai is constant for all i, then the amortized cost is an upper bound on the true cost. And if the true cost is upper bounded by a constant, then we've shown that it is on average constant time!