Hey,

I have a question concerning the transfer learning tutorial (https://pytorch.org/tutorials/beginner/transfer_learning_tutorial.html).

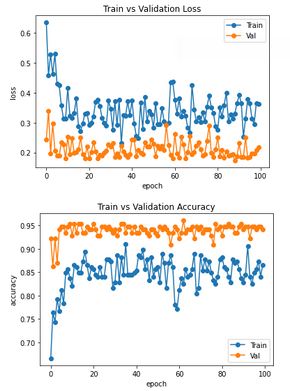

For a few days, I've been trying to figure out why the validation and training curves are reversed there. By this, I mean that for general neural networks the training curves are always better than the validation curves (lower loss and higher accuracy). However, as in the tutorial itself, this is not the case (see also values in the tutorial). To make the whole thing clearer, I also ran the tutorial for 100 epochs and plotted the accuracy and loss for training and validation. The graph looks like this:

Unfortunately, I haven't found a real reason for this yet.
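For anyone who wants to reproduce the 100-epoch comparison, here is a minimal sketch of how per-epoch metrics could be collected and the "reversed" epochs counted. It assumes the per-phase `epoch_loss` and `epoch_acc` values computed inside the tutorial's training loop for the phases `'train'` and `'val'`. The helper names (`make_history`, `record_epoch`, `reversed_epochs`) are hypothetical and not part of the tutorial or of PyTorch.

```python
from collections import defaultdict


def make_history():
    """Per-phase metric history: history[phase][metric] -> list of per-epoch values."""
    return defaultdict(lambda: defaultdict(list))


def record_epoch(history, phase, loss, acc):
    """Append one epoch's loss and accuracy for a phase ('train' or 'val').

    float() also accepts 0-dim tensors, so tensor-valued accuracies can be passed directly.
    """
    history[phase]["loss"].append(float(loss))
    history[phase]["acc"].append(float(acc))


def reversed_epochs(history):
    """Return indices of epochs where validation beats training on both metrics
    (lower loss and higher accuracy)."""
    train, val = history["train"], history["val"]
    n = min(len(train["loss"]), len(val["loss"]))
    return [
        i
        for i in range(n)
        if val["loss"][i] < train["loss"][i] and val["acc"][i] > train["acc"][i]
    ]
```

Inside the epoch loop, `record_epoch(history, phase, epoch_loss, epoch_acc)` would be called once per phase; the resulting lists can then be passed to any plotting library.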

It shouldn't be the dataset itself (I tried the same with other data). The only candidate I can think of is BatchNorm, which behaves differently in training and evaluation mode. But I suspect that this alone does not explain such a large gap, or the fact that training and validation swap roles. In past projects with neural networks that also used batch normalization, I never saw training and validation reversed like this.
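To illustrate the train/eval difference of BatchNorm mentioned above, here is a simplified pure-Python sketch over a batch of scalars, without the learnable scale and shift. It is modeled on the documented behaviour of `torch.nn.BatchNorm1d` (default `momentum=0.1`, `eps=1e-5`): in training mode the current batch statistics are used and the running estimates are updated, while in eval mode only the running estimates are used. The class `BatchNorm1dSketch` is hypothetical and is not PyTorch's implementation.

```python
import math


class BatchNorm1dSketch:
    """Simplified batch norm over scalars, no affine parameters (hypothetical)."""

    def __init__(self, momentum=0.1, eps=1e-5):
        self.momentum = momentum
        self.eps = eps
        self.running_mean = 0.0
        self.running_var = 1.0
        self.training = True

    def train(self):
        self.training = True
        return self

    def eval(self):
        self.training = False
        return self

    def __call__(self, batch):
        if self.training:
            n = len(batch)
            mean = sum(batch) / n
            # Biased variance is used to normalize the current batch.
            var_biased = sum((x - mean) ** 2 for x in batch) / n
            # Unbiased variance feeds the running estimate (simplification: n == 1 falls back).
            var_unbiased = var_biased * n / (n - 1) if n > 1 else var_biased
            m = self.momentum
            self.running_mean = (1 - m) * self.running_mean + m * mean
            self.running_var = (1 - m) * self.running_var + m * var_unbiased
            mean_used, var_used = mean, var_biased
        else:
            # Eval mode: running estimates only, no update.
            mean_used, var_used = self.running_mean, self.running_var
        return [(x - mean_used) / math.sqrt(var_used + self.eps) for x in batch]
```

Feeding the same batch once in training mode and once in eval mode gives different outputs, because the running estimates lag behind the batch statistics until many updates have been made.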

Does anybody have an idea why this happens here, and why it does not happen with other neural networks?

cc @suraj813