[HUDI-5987] Fix clustering on bootstrap table with row writer disabled #8342

yihua merged 4 commits into apache:master

Conversation

|

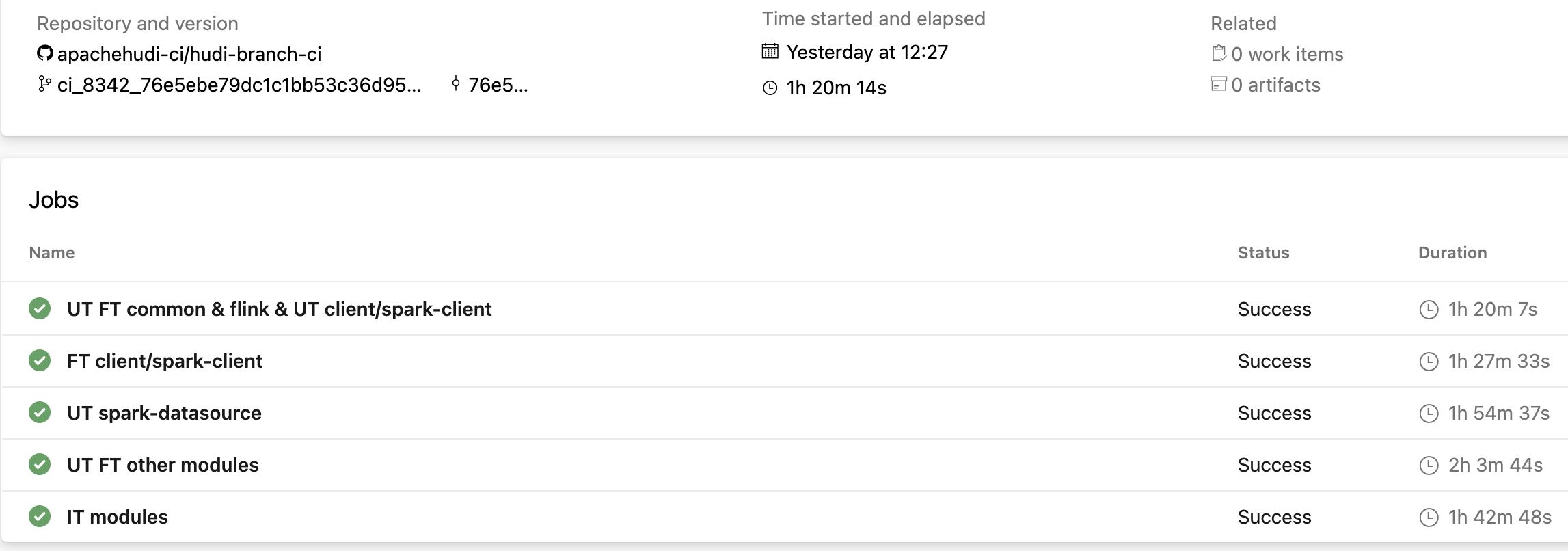

Rebased on top of #8289 with some cleanup and fixes. Tested for all Spark versions supported by Hudi.

...n/java/org/apache/hudi/client/clustering/run/strategy/MultipleSparkJobExecutionStrategy.java

String timeZoneId = jsc.getConf().get("timeZone", SQLConf.get().sessionLocalTimeZone());
boolean shouldValidateColumns = jsc.getConf().getBoolean("spark.sql.sources.validatePartitionColumns", true);

Could this be moved into the closure and read via hadoopConf.get(), to avoid passing additional variables?

@Override
public Schema getSchema() {
  return skeletonFileReader.getSchema();

How is this used? I assume this only contains meta fields.

Actually, this is not used, because we enforce the reader schema at the call site. However, I have changed it to return the merged schema for completeness.
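As a rough illustration of what "returning the merged schema" could mean, here is a minimal sketch that works on plain field-name lists instead of Avro `Schema` objects. `MergedSchemaSketch` and `mergeFieldNames` are hypothetical names used only for this example and are not taken from the PR; the actual change may build the merged schema differently.

```java
import java.util.ArrayList;
import java.util.List;

public class MergedSchemaSketch {

    // Hypothetical helper: skeleton (meta) fields first, then the data fields
    // from the bootstrap source file, skipping any name that is already present.
    public static List<String> mergeFieldNames(List<String> skeletonFields, List<String> dataFields) {
        List<String> merged = new ArrayList<>(skeletonFields);
        for (String field : dataFields) {
            if (!merged.contains(field)) {
                merged.add(field);
            }
        }
        return merged;
    }

    public static void main(String[] args) {
        // "id" and "name" are made-up data columns for illustration.
        List<String> skeleton = List.of("_hoodie_commit_time", "_hoodie_record_key");
        List<String> data = List.of("id", "name");
        System.out.println(mergeFieldNames(skeleton, data));
    }
}
```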

clusteringOpsPartition.forEachRemaining(clusteringOp -> {
  try {
    Schema readerSchema = HoodieAvroUtils.addMetadataFields(new Schema.Parser().parse(writeConfig.getSchema()));
    HoodieFileReader baseFileReader = HoodieFileReaderFactory.getReaderFactory(recordType).getFileReader(hadoopConf.get(), new Path(clusteringOp.getDataFilePath()));

We should skip this for bootstrap file groups.

Actually, the bootstrap file reader depends on both the skeleton file reader and the data file reader, so this one becomes the skeleton file reader in the conditional block.
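The reader selection described here can be sketched roughly as follows. This is a simplified stand-in, not the PR's code: `ReaderSelectionSketch`, `FileReader`, `plainReader`, `bootstrapReader`, and `selectReader` are hypothetical names, and the readers only describe themselves instead of reading files. The point is that the reader opened on the data file path is always created and is reused as the skeleton reader when a bootstrap path is present.

```java
public class ReaderSelectionSketch {

    // Hypothetical stand-in for a file reader; it only reports what it wraps.
    public interface FileReader {
        String describe();
    }

    public static FileReader plainReader(String path) {
        return () -> "base:" + path;
    }

    // Hypothetical composite reader that depends on both underlying readers.
    public static FileReader bootstrapReader(FileReader skeleton, FileReader data) {
        return () -> "bootstrap(" + skeleton.describe() + ", " + data.describe() + ")";
    }

    static boolean nonEmpty(String s) {
        return s != null && !s.isEmpty();
    }

    public static FileReader selectReader(String dataFilePath, String bootstrapFilePath, String bootstrapBasePath) {
        // Always opened: for a bootstrap file group this is the skeleton file.
        FileReader baseFileReader = plainReader(dataFilePath);
        if (nonEmpty(bootstrapFilePath) && nonEmpty(bootstrapBasePath)) {
            // Assumption: bootstrapFilePath is directly usable as the source data file location.
            FileReader dataFileReader = plainReader(bootstrapFilePath);
            return bootstrapReader(baseFileReader, dataFileReader);
        }
        return baseFileReader;
    }

    public static void main(String[] args) {
        System.out.println(selectReader("skeleton.parquet", "source.parquet", "/source").describe());
        System.out.println(selectReader("regular.parquet", "", "").describe());
    }
}
```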

Schema readerSchema = HoodieAvroUtils.addMetadataFields(new Schema.Parser().parse(writeConfig.getSchema()));
HoodieFileReader baseFileReader = HoodieFileReaderFactory.getReaderFactory(recordType).getFileReader(hadoopConf.get(), new Path(clusteringOp.getDataFilePath()));
// handle bootstrap path
if (StringUtils.nonEmpty(clusteringOp.getBootstrapFilePath()) && StringUtils.nonEmpty(bootstrapBasePath)) {

Do we need to provide the same fix for MOR tables, in readRecordsForGroupWithLogs(jsc, clusteringOps, instantTime)? For example, when clustering is applied to a bootstrap file group that has a bootstrap data file, a skeleton file, and log files.

Good catch! Refactored and added a test to cover this scenario.

This commit fixes the bug where clustering on a bootstrap table (METADATA_ONLY bootstrap mode) with row writer disabled did not show correct results. Only meta-fields were populated, while data columns were null. The fix adds a separate HoodieBootstrapFileReader that stitches the meta columns with the data columns.
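The idea of stitching meta columns with data columns can be sketched as below. This is an illustration under stated assumptions, not the `HoodieBootstrapFileReader` implementation: records are modeled as plain maps, `StitchSketch` and `stitch` are hypothetical names, and the sketch assumes the skeleton file and the source data file yield the same number of rows in the same order.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class StitchSketch {

    // Hypothetical helper: pairs the i-th skeleton row (meta fields) with the
    // i-th data row and emits one combined row, meta columns first.
    public static List<Map<String, Object>> stitch(Iterator<Map<String, Object>> skeletonRows,
                                                   Iterator<Map<String, Object>> dataRows) {
        List<Map<String, Object>> stitched = new ArrayList<>();
        while (skeletonRows.hasNext() && dataRows.hasNext()) {
            Map<String, Object> row = new LinkedHashMap<>(skeletonRows.next());
            row.putAll(dataRows.next());
            stitched.add(row);
        }
        // Assumption: both files are row-aligned, so leftovers indicate a mismatch.
        if (skeletonRows.hasNext() || dataRows.hasNext()) {
            throw new IllegalStateException("Skeleton and data files have different row counts");
        }
        return stitched;
    }

    public static void main(String[] args) {
        // "id" is a made-up data column for illustration.
        List<Map<String, Object>> skeleton = List.of(Map.<String, Object>of("_hoodie_record_key", "k1"));
        List<Map<String, Object>> data = List.of(Map.<String, Object>of("id", 1));
        System.out.println(stitch(skeleton.iterator(), data.iterator()));
    }
}
```

Without the stitching step, a reader over the skeleton file alone would only see the meta fields, which matches the symptom described above (data columns read as null).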

Change Logs

Clustering on a bootstrap table (`METADATA_ONLY` bootstrap mode) with row writer disabled did not show correct results. Only meta-fields were populated, while data columns were null. This PR fixes the bug. It adds a separate `HoodieBootstrapFileReader` that stitches the meta columns with the data columns.

Before this fix, snapshot query after clustering on the bootstrap table (check data columns):

After this fix:

Impact

A bug fix for bootstrap tables.

Risk level (write none, low medium or high below)

low

`HoodieBootstrapFileReader` is used only when the base file being clustered has a bootstrap path.

Documentation Update

Describe any necessary documentation update if there is any new feature, config, or user-facing change
