Description

Describe the bug

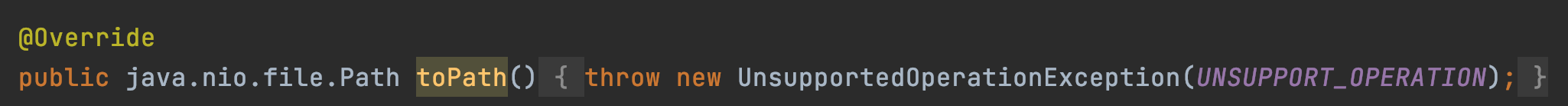

IoTDB cannot write data to HDFS because org.apache.iotdb.hadoop.fileSystem.HDFSFile does not implement the toPath() method; calling it throws UnsupportedOperationException.

2021-03-25 16:12:59,934 [pool-1-IoTDB-Flush-ServerServiceImpl-thread-1] ERROR org.apache.iotdb.db.engine.storagegroup.TsFileProcessor:873 - root.ln/0 meet error when flush FileMetadata to hdfs://localhost:9000/data/data/sequence/root.ln/0/0/1616659978569-1-0.tsfile, change system mode to read-only

java.lang.UnsupportedOperationException: Unsupported operation.

at org.apache.iotdb.hadoop.fileSystem.HDFSFile.toPath(HDFSFile.java:433)

at org.apache.iotdb.db.engine.storagegroup.TsFileResource.serialize(TsFileResource.java:257)

at org.apache.iotdb.db.engine.storagegroup.TsFileProcessor.endFile(TsFileProcessor.java:939)

at org.apache.iotdb.db.engine.storagegroup.TsFileProcessor.flushOneMemTable(TsFileProcessor.java:868)

at org.apache.iotdb.db.engine.flush.FlushManager$FlushThread.runMayThrow(FlushManager.java:102)

at org.apache.iotdb.db.concurrent.WrappedRunnable.run(WrappedRunnable.java:32)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

2021-03-25 16:12:59,949 [pool-1-IoTDB-Flush-ServerServiceImpl-thread-1] ERROR org.apache.iotdb.db.engine.storagegroup.TsFileProcessor:888 - root.ln/0: 1616659978569-1-0.tsfile marking or ending file meet error

java.lang.UnsupportedOperationException: Unsupported operation.

at org.apache.iotdb.hadoop.fileSystem.HDFSFile.toPath(HDFSFile.java:433)

at org.apache.iotdb.db.engine.storagegroup.TsFileResource.serialize(TsFileResource.java:257)

at org.apache.iotdb.db.engine.storagegroup.TsFileProcessor.endFile(TsFileProcessor.java:939)

at org.apache.iotdb.db.engine.storagegroup.TsFileProcessor.flushOneMemTable(TsFileProcessor.java:868)

at org.apache.iotdb.db.engine.flush.FlushManager$FlushThread.runMayThrow(FlushManager.java:102)

at org.apache.iotdb.db.concurrent.WrappedRunnable.run(WrappedRunnable.java:32)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

To Reproduce

Steps to reproduce the behavior:

- use HDFS to store data

- write data

set storage group to root.ln

create timeseries root.ln.wf01.wt01.status with datatype=BOOLEAN,encoding=PLAIN

insert into root.ln.wf01.wt01(timestamp,status) values(1509465600000,true)

flush

- see the log_error.log

Expected behavior

The data is written to HDFS successfully.

Screenshots

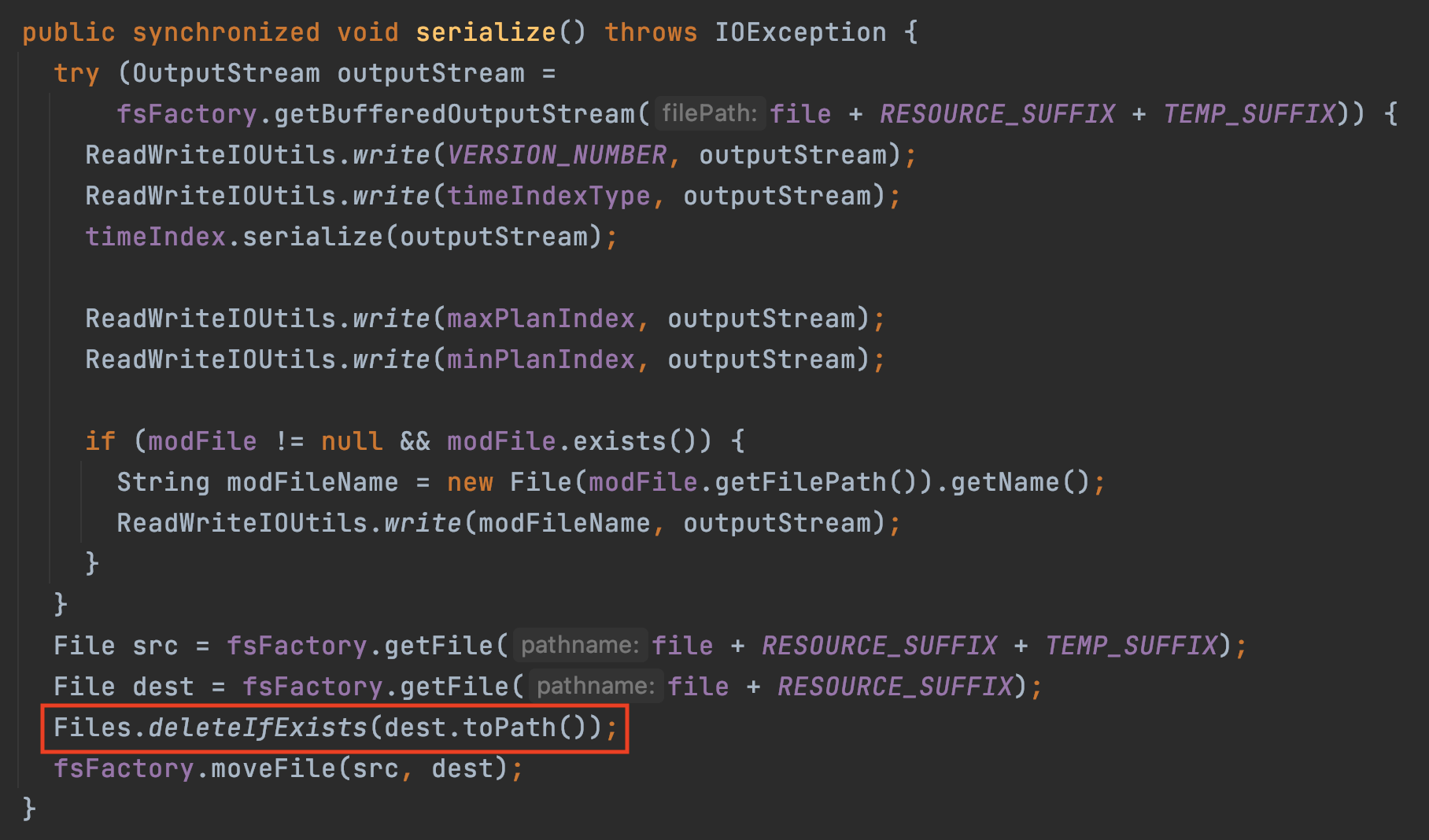

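Code that uses the java.nio package, such as org.apache.iotdb.db.engine.storagegroup.TsFileResource.serialize(), fails on Hadoop. The failure is caused by HDFSFile.toPath() throwing UnsupportedOperationException, as shown in the stack trace above.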
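The failure mode can be sketched in isolation. The example below is an illustration only: `RemoteFile` is a hypothetical stand-in for HDFSFile, and `serialize` is a simplified guess at the kind of java.nio call involved, not the actual TsFileResource code. It shows that any code path going through `File.toPath()` works for a local file but breaks for a `File` subclass that does not support it.

```java
import java.io.File;
import java.io.IOException;
import java.io.OutputStream;
import java.nio.file.Files;
import java.nio.file.Path;

public class ToPathFailureSketch {

    /** Hypothetical stand-in for HDFSFile: a File subclass without java.nio support. */
    static class RemoteFile extends File {
        RemoteFile(String pathname) {
            super(pathname);
        }

        @Override
        public Path toPath() {
            throw new UnsupportedOperationException("Unsupported operation.");
        }
    }

    /** Writes bytes through java.nio, so it depends on file.toPath(). */
    static void serialize(File file, byte[] data) throws IOException {
        try (OutputStream out = Files.newOutputStream(file.toPath())) {
            out.write(data);
        }
    }

    /** Returns true if serializing to the given file fails inside toPath(). */
    static boolean failsOnToPath(File file) throws IOException {
        try {
            serialize(file, new byte[] {1, 2, 3});
            return false;
        } catch (UnsupportedOperationException e) {
            return true;
        }
    }

    public static void main(String[] args) throws IOException {
        File local = File.createTempFile("sketch", ".resource");
        local.deleteOnExit();
        System.out.println("local file fails:  " + failsOnToPath(local));
        System.out.println("remote file fails: "
                + failsOnToPath(new RemoteFile("hdfs://localhost:9000/data/x.resource")));
    }
}
```
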

Possible solution

Implement the java.nio.file Path, FileSystem, and FileSystemProvider types for HDFS, as https://github.com/damiencarol/jsr203-hadoop does, or simply reuse that library.
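To illustrate why a java.nio provider would help, here is a rough sketch that uses the JDK's built-in zip file system provider as a stand-in for an HDFS provider. No HDFS or jsr203-hadoop API is used or assumed here; the class and method names are made up for the example. The point is only that code written against `Path` and `Files` runs unchanged on any file system that supplies its own `FileSystemProvider`.

```java
import java.io.IOException;
import java.net.URI;
import java.nio.charset.StandardCharsets;
import java.nio.file.FileSystem;
import java.nio.file.FileSystems;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Collections;

public class ProviderSketch {

    /** Code written against Path only; it never asks which provider backs the path. */
    static void serialize(Path target, byte[] data) throws IOException {
        Files.write(target, data);
    }

    /** Writes into a zip archive through the zip provider and reads the bytes back. */
    static String roundTrip(String content) throws IOException {
        Path archive = Files.createTempFile("sketch", ".zip");
        Files.delete(archive); // let the zip provider create the archive itself
        URI uri = URI.create("jar:" + archive.toUri());
        try (FileSystem fs = FileSystems.newFileSystem(
                uri, Collections.singletonMap("create", "true"))) {
            // This Path belongs to the zip provider, not the default file system.
            Path inside = fs.getPath("/0.resource");
            serialize(inside, content.getBytes(StandardCharsets.UTF_8));
            return new String(Files.readAllBytes(inside), StandardCharsets.UTF_8);
        } finally {
            Files.deleteIfExists(archive);
        }
    }

    public static void main(String[] args) throws IOException {
        System.out.println(roundTrip("resource bytes"));
    }
}
```

With an HDFS provider in place, HDFSFile.toPath() could presumably return a Path from that provider instead of throwing, and callers such as serialize() would not need to change.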