SOLR-15705: A deleteById request without _route_ param for compositeid router could be sent to all shard leaders#288

Conversation

solr/core/src/test/org/apache/solr/cloud/FullSolrCloudDistribCmdsTest.java

Show resolved

Hide resolved

solr/core/src/java/org/apache/solr/update/processor/DistributedZkUpdateProcessor.java

Outdated

Show resolved

Hide resolved

|

I revised the logic to call DeleteByQuery earlier. Now instead of calling doDeleteByQuery frrom doDistribDeleteById, it is called from doDeleteById. I tried revising doDeleteById to forward the request to all shard leaders if the required route is missing from the request, but this did not work. At one time I believe a deleteById would work even if the route was missing if it happened to land on the right shard, but it seems that this behavior is changed. |

| if (router instanceof CompositeIdRouter && routeField != null) { | ||

| DistribPhase phase = DistribPhase.parseParam(req.getParams().get(DISTRIB_UPDATE_PARAM)); | ||

| if (phase == DistribPhase.NONE) { | ||

| log.debug("Using compositeId router and deleteById command is with missing route value, distributing to all shard leaders"); |

There was a problem hiding this comment.

@makosten small nit, should this debug line be "command is missing" instead of "is with" ;-)

|

Sorry, I find having But firstly, I'm confused about how this PR relates to the JIRA issue. The JIRA issue clearly states this issue occurs when the implicit router is being used. Yet the test here and the fix use/check-for the CompositeIdRouter. Am I missing something? |

|

As far as implicit vs. composite router, you aren't missing anything. I was trying to avoid creating a duplicate JIRA and messed up by picking 6910. The patch only applies to the composite id router. Maybe the best way to unravel this is to create a new JIRA and reference 6910? |

|

Yes, please create a separate JIRA as it's only vaguely related to SOLR-6910. |

|

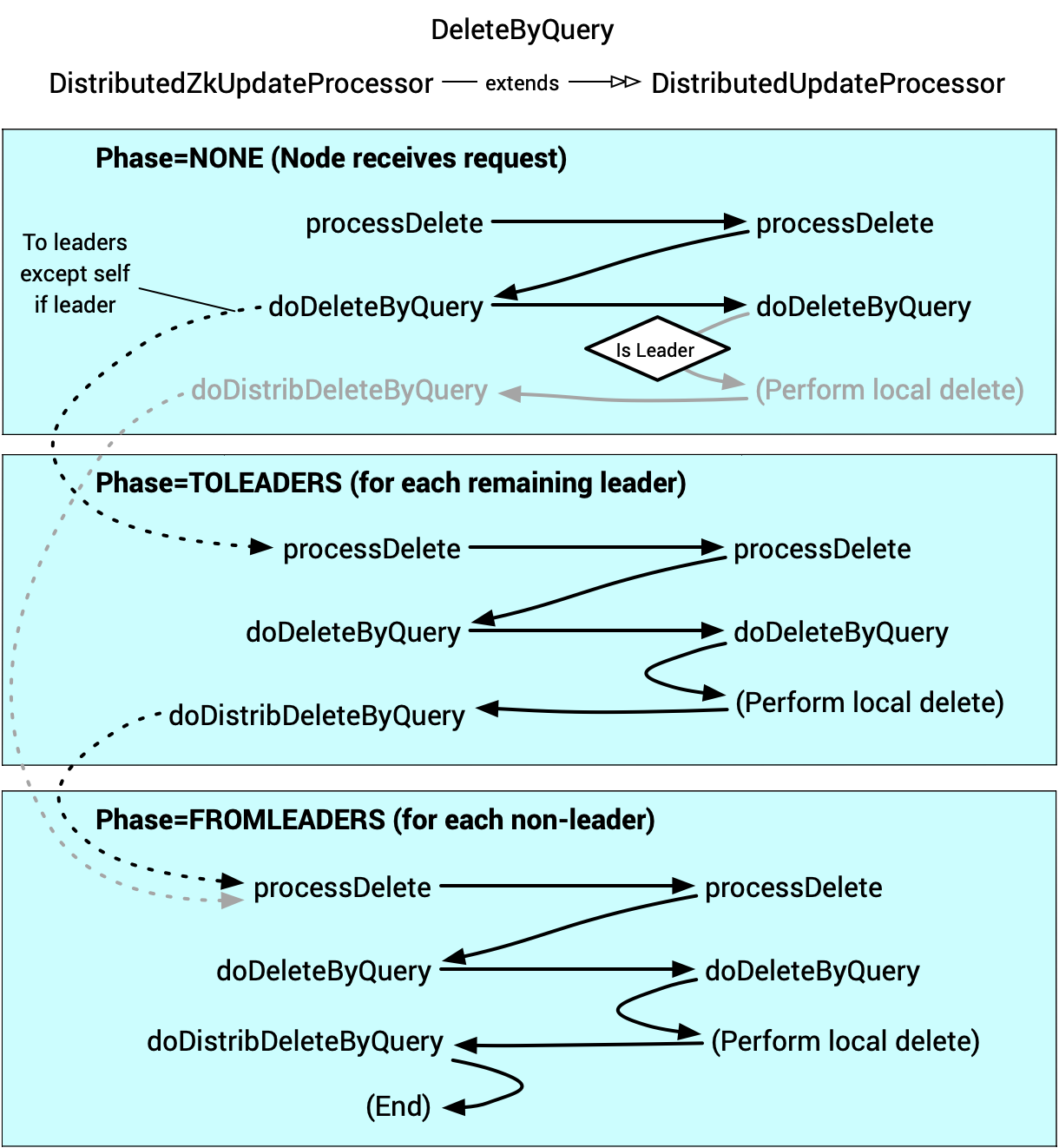

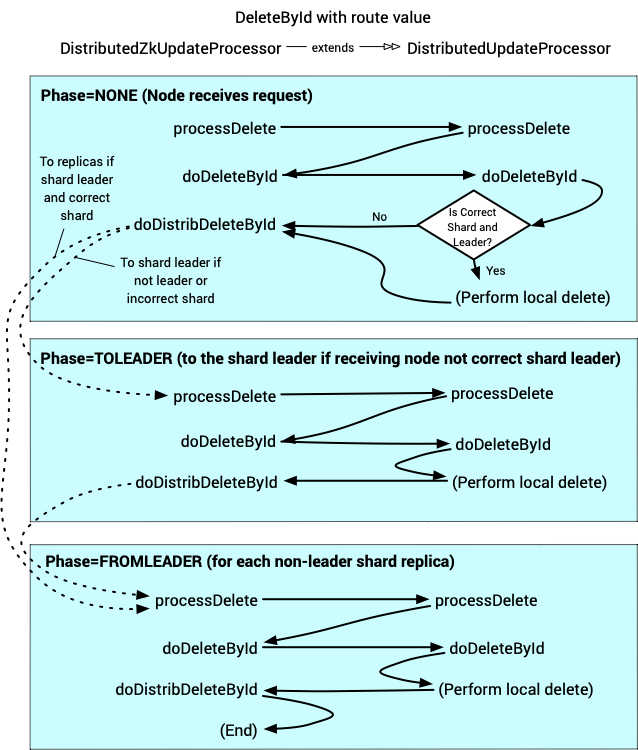

Here is a process flow of a DeleteByQuery. The doDistribDeleteByQuery is where a leader forwards the command to its replicas. The doDeleteByQuery in DistributedZkUpdateProcessor is where the command is forwarded to the shard leaders, so I do think that my logic of forwarding the request to doDeleteByQuery is in the right location, although the whole approach is not optimal. I did try modeling how DeleteByQuery works by forwarding the request to shard leaders, but found that the local deleteById, actually deleting the document from the index, does not happen if the route field value is missing. |

|

@cpoerschke any chance you'd be interested in reviewing this fix as well? Looking to get some more experienced folks to confirm this patch! |

If you do that and the test fails (presumably), I could help debug to see why. In terms of reviewers, I think @hossman would be a good choice based on him touching the same test very recently in SOLR-8889 to fix similar-ish bugs. |

|

Thanks @makosten for the diagrams to illustrate process flow, and @epugh for tagging me, this is an intriguing area of the code!

That seems intuitively right. From what I've learnt so far from code reading, the issue is that https://github.com/apache/lucene-solr/blob/releases/lucene-solr/8.10.1/solr/core/src/java/org/apache/solr/update/processor/DistributedZkUpdateProcessor.java#L609 picks a shard even if it does not have sufficient information to do so i.e. in the absence of the route it picks based on id which statistically will only sometimes pick the right shard and distribution to all shard leaders is necessary to be sure it reaches the right shard.

I'd be interested too in looking more into this. |

…e-by-id request. Refactoring.

|

@cpoerschke You nailed it! The CompositeId Router has some inconsistent behavior. It's strict on the router field when adding but if the route is missing when deleting by id, it returns the slice based on the unique id hash. I updated the CompositeIdRouter to return null for getTargetSlice for this condition, in which case it is run on the current slice, and then flag the request to be broadcast to the other shard leaders. This also affected the Implicit Router as it will now broadcast the delete-by-id request if the route is missing, so I updated a failing test. I'd like some feedback on this, as maybe the old behavior is more desirable for the Implicit router. |

| @@ -316,6 +317,41 @@ public void processDelete(DeleteUpdateCommand cmd) throws IOException { | |||

| protected void doDeleteById(DeleteUpdateCommand cmd) throws IOException { | |||

| setupRequest(cmd); | |||

There was a problem hiding this comment.

THREAD_SAFETY_VIOLATION: Unprotected write. Non-private method DistributedZkUpdateProcessor.doDeleteById(...) indirectly writes to field noggit.JSONParser.devNull.buf outside of synchronization.

Reporting because another access to the same memory occurs on a background thread, although this access may not.

(at-me in a reply with help or ignore)

There was a problem hiding this comment.

I’m LiftBot and I automatically analyze new code in code review, and comment when I find potential bugs. I also accept comments as commands. Just @sonatype-lift followed by the command: ignore to mark as false positive, unignore to undo, and help to see this message. Click here to add LiftBot to another repo.

From the "... does not automatically route ..." mention at https://solr.apache.org/guide/8_10/collection-management.html#create-parameters documentation I think the existing behaviour for the implicit router makes sense i.e. routing of both indexing and delete-by-id requests is the caller's responsibility. (If we wanted to change that behaviour, hypothetically, then doing so separately (e.g. as a follow-up issue and pull request) might be clearest and non-backwards compatibility would need to be considered and communicated e.g. users currently doing round-robins on delete-by-id external to Solr can cease doing that and users which use the implicit routing to (temporarily?) have the same document id on multiple shards would benefit from a way to opt-out of the delete-by-id broadcasting.) |

cpoerschke

left a comment

cpoerschke

left a comment

There was a problem hiding this comment.

Hi @makosten! I haven't yet looked at the test changes but the code changes conceptually make sense to me, comments inline are implementation detail related. What do you think?

|

|

||

| if (broadcastDeleteById && DistribPhase.parseParam(req.getParams().get(DISTRIB_UPDATE_PARAM)) == DistribPhase.NONE ) { | ||

|

|

||

| log.debug("The deleteById command is missing the required route, broadcasting to leaders of other shards"); |

There was a problem hiding this comment.

1/4 - minor: maybe include cmd.getId() in the debug logging here

There was a problem hiding this comment.

Good idea, I'll make this change.

| DocCollection coll = clusterState.getCollection(collection); | ||

| Collection<Slice> slices = coll.getRouter().getSearchSlices(route, params, coll); | ||

|

|

||

| // if just one slice, we can skip this |

There was a problem hiding this comment.

2/4 - if the "just one slice" logic happened earlier so that broadcastDeleteById is only true if there's more than one slice this:

- would remove the need for this

ifhere - could facilitate code sharing with

doDeleteByQuery

There was a problem hiding this comment.

Another good idea. I moved the check to setupRequest(). I believe the most efficient check that return the same value is coll.getActiveSlicesMap().size().

| outParams.remove("commit"); // this will be distributed from the local commit | ||

|

|

||

| cmdDistrib.distribDelete(cmd, leaders, outParams, false, rollupReplicationTracker, null); | ||

| boolean leaderForAnyShard = forwardDelete(coll, cmd); |

There was a problem hiding this comment.

3/4 - added a commit to the pull request branch -- feel free to revert or amend -- that factors out forwardDelete method from doDeleteByQuery, for potential code sharing with doDeleteById

There was a problem hiding this comment.

This is great! I'm going to see if forwardDelete can be shared.

There was a problem hiding this comment.

That worked quite nicely!

|

|

||

| // check if client has requested minimum replication factor information. will set replicationTracker to null if | ||

| // we aren't the leader or subShardLeader | ||

| checkReplicationTracker(cmd); |

There was a problem hiding this comment.

4/4 - doDeleteByQuery does rollup replication tracker logic before the forward-delete logic, doDeleteById here does check replication tracker logic after the forward-logic -- i've not yet considered this difference in detail, just noticed it whilst factoring out the forward-delete logic

There was a problem hiding this comment.

I added creating the rollup replication tracker before forwarding the delete-by-id to the other shard leaders and saw no behavior difference. The handleReplicationFactor method didn't receive responses from other shard leaders.

solr/core/src/java/org/apache/solr/update/processor/DistributedZkUpdateProcessor.java

Show resolved

Hide resolved

|

On the unintentional impact to ImplicitIdRouter, I was thinking adding a property to the router as whether to do the broadcast to avoid isinstanceof CompositeIdRouter. But, there are other places in DistributedZkUpdateProcessor that do exactly that. |

|

Thanks @cpoerschke for digging into this PR ;-) |

|

Added two commits with small tweaks, yet to complete running full test suite locally but assuming it passes LGTM here. @makosten - would you like to go add a |

https://issues.apache.org/jira/browse/SOLR-15705

Description

This is an implementation of distributed delete-by id-when using the CompositeId router with a router field defined and the field value is missing. The current behavior in this circumstance is to ignore delete by id request.

Solution

This first approach detects this condition and forwards the request to the doDeleteByQuery method. This works, but it would be better to extract the shared code from doDeleteByQuery. I looked at this but need some advice on how to proceed.

Tests

I've extended an existing test to verify the new behavior.

Checklist

Please review the following and check all that apply:

mainbranch../gradlew check.