DNN-39811 Purge schedule history much faster and in batches #3797

donker merged 3 commits into dnnsoftware:develop

Conversation

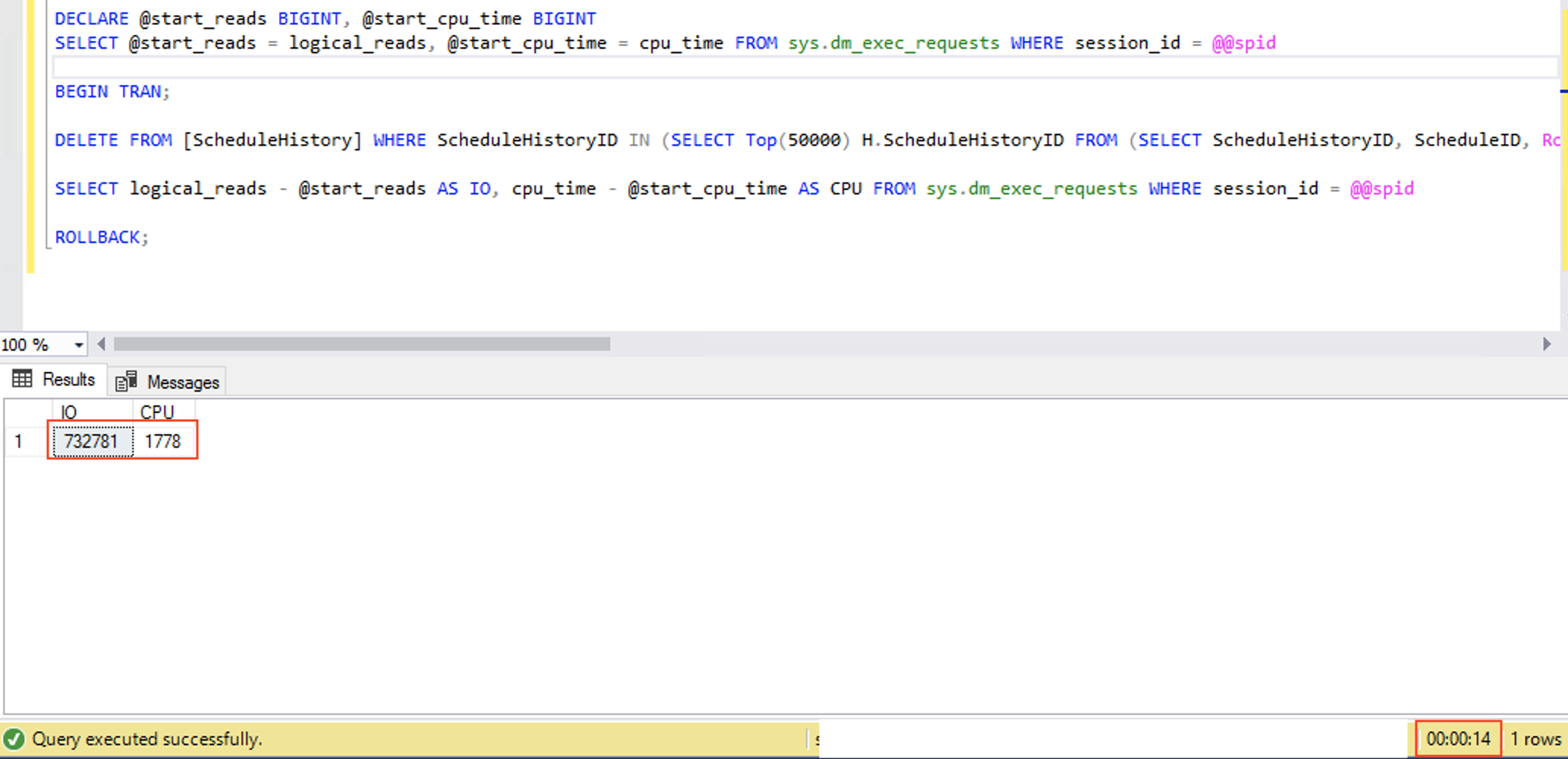

Using temp tables and table expressions is usually not very fast.

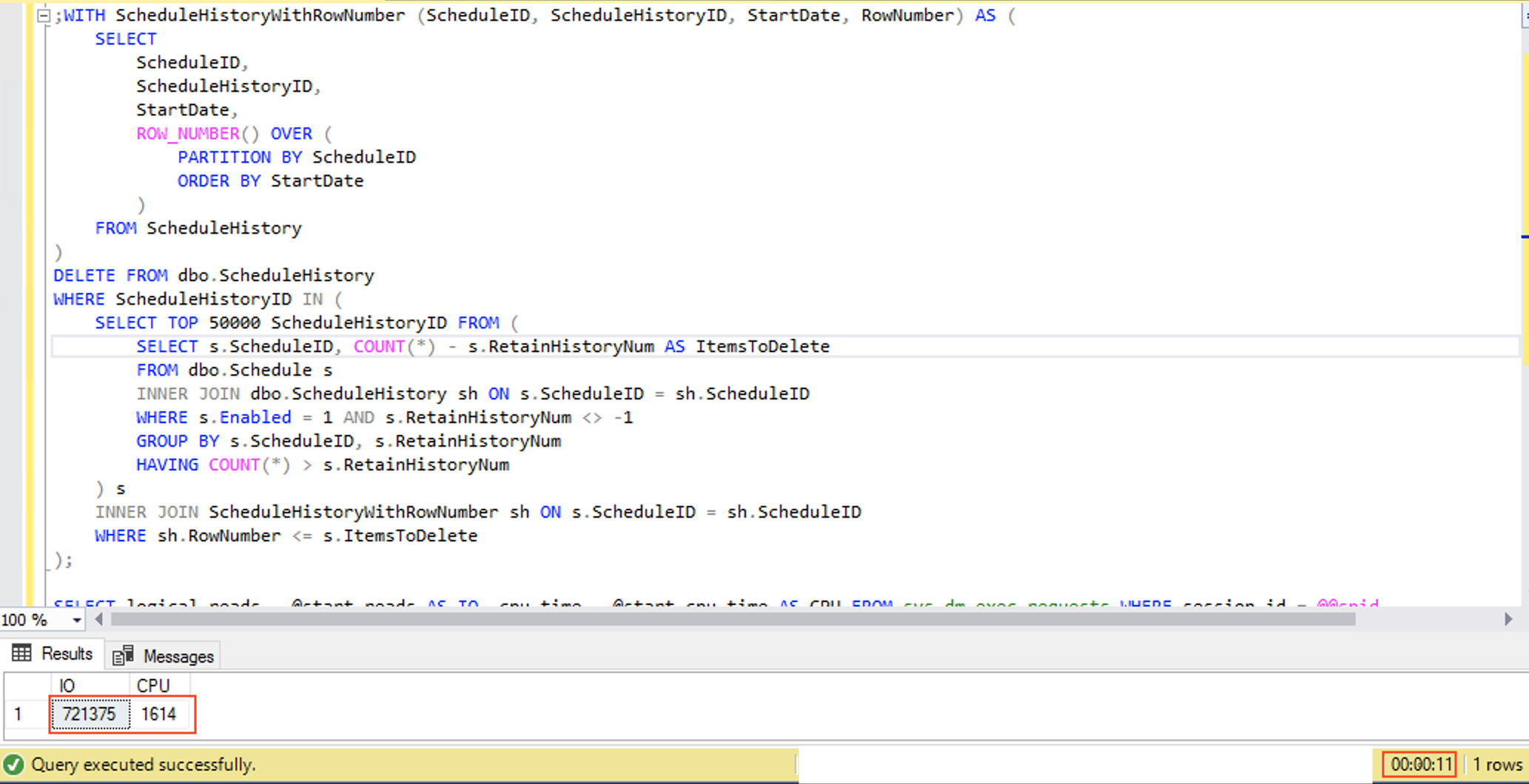

@sleupold, below you can find the results of the comparison. I changed my query to select only the top 50000 items and delete them in one operation. Note that the PR version of the SP deletes all obsolete items (not just the top 50000), and it deletes them in batches to reduce lock contention (this increases the SP's run time, but that is not a problem in this case). Your version is OK, but it does not delete all obsolete items.

@eugene-sea,

@sleupold, yes, I ran each statement more than 5 times before measuring. On that production instance, more than 5000 history items are created per hour, which is why obsolete items accumulate. Please see #3796. You could create a PR with your version if you wish, but the current version of the SP in the platform is unacceptable and should be updated. With a low number of items, deleting…

Just an update on this item: I'm testing with some large installations that I have, to validate performance.

@mitchelsellers |

I'd add a host setting for the batch size, with a default of 5000. This should be small enough for DNN on slow machines, and it could be increased if necessary for bigger installations on powerful servers.

Just to make 100% sure it does not fail after a timeout, we added a check for the existence of the temp table so that it is dropped if present. This was discussed in the approvers' meeting. It can be improved further, as discussed, but this PR is still an improvement, so we will include it in 9.6.2.
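
The existence check amounts to "drop the staging table if a previous, interrupted run left it behind, then recreate it". Below is a minimal sketch of that defensive pattern in Python with SQLite's `DROP TABLE IF EXISTS`; it is not the PR's T-SQL. In T-SQL the usual equivalents are `IF OBJECT_ID('tempdb..#Name') IS NOT NULL DROP TABLE #Name` or, on SQL Server 2016 and later, `DROP TABLE IF EXISTS #Name`. The table name `Staging` and the `restage` helper are hypothetical and are not taken from the PR.

```python
import sqlite3
from typing import Iterable


def restage(conn: sqlite3.Connection, ids: Iterable[int]) -> int:
    """Hypothetical helper: recreate the staging table and fill it, even if a copy exists."""
    # Without this guard, CREATE TEMP TABLE raises sqlite3.OperationalError
    # when an earlier run on the same connection stopped before dropping the table.
    conn.execute("DROP TABLE IF EXISTS temp.Staging")
    conn.execute("CREATE TEMP TABLE Staging (ID INTEGER PRIMARY KEY)")
    conn.executemany("INSERT INTO temp.Staging (ID) VALUES (?)", ((i,) for i in ids))
    conn.commit()
    # Return how many IDs are now staged.
    return conn.execute("SELECT COUNT(*) FROM temp.Staging").fetchone()[0]
```

Because the guard runs first, a rerun after a timeout simply replaces whatever the interrupted run left in the table instead of failing.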

Fixes #3796

Summary

The subquery of `DELETE` is refactored as follows: we get the schedules that have too many schedule history items, together with the number of items to delete for each, and then, for each schedule, we get that number of its first history items ordered by `StartDate`. This resembles the original logic but has a much more efficient execution plan. Note that we delete all purgeable schedule history items instead of only the top 5000. Deletes are performed in batches, because the number of items to delete may be large and we want to reduce lock contention. On a clone of the production DB, the new version of the SP is more than 300x faster.
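
The three steps of the summary (find schedules with too many history items, stage that many of each schedule's first items by `StartDate`, then delete everything staged in batches) can be sketched as follows. This is a minimal illustration in Python against SQLite, not the PR's stored procedure. The simplified schema (`Schedule.RetainHistoryNum` as a non-negative retention count, `ScheduleHistory.ScheduleHistoryID`), the `purge_schedule_history` function and its `batch_size` parameter (defaulting to 5000, as the host-setting suggestion proposes) are all assumptions made for illustration.

```python
import sqlite3

# Hypothetical, simplified schema assumed by this sketch (not the real DNN tables):
#   Schedule(ScheduleID, RetainHistoryNum)
#   ScheduleHistory(ScheduleHistoryID, ScheduleID, StartDate)

DELETE_HISTORY_BATCH = """
    DELETE FROM ScheduleHistory
    WHERE ScheduleHistoryID IN (
        SELECT ScheduleHistoryID FROM temp.ToPurge
        ORDER BY ScheduleHistoryID LIMIT ?)
"""
DELETE_STAGED_BATCH = """
    DELETE FROM temp.ToPurge
    WHERE ScheduleHistoryID IN (
        SELECT ScheduleHistoryID FROM temp.ToPurge
        ORDER BY ScheduleHistoryID LIMIT ?)
"""


def purge_schedule_history(conn: sqlite3.Connection, batch_size: int = 5000) -> int:
    """Hypothetical purge: delete every history row beyond each schedule's limit.

    Returns the number of ScheduleHistory rows deleted.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be positive")
    cur = conn.cursor()
    # Drop a staging table left behind by an interrupted run, then recreate it.
    cur.execute("DROP TABLE IF EXISTS temp.ToPurge")
    cur.execute("CREATE TEMP TABLE ToPurge (ScheduleHistoryID INTEGER PRIMARY KEY)")

    # Step 1: schedules with too many history rows, and how many to delete from each.
    excess = cur.execute(
        """
        SELECT h.ScheduleID, COUNT(*) - s.RetainHistoryNum
        FROM ScheduleHistory AS h
        JOIN Schedule AS s ON s.ScheduleID = h.ScheduleID
        GROUP BY h.ScheduleID, s.RetainHistoryNum
        HAVING COUNT(*) > s.RetainHistoryNum
        """
    ).fetchall()

    # Step 2: for each such schedule, stage that many of its first rows by StartDate.
    for schedule_id, to_delete in excess:
        cur.execute(
            """
            INSERT INTO temp.ToPurge (ScheduleHistoryID)
            SELECT ScheduleHistoryID FROM ScheduleHistory
            WHERE ScheduleID = ?
            ORDER BY StartDate, ScheduleHistoryID
            LIMIT ?
            """,
            (schedule_id, to_delete),
        )

    # Step 3: delete ALL staged rows, batch_size at a time, committing after each
    # batch so every write transaction (and the lock it holds) stays short.
    total = 0
    while True:
        cur.execute(DELETE_HISTORY_BATCH, (batch_size,))
        total += cur.rowcount
        cur.execute(DELETE_STAGED_BATCH, (batch_size,))
        staged = cur.rowcount
        conn.commit()
        if staged < batch_size:  # the last, partial (or empty) batch is done
            break

    cur.execute("DROP TABLE temp.ToPurge")
    return total
```

Staging the IDs first means the per-schedule selection runs once; each batch then only looks up rows by primary key, so the loop deletes every purgeable item rather than a fixed top N while keeping individual transactions small.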