
Kandinsky_v22_yiyi #3936


Merged


53 commits


c40e286

Kandinsky2_2

cene555 c082fdd

fix init kandinsky2_2

cene555 392cff0

kandinsky2_2 fix inpainting

cene555 5296322

rename pipelines: remove decoder + 2_2 -> V22

8e6134d

Update scheduling_unclip.py

cene555 5834a82

remove text_encoder and tokenizer arguments from doc string

62af41a

add test for text2img

0603e5a

add tests for text2img & img2img

64b95f4

fix

80df9c0

add test for inpaint

82d76df

add prior tests

f72b53d

style

8d05dbf

copies

bbe07ba

Merge remote-tracking branch 'ru-diffusers/main' into kandinsky22-yiyi

374f237

add controlnet test

365fac5

style

cec9160

add a test for controlnet_img2img

a27b520

update prior_emb2emb api to accept image_embedding or image

b4189a1

add a test for prior_emb2emb

4a5c6ac

style

8fc24e6

remove try except

1480cdc

example

ce7ea47

fix

935614d

add doc string examples to all kandinsky pipelines

4c8c3ca

style

e737939

update doc

883a852

style

dda70da

add a top about 2.2

03cf726

Apply suggestions from code review

yiyixuxu 73d0f0b

vae -> movq

19e9574

vae -> movq

ce3f7c2

style

552ce7b

fix the #copied from

69df159

remove decoder from file name

30c0c9f

update doc: add a section for kandinsky 2.2

307de02

fix

80d85d5

fix-copies

453fed2

add coped from

6959e60

add copies from for prior

7bfe3e7

add copies from for prior emb2emb

81c5c77

copy from for img2img

39a49db

copied from for inpaint

9586192

more copied from

16440d8

more copies from

145ef68

more copies

ff1a204

remove the yiyi comments

060488e

Apply suggestions from code review

pcuenca fb3d0bb

Self-contained example, pipeline order

pcuenca 6d5e70d

Import prior output instead of redefining.

pcuenca 6a06ed4

Style

pcuenca 00c4981

Make VQModel compatible with model offload.

pcuenca 1c8fdd9

Merge remote-tracking branch 'origin/main' into kandinsky22-yiyi

pcuenca 63e7795

Fix copies

pcuenca

| Original file line number | Diff line number | Diff line change |

|---|---|---|

|

|

@@ -11,19 +11,12 @@ specific language governing permissions and limitations under the License. | |

|

|

||

| ## Overview | ||

|

|

||

| Kandinsky 2.1 inherits best practices from [DALL-E 2](https://arxiv.org/abs/2204.06125) and [Latent Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/latent_diffusion), while introducing some new ideas. | ||

| Kandinsky inherits best practices from [DALL-E 2](https://huggingface.co/papers/2204.06125) and [Latent Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/latent_diffusion), while introducing some new ideas. | ||

|

|

||

| It uses [CLIP](https://huggingface.co/docs/transformers/model_doc/clip) for encoding images and text, and a diffusion image prior (mapping) between latent spaces of CLIP modalities. This approach enhances the visual performance of the model and unveils new horizons in blending images and text-guided image manipulation. | ||

|

|

||

| The Kandinsky model is created by [Arseniy Shakhmatov](https://github.com/cene555), [Anton Razzhigaev](https://github.com/razzant), [Aleksandr Nikolich](https://github.com/AlexWortega), [Igor Pavlov](https://github.com/boomb0om), [Andrey Kuznetsov](https://github.com/kuznetsoffandrey) and [Denis Dimitrov](https://github.com/denndimitrov) and the original codebase can be found [here](https://github.com/ai-forever/Kandinsky-2) | ||

| The Kandinsky model is created by [Arseniy Shakhmatov](https://github.com/cene555), [Anton Razzhigaev](https://github.com/razzant), [Aleksandr Nikolich](https://github.com/AlexWortega), [Igor Pavlov](https://github.com/boomb0om), [Andrey Kuznetsov](https://github.com/kuznetsoffandrey) and [Denis Dimitrov](https://github.com/denndimitrov). The original codebase can be found [here](https://github.com/ai-forever/Kandinsky-2). | ||

|

|

||

| ## Available Pipelines: | ||

|

|

||

| | Pipeline | Tasks | | ||

| |---|---| | ||

| | [pipeline_kandinsky.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky/pipeline_kandinsky.py) | *Text-to-Image Generation* | | ||

| | [pipeline_kandinsky_inpaint.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky/pipeline_kandinsky_inpaint.py) | *Image-Guided Image Generation* | | ||

| | [pipeline_kandinsky_img2img.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky/pipeline_kandinsky_img2img.py) | *Image-Guided Image Generation* | | ||

|

|

||

| ## Usage example | ||

|

|

||

|

|

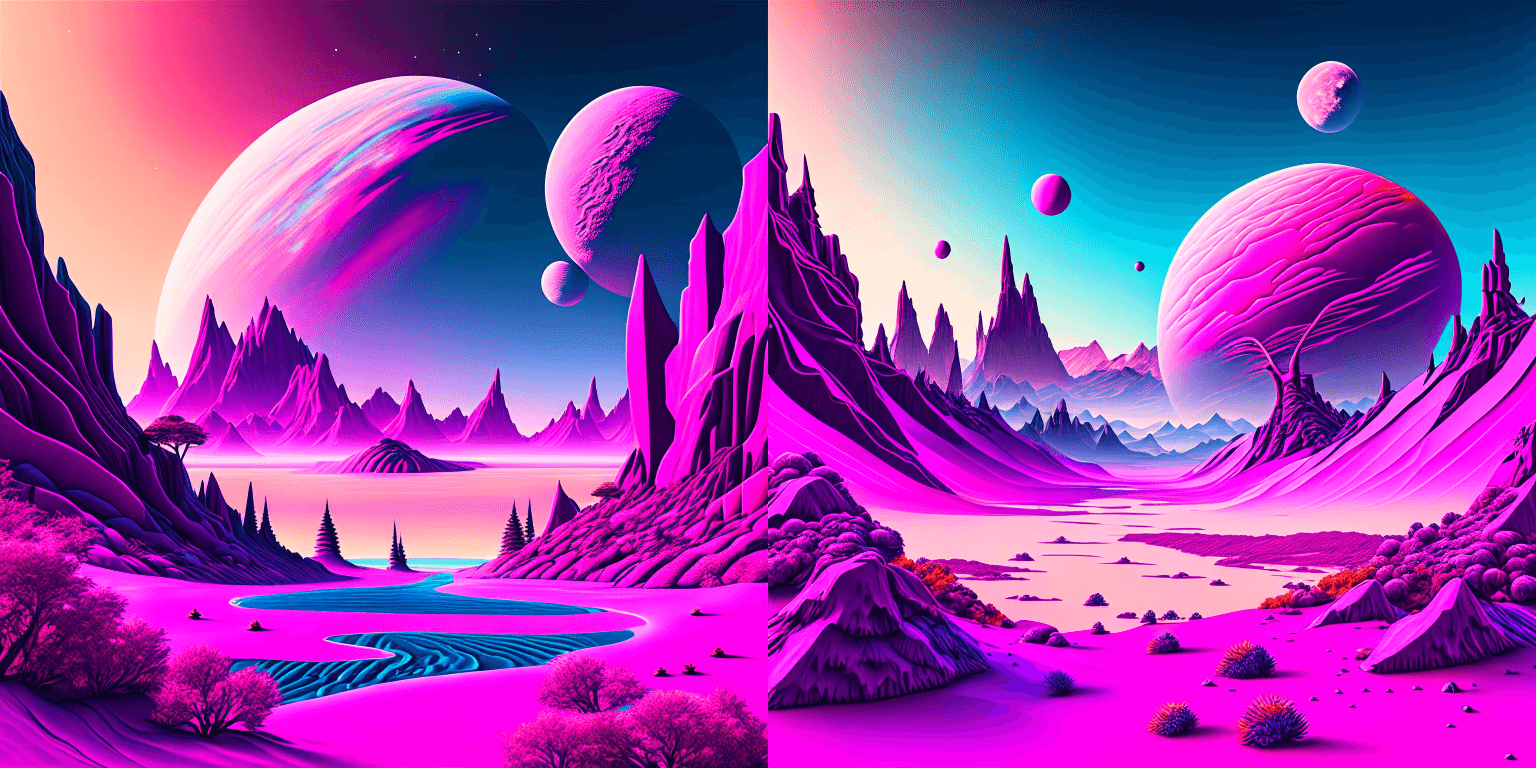

@@ -135,6 +128,7 @@ prompt = "birds eye view of a quilted paper style alien planet landscape, vibran | |

|  | ||

|

|

||

|

|

||

|

|

||

| ### Text Guided Image-to-Image Generation | ||

|

|

||

| The same Kandinsky model weights can be used for text-guided image-to-image translation. In this case, just make sure to load the weights using the [`KandinskyImg2ImgPipeline`] pipeline. | ||

|

|

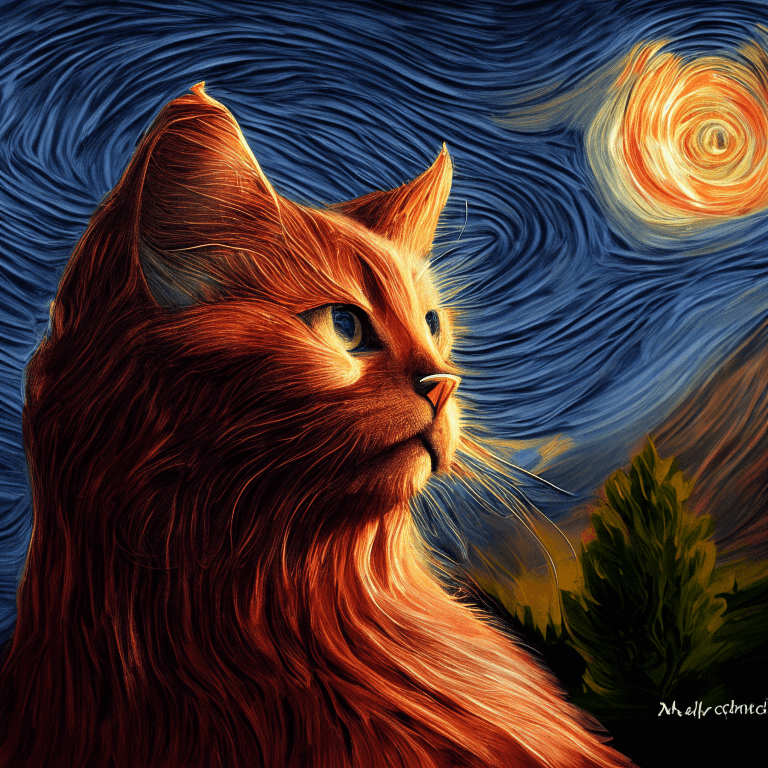

@@ -283,6 +277,207 @@ image.save("starry_cat.png") | |

|  | ||

|

|

||

|

|

||

| ### Text-to-Image Generation with ControlNet Conditioning | ||

|

|

||

| In the following, we give a simple example of how to use [`KandinskyV22ControlnetPipeline`] to add control to the text-to-image generation with a depth image. | ||

|

|

||

| First, let's take an image and extract its depth map. | ||

|

|

||

| ```python | ||

| from diffusers.utils import load_image | ||

|

|

||

| img = load_image( | ||

| "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main/kandinskyv22/cat.png" | ||

| ).resize((768, 768)) | ||

| ``` | ||

|  | ||

|

|

||

| We can use the `depth-estimation` pipeline from transformers to process the image and retrieve its depth map. | ||

|

|

||

| ```python | ||

| import torch | ||

| import numpy as np | ||

|

|

||

| from transformers import pipeline | ||

| from diffusers.utils import load_image | ||

|

|

||

|

|

||

| def make_hint(image, depth_estimator): | ||


|

||

| image = depth_estimator(image)["depth"] | ||

| image = np.array(image) | ||

| image = image[:, :, None] | ||

| image = np.concatenate([image, image, image], axis=2) | ||

| detected_map = torch.from_numpy(image).float() / 255.0 | ||

| hint = detected_map.permute(2, 0, 1) | ||

| return hint | ||

|

|

||

|

|

||

| depth_estimator = pipeline("depth-estimation") | ||

| hint = make_hint(img, depth_estimator).unsqueeze(0).half().to("cuda") | ||

| ``` | ||
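The shape bookkeeping in `make_hint` is easy to lose track of, so here is a minimal NumPy-only sketch of the same steps on a synthetic depth map. The array `fake_depth` and the function name `depth_to_hint_array` are hypothetical stand-ins (the documented code uses the output of the `depth-estimation` pipeline and `torch`), and `np.transpose` plays the role of `permute`.

```python
import numpy as np


def depth_to_hint_array(depth: np.ndarray) -> np.ndarray:
    """Mirror the make_hint steps with NumPy only (illustrative stand-in)."""
    depth = depth[:, :, None]                               # (H, W) -> (H, W, 1)
    depth = np.concatenate([depth, depth, depth], axis=2)   # (H, W, 3)
    scaled = depth.astype(np.float32) / 255.0               # 8-bit values -> [0, 1]
    chw = np.transpose(scaled, (2, 0, 1))                   # (3, H, W)
    return chw[None, ...]                                   # (1, 3, H, W), like unsqueeze(0)


# Synthetic 8-bit "depth map"; a real one would come from the depth estimator.
fake_depth = np.arange(12, dtype=np.uint8).reshape(3, 4) * 20
hint_array = depth_to_hint_array(fake_depth)
print(hint_array.shape)
```

The three channels are identical copies of the single depth channel, and the leading axis is the batch dimension the pipeline expects.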

| Now, we load the prior pipeline and the text-to-image controlnet pipeline. | ||

|

|

||

| ```python | ||

| from diffusers import KandinskyV22PriorPipeline, KandinskyV22ControlnetPipeline | ||

|

|

||

| pipe_prior = KandinskyV22PriorPipeline.from_pretrained( | ||

| "kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16 | ||

| ) | ||

| pipe_prior = pipe_prior.to("cuda") | ||

|

|

||

| pipe = KandinskyV22ControlnetPipeline.from_pretrained( | ||

| "kandinsky-community/kandinsky-2-2-controlnet-depth", torch_dtype=torch.float16 | ||

| ) | ||

| pipe = pipe.to("cuda") | ||

| ``` | ||

|

|

||

| We pass the prompt and negative prompt through the prior to generate image embeddings. | ||

|

|

||

| ```python | ||

| prompt = "A robot, 4k photo" | ||

|

|

||

| negative_prior_prompt = "lowres, text, error, cropped, worst quality, low quality, jpeg artifacts, ugly, duplicate, morbid, mutilated, out of frame, extra fingers, mutated hands, poorly drawn hands, poorly drawn face, mutation, deformed, blurry, dehydrated, bad anatomy, bad proportions, extra limbs, cloned face, disfigured, gross proportions, malformed limbs, missing arms, missing legs, extra arms, extra legs, fused fingers, too many fingers, long neck, username, watermark, signature" | ||

|

|

||

| generator = torch.Generator(device="cuda").manual_seed(43) | ||

| image_emb, zero_image_emb = pipe_prior( | ||

| prompt=prompt, negative_prompt=negative_prior_prompt, generator=generator | ||

| ).to_tuple() | ||

| ``` | ||

|

|

||

| Now we can pass the image embeddings and the depth image we extracted to the controlnet pipeline. With Kandinsky 2.2, only prior pipelines accept `prompt` input. You do not need to pass the prompt to the controlnet pipeline. | ||

|

|

||

| ```python | ||

| images = pipe( | ||

| image_embeds=image_emb, | ||

| negative_image_embeds=zero_image_emb, | ||

| hint=hint, | ||

| num_inference_steps=50, | ||

| generator=generator, | ||

| height=768, | ||

| width=768, | ||

| ).images | ||

|

|

||

| images[0].save("robot_cat.png") | ||

| ``` | ||

|

|

||

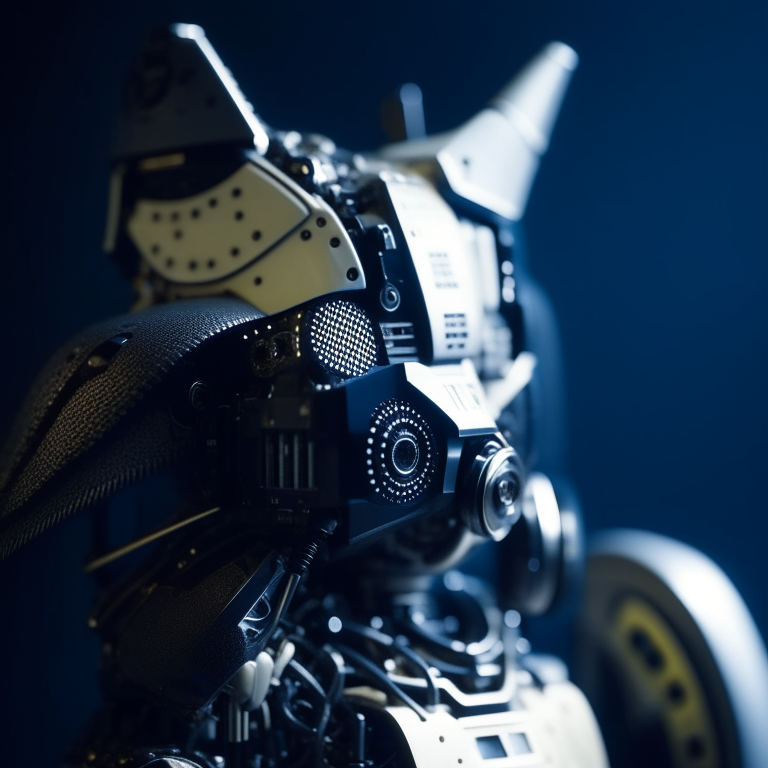

| The output image looks as follows: | ||

|  | ||
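The decoding pipelines take both `image_embeds` and `negative_image_embeds` because generation relies on classifier-free guidance: the denoiser produces a prediction for each, and the two predictions are blended. The sketch below shows the standard blending rule on toy arrays. The function name, the array values, and the `guidance_scale` of 4.0 are illustrative assumptions, not values taken from the pipeline source.

```python
import numpy as np


def apply_guidance(pred_negative, pred_positive, guidance_scale):
    """Standard classifier-free guidance blend of two noise predictions."""
    return pred_negative + guidance_scale * (pred_positive - pred_negative)


pred_negative = np.array([0.0, 1.0, 2.0])   # toy prediction for negative_image_embeds
pred_positive = np.array([1.0, 1.0, 0.0])   # toy prediction for image_embeds

guided = apply_guidance(pred_negative, pred_positive, guidance_scale=4.0)
print(guided)
```

With a scale of 1.0 the result equals the positive prediction; larger values push the result further away from the negative one.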

|

|

||

| ### Image-to-Image Generation with ControlNet Conditioning | ||


|

||

|

|

||

| Kandinsky 2.2 also includes a [`KandinskyV22ControlnetImg2ImgPipeline`] that will allow you to add control to the image generation process with both the image and its depth map. This pipeline works really well with [`KandinskyV22PriorEmb2EmbPipeline`], which generates image embeddings based on both a text prompt and an image. | ||

|

|

||

| For our robot cat example, we will pass the prompt and cat image together to the prior pipeline to generate an image embedding. We will then use that image embedding and the depth map of the cat to further control the image generation process. | ||

|

|

||

| We can use the same cat image and its depth map from the last example. | ||

|

|

||

| ```python | ||

| import torch | ||

| import numpy as np | ||

|

|

||

| from diffusers import KandinskyV22PriorEmb2EmbPipeline, KandinskyV22ControlnetImg2ImgPipeline | ||

| from diffusers.utils import load_image | ||

| from transformers import pipeline | ||


|

||

|

|

||

| img = load_image( | ||

| "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main/kandinskyv22/cat.png" | ||

| ).resize((768, 768)) | ||

|

|

||

|

|

||

| def make_hint(image, depth_estimator): | ||

| image = depth_estimator(image)["depth"] | ||

| image = np.array(image) | ||

| image = image[:, :, None] | ||

| image = np.concatenate([image, image, image], axis=2) | ||

| detected_map = torch.from_numpy(image).float() / 255.0 | ||

| hint = detected_map.permute(2, 0, 1) | ||

| return hint | ||

|

|

||

|

|

||

| depth_estimator = pipeline("depth-estimation") | ||

| hint = make_hint(img, depth_estimator).unsqueeze(0).half().to("cuda") | ||

|

|

||

| pipe_prior = KandinskyV22PriorEmb2EmbPipeline.from_pretrained( | ||

| "kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16 | ||

| ) | ||

| pipe_prior = pipe_prior.to("cuda") | ||

|

|

||

| pipe = KandinskyV22ControlnetImg2ImgPipeline.from_pretrained( | ||

| "kandinsky-community/kandinsky-2-2-controlnet-depth", torch_dtype=torch.float16 | ||

| ) | ||

| pipe = pipe.to("cuda") | ||

|

|

||

| prompt = "A robot, 4k photo" | ||

| negative_prior_prompt = "lowres, text, error, cropped, worst quality, low quality, jpeg artifacts, ugly, duplicate, morbid, mutilated, out of frame, extra fingers, mutated hands, poorly drawn hands, poorly drawn face, mutation, deformed, blurry, dehydrated, bad anatomy, bad proportions, extra limbs, cloned face, disfigured, gross proportions, malformed limbs, missing arms, missing legs, extra arms, extra legs, fused fingers, too many fingers, long neck, username, watermark, signature" | ||

|

|

||

| generator = torch.Generator(device="cuda").manual_seed(43) | ||

|

|

||

| # run prior pipeline | ||

|

|

||

| img_emb = pipe_prior(prompt=prompt, image=img, strength=0.85, generator=generator) | ||

| negative_emb = pipe_prior(prompt=negative_prior_prompt, image=img, strength=1, generator=generator) | ||

|

|

||

| # run controlnet img2img pipeline | ||

| images = pipe( | ||

| image=img, | ||

| strength=0.5, | ||

| image_embeds=img_emb.image_embeds, | ||

| negative_image_embeds=negative_emb.image_embeds, | ||

| hint=hint, | ||

| num_inference_steps=50, | ||

| generator=generator, | ||

| height=768, | ||

| width=768, | ||

| ).images | ||

|

|

||

| images[0].save("robot_cat.png") | ||

| ``` | ||

|

|

||

| Here is the output. Compared with the output from our text-to-image controlnet example, it keeps many more of the cat's facial details from the original image and works them into the robot style we asked for. | ||

|

|

||

|  | ||
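The `strength` argument controls how much of the denoising schedule is actually run on the input image. Below is a sketch of the convention commonly used by diffusers image-to-image pipelines. It is assumed, not verified here, that the Kandinsky 2.2 pipelines follow the same rule, and `steps_actually_run` is a hypothetical helper name.

```python
def steps_actually_run(num_inference_steps: int, strength: float) -> int:
    """Number of denoising steps executed for a given strength (common img2img rule)."""
    init_timestep = min(int(num_inference_steps * strength), num_inference_steps)
    t_start = max(num_inference_steps - init_timestep, 0)
    return num_inference_steps - t_start


for s in (0.5, 0.85, 1.0):
    print(s, steps_actually_run(50, s))
```

Under this rule, `strength=0.5` with 50 inference steps runs only the last 25 steps, which is why more of the original image survives than with `strength=1`.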

|

|

||

| ## Kandinsky 2.2 | ||

|

|

||

| The Kandinsky 2.2 release includes robust new text-to-image models that support text-to-image generation, image-to-image generation, image interpolation, and text-guided image inpainting. The general workflow to perform these tasks using Kandinsky 2.2 is the same as in Kandinsky 2.1. First, you will need to use a prior pipeline to generate image embeddings based on your text prompt, and then use one of the image decoding pipelines to generate the output image. The only difference is that in Kandinsky 2.2, all of the decoding pipelines no longer accept the `prompt` input, and the image generation process is conditioned with only `image_embeds` and `negative_image_embeds`. | ||

|

|

||

| Let's look at an example of how to perform text-to-image generation using Kandinsky 2.2. | ||

|

|

||

| First, let's create the prior pipeline and text-to-image pipeline with Kandinsky 2.2 checkpoints. | ||

|

|

||

| ```python | ||

| from diffusers import DiffusionPipeline | ||

| import torch | ||

|

|

||

| pipe_prior = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16) | ||

|

Sidenote: should we release the weights as safetensors, and with fp16 variants? cc @patrickvonplaten @sayakpaul |

||

| pipe_prior.to("cuda") | ||

|

|

||

| t2i_pipe = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16) | ||

| t2i_pipe.to("cuda") | ||

| ``` | ||

|

|

||

| You can then use `pipe_prior` to generate image embeddings. | ||

|

|

||

| ```python | ||

| prompt = "portrait of a woman, blue eyes, cinematic" | ||

| negative_prompt = "low quality, bad quality" | ||

|

|

||

| image_embeds, negative_image_embeds = pipe_prior(prompt, negative_prompt=negative_prompt, guidance_scale=1.0).to_tuple() | ||

| ``` | ||

|

|

||

| Now you can pass these embeddings to the text-to-image pipeline. When using Kandinsky 2.2 you don't need to pass the `prompt` (but you do with the previous version, Kandinsky 2.1). | ||

|

|

||

| ```python | ||

| image = t2i_pipe(image_embeds=image_embeds, negative_image_embeds=negative_image_embeds, height=768, width=768).images[ | ||

| 0 | ||

| ] | ||

| image.save("portrait.png") | ||

| ``` | ||

|  | ||

|

|

||

| We used the text-to-image pipeline as an example, but the same process applies to all decoding pipelines in Kandinsky 2.2. For more information, please refer to our API section for each pipeline. | ||
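Since every Kandinsky 2.2 decoding pipeline consumes the same pair of embeddings, the two stages can be wrapped in one small helper. The following is a sketch only: `generate_with_prior` is a hypothetical name, and the stub prior and decoder stand in for real pipelines so the data flow can be run without downloading weights.

```python
from types import SimpleNamespace


def generate_with_prior(prior, decoder, prompt, **decoder_kwargs):
    """Run a prior, then feed its two embeddings to any decoding pipeline."""
    image_embeds, negative_image_embeds = prior(prompt).to_tuple()
    return decoder(
        image_embeds=image_embeds,
        negative_image_embeds=negative_image_embeds,
        **decoder_kwargs,
    ).images


class StubPriorOutput:
    """Stand-in for a prior pipeline output exposing to_tuple()."""

    def __init__(self, image_embeds, negative_image_embeds):
        self.image_embeds = image_embeds
        self.negative_image_embeds = negative_image_embeds

    def to_tuple(self):
        return (self.image_embeds, self.negative_image_embeds)


def stub_prior(prompt):
    # A real prior returns tensors; strings keep the example dependency-free.
    return StubPriorOutput(f"emb({prompt})", "emb(<empty>)")


def stub_decoder(image_embeds, negative_image_embeds, **kwargs):
    # Echo the inputs so the caller can see what the decoder received.
    return SimpleNamespace(images=[(image_embeds, negative_image_embeds, kwargs)])


images = generate_with_prior(stub_prior, stub_decoder, "a cat", height=768, width=768)
print(images[0])
```

With real pipelines, `prior` would be a loaded prior pipeline and `decoder` any of the decoding pipelines. Extra inputs such as `hint` or `image` would be passed through `decoder_kwargs`.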

|

|

||

|

|

||

| ## Optimization | ||

|

|

||

| Running Kandinsky in inference requires running both a first prior pipeline: [`KandinskyPriorPipeline`] | ||

|

|

@@ -335,30 +530,84 @@ t2i_pipe.unet = torch.compile(t2i_pipe.unet, mode="reduce-overhead", fullgraph=T | |

| After compilation you should see a very fast inference time. For more information, | ||

| feel free to have a look at [Our PyTorch 2.0 benchmark](https://huggingface.co/docs/diffusers/main/en/optimization/torch2.0). | ||

|

|

||

| ## Available Pipelines: | ||

|

|

||

| | Pipeline | Tasks | | ||

| |---|---| | ||

| | [pipeline_kandinsky2_2.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky2_2/pipeline_kandinsky2_2.py) | *Text-to-Image Generation* | | ||

| | [pipeline_kandinsky.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky/pipeline_kandinsky.py) | *Text-to-Image Generation* | | ||

| | [pipeline_kandinsky2_2_inpaint.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky2_2/pipeline_kandinsky2_2_inpaint.py) | *Image-Guided Image Generation* | | ||

| | [pipeline_kandinsky_inpaint.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky/pipeline_kandinsky_inpaint.py) | *Image-Guided Image Generation* | | ||

| | [pipeline_kandinsky2_2_img2img.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky2_2/pipeline_kandinsky2_2_img2img.py) | *Image-Guided Image Generation* | | ||

| | [pipeline_kandinsky_img2img.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky/pipeline_kandinsky_img2img.py) | *Image-Guided Image Generation* | | ||

| | [pipeline_kandinsky2_2_controlnet.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky2_2/pipeline_kandinsky2_2_controlnet.py) | *Image-Guided Image Generation* | | ||

| | [pipeline_kandinsky2_2_controlnet_img2img.py](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/kandinsky2_2/pipeline_kandinsky2_2_controlnet_img2img.py) | *Image-Guided Image Generation* | | ||

|

|

||

|

|

||

| ### KandinskyV22Pipeline | ||


|

||

|

|

||

| [[autodoc]] KandinskyV22Pipeline | ||

| - all | ||

| - __call__ | ||

|

|

||

| ### KandinskyV22ControlnetPipeline | ||

|

|

||

| [[autodoc]] KandinskyV22ControlnetPipeline | ||

| - all | ||

| - __call__ | ||

|

|

||

| ### KandinskyV22ControlnetImg2ImgPipeline | ||

|

|

||

| [[autodoc]] KandinskyV22ControlnetImg2ImgPipeline | ||

| - all | ||

| - __call__ | ||

|

|

||

| ### KandinskyV22Img2ImgPipeline | ||

|

|

||

| [[autodoc]] KandinskyV22Img2ImgPipeline | ||

| - all | ||

| - __call__ | ||

|

|

||

| ### KandinskyV22InpaintPipeline | ||

|

|

||

| [[autodoc]] KandinskyV22InpaintPipeline | ||

| - all | ||

| - __call__ | ||

|

|

||

| ### KandinskyV22PriorPipeline | ||

|

|

||

| [[autodoc]] KandinskyV22PriorPipeline | ||

| - all | ||

| - __call__ | ||

| - interpolate | ||

|

|

||

| ### KandinskyV22PriorEmb2EmbPipeline | ||

|

|

||

| [[autodoc]] KandinskyV22PriorEmb2EmbPipeline | ||

| - all | ||

| - __call__ | ||

| - interpolate | ||

|

|

||

| ## KandinskyPriorPipeline | ||

| ### KandinskyPriorPipeline | ||

|

|

||

| [[autodoc]] KandinskyPriorPipeline | ||

| - all | ||

| - __call__ | ||

| - interpolate | ||

|

|

||

| ## KandinskyPipeline | ||

| ### KandinskyPipeline | ||

|

|

||

| [[autodoc]] KandinskyPipeline | ||

| - all | ||

| - __call__ | ||

|

|

||

| ## KandinskyImg2ImgPipeline | ||

| ### KandinskyImg2ImgPipeline | ||

|

|

||

| [[autodoc]] KandinskyImg2ImgPipeline | ||

| - all | ||

| - __call__ | ||

|

|

||

| ## KandinskyInpaintPipeline | ||

| ### KandinskyInpaintPipeline | ||

|

|

||

| [[autodoc]] KandinskyInpaintPipeline | ||

| - all | ||

|

|

||
