Been improving on this for H4, and I think there may be a slight bug. At each reward calculation, which input has a higher reward needs to be known to pass it into the model.

In TRL: https://github.com/lvwerra/trl/blob/a05ddbdd836d3217c80a4b3e679ba984bfd4fa24/examples/summarization/scripts/reward_summarization.py#L185

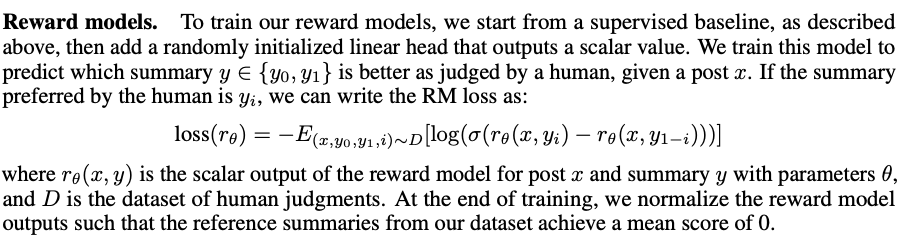

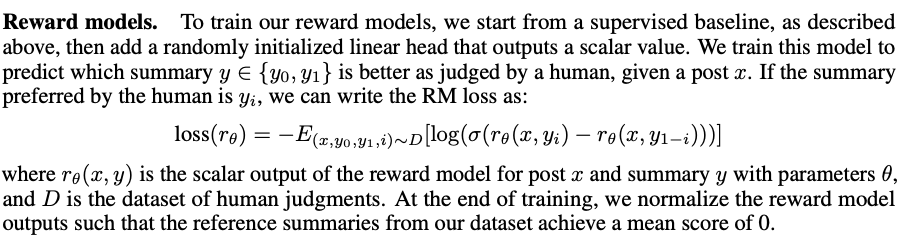

Here's the original paper, note how the indexing depends on which is selected. Or, maybe this is handled elsewhere in the script (I didn't see it).

Been improving on this for H4, and I think there may be a slight bug. At each reward calculation, which input has a higher reward needs to be known to pass it into the model.

In TRL: https://github.com/lvwerra/trl/blob/a05ddbdd836d3217c80a4b3e679ba984bfd4fa24/examples/summarization/scripts/reward_summarization.py#L185

Here's the original paper, note how the indexing depends on which is selected. Or, maybe this is handled elsewhere in the script (I didn't see it).