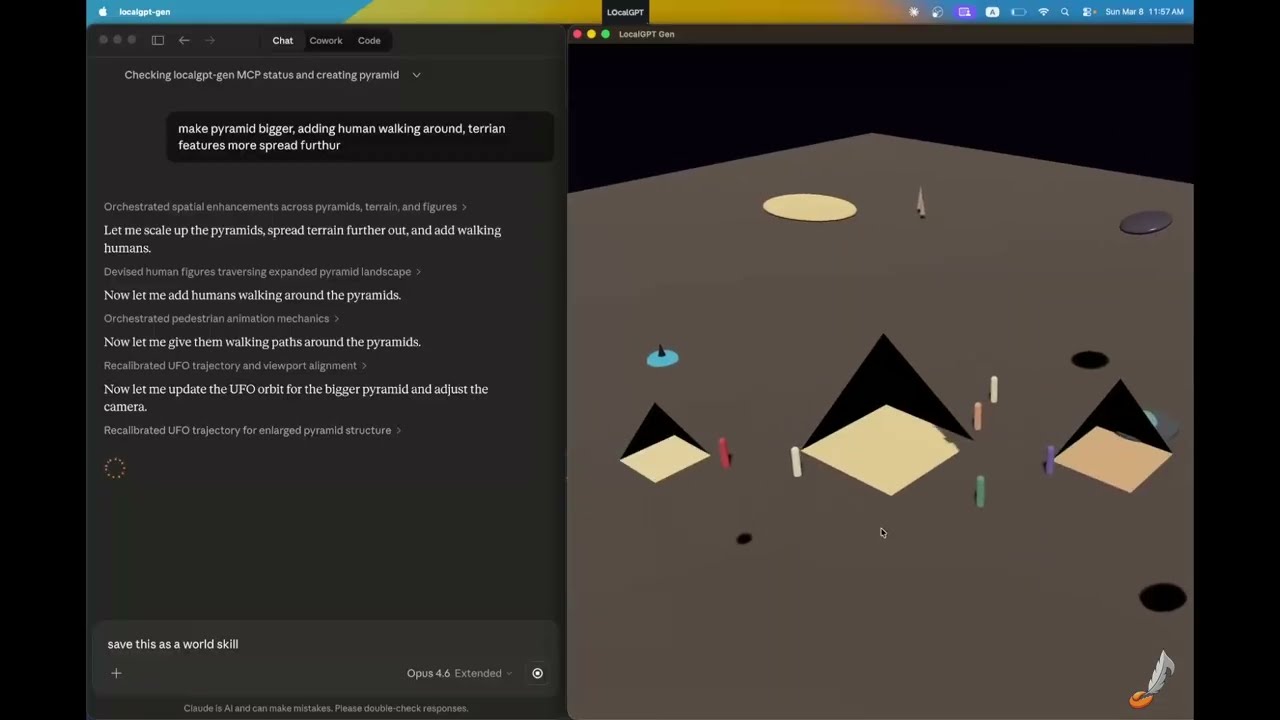

Build explorable 3D worlds with natural language — geometry, materials, lighting, audio, and behaviors. Open source, runs locally.

# World Building

cargo install localgpt-gen

# AI Assistant (chat, memory, daemon)

cargo install localgpt

localgpt-gen is a standalone binary for AI-driven 3D world creation with the Bevy game engine.

# Start interactive mode

localgpt-gen

# Start with an initial prompt

localgpt-gen "Create a desert scene with pyramids and a UFO hovering above"

# Load an existing scene

localgpt-gen --scene ./world.glb

# Verbose logging

localgpt-gen --verbose

- Parametric shapes — box, sphere, cylinder, capsule, plane, torus, pyramid, tetrahedron, icosahedron, wedge

- PBR materials — color, metalness, roughness, emissive, alpha, double-sided

- Lighting — point, spot, directional lights with color and intensity

- Behaviors — orbit, spin, bob, look_at, pulse, path_follow, bounce

- Audio — ambient sounds (wind, rain, forest, ocean, cave) and spatial emitters

- Export — glTF/GLB, HTML (browser-viewable), screenshots

- World skills — save/load complete worlds as reusable skills

Use Gen from any MCP-compatible client (Claude Desktop, Codex Desktop/CLI, Gemini CLI, etc.):

localgpt-gen --mcp

Add to your .mcp.json:

{

"mcpServers": {

"localgpt-gen": {

"command": "localgpt-gen",

"args": ["--mcp"]

}

}

}

Full docs: docs/gen.md | MCP Server
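If a .mcp.json already exists, the entry has to be merged in without dropping other servers. The following is a minimal Python sketch of that merge, not part of LocalGPT itself; the helper name `add_gen_server` is hypothetical, and the entry it writes is the one shown in the snippet above.

```python
import json
from pathlib import Path


def add_gen_server(config_path: Path) -> dict:
    """Merge the localgpt-gen entry into an MCP config file, keeping other servers."""
    # Start from the existing file if there is one, otherwise from an empty config.
    config = json.loads(config_path.read_text()) if config_path.exists() else {}
    servers = config.setdefault("mcpServers", {})
    # Same command and args as the .mcp.json snippet in this README.
    servers["localgpt-gen"] = {"command": "localgpt-gen", "args": ["--mcp"]}
    config_path.write_text(json.dumps(config, indent=2) + "\n")
    return config
```

Calling `add_gen_server(Path(".mcp.json"))` from a project root creates the file if it is missing and otherwise only adds or overwrites the `localgpt-gen` key.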

Built something cool? Share on Discord or YouTube!

localgpt is a local-first AI assistant with persistent memory, autonomous tasks, and multiple interfaces.

# Interactive chat

localgpt chat

# Single question

localgpt ask "What is the meaning of life?"

# Run as daemon with HTTP API and web UI

localgpt daemon start

- Single binary — no Node.js, Docker, or Python required

- Local device focused — runs entirely on your machine, your data stays yours

- Persistent memory — markdown-based knowledge store with full-text and semantic search

- Hybrid web search — native provider search passthrough plus client-side fallback

- Autonomous heartbeat — delegate tasks and let it work in the background

- Multiple interfaces — CLI, web UI, desktop GUI, Telegram bot

- Defense-in-depth security — signed policy files, kernel-enforced sandbox, prompt injection defenses

- Multiple LLM providers — Anthropic, OpenAI, xAI, Ollama, GLM, Vertex AI, CLI providers

LocalGPT uses XDG-compliant directories for config/data/state/cache. Run localgpt paths to see resolved paths.

Workspace memory layout:

<workspace>/

├── MEMORY.md # Long-term knowledge (auto-loaded each session)

├── HEARTBEAT.md # Autonomous task queue

├── SOUL.md # Personality and behavioral guidance

└── knowledge/ # Structured knowledge bank

Files are indexed with SQLite FTS5 for keyword search and sqlite-vec for semantic search with local embeddings.
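To give a feel for the keyword half of that index, here is a small Python sketch of an FTS5 table queried with `MATCH`. It is an illustration only: the `chunks` table, its columns, and the sample rows are hypothetical and do not reflect LocalGPT's actual schema, and the semantic (sqlite-vec) side is not shown. It assumes your Python's bundled SQLite was built with FTS5, which is the common case.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema: one row per indexed markdown chunk.
conn.execute("CREATE VIRTUAL TABLE chunks USING fts5(path, body)")
conn.executemany(
    "INSERT INTO chunks (path, body) VALUES (?, ?)",
    [
        ("MEMORY.md", "The user prefers Rust and works on a Bevy game."),
        ("HEARTBEAT.md", "Check the nightly build and summarize failures."),
        ("knowledge/bevy.md", "Bevy is a data-driven game engine written in Rust."),
    ],
)


def search(query: str, limit: int = 5) -> list[str]:
    """Return paths of matching chunks, ordered by FTS5's built-in rank."""
    rows = conn.execute(
        "SELECT path FROM chunks WHERE chunks MATCH ? ORDER BY rank LIMIT ?",
        (query, limit),
    ).fetchall()
    return [path for (path,) in rows]
```

With the sample rows, `search("nightly")` matches only the HEARTBEAT.md chunk, while `search("bevy")` matches both chunks that mention Bevy.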

Configuration is stored at <config_dir>/config.toml:

[agent]

default_model = "claude-cli/opus"

[providers.anthropic]

api_key = "${ANTHROPIC_API_KEY}"

[heartbeat]

enabled = true

interval = "30m"

[telegram]

enabled = true

api_token = "${TELEGRAM_BOT_TOKEN}"

Full config reference: docs/configuration.md
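The `${ANTHROPIC_API_KEY}` and `${TELEGRAM_BOT_TOKEN}` values in the config are environment variable placeholders. As a rough illustration of how such placeholders are typically resolved, here is a Python sketch; `expand_env` is a hypothetical helper, and raising on an unset variable is an assumption of this sketch, not documented LocalGPT behavior.

```python
import os
import re

# Matches ${NAME} where NAME is a conventional environment variable name.
_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def expand_env(text: str, env=None) -> str:
    """Replace each ${NAME} with its value; raise KeyError if NAME is unset."""
    env = os.environ if env is None else env

    def lookup(match: re.Match) -> str:
        name = match.group(1)
        if name not in env:
            raise KeyError(f"environment variable {name} is not set")
        return env[name]

    return _PLACEHOLDER.sub(lookup, text)
```

Keeping secrets in the environment rather than in config.toml means the file can be shared or committed without leaking keys.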

- Kernel-enforced sandbox — Landlock/seccomp on Linux, Seatbelt on macOS

- Signed policy files — HMAC-SHA256 signed LocalGPT.md with tamper detection

- Prompt injection defenses — marker stripping, pattern detection, content boundaries

- Audit chain — hash-chained security event log

Security docs: docs/sandbox.md | docs/localgpt.md
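Two of the mechanisms above, HMAC-SHA256 signing and a hash-chained log, are easy to illustrate in a few lines. The Python sketch below shows the general techniques only; the function names, the JSON encoding of events, and the all-zero starting hash are assumptions, and LocalGPT's real file formats and key handling are described in the security docs.

```python
import hashlib
import hmac
import json


def sign(policy: bytes, key: bytes) -> str:
    """Return the hex HMAC-SHA256 tag for a policy file's bytes."""
    return hmac.new(key, policy, hashlib.sha256).hexdigest()


def verify(policy: bytes, key: bytes, tag: str) -> bool:
    """Constant-time check that the policy still matches its tag."""
    return hmac.compare_digest(sign(policy, key), tag)


def append_event(log: list, event: dict) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev = log[-1]["hash"] if log else "0" * 64
    payload = json.dumps(event, sort_keys=True)
    digest = hashlib.sha256((prev + payload).encode()).hexdigest()
    log.append({"event": event, "prev": prev, "hash": digest})


def chain_is_intact(log: list) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev = "0" * 64
    for entry in log:
        payload = json.dumps(entry["event"], sort_keys=True)
        expected = hashlib.sha256((prev + payload).encode()).hexdigest()
        if entry["prev"] != prev or entry["hash"] != expected:
            return False
        prev = entry["hash"]
    return True
```

Changing a single byte of the policy makes `verify` return False, and editing any earlier log entry invalidates its own hash and every hash after it.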

| Endpoint | Description |
|---|---|
| GET / | Embedded web UI |
| POST /api/chat | Chat with assistant |
| POST /api/chat/stream | SSE streaming chat |
| GET /api/memory/search?q=<query> | Search memory |

Full API reference: docs/http-api.md
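As a starting point for scripting against the daemon, the Python sketch below builds requests for two of the endpoints listed above. The paths and methods come from the table; the JSON body shape (`{"message": ...}`) and the base URL in the comments are assumptions, so check docs/http-api.md for the real request format and address.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request


def chat_request(base_url: str, message: str) -> Request:
    """Build a POST /api/chat request. The {"message": ...} body is an assumption."""
    body = json.dumps({"message": message}).encode("utf-8")
    return Request(
        f"{base_url}/api/chat",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def memory_search_url(base_url: str, query: str) -> str:
    """Build the GET /api/memory/search URL with an escaped q parameter."""
    return f"{base_url}/api/memory/search?{urlencode({'q': query})}"


# Usage, with the daemon running (the address below is hypothetical):
#   from urllib.request import urlopen
#   with urlopen(chat_request("http://localhost:8080", "Hello")) as resp:
#       print(resp.read().decode("utf-8"))
```

Building the request separately from sending it keeps the sketch easy to inspect and adapt once the actual body schema is known.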

localgpt chat # Interactive chat

localgpt ask "question" # Single question

localgpt daemon start # Start daemon

localgpt memory search "query" # Search memory

localgpt config show # Show config

localgpt paths # Show resolved paths

Full CLI reference: docs/cli-commands.md

Built with Rust, Tokio, Axum, Bevy, SQLite (FTS5 + sqlite-vec), fastembed, eframe