TEP-0121: Refine Retries for TaskRuns and CustomRuns#816

TEP-0121: Refine Retries for TaskRuns and CustomRuns#816tekton-robot merged 1 commit intotektoncd:mainfrom XinruZhang:tep-0121

Conversation

|

/kind tep |

|

/assign |

|

/assign @pritidesai |

|

Pushed a new commit comparing related works. |

|

I'm moving this TEP to |

| We propose to support `retries` in TaskRuns, and solidify that `Timeout` is for each Retry attempt in CustomRun by updating [TEP-0069](https://github.com/tektoncd/community/blob/33ca1d5254a405b1d479f2350443f6c7979a0b72/teps/0069-support-retries-for-custom-task-in-a-pipeline.md) and the Developer guidance [docs/runs.md](https://github.com/tektoncd/pipeline/blob/0dd9f9719177054bdf60928ce9b4aab4b85af5bd/docs/runs.md). | ||

|

|

||

| ### Notes and Caveats | ||

| One caveat is that **it's hard for PipelineRun Controller to know whether retries is implemented correctly by customers**. If customers want to use a specific CustomRun, they need to check (the documentation or even the code) whether the functionality is supported properly. |

There was a problem hiding this comment.

With the proposed option, I think we should strongly consider removing retriesStatus from CustomRun status (while still keeping retries in the spec). this will allow customRun controllers to implement retries however they want and prevent the pipelinerun controller from making assumptions about how retries are implemented.

There was a problem hiding this comment.

Similarly, I think this discussion is also related to this comment thread because IMO we need the retriesStatus in pipelineController to decide whether the retries is exhausted, let's move our discussions there.

There was a problem hiding this comment.

sorry to continue this thread-- I think this is actually a separate point. My idea here was that the pipelinerun controller can determine whether a run is running, failed, or succeeded based on its status.conditions. If we want to hand retries responsibility entirely off to run controllers to implement however they would like, why require them to update status.retriesstatus in a certain way? The run controller can keep track of retries in status.extrafields.

There was a problem hiding this comment.

No problem at all. I'm wondering how does pipelinerun controller determine the run finishes: like what if it failed (the condition is marked as failed), and would be retried in the next reconciliation loop?

There was a problem hiding this comment.

I think we'd have to clarify our run controller guidance not to update the ConditionSucceeded until the run is finished running, including exhausting all retries (i.e. most runs would probably implement retries differently than how they're currently implemented on taskrun, but that's OK).

There was a problem hiding this comment.

If we want to hand retries responsibility entirely off to run controllers to implement however they would like, why require them to update status.retriesstatus in a certain way? The run controller can keep track of retries in status.extrafields.

status.retriesStatus is a natural field to store status of each execution. It is part of an implicit contract between tekton controller and custom controller. status.extrafields is a very generic and not ideal for storing such key information.

What is the motivation behind this request?

There was a problem hiding this comment.

I made this request because I think we want to minimize the implicit contract between the tekton controller and custom controller as much as possible. Part of the motivation for adding retries to custom tasks is to allow them to implement retries in whatever way makes the most sense, and we take away some of that flexibility if we require them to update retries status in a certain way.

There was a problem hiding this comment.

Thanks for the good discussion on this topic.

I definitely agree that we need to have a clear separation between the PipelineRun controller and the TaskRun / CustomRun controller, and what @lbernick highlighted in tektoncd/pipeline#5248 - i.e. that the TaskRun reconciler will use the length of its RetriesStatus to determine what to name the pod, is not great.

About RetriesStatus specifically, the fact that RetriesStatus exists in a TaskRun is not ideal, because that's not something that the TaskRun controller should be concerned with, in the current implementation.

Similarly, we should not need RetriesStatus in CustomRuns:

- if retries are implemented by the

PipelineRuncontroller, it should keep in thePipelineRunthis status info - if retries are implemented by the

CustomRuncontroller, it's not something that thePipelineRuncontroller needs to know about. If theCustomRuncontroller needs to store data in the status, it can do so, but it doesn't have to be a standard location as thePipelineRuncontroller will not use it.

My preferred overall setup would be:

- Focus on

PipelineRundriven retries. Advantages:- Users have a consistent interface for retries

- Custom task controller developers get a default implementation of retries for free (by embedding in a pipeline)

- "Pipelines in pipeline" can be retried the same as the other resources

- No changes to the

PipelineRunAPI (not in thespecat least) - No changes to the

TaskRunAPI (not in thespecat least)

- Remove

RetriesStatusfromTaskRunsandCustomRuns - Improve the retries of

TaskRunsby using separateTaskRunsfor each retry- This means we can see the logs of failed retries too

- Dashboard and CLI may need extra work for this

- Deprecate

spec.retriesinRun

There was a problem hiding this comment.

Thanks for the detailed comment.

For the advantages of the your poposed overall setup:

Users have a consistent interface for retries

- For RuntimeObject driven retries, the interface is also consistent -- we pass PipelineTask.retries to the

retriesfield in child runtime objects.

Custom task controller developers get a default implementation of retries for free (by embedding in a pipeline)

- This harms the flexibility of Custom Task -- what if a custom task controller wants to have different retry stragety/policy, instead of just recreating on failure. Most CI/CD systems have the configurable retry stragety. See the related work.

"Pipelines in pipeline" can be retried the same as the other resources

- This is an area that I'm not quite sure what needs to be done, and

- I'm not sure if this discussion should be part of this TEP.

No changes to the PipelineRun API (not in the spec at least)

- For RuntimeObject driven retries, no changes to the PipelineRun API neither.

No changes to the TaskRun API (not in the spec at least)

- For RuntimeObject driven retries, indeed, it adds an extra API field

retries, but this would be an addictive change rather than backward-incompatible change, wdyt?

I believe we should leave the retry implementation to the CustomRun controller, pipelinerun controller shouldn't touch it at all -- it shouldn't rely on the retriesStatus to decide whether the CustomRun is finished or not etc.

I totally agree that we can get rid of retriesStatus for both CustomRun and TaskRun. For TaskRun, instead of updating retriesStatus, we can create a new TaskRun in taskrun controller for each retry attempt.

Therefore, having TaskRun controller implements the retry can have most of the advantages you mentiond plus

- taskrun retry on its own

- we don't need to deprecate

retriesin Run

There was a problem hiding this comment.

Thanks for all of the great discussion in the WG meeting Andrea @afrittoli!

Yeah as you mentioned, it doesn't neccesarrily harm the flexibility of CustomTask. The more accurate way to state it is it harms the UX: say a CustomRun controller implemented its own retry strategy, for the users of the Custom Task, they would be confused about which retries field to use (PipelineTask.retries or PipelineTask.TaskSpec.SpecializedRetries):

apiVersion: tekton.dev/v1beta1

kind: PipelineRun

metadata:

generateName: pr-custom-task-

spec:

pipelineSpec:

tasks:

- name: wait

timeout: "1s"

retries: 1 // The common retries field in the PipelineTask

taskSpec:

specialized-retries: 5 // Specialized retries field in Custom Task Spec.

other-spec-fields: foobar

Besides, would you mind pointing out which field we need to update in PipelineRun API spec?

A piece of background information: the current implementation is different (timeout for per retry) from what documented (timeout for all retry attempts), but is aligned with TaskRun behavior. The current end condition when a Run fails: We propose to not rely on the @lbernick, @pritidesai , @abayer wdyt? cc @dibyom |

|

thanks Xinru for this summary! I think we should remove |

Thanks @lbernick! Right, updated the sumary to reflect the proposal. |

|

|

||

| ### Goals | ||

| 1. Align the behavior of `Retries` between TaskRun and CustomRun. | ||

| 2. Retry TaskRun independently. |

There was a problem hiding this comment.

What is the use case of being able to retry taskRun independently? What is the driving factor for creating just a taskRun outside of pipeline? I am trying to recollect and gather any such use case. I have hardly seen a requirement of creating just a taskRun. I can think that it could be beneficial for testing purpose, but if I am eventually making that task part of a pipeline, its easier to create a pipeline to begin with. Do we know if our catalog is using taskRun for their testing purpose?

There was a problem hiding this comment.

Thanks for the comment Priti!

We do have standalone TaskRuns use cases:

- https://github.com/tektoncd/catalog/tree/main/task/send-to-webhook-slack/0.1

- https://github.com/tektoncd/catalog/tree/main/task/sendmail/0.1

It sounds weird to me If we believe all custom runs need to support the retries field while we don't necessarily need to support retries for all Task Runs.

IMHO the reason why we didn't see a lot of use cases (i.e. feature request iessues) is creating feature requests is not an effortless action. People may not bother to create feature requests for stand-alone TaskRuns potentially because they can actually write scripts to retry them. By supporting retries natively, we can group their retry attempts all together, saving them times on writing scripts etc.

Transient errors are everywhere especially in the Cloud Environment, services can be down for a short period of time making the entire TaskRun fails. I truly believe retry is something necessary for TaskRuns to avoid flaky errors.

https://learn.microsoft.com/en-us/azure/architecture/best-practices/transient-faults#why-do-transient-faults-occur-in-the-cloud explained how common the transient errors are in the Cloud env. It deepened my understanding about the nature of Cloud Services and how to make things more stable. Hope it to be helpful.

There was a problem hiding this comment.

@XinruZhang @pritidesai we do have use cases where we run tasks by themselves in Tekton CI, typically to interact with GitHub, however, two things are worth noticing:

- if a standalone task required retries, that would be possible today by using a single-task pipeline

- we are in the process of replacing those with custom tasks because when the only thing done by a task is calling out to an API, running a Pod introduces overhead, and a custom task controller can handle the failure/retry logic much better anyways

IMHO the reason why we didn't see a lot of use cases (i.e. feature request iessues) is creating feature requests is not an effortless action. People may not bother to create feature requests for stand-alone TaskRuns potentially because they can actually write scripts to retry them. By supporting retries natively, we can group their retry attempts all together, saving them times on writing scripts etc.

I'm not sure I fully agree with this. Retrying a multi-step Task is not trivial.

Creating a feature request is not effortless, but I don't think the bar is too high if the feature solves an actual pain point.

There was a problem hiding this comment.

Thanks Andrea for the use cases. That's true, users can always write a sinle-task pipeline in order to retry a Task. Therefore making TaskRun retriable on its own should not be the reason to introduce the new retries field for TaskRun.

| | **Attempts amount** |supported|supported|supported|supported|supported|supported| | ||

| | **Timeout for each attempt** |supported|supported|supported|[supported](https://docs.gitlab.com/ee/ci/yaml/#retrywhen)|supported|supported| | ||

| | **Timeout for all attempts** |[supported](https://docs.github.com/en/actions/using-workflows/workflow-syntax-for-github-actions#jobsjob_idstepstimeout-minutes)|[supported](https://docs.github.com/en/actions/using-workflows/workflow-syntax-for-github-actions#jobsjob_idstepstimeout-minutes)|[?](https://stackoverflow.com/questions/71424429/argo-workflowtemplate-timeout)|-|-|-| | ||

|

|

There was a problem hiding this comment.

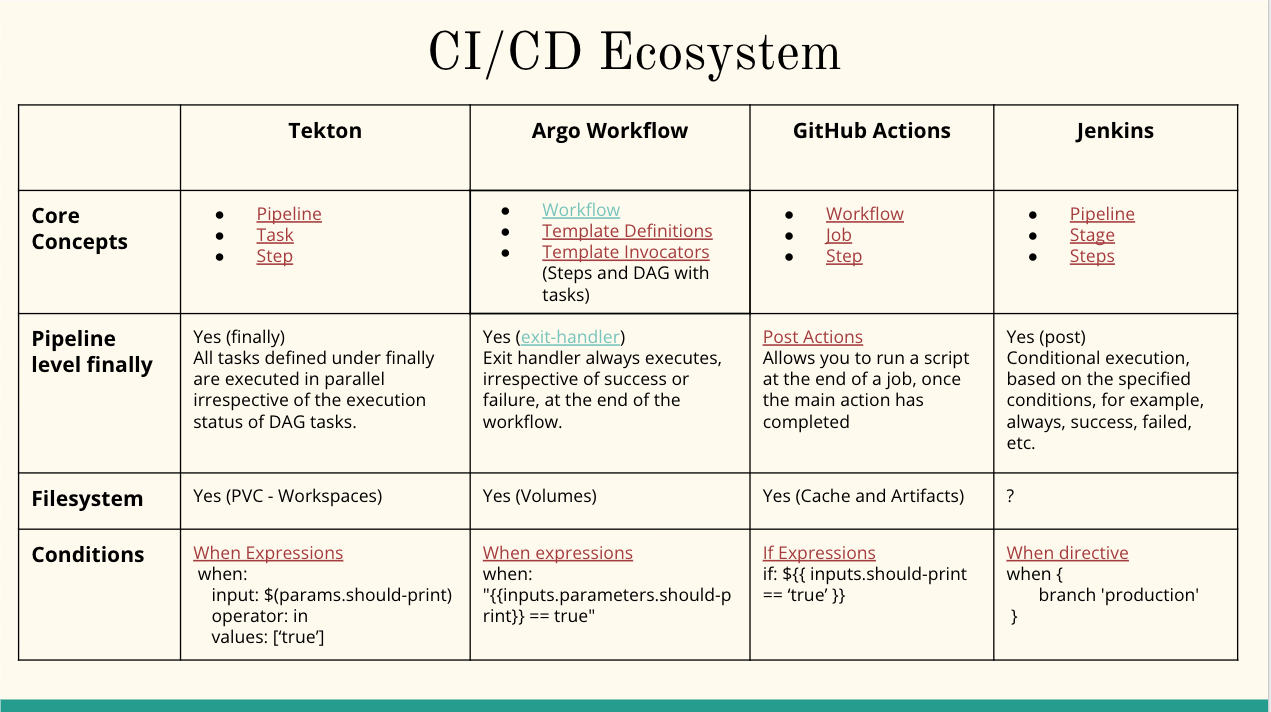

Thank you @XinruZhang for this detailed comparison with an industry standard.

Tekton pipelines do support retries when a task is part of a pipeline. The task can be a native Tekton task or a custom task.

This table does not represent apples to apples comparison. For example, Argo Workflow is not equivalent to Tekton task but its more or less similar to pipeline or even Tekton workflow. This proposal is focused on independent taskRun and customRun CRDs. We need to identify and document the list of resources in the industry which are equivalent to a task or taskRun in Tekton.

This table is misleading and may confuse users while comparing Tekton with the other CI/CD systems.

@jerop and I had documented this kind of comparison last year (which might be outdated at this point), but something for your reference:

There was a problem hiding this comment.

Thanks for the review @pritidesai and the comparison work you and Jerop did!

This related work section is written for comparing the general retry strategy (instead of demonstrating that retry is a neccesary functionality in TaskRun), especially about if we should set Timeout for all retry attempts or each retry attempt. I updated the Related Work section, hopefuly the confusion is alleviated.

Thanks for the concept comparison! It seems like there are no concepts equivalent to Task, becuase in Tekton, PipelineRun, TaskRun and CustomRun can all run on their own, while in other CI/CD systems, they only support the top level Pipeline/Workflow to run on its own. So it's hard for us to find an industry standard to follow to decide if we want to support retry in TaskRun.

|

Thanks @pritidesai @jerop @lbernick for your detailed review, appreciate it a lot! ❤️ |

| - 1.b: Update `retriesStatus` for each retry attempt for `TaskRun`, deprecate `retriesStatus` for `CustomRun` | ||

| - No implementation restrictions of `retriesStatus` for `CustomRun` | ||

| - Need to implement a strategy for clients to get the previous pod and read its logs. | ||

| - 1.c: Deprecate `retriesStatus` for both `TaskRun` and `CustomRun`, create a new `TaskRun` for each retry attempt. |

There was a problem hiding this comment.

For independent taskRun, the users might have to query metadata (labels and annotations) to get the list of taskRun CRDs for each retry attempt. Let's say there is a taskRun which was retried five times, each five taskRuns and pod will have a label saying it was created for a particular instance.

For the taskRun created for a pipelineTask, how do we retrieve the collection of taskRuns for each attempt? Using the same metadata?

taskRun with retriesStatus works as a master with a list of children as each attempt. It hides complexity of querying a list of taskRuns for a given pipelineTask and checking the status of each attempted taskRun.

There was a problem hiding this comment.

Summary our recent dicussion offline around option 1.c, the details are attached in Appendix if you are interested.

- TaskRun reconciler creates a new

TaskRunfor each retry attempt. - Creates a new field

RetryAttemptsinTaskRun.Statusto record the retry history. - Creates two new labels:

tekton.dev/retry-count: <retry number>, if the number is 0, the TaskRun is not initiated for a retry.tekton.dev/retry-parent: <parent taskrun name>, record the parent TaskRun of a retry attempt TaskRun.

We need to prototype this solution before moving this TEP to implementable.

Thanks for all of the inputs and suggestions @pritidesai !

In [TEP-0121][tep-0121], we are redesigining `Retries` in `TaskRuns` and `CustomRuns`. In this change, we propose excluding `retries` and `retriesStatus` in the initial release of `CustomRuns`. We will figure out a way forward in [TEP-0121][tep-0121], which could be reintroducing the fields implementing replacement fields. This allows us to unblock release of Custom Tasks Beta and Tekton Pipelines V1. /kind tep [tep-0121]: tektoncd#816

In [TEP-0121][tep-0121], we are redesigining `Retries` in `TaskRuns` and `CustomRuns`. In this change, we propose excluding `retries` and `retriesStatus` in the initial release of `CustomRuns`. We will figure out a way forward in [TEP-0121][tep-0121], which could be reintroducing the fields implementing replacement fields. This allows us to unblock release of Custom Tasks Beta and Tekton Pipelines V1. /kind tep [tep-0121]: tektoncd#816

In [TEP-0121][tep-0121], we are redesigining `Retries` in `TaskRuns` and `CustomRuns`. In this change, we propose excluding `retries` and `retriesStatus` in the initial release of `CustomRuns`. We will figure out a way forward in [TEP-0121][tep-0121], which could be reintroducing the fields implementing replacement fields. This allows us to unblock release of Custom Tasks Beta and Tekton Pipelines V1. /kind tep [tep-0121]: tektoncd#816

|

/hold Talking with @pritidesai offline about some important details of Option 1, related links:

Will update the TEP soon. |

In [TEP-0121][tep-0121], we are redesigining `Retries` in `TaskRuns` and `CustomRuns`. In this change, we propose excluding `retries` and `retriesStatus` in the initial release of `CustomRuns`. We will figure out a way forward in [TEP-0121][tep-0121], which could be reintroducing the fields implementing replacement fields. This allows us to unblock release of Custom Tasks Beta and Tekton Pipelines V1. /kind tep [tep-0121]: #816

|

Hello folks, I am just trying to catch up with all the updates and might have missed some. Do we ensure that a custom task treats that timeout is for each retry and how? |

|

Hi @ScrapCodes, thanks for the comment! Yes, that's a great question. I don't think we have a way to enforce that, this is more of a contract we build with our customers: i.e. if you don't implement the custom task controller in this way, the pipeline controller may not work as intended. |

|

We(me and Prashant) have reached agreement in an offline discussion on last Friday, see the meeting note. Thanks for the great discussion! @ScrapCodes |

Propose to refine Retries for TaskRun and CustomRun

|

thanks @XinruZhang for all your efforts and patience 🤗 /approve |

|

[APPROVALNOTIFIER] This PR is APPROVED This pull-request has been approved by: jerop, lbernick, pritidesai The full list of commands accepted by this bot can be found here. The pull request process is described here DetailsNeeds approval from an approver in each of these files:

Approvers can indicate their approval by writing |

|

LGTM since have all the approvals now /lgtm |

Retries is designed inconsistently between TaskRun and CustomRun, see the Motivation section for details. In this TEP, we propose to align the inconsistency by

TimeOutis for each retry attempt, updating TEP-069 and docs/runs.md to reflect the alignment.Retriesimplementation to TaskRun reconciler.