Memory leak with tf.py_function in eager mode using TF 2.0 #35010

Closed

Labels

- TF 2.7: Issues related to TF 2.7.0
- comp:eager: Eager related issues
- stale: This label marks the issue/pr stale - to be closed automatically if no activity
- stat:awaiting response: Status - Awaiting response from author
- type:performance: Performance Issue

Description

System information

- Have I written custom code (as opposed to using a stock example script provided in TensorFlow): Yes

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04): macOS 10.14.6

- TensorFlow installed from (source or binary): binary

- TensorFlow version (use command below): 2.0.0

- Python version: 3.6.3 64bit

- Bazel version (if compiling from source): N/A

- GCC/Compiler version (if compiling from source): N/A

- CUDA/cuDNN version: N/A

- GPU model and memory: N/A

Describe the current behavior

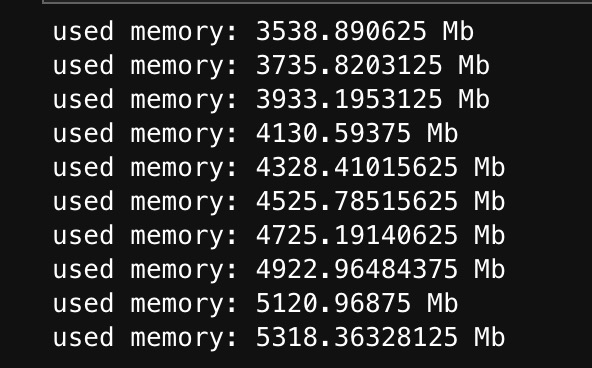

I wrote a custom Keras layer that calls tf.py_function inside the layer's call method. In eager mode, used memory grows linearly with the number of times the layer is called on inputs.

Describe the expected behavior

Memory usage should remain stable across calls.

Code to reproduce the issue

    import psutil
    import numpy as np
    import tensorflow as tf


    class TestLayer(tf.keras.layers.Layer):
        def __init__(
            self,
            output_dim,
            **kwargs
        ):
            super(TestLayer, self).__init__(**kwargs)
            self.output_dim = output_dim

        def build(self, input_shape):
            self.built = True

        def call(self, inputs):
            batch_embedding = tf.py_function(
                self.mock_output, inp=[inputs], Tout=tf.float64,
            )
            return batch_embedding

        def mock_output(self, inputs):
            shape = inputs.shape.as_list()
            batch_size = shape[0]
            return tf.constant(np.random.random((batch_size, self.output_dim)))


    test_layer = TestLayer(1000)

    for i in range(1000):
        test_layer.call(tf.constant(np.random.randint(0, 100, (256, 10))))
        if i % 100 == 0:
            used_mem = psutil.virtual_memory().used
            print('used memory: {} Mb'.format(used_mem / 1024 / 1024))

Other info

We encountered this issue while developing a custom embedding layer in ElasticDL and working on ElasticDL issue 1567.
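A note on the measurement in the reproduction script: psutil.virtual_memory().used reports system-wide memory, so other processes can affect the numbers. To cross-check that the growth belongs to this process, a per-process reading may be more convincing. The following is a minimal sketch using only the standard library; the helper names peak_rss_mb and sample_memory are hypothetical (they are not part of TensorFlow or psutil), and it assumes a Unix-like system where the resource module is available. It records peak resident memory, which can only rise, so a steady linear climb across samples would point to this process rather than to background activity.

```python
import gc
import resource
import sys


def peak_rss_mb():
    """Return the peak resident set size of this process in MiB (Unix only).

    Hypothetical helper for illustration, not part of any library.
    """
    peak = resource.getrusage(resource.RUSAGE_SELF).ru_maxrss
    # ru_maxrss is reported in bytes on macOS and in kilobytes on Linux.
    divisor = 1024 * 1024 if sys.platform == "darwin" else 1024
    return peak / divisor


def sample_memory(step, iterations=1000, every=100):
    """Call step() repeatedly and record (iteration, peak RSS in MiB).

    `step` is any zero-argument callable, for example one call of the layer.
    """
    samples = []
    for i in range(iterations):
        step()
        if i % every == 0:
            # Collect garbage first so unreachable Python objects
            # are less likely to be counted as a leak.
            gc.collect()
            samples.append((i, peak_rss_mb()))
    return samples
```

With the script above, this could be used as sample_memory(lambda: test_layer.call(tf.constant(np.random.randint(0, 100, (256, 10))))), printing the returned samples to see whether the per-process peak grows at the same rate as the system-wide figure.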
