Pull in master #4

Commits on Jan 7, 2021

-

[Don't review] Clean up type annotations in caffe2/torch/nn (#50079)

Summary: Pull Request resolved: #50079 Test Plan: Sandcastle tests Reviewed By: xush6528 Differential Revision: D25718694 fbshipit-source-id: f535fb879bcd4cb4ea715adfd90bbffa3fcc1150

Commit f83d57f

-

Clean up some type annotations in android (#49944)

Summary: Pull Request resolved: #49944 Upgrades type annotations from Python2 to Python3 Test Plan: Sandcastle tests Reviewed By: xush6528 Differential Revision: D25717539 fbshipit-source-id: c621e2712e87eaed08cda48eb0fb224f6b0570c9
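As a rough illustration of what upgrading annotations from Python 2 to Python 3 style usually involves (the functions below are hypothetical and not taken from the PR): Python 2 compatible code keeps types in `# type:` comments, while Python 3 code writes them inline, where they are also stored on the function object.

```python
from typing import List


# Python 2 compatible style: types live in a comment that only type checkers read.
def scale_py2(values, factor):
    # type: (List[float], float) -> List[float]
    return [v * factor for v in values]


# Python 3 style: the same types written as inline annotations.
def scale_py3(values: List[float], factor: float) -> List[float]:
    return [v * factor for v in values]


# Comment-based types are not recorded at runtime; inline annotations are.
print(scale_py2.__annotations__)
print(sorted(scale_py3.__annotations__))
```

Both functions behave identically at runtime; only the way the types are declared differs.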

Commit 09eefec

-

[Gradient Compression] Remove the extra comma after "bucket" in Power…

Commit ce37039

Commits on Jan 8, 2021

-

Commit 870ab04

-

Fix SyncBatchNorm usage without stats tracking (#50126)

Summary:

- In `batch_norm_gather_stats_with_counts_cuda`, use `input.scalar_type()` if `running_mean` is not defined
- In the `SyncBatchNorm` forward function, create the count tensor with `torch.float32` type if `running_mean` is None
- Fix a few typos

Pull Request resolved: #50126

Test Plan:

```
python -c "import torch;print(torch.batch_norm_gather_stats_with_counts( torch.randn(1, 3, 3, 3, device='cuda'), mean = torch.ones(2, 3, device='cuda'), invstd = torch.ones(2, 3, device='cuda'), running_mean = None, running_var = None , momentum = .1, eps = 1e-5, counts = torch.ones(2, device='cuda')))"
```

Fixes #49730

Reviewed By: ngimel Differential Revision: D25797930 Pulled By: malfet fbshipit-source-id: 22a91e3969b5e9bbb7969d9cc70b45013a42fe83

Commit bf4fcab

-

[PyTorch] Devirtualize TensorImpl::numel() with macro (#49766)

Summary: Pull Request resolved: #49766 Devirtualizing this seems like a decent performance improvement on internal benchmarks. The *reason* this is a performance improvement is twofold: 1) virtual calls are a bit slower than regular calls 2) virtual functions in `TensorImpl` can't be inlined Test Plan: internal benchmark Reviewed By: hlu1 Differential Revision: D25602321 fbshipit-source-id: d61556456ccfd7f10c6ebdc3a52263b438a2aef1

Commit 2e7c6cc

-

[PyTorch] validate that SparseTensorImpl::dim needn't be overridden (#49767)

Summary: Pull Request resolved: #49767 I'm told that the base implementation should work fine. Let's validate that in an intermediate diff before removing it. ghstack-source-id: 119528066 Test Plan: CI Reviewed By: ezyang, bhosmer Differential Revision: D25686830 fbshipit-source-id: f931394d3de6df7f6c5c68fe8ab711d90d3b12fd

Commit 1a1b665

-

[PyTorch] Devirtualize TensorImpl::dim() with macro (#49770)

Summary: Pull Request resolved: #49770 Seems like the performance cost of making this commonly-called method virtual isn't worth having use of undefined tensors crash a bit earlier (they'll still fail to dispatch). ghstack-source-id: 119528065 Test Plan: framework overhead benchmarks Reviewed By: ezyang Differential Revision: D25687465 fbshipit-source-id: 89aabce165a594be401979c04236114a6f527b59

Commit 4de6b27

-

Let RpcAgent::send() return JitFuture (#49906)

Summary: Pull Request resolved: #49906 This commit modifies RPC Message to inherit from `torch::CustomClassHolder`, and wraps a Message in an IValue in `RpcAgent::send()`. Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25719518 Pulled By: mrshenli fbshipit-source-id: 694e40021e49e396da1620a2f81226522341550b

Commit 84e3237

-

Replace FutureMessage with ivalue::Future in distributed/autograd/uti…

Commit 25ef605

-

Replace FutureMessage with ivalue::Future in RRefContext (#49960)

Summary: Pull Request resolved: #49960 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25730530 Pulled By: mrshenli fbshipit-source-id: 5d54572c653592d79c40aed616266c87307a1ad8

Commit 008206d

-

Replace FutureMessage with ivalue::Future in RpcAgent retry logic (#4…

Commit d730c7e

-

Completely remove FutureMessage from RRef Implementations (#50004)

Summary: Pull Request resolved: #50004 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25750602 Pulled By: mrshenli fbshipit-source-id: 06854a77f4fb5cc4c34a1ede843301157ebf7309

Commit 2d5f57c

-

Completely remove FutureMessage from RPC TorchScript implementations (#…

Commit b2da0b5

-

Completely remove FutureMessage from distributed autograd (#50020)

Summary: Pull Request resolved: #50020 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25752968 Pulled By: mrshenli fbshipit-source-id: 138d37e204b6f9a584633cfc79fd44c8c9c00f41

Commit 0c94393

-

Remove FutureMessage from sender ProcessGroupAgent (#50023)

Summary: Pull Request resolved: #50023 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25753217 Pulled By: mrshenli fbshipit-source-id: 5a98473c17535c8f92043abe143064e7fca4413b

Commit 1deb895

-

Remove FutureMessage from sender TensorPipeAgent (#50024)

Summary: Pull Request resolved: #50024 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25753386 Pulled By: mrshenli fbshipit-source-id: fdca051b805762a2c88f965ceb3edf1c25d40a56

Commit 0684d07

-

Completely remove FutureMessage from FaultyProcessGroupAgent (#50025)

Summary: Pull Request resolved: #50025 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25753587 Pulled By: mrshenli fbshipit-source-id: a5d4106a10d1b0d3e4c406751795f19af8afd120

Commit 2831af9

-

Remove FutureMessage from RPC request callback logic (#50026)

Summary: Pull Request resolved: #50026 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25753588 Pulled By: mrshenli fbshipit-source-id: a6fcda7830901dd812fbf0489b001e6bd9673780

Commit 1f795e1

-

Completely Remove FutureMessage from RPC cpp tests (#50027)

Summary: Pull Request resolved: #50027 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25753815 Pulled By: mrshenli fbshipit-source-id: 85b9b03fec52b4175288ac3a401285607744b451

Commit 0987510

-

Completely Remove FutureMessage from RPC agents (#50028)

Summary: Pull Request resolved: #50028 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25753887 Pulled By: mrshenli fbshipit-source-id: 40718349c2def262a16aaa24c167c0b540cddcb1

Commit 171648e

-

Completely remove FutureMessage type (#50029)

Summary: Pull Request resolved: #50029

Test Plan:

buck run mode/opt -c=python.package_style=inplace //caffe2/torch/fb/training_toolkit/examples:ctr_mbl_feed_april_2020 -- local-preset --flow-entitlement pytorch_ftw_gpu --secure-group oncall_pytorch_distributed

Before:

```
...
I0107 11:03:10.434000 3831111 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|total_examples 14000.0
I0107 11:03:10.434000 3831111 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|window_qps 74.60101318359375
I0107 11:03:10.434000 3831111 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|lifetime_qps 74.60101318359375
...
I0107 11:05:12.132000 3831111 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|total_examples 20000.0
I0107 11:05:12.132000 3831111 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|window_qps 64.0
I0107 11:05:12.132000 3831111 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|lifetime_qps 64.64917755126953
...
```

After:

```
...
I0107 11:53:03.858000 53693 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|total_examples 14000.0
I0107 11:53:03.858000 53693 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|window_qps 72.56404876708984
I0107 11:53:03.858000 53693 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|lifetime_qps 72.56404876708984
...
I0107 11:54:24.612000 53693 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|total_examples 20000.0
I0107 11:54:24.612000 53693 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|window_qps 73.07617950439453
I0107 11:54:24.612000 53693 print_publisher.py:23 master ] Publishing batch metrics: qps-qps|lifetime_qps 73.07617950439453
...
```

Reviewed By: lw Differential Revision: D25774915 Pulled By: mrshenli fbshipit-source-id: 1128c3c2df9d76e36beaf171557da86e82043eb9

Commit c480eeb

-

[PyTorch] Introduce packed SizesAndStrides abstraction (#47507)

Summary: Pull Request resolved: #47507 This introduces a new SizesAndStrides class as a helper for TensorImpl, in preparation for changing its representation. ghstack-source-id: 119313559 Test Plan: Added new automated tests as well. Run framework overhead benchmarks. Results seem to be neutral-ish. Reviewed By: ezyang Differential Revision: D24762557 fbshipit-source-id: 6cc0ede52d0a126549fb51eecef92af41c3e1a98

Commit 882ddb2

-

[PyTorch] Change representation of SizesAndStrides (#47508)

Summary: Pull Request resolved: #47508 This moves SizesAndStrides to a specialized representation that is 5 words smaller in the common case of tensor rank 5 or less. ghstack-source-id: 119313560 Test Plan: SizesAndStridesTest added in previous diff passes under ASAN + UBSAN. Run framework overhead benchmarks. Looks more or less neutral. Reviewed By: ezyang Differential Revision: D24772023 fbshipit-source-id: 0a75fd6c2daabb0769e2f803e80e2d6831871316

Commit b73c018

-

Disable cuDNN persistent RNN on sm_86 devices (#49534)

Summary: Excludes sm_86 GPU devices from using cuDNN persistent RNN. This is because there are some hard-to-detect edge cases that will throw exceptions with cudnn 8.0.5 on Nvidia A40 GPU. Pull Request resolved: #49534 Reviewed By: mruberry Differential Revision: D25632378 Pulled By: mrshenli fbshipit-source-id: cbe78236d85d4d0c2e4ca63a3fc2c4e2de662d9e

Commit 5a63c45

-

Address clang-tidy warnings in ProcessGroupNCCL (#50131)

Summary: Pull Request resolved: #50131 Noticed that in the internal diff for #49069 there was a clang-tidy warning to use emplace instead of push_back. This can save us a copy as it eliminates the unnecessary in-place construction ghstack-source-id: 119560979 Test Plan: CI Reviewed By: pritamdamania87 Differential Revision: D25800134 fbshipit-source-id: 243e57318f5d6e43de524d4e5409893febe6164c

Commit 294b786

-

Revert D25687465: [PyTorch] Devirtualize TensorImpl::dim() with macro

Test Plan: revert-hammer Differential Revision: D25687465 (4de6b27) Original commit changeset: 89aabce165a5 fbshipit-source-id: fa5def17209d1691e68b1245fa0873fd03e88eaa

Commit c215ffb

-

Autograd engine, only enqueue task when it is fully initialized (#50164)

Summary: This solves a race condition where the worker thread might see a partially initialized graph_task. Fixes #49652. I don't know how to reliably trigger the race, so I didn't add any test. But the ROCm build flakiness (it just happens to race more often on ROCm builds) should disappear after this PR. Pull Request resolved: #50164 Reviewed By: zou3519 Differential Revision: D25824954 Pulled By: albanD fbshipit-source-id: 6a3391753cb2afd2ab415d3fb2071a837cc565bb
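The general pattern behind this kind of fix can be sketched with plain Python threading. This is a generic illustration with hypothetical names (`GraphTask`, `roots`, `ready`), not the autograd engine's C++ code: the producer finishes every initialization step before the task becomes visible to the worker.

```python
import queue
import threading


class GraphTask:
    """Hypothetical stand-in for a task with several fields to set up."""

    def __init__(self):
        self.roots = None
        self.ready = False


def worker(tasks, results):
    task = tasks.get()
    # Safe only because the producer enqueues after initialization is done.
    results.append((task.ready, task.roots))


tasks = queue.Queue()
results = []
thread = threading.Thread(target=worker, args=(tasks, results))
thread.start()

task = GraphTask()
task.roots = ["loss"]  # finish every initialization step first ...
task.ready = True
tasks.put(task)        # ... and only then hand the task to the worker

thread.join()
print(results)
```

Had `tasks.put(task)` come before the two assignments, the worker could have read `ready` or `roots` in their initial state.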

Commit fc2ead0

-

Commit 9f832c8

-

Update autograd related comments (#50166)

Summary: Remove outdated comment and update to use new paths. Pull Request resolved: #50166 Reviewed By: zou3519 Differential Revision: D25824942 Pulled By: albanD fbshipit-source-id: 7dc694891409e80e1804eddcdcc50cc21b60f822

Commit 006cfeb

-

Implement torch.linalg.svd (#45562)

Summary: This is related to #42666. I am opening this PR to have the opportunity to discuss things.

First, we need to consider the differences between `torch.svd` and `numpy.linalg.svd`:

1. `torch.svd` takes `some=True`, while `numpy.linalg.svd` takes `full_matrices=True`, which is effectively the opposite (and with the opposite default, too!)
2. `torch.svd` returns `(U, S, V)`, while `numpy.linalg.svd` returns `(U, S, VT)` (i.e., V transposed).
3. `torch.svd` always returns a 3-tuple; `numpy.linalg.svd` returns only `S` in case `compute_uv==False`
4. `numpy.linalg.svd` also takes an optional `hermitian=False` argument.

I think that the plan is to eventually deprecate `torch.svd` in favor of `torch.linalg.svd`, so this PR does the following:

1. Rename/adapt the old `svd` C++ functions into `linalg_svd`: in particular, now `linalg_svd` takes `full_matrices` and returns `VT`
2. Re-implement the old C++ interface on top of the new (by negating `full_matrices` and transposing `VT`).
3. The C++ version of `linalg_svd` *always* returns a 3-tuple (we can't do anything else). So, there is a python wrapper which manually calls `torch._C._linalg.linalg_svd` to tweak the return value in case `compute_uv==False`.

Currently, `linalg_svd_backward` is broken because it has not been adapted yet after the `V ==> VT` change, but before continuing and spending more time on it I wanted to make sure that the general approach is fine.

Pull Request resolved: #45562 Reviewed By: H-Huang Differential Revision: D25803557 Pulled By: mruberry fbshipit-source-id: 4966f314a0ba2ee391bab5cda4563e16275ce91f
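The relationship between the two conventions can be sketched with NumPy alone. The helper `svd_torch_style` is hypothetical and only mirrors the mapping described in the commit message (negate the flag, transpose `VT`) for real-valued inputs; it is not the PR's implementation.

```python
import numpy as np


def svd_torch_style(a, some=True):
    """Emulate the (U, S, V) convention on top of numpy.linalg.svd.

    Hypothetical helper: `some` is the negation of `full_matrices`,
    and V is the transpose of the returned VT (real inputs only).
    """
    u, s, vt = np.linalg.svd(a, full_matrices=not some)
    return u, s, vt.T


a = np.arange(12, dtype=np.float64).reshape(4, 3)

# Reduced factors: U is 4x3, V is 3x3, and U * S @ V^T reconstructs `a`.
u, s, v = svd_torch_style(a, some=True)
print(u.shape, s.shape, v.shape)
print(np.allclose(a, (u * s) @ v.T))

# some=False corresponds to full_matrices=True: U becomes square.
u_full, _, _ = svd_torch_style(a, some=False)
print(u_full.shape)

# With compute_uv=False NumPy returns only the singular values.
s_only = np.linalg.svd(a, compute_uv=False)
print(np.allclose(s, s_only))
```

The last call shows why a Python-level wrapper is needed when the underlying function always returns a 3-tuple: the NumPy-compatible interface changes its return shape depending on `compute_uv`.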

Commit 5c5abd5

-

Add tensor.view(dtype) (#47951)

Summary: Fixes #42571

Note that this functionality is a subset of [`numpy.ndarray.view`](https://numpy.org/doc/stable/reference/generated/numpy.ndarray.view.html):

- this only supports viewing a tensor as a dtype with the same number of bytes
- this does not support viewing a tensor as a subclass of `torch.Tensor`

Pull Request resolved: #47951 Reviewed By: ngimel Differential Revision: D25062301 Pulled By: mruberry fbshipit-source-id: 9fefaaef77f15d5b863ccd12d836932983794475
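The "same number of bytes" restriction reflects that such a view reinterprets existing memory rather than converting values. A standard-library analogy (not PyTorch code; with PyTorch the corresponding call would presumably look like `t.view(torch.int32)` on a `float32` tensor) is:

```python
from array import array

floats = array("f", [1.0, -2.0])    # two 32-bit floats
raw = memoryview(floats).cast("B")  # the same memory seen as bytes
ints = raw.cast("i")                # the same memory seen as 32-bit ints

# The integers are the raw IEEE-754 bit patterns, not rounded values.
print(ints.tolist())
print(floats.itemsize == ints.itemsize)

# No copy is made: writing through the float array changes the int view too.
floats[0] = 0.0
print(ints[0])
```

Because both element types occupy four bytes, the element count is unchanged, which is the case the commit message says is supported.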

Commit d00aceb

-

Commit 54ce171

-

add type annotations to torch.nn.quantized.modules.conv (#49702)

Summary: closes gh-49700

No mypy issues were found in the first three entries deleted from `mypy.ini`:

```
[mypy-torch.nn.qat.modules.activations]
ignore_errors = True

[mypy-torch.nn.qat.modules.conv]
ignore_errors = True

[mypy-torch.nn.quantized.dynamic.modules.linear]
ignore_errors = True
```

Pull Request resolved: #49702 Reviewed By: walterddr, zou3519 Differential Revision: D25767119 Pulled By: ezyang fbshipit-source-id: cb83e53549a299538e1b154cf8b79e3280f7392a

Commit 55919a4

-

Stop using c10::scalar_to_tensor in float_power. (#50105)

Summary: Pull Request resolved: #50105 There should be no functional change here. A couple of reasons here: 1) This function is generally an anti-pattern (#49758) and it is good to minimize its usage in the code base. 2) pow itself has a fair amount of smarts like not broadcasting scalar/tensor combinations and we should defer to it. Test Plan: Imported from OSS Reviewed By: mruberry Differential Revision: D25786172 Pulled By: gchanan fbshipit-source-id: 89de03aa0b900ce011a62911224a5441f15e331a

Commit 88bd69b

-

Commit b5ab0a7

-

[onnx] Do not deref nullptr in scalar type analysis (#50237)

Summary: Apply a little bit of defensive programming: `type->cast<TensorType>()` returns an optional pointer so dereferencing it can lead to a hard crash. Fixes SIGSEGV reported in #49959 Pull Request resolved: #50237 Reviewed By: walterddr Differential Revision: D25839675 Pulled By: malfet fbshipit-source-id: 403d6df5e2392dd6adc308b1de48057f2f9d77ab

Commit 81778e2

-

Clean up some type annotations in test/jit (#50158)

Summary: Pull Request resolved: #50158 Upgrades type annotations from Python2 to Python3 Test Plan: Sandcastle tests Reviewed By: xush6528 Differential Revision: D25717504 fbshipit-source-id: 9a83c44db02ec79f353862255732873f6d7f885e

Commit a4f30d4

-

[numpy] torch.{all/any} : output dtype is always bool (#47878)

Summary: BC-breaking note: This PR changes the behavior of the any and all functions to always return a bool tensor. Previously these functions were only defined on bool and uint8 tensors, and when called on uint8 tensors they would also return a uint8 tensor. (When called on a bool tensor they would return a bool tensor.)

PR summary: #44790 (comment)

Fixes 2 and 3. Also fixes #48352.

Changes

* Output dtype is always `bool` (consistent with numpy) **BC Breaking (previously used to match the input dtype)**
* Uses vectorized version for all dtypes on CPU
* Enables test for complex
* Update doc for `torch.all` and `torch.any`

TODO

* [x] Update docs
* [x] Benchmark
* [x] Raise issue on XLA

Pull Request resolved: #47878 Reviewed By: albanD Differential Revision: D25714324 Pulled By: mruberry fbshipit-source-id: a87345f725297524242d69402dfe53060521ea5d
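The NumPy behavior that the commit message refers to can be checked directly. The sketch below uses only NumPy; per the note above, `torch.all` and `torch.any` on a `uint8` tensor are meant to return `torch.bool` in the same way after this change.

```python
import numpy as np

x = np.array([[0, 2], [3, 4]], dtype=np.uint8)

# Reductions over a uint8 array yield boolean results, not uint8.
all_cols = np.all(x, axis=0)
any_cols = np.any(x, axis=0)

print(all_cols, all_cols.dtype)
print(any_cols, any_cols.dtype)
```

Code that relied on the old `uint8` result, for example by summing it without a cast, is the kind of caller the BC-breaking note is aimed at.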

Commit 5d45140

-

Convert string => raw strings so char classes can be represented in Python regex (#50239)

Summary: Pull Request resolved: #50239 Convert regex strings that have character classes (e.g. \d, \s, \w, \b, etc) into raw strings so they won't be interpreted as escape characters.

References:

- Python RegEx - https://www.w3schools.com/python/python_regex.asp
- Python Escape Chars - https://www.w3schools.com/python/gloss_python_escape_characters.asp
- Python Raw String - https://www.journaldev.com/23598/python-raw-string
- Python RegEx Docs - https://docs.python.org/3/library/re.html
- Python String Tester - https://www.w3schools.com/python/trypython.asp?filename=demo_string_escape
- Python Regex Tester - https://regex101.com/

Test Plan: To find occurrences of regex strings with the above issue in VS Code, search using the regex `\bre\.[a-z]+\(['"]`, and under 'files to include', use /data/users/your_username/fbsource/fbcode/caffe2.

Reviewed By: r-barnes Differential Revision: D25813302 fbshipit-source-id: df9e23c0a84c49175eaef399ca6d091bfbeed936
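A small standard-library example shows why the raw-string prefix matters. It uses `\b`, which is a valid escape in ordinary string literals (backspace) and therefore changes the pattern silently; the sample text is made up for illustration.

```python
import re

text = "a cat sat"

# In a normal string literal "\b" is the backspace character (0x08),
# so this pattern looks for backspace + "cat" + backspace.
plain = re.search("\bcat\b", text)

# In a raw string the backslash reaches the regex engine, where \b
# means "word boundary".
raw = re.search(r"\bcat\b", text)

print(plain)
print(raw.group(0))
print(len("\b"), len(r"\b"))
```

Sequences such as `\d` that Python does not recognise as string escapes are passed through unchanged, but recent Python versions warn about them, which is another reason to prefer raw strings for patterns.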

Commit d78b638

-

Dump state when hitting ambiguous_autogradother_kernel. (#50246)

Summary: Pull Request resolved: #50246 Test Plan: Imported from OSS Reviewed By: bhosmer Differential Revision: D25843205 Pulled By: ailzhang fbshipit-source-id: 66916ae477a4ae97e1695227fc6af78c4f328ea3

Commit 0bb341d

-

Apply clang-format to rpc cpp files (#50236)

Summary: Pull Request resolved: #50236 Test Plan: Imported from OSS Reviewed By: lw Differential Revision: D25847892 Pulled By: mrshenli fbshipit-source-id: b4af1221acfcaba8903c629869943abbf877e04e

Commit f9f758e

-

Revert D25717504: Clean up some type annotations in test/jit

Test Plan: revert-hammer Differential Revision: D25717504 (a4f30d4) Original commit changeset: 9a83c44db02e fbshipit-source-id: e6e3a83bed22701d8125f5a293dfcd5093c1a2cd

Commit 1bb7d8f

-

Commit 8f31621

-

Unused exception variables (#50181)

Summary: These unused variables were identified by [pyflakes](https://pypi.org/project/pyflakes/). They can be safely removed to simplify the code. Pull Request resolved: #50181 Reviewed By: gchanan Differential Revision: D25844270 fbshipit-source-id: 0e648ffe8c6db6daf56788a13ba89806923cbb76
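A minimal before/after sketch of this kind of cleanup (the functions are hypothetical, not taken from the PR):

```python
def parse_port_before(value):
    try:
        return int(value)
    except ValueError as e:  # `e` is bound but never used; pyflakes flags it
        return None


def parse_port_after(value):
    try:
        return int(value)
    except ValueError:  # same behavior without the unused name
        return None


print(parse_port_before("8080"), parse_port_after("8080"))
print(parse_port_before("http"), parse_port_after("http"))
```

Dropping `as e` does not change which exceptions are caught; it only removes a name that nothing reads.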

Commit 2c4b6ec

-

Commit aa18d17

Commits on Jan 9, 2021

-

Optimize Vulkan command buffer submission rate. (#49112)

Summary: Pull Request resolved: #49112 Differential Revision: D25729889 Test Plan: Imported from OSS Reviewed By: SS-JIA Pulled By: AshkanAliabadi fbshipit-source-id: c4ab470fdcf3f83745971986f3a44a3dff69287f

Commit 1c12cbe

-

Support scripting classmethod called with object instances (#49967)

Summary: Currently, classmethods are compiled the same way as methods: the first argument is self. This PR adds a fake statement to assign the first argument to the class. This is kind of hacky, but that's all it takes. Pull Request resolved: #49967 Reviewed By: gchanan Differential Revision: D25841378 Pulled By: ppwwyyxx fbshipit-source-id: 0f3657b4c9d5d2181d658f9bade9bafc72de33d8
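The eager Python semantics that scripting has to reproduce can be shown without TorchScript. In the sketch below (the `Counter` class is made up for illustration), a classmethod receives the class as its first argument even when it is called through an instance:

```python
class Counter:
    step = 2

    @classmethod
    def make_steps(cls, n):
        # `cls` is the class even when the call goes through an instance.
        return [cls.step * i for i in range(n)], cls


c = Counter()
via_class, cls_a = Counter.make_steps(3)
via_instance, cls_b = c.make_steps(3)

print(via_class == via_instance)
print(cls_a is Counter and cls_b is Counter)
```

Compiling such a method as if its first argument were `self` would bind an instance where the body expects the class, which is the mismatch the commit message describes working around.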

Commit 49bb0a3

-

Change CMake config to enable universal binary for Mac (#50243)

Summary: This PR is a step towards enabling cross compilation from x86_64 to arm64. The following has been added:

1. When cross compilation is detected, compile a local universal fatfile to use as protoc.
2. For the simple compile check in MiscCheck.cmake, make sure to compile the small snippet as a universal binary in order to run the check.

**Test plan:** Kick off a minimal build on a mac intel machine with the macOS 11 SDK with this command:

```
CMAKE_OSX_ARCHITECTURES=arm64 USE_MKLDNN=OFF USE_QNNPACK=OFF USE_PYTORCH_QNNPACK=OFF BUILD_TEST=OFF USE_NNPACK=OFF python setup.py install
```

(If you run the above command before this change, or without macOS 11 SDK set up, it will fail.)

Then check the platform of the built binaries using this command:

```
lipo -info build/lib/libfmt.a
```

Output:

- Before this PR, running a regular build via `python setup.py install` (instead of using the flags listed above):

```
Non-fat file: build/lib/libfmt.a is architecture: x86_64
```

- Using this PR:

```
Non-fat file: build/lib/libfmt.a is architecture: arm64
```

Pull Request resolved: #50243 Reviewed By: malfet Differential Revision: D25849955 Pulled By: janeyx99 fbshipit-source-id: e9853709a7279916f66aa4c4e054dfecced3adb1

Commit c2d37cd

-

[fix] torch.cat: Don't resize out if it is already of the correct siz…

Commit 36ddb00

-

JIT: guard DifferentiableGraph node (#49433)

Summary: This adds guarding for DifferentiableGraph nodes in order to not depend on Also bailing out on required gradients for the CUDA fuser. Fixes #49299 I still need to look into a handful of failing tests, but maybe it can be a discussion basis. Pull Request resolved: #49433 Reviewed By: ngimel Differential Revision: D25681374 Pulled By: Krovatkin fbshipit-source-id: 8e7be53a335c845560436c0cceeb5e154c9cf296

Commit ea087e2

-

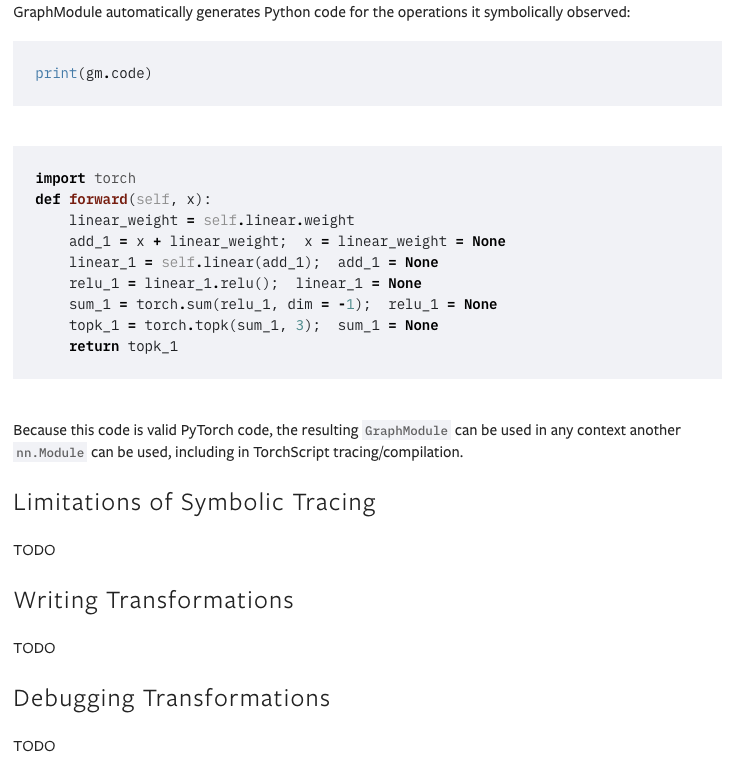

Document single op replacement (#50116)

Summary: Pull Request resolved: #50116 Test Plan: Imported from OSS Reviewed By: jamesr66a Differential Revision: D25803457 Pulled By: ansley fbshipit-source-id: de2f3c0bd037859117dde55ba677fb5da34ab639

Commit ba1ce71

-

reuse constant from jit (#49916)

Summary: Pull Request resolved: #49916

Test Plan:

1. Build pytorch locally. `MACOSX_DEPLOYMENT_TARGET=10.9 CC=clang CXX=clang++ USE_CUDA=0 DEBUG=1 MAX_JOBS=16 python setup.py develop`
2. Run `python save_lite.py`

```
import torch
# ~/Documents/pytorch/data/dog.jpg
model = torch.hub.load('pytorch/vision:v0.6.0', 'shufflenet_v2_x1_0', pretrained=True)
model.eval()

# sample execution (requires torchvision)
from PIL import Image
from torchvision import transforms
import pathlib
import tempfile
import torch.utils.mobile_optimizer

input_image = Image.open('~/Documents/pytorch/data/dog.jpg')
preprocess = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
])
input_tensor = preprocess(input_image)
input_batch = input_tensor.unsqueeze(0)  # create a mini-batch as expected by the model

# move the input and model to GPU for speed if available
if torch.cuda.is_available():
    input_batch = input_batch.to('cuda')
    model.to('cuda')

with torch.no_grad():
    output = model(input_batch)
# Tensor of shape 1000, with confidence scores over Imagenet's 1000 classes
print(output[0])
# The output has unnormalized scores. To get probabilities, you can run a softmax on it.
print(torch.nn.functional.softmax(output[0], dim=0))

traced = torch.jit.trace(model, input_batch)
sum(p.numel() * p.element_size() for p in traced.parameters())
tf = pathlib.Path('~/Documents/pytorch/data/data/example_debug_map_with_tensorkey.ptl')

torch.jit.save(traced, tf.name)
print(pathlib.Path(tf.name).stat().st_size)
traced._save_for_lite_interpreter(tf.name)
print(pathlib.Path(tf.name).stat().st_size)
print(tf.name)
```

3. Run `python test_lite.py`

```
import torch
from torch.jit.mobile import _load_for_lite_interpreter

# sample execution (requires torchvision)
from PIL import Image
from torchvision import transforms

input_image = Image.open('~/Documents/pytorch/data/dog.jpg')
preprocess = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
])
input_tensor = preprocess(input_image)
input_batch = input_tensor.unsqueeze(0)  # create a mini-batch as expected by the model

reload_lite_model = _load_for_lite_interpreter('~/Documents/pytorch/experiment/example_debug_map_with_tensorkey.ptl')

with torch.no_grad():
    output_lite = reload_lite_model(input_batch)
# Tensor of shape 1000, with confidence scores over Imagenet's 1000 classes
print(output_lite[0])
# The output has unnormalized scores. To get probabilities, you can run a softmax on it.
print(torch.nn.functional.softmax(output_lite[0], dim=0))
```

4. Compare the result with pytorch in master and pytorch built locally with this change, and see the same output.
5. The model size was 16.1 MB and becomes 12.9 with this change.

Imported from OSS

Reviewed By: kimishpatel, iseeyuan Differential Revision: D25731596 Pulled By: cccclai fbshipit-source-id: 9731ec1e0c1d5dc76cfa374d2ad3d5bb10990cf0

Commit SHA: d4c1684

[codemod][fbcode/caffe2] Apply clang-format update fixes

Test Plan: Sandcastle and visual inspection. Reviewed By: igorsugak Differential Revision: D25849205 fbshipit-source-id: ef664c1ad4b3ee92d5c020a5511b4ef9837a09a0

Commit SHA: 8530c65

Commits on Jan 10, 2021

Commit SHA: 375c30a

Summary: This PR adds `torch.linalg.inv` for NumPy compatibility. `linalg_inv_out` uses in-place operations on provided `result` tensor. I modified `apply_inverse` to accept tensor of Int instead of std::vector, that way we can write a function similar to `linalg_inv_out` but removing the error checks and device memory synchronization. I fixed `lda` (leading dimension parameter which is max(1, n)) in many places to handle 0x0 matrices correctly. Zero batch dimensions are also working and tested. Ref #42666 Pull Request resolved: #48261 Reviewed By: gchanan Differential Revision: D25849590 Pulled By: mruberry fbshipit-source-id: cfee6f1daf7daccbe4612ec68f94db328f327651
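The `lda` fix mentioned above can be pictured with a tiny helper. This is a minimal sketch in plain Python, assuming the usual LAPACK convention that a leading dimension must be at least 1 even when the matrix is empty; `leading_dimension` is a hypothetical name for illustration, not a function from the PR.

```python
def leading_dimension(n: int) -> int:
    """Leading dimension for an n x n column-major matrix (hypothetical helper)."""
    if n < 0:
        raise ValueError("matrix size must be non-negative")
    # LAPACK-style routines reject a leading dimension of 0,
    # so a 0x0 matrix still reports 1.
    return max(1, n)


for n in (0, 1, 3):
    print(n, leading_dimension(n))
```

Clamping at the call site keeps the empty-matrix case on the same code path as every other size.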

Commit SHA: 4774c68

Allow arbitrary docstrings to be inside torchscript interface methods (…

Commit SHA: 26cc630

Automated submodule update: tensorpipe (#50267)

Summary: This is an automated pull request to update the first-party submodule for [pytorch/tensorpipe](https://github.com/pytorch/tensorpipe). New submodule commit: pytorch/tensorpipe@03e0711 Pull Request resolved: #50267 Test Plan: Ensure that CI jobs succeed on GitHub before landing. Reviewed By: gchanan Differential Revision: D25848309 Pulled By: mrshenli fbshipit-source-id: c77adbad73c5b3b4b7d4e79953a797621dc11e5c

Commit SHA: 92fcb59

Commits on Jan 11, 2021


Use FileStore in TorchScript for store registry (#50248)

Summary: Pull Request resolved: #50248 make the FileStore path also use TorchScript when it's needed. Test Plan: wait for sandcastle. Reviewed By: zzzwen Differential Revision: D25842651 fbshipit-source-id: dec941e895a33ffde42c877afcaf64b5aecbe098

Commit SHA: fd92bcf

treat Parameter the same way as Tensor (#48963)

Summary: Pull Request resolved: #48963 This PR makes the binding code treat `Parameter` the same way as `Tensor`, unlike all other `Tensor` subclasses. This does change the semantics of `THPVariable_CheckExact`, but it isn't used much and it seemed to make sense for the half dozen or so places that it is used. Test Plan: Existing unit tests. Benchmarks are in #48966 Reviewed By: ezyang Differential Revision: D25590733 Pulled By: robieta fbshipit-source-id: 060ecaded27b26e4b756898eabb9a94966fc9840

Commit SHA: 839c2f2

clean up imports for tensor.py (#48964)

Summary: Pull Request resolved: #48964 Stop importing overrides within methods now that the circular dependency is gone, and also organize the imports while I'm at it because they're a jumbled mess. Test Plan: Existing unit tests. Benchmarks are in #48966 Reviewed By: ngimel Differential Revision: D25590730 Pulled By: robieta fbshipit-source-id: 4fa929ce8ff548500f3e55d0475f3f22c1fccc04

Commit SHA: 632a440

move has_torch_function to C++, and make a special case object_has_torch_function (#48965)

Summary: Pull Request resolved: #48965 This PR pulls `__torch_function__` checking entirely into C++, and adds a special `object_has_torch_function` method for ops which only have one arg, as this lets us skip tuple construction and unpacking. We can now also do away with the Python-side fast bailout for `Tensor` (e.g. `if any(type(t) is not Tensor for t in tensors) and has_torch_function(tensors)`) because it is actually slower than checking with the Python C API. Test Plan: Existing unit tests. Benchmarks are in #48966 Reviewed By: ezyang Differential Revision: D25590732 Pulled By: robieta fbshipit-source-id: 6bd74788f06cdd673f3a2db898143d18c577eb42
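The difference between the two checks can be sketched in plain Python. This is a simplified toy under stated assumptions: the real checks live in C++ and treat `Tensor` and `Parameter` specially, while the functions below only look for a `__torch_function__` attribute on the argument's type. `Plain` and `Overriding` are hypothetical classes.

```python
def has_torch_function(args):
    """Toy check: True if any argument's type defines __torch_function__."""
    return any(hasattr(type(a), "__torch_function__") for a in args)


def object_has_torch_function(obj):
    """Toy single-argument variant: no tuple is built or unpacked."""
    return hasattr(type(obj), "__torch_function__")


class Plain:
    """Hypothetical type without an override."""


class Overriding:
    """Hypothetical type that opts in to the override protocol."""

    @classmethod
    def __torch_function__(cls, func, types, args=(), kwargs=None):
        return NotImplemented


print(has_torch_function((Plain(), Overriding())))
print(object_has_torch_function(Plain()))
```

For a unary op the caller passes the object directly, which is the tuple-free path the commit message describes.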

Commit SHA: d31a760

Treat has_torch_function and object_has_torch_function as static False when scripting (#48966)

Summary: Pull Request resolved: #48966 This PR lets us skip the `if not torch.jit.is_scripting():` guards on `functional` and `nn.functional` by directly registering `has_torch_function` and `object_has_torch_function` to the JIT as statically False.

**Benchmarks**

The benchmark script is kind of long. The reason is that it's testing all four PRs in the stack, plus threading and subprocessing so that the benchmark can utilize multiple cores while still collecting good numbers. Both wall times and instruction counts were collected. This stack changes dozens of operators / functions, but very mechanically such that there are only a handful of codepath changes. Each row is a slightly different code path (e.g. testing in Python, testing in the arg parser, different input types, etc.)

<details> <summary> Test script </summary>

```
import argparse
import multiprocessing
import multiprocessing.dummy
import os
import pickle
import queue
import random
import sys
import subprocess
import tempfile
import time

import torch
from torch.utils.benchmark import Timer, Compare, Measurement


NUM_CORES = multiprocessing.cpu_count()
ENVS = {
    "ref": "HEAD (current)",
    "torch_fn_overhead_stack_0": "#48963",
    "torch_fn_overhead_stack_1": "#48964",
    "torch_fn_overhead_stack_2": "#48965",
    "torch_fn_overhead_stack_3": "#48966",
}

CALLGRIND_ENVS = tuple(ENVS.keys())


MIN_RUN_TIME = 3
REPLICATES = {
    "longer": 1_000,
    "long": 300,
    "short": 50,
}

CALLGRIND_NUMBER = {
    "overnight": 500_000,
    "long": 250_000,
    "short": 10_000,
}

CALLGRIND_TIMEOUT = {
    "overnight": 800,
    "long": 400,
    "short": 100,
}

SETUP = """
x = torch.ones((1, 1))
y = torch.ones((1, 1))
w_tensor = torch.ones((1, 1), requires_grad=True)
linear = torch.nn.Linear(1, 1, bias=False)
linear_w = linear.weight
"""

TASKS = {
    "C++: unary `.t()`": "w_tensor.t()",
    "C++: unary (Parameter) `.t()`": "linear_w.t()",
    "C++: binary (Parameter) `mul` ": "x + linear_w",
    "tensor.py: _wrap_type_error_to_not_implemented `__floordiv__`": "x // y",
    "tensor.py: method `__hash__`": "hash(x)",
    "Python scalar `__rsub__`": "1 - x",
    "functional.py: (unary) `unique`": "torch.functional.unique(x)",
    "functional.py: (args) `atleast_1d`": "torch.functional.atleast_1d((x, y))",
    "nn/functional.py: (unary) `relu`": "torch.nn.functional.relu(x)",
    "nn/functional.py: (args) `linear`": "torch.nn.functional.linear(x, w_tensor)",
    "nn/functional.py: (args) `linear (Parameter)`": "torch.nn.functional.linear(x, linear_w)",
    "Linear(..., bias=False)": "linear(x)",
}


def _worker_main(argv, fn):
    parser = argparse.ArgumentParser()
    parser.add_argument("--output_file", type=str)
    parser.add_argument("--single_task", type=int, default=None)
    parser.add_argument("--length", type=str)
    args = parser.parse_args(argv)
    single_task = args.single_task

    conda_prefix = os.getenv("CONDA_PREFIX")
    assert torch.__file__.startswith(conda_prefix)

    env = os.path.split(conda_prefix)[1]
    assert env in ENVS

    results = []
    for i, (k, stmt) in enumerate(TASKS.items()):
        if single_task is not None and single_task != i:
            continue

        timer = Timer(
            stmt=stmt,
            setup=SETUP,
            sub_label=k,
            description=ENVS[env],
        )
        results.append(fn(timer, args.length))

    with open(args.output_file, "wb") as f:
        pickle.dump(results, f)


def worker_main(argv):
    _worker_main(
        argv,
        lambda timer, _: timer.blocked_autorange(min_run_time=MIN_RUN_TIME)
    )


def callgrind_worker_main(argv):
    _worker_main(
        argv,
        lambda timer, length: timer.collect_callgrind(number=CALLGRIND_NUMBER[length], collect_baseline=False))


def main(argv):
    parser = argparse.ArgumentParser()
    parser.add_argument("--long", action="store_true")
    parser.add_argument("--longer", action="store_true")
    args = parser.parse_args(argv)

    if args.longer:
        length = "longer"
    elif args.long:
        length = "long"
    else:
        length = "short"

    replicates = REPLICATES[length]
    num_workers = int(NUM_CORES // 2)
    tasks = list(ENVS.keys()) * replicates
    random.shuffle(tasks)
    task_queue = queue.Queue()
    for _ in range(replicates):
        envs = list(ENVS.keys())
        random.shuffle(envs)
        for e in envs:
            task_queue.put((e, None))

    callgrind_task_queue = queue.Queue()
    for e in CALLGRIND_ENVS:
        for i, _ in enumerate(TASKS):
            callgrind_task_queue.put((e, i))

    results = []
    callgrind_results = []

    def map_fn(worker_id):
        # Adjacent cores often share cache and maxing out a machine can distort
        # timings so we space them out.
        callgrind_cores = f"{worker_id * 2}-{worker_id * 2 + 1}"
        time_cores = str(worker_id * 2)
        _, output_file = tempfile.mkstemp(suffix=".pkl")
        try:
            loop_tasks = (
                # Callgrind is long running, and then the workers can help with
                # timing after they finish collecting counts.
                (callgrind_task_queue, callgrind_results, "callgrind_worker", callgrind_cores, CALLGRIND_TIMEOUT[length]),
                (task_queue, results, "worker", time_cores, None))

            for queue_i, results_i, mode_i, cores, timeout in loop_tasks:
                while True:
                    try:
                        env, task_i = queue_i.get_nowait()
                    except queue.Empty:
                        break

                    remaining_attempts = 3
                    while True:
                        try:
                            subprocess.run(
                                " ".join([
                                    "source", "activate", env, "&&",
                                    "taskset", "--cpu-list", cores,
                                    "python", os.path.abspath(__file__),
                                    "--mode", mode_i,
                                    "--length", length,
                                    "--output_file", output_file
                                ] + ([] if task_i is None else ["--single_task", str(task_i)])),
                                shell=True,
                                check=True,
                                timeout=timeout,
                            )
                            break

                        except subprocess.TimeoutExpired:
                            # Sometimes Valgrind will hang if there are too many
                            # concurrent runs.
                            remaining_attempts -= 1
                            if not remaining_attempts:
                                print("Too many failed attempts.")
                                raise
                            print(f"Timeout after {timeout} sec. Retrying.")

                    # We don't need a lock, as the GIL is enough.
                    with open(output_file, "rb") as f:
                        results_i.extend(pickle.load(f))

        finally:
            os.remove(output_file)

    with multiprocessing.dummy.Pool(num_workers) as pool:
        st, st_estimate, eta, n_total = time.time(), None, "", len(tasks) * len(TASKS)
        map_job = pool.map_async(map_fn, range(num_workers))
        while not map_job.ready():
            n_complete = len(results)
            if n_complete and len(callgrind_results):
                if st_estimate is None:
                    st_estimate = time.time()
                else:
                    sec_per_element = (time.time() - st_estimate) / n_complete
                    n_remaining = n_total - n_complete
                    eta = f"ETA: {n_remaining * sec_per_element:.0f} sec"

            print(
                f"\r{n_complete} / {n_total} "
                f"({len(callgrind_results)} / {len(CALLGRIND_ENVS) * len(TASKS)}) "
                f"{eta}".ljust(40), end="")
            sys.stdout.flush()
            time.sleep(2)
    total_time = int(time.time() - st)
    print(f"\nTotal time: {int(total_time // 60)} min, {total_time % 60} sec")

    desc_to_ind = {k: i for i, k in enumerate(ENVS.values())}
    results.sort(key=lambda r: desc_to_ind[r.description])

    # TODO: Compare should be richer and more modular.
    compare = Compare(results)
    compare.trim_significant_figures()
    compare.colorize(rowwise=True)

    # Manually add master vs. overall relative delta t.
    merged_results = {
        (r.description, r.sub_label): r
        for r in Measurement.merge(results)
    }

    cmp_lines = str(compare).splitlines(False)
    print(cmp_lines[0][:-1] + "-" * 15 + "]")
    print(f"{cmp_lines[1]} |{'':>10}\u0394t")
    print(cmp_lines[2] + "-" * 15)
    for l, t in zip(cmp_lines[3:3 + len(TASKS)], TASKS.keys()):
        assert l.strip().startswith(t)
        t0 = merged_results[(ENVS["ref"], t)].median
        t1 = merged_results[(ENVS["torch_fn_overhead_stack_3"], t)].median
        print(f"{l} |{'':>5}{(t1 / t0 - 1) * 100:>6.1f}%")
    print("\n".join(cmp_lines[3 + len(TASKS):]))

    counts_dict = {
        (r.task_spec.description, r.task_spec.sub_label): r.counts(denoise=True)
        for r in callgrind_results
    }

    def rel_diff(x, x0):
        return f"{(x / x0 - 1) * 100:>6.1f}%"

    task_pad = max(len(t) for t in TASKS)
    print(f"\n\nInstruction % change (relative to `{CALLGRIND_ENVS[0]}`)")
    print(" " * (task_pad + 8) + (" " * 7).join([ENVS[env] for env in CALLGRIND_ENVS[1:]]))
    for t in TASKS:
        values = [counts_dict[(ENVS[env], t)] for env in CALLGRIND_ENVS]

        print(t.ljust(task_pad + 3) + " ".join([
            rel_diff(v, values[0]).rjust(len(ENVS[env]) + 5)
            for v, env in zip(values[1:], CALLGRIND_ENVS[1:])]))

        print("\033[4m" + " Instructions per invocation".ljust(task_pad + 3) + " ".join([
            f"{v // CALLGRIND_NUMBER[length]:.0f}".rjust(len(ENVS[env]) + 5)
            for v, env in zip(values[1:], CALLGRIND_ENVS[1:])]) + "\033[0m")
        print()

    import pdb
    pdb.set_trace()


if __name__ == "__main__":
    parser = argparse.ArgumentParser()
    parser.add_argument("--mode", type=str, choices=("main", "worker", "callgrind_worker"), default="main")
    args, remaining = parser.parse_known_args()

    if args.mode == "main":
        main(remaining)

    elif args.mode == "callgrind_worker":
        callgrind_worker_main(remaining)

    else:
        worker_main(remaining)
```

</details>

**Wall time**

<img width="1178" alt="Screen Shot 2020-12-12 at 12 28 13 PM" src="https://user-images.githubusercontent.com/13089297/101994419-284f6a00-3c77-11eb-8dc8-4f69a890302e.png">

<details> <summary> Longer run (`python test.py --long`) is basically identical. </summary> <img width="1184" alt="Screen Shot 2020-12-12 at 5 02 47 PM" src="https://user-images.githubusercontent.com/13089297/102000425-2350e180-3c9c-11eb-999e-a95b37e9ef54.png"> </details>

**Callgrind**

<img width="936" alt="Screen Shot 2020-12-12 at 12 28 54 PM" src="https://user-images.githubusercontent.com/13089297/101994421-2e454b00-3c77-11eb-9cd3-8cde550f536e.png">

Test Plan: existing unit tests. Reviewed By: ezyang Differential Revision: D25590731 Pulled By: robieta fbshipit-source-id: fe05305ff22b0e34ced44b60f2e9f07907a099dd

Commit SHA: 6a3fc0c

Use Unicode friendly API in fused kernel related code (#49781)

Commit SHA: 9d8bd21

svd_backward: more memory and computationally efficient. (#50109)

Summary: As per title. CC IvanYashchuk (unfortunately I cannot add you as a reviewer for some reason). Pull Request resolved: #50109 Reviewed By: gchanan Differential Revision: D25828536 Pulled By: albanD fbshipit-source-id: 3791c3dd4f5c2a2917eac62e6527ecd1edcb400d

Commit SHA: eb87686

Run mypy over test/test_utils.py (#50278)

Summary: _resubmission of gh-49654, which was reverted due to a cross-merge conflict_ This caught one incorrect annotation in `cpp_extension.load`. xref gh-16574. Pull Request resolved: #50278 Reviewed By: walterddr Differential Revision: D25865278 Pulled By: ezyang fbshipit-source-id: 25489191628af5cf9468136db36f5a0f72d9d54d

Commit SHA: e29082b

Vulkan convolution touchups. (#50329)

Summary: Pull Request resolved: #50329 Test Plan: Imported from OSS Reviewed By: SS-JIA Differential Revision: D25869147 Pulled By: AshkanAliabadi fbshipit-source-id: b8f393330b68912506fdaefaf62a455dc192e36c

Commit SHA: acaf091

Format RPC files with clang-format (#50367)

Summary: Pull Request resolved: #50367 This had already been done by mrshenli on Friday (#50236, D25847892 (f9f758e)) but over the weekend Facebook's internal clang-format version got updated and this changed the format, hence we need to re-apply it. Note that this update also affected the JIT files, which are the other module enrolled in clang-format (see 8530c65, D25849205 (8530c65)). ghstack-source-id: 119656866 Test Plan: Shouldn't include functional changes. In any case, there's CI. Reviewed By: mrshenli Differential Revision: D25867720 fbshipit-source-id: 3723abc6c35831d7a8ac31f74baf24c963c98b9d

Commit SHA: 186fe48

Move scalar_to_tensor_default_dtype out of ScalarOps.h because it's o…

Commit SHA: 0f412aa

[aten] embedding_bag_byte_rowwise_offsets_out (#49561)

Summary: Pull Request resolved: #49561 Out variant for embedding_bag_byte_rowwise_offsets

Test Plan:

```
MKL_NUM_THREADS=1 OMP_NUM_THREADS=1 numactl -m 0 -C 3 ./buck-out/opt/gen/caffe2/caffe2/fb/predictor/ptvsc2_predictor_bench --scripted_model=/data/users/ansha/tmp/adindexer/merge/traced_merge_dper_fixes.pt --pt_inputs=/data/users/ansha/tmp/adindexer/merge/container_precomputation_bs1.pt --iters=30000 --warmup_iters=10000 --num_threads=1 --pred_net=/data/users/ansha/tmp/adindexer/precomputation_merge_net.pb --c2_inputs=/data/users/ansha/tmp/adindexer/merge/c2_inputs_precomputation_bs1.pb --c2_sigrid_transforms_opt=1 --c2_use_memonger=1 --c2_apply_nomnigraph_passes --c2_weights=/data/users/ansha/tmp/adindexer/merge/c2_weights_precomputation.pb --pt_enable_static_runtime --pt_cleanup_activations=true --pt_enable_out_variant=true --compare_results --do_profile
```

Check embedding_bag_byte_rowwise_offsets_out is called in perf

Before: 0.081438
After: 0.0783725

Reviewed By: supriyar, hlu1 Differential Revision: D25620718 fbshipit-source-id: 83d5d0dd2e1f60c46e6727f73d5d8b52661b6767

Commit SHA: 6eb8e83

[quant][graphmode][fx] Scope support for call_method in QuantizationTracer (#50173)

Summary: Pull Request resolved: #50173 Previously we did not set the qconfig for call_method node correctly since it requires us to know the scope (module path of the module whose forward graph contains the node) of the node. This PR modifies the QuantizationTracer to record the scope information and build a map from call_method Node to module path, which will be used when we construct qconfig_map Test Plan: python test/test_quantization.py TestQuantizeFx.test_qconfig_for_call_method Imported from OSS Reviewed By: vkuzo Differential Revision: D25818132 fbshipit-source-id: ee9c5830f324d24d7cf67e5cd2bf1f6e0e46add8

Commit SHA: f10e7aa

[FX} Implement wrap() by patching module globals during symtrace (#50182)

Summary: Pull Request resolved: #50182 Test Plan: Imported from OSS Reviewed By: pbelevich Differential Revision: D25819730 Pulled By: jamesr66a fbshipit-source-id: 274f4799ad589887ecf3b94f5c24ecbe1bc14b1b

Commit SHA: a7e92f1

[FX] Make graph target printouts more user-friendly (#50296)

Summary: Pull Request resolved: #50296 Test Plan: Imported from OSS Reviewed By: pbelevich Differential Revision: D25855288 Pulled By: jamesr66a fbshipit-source-id: dd725980fc492526861c2ec234050fbdb814caa8

Commit SHA: d390e3d

[JIT] Ensure offset is a multiple of 4 to fix "Philox" RNG in jitted kernels (#50169)

Summary: Immediately-upstreamable part of #50148. This PR fixes what I'm fairly sure is a subtle bug with custom `Philox` class usage in jitted kernels. `Philox` [constructors in kernels](https://github.com/pytorch/pytorch/blob/68a6e4637903dba279c60daae5cff24e191ff9b4/torch/csrc/jit/codegen/cuda/codegen.cpp#L102) take the cuda rng generator's current offset. The Philox constructor then carries out [`offset/4`](https://github.com/pytorch/pytorch/blob/74c055b24065d0202aecdf4bc837d3698d1639e1/torch/csrc/jit/codegen/cuda/runtime/random_numbers.cu#L13) (a uint64_t division) to compute its internal offset in its virtual Philox bitstream of 128-bit chunks. In other words, it assumes the incoming offset is a multiple of 4. But (in current code) that's not guaranteed. For example, the increments used by [these eager kernels](https://github.com/pytorch/pytorch/blob/74c055b24065d0202aecdf4bc837d3698d1639e1/aten/src/ATen/native/cuda/Distributions.cu#L171-L216) could easily make offset not divisible by 4.

I figured the easiest fix was to round all incoming increments up to the nearest multiple of 4 in CUDAGeneratorImpl itself. Another option would be to round the current offset up to the next multiple of 4 at the jit point of use. But that would be a jit-specific offset jump, so jit rng kernels wouldn't have a prayer of being bitwise accurate with eager rng kernels that used non-multiple-of-4 offsets. Restricting the offset to multiples of 4 for everyone at least gives jit rng the chance to match eager rng. (Of course, there are still many other ways the numerics could diverge, like if a jit kernel launches a different number of threads than an eager kernel, or assigns threads to data elements differently.)

Pull Request resolved: #50169 Reviewed By: mruberry Differential Revision: D25857934 Pulled By: ngimel fbshipit-source-id: 43a75e2d0c8565651b0f12a5694c744fd86ece99
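The rounding described above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the code in `CUDAGeneratorImpl`: `round_up_to_multiple_of_4` and `ToyGenerator` are hypothetical names, and the generator state is reduced to a single integer offset.

```python
def round_up_to_multiple_of_4(increment: int) -> int:
    """Round a non-negative increment up to the nearest multiple of 4."""
    return ((increment + 3) // 4) * 4


class ToyGenerator:
    """Hypothetical stand-in for a generator that tracks a Philox offset."""

    def __init__(self) -> None:
        self.offset = 0

    def advance(self, increment: int) -> int:
        """Return the offset a kernel should start from, then bump the state."""
        old = self.offset
        self.offset += round_up_to_multiple_of_4(increment)
        return old


gen = ToyGenerator()
for inc in (1, 5, 4, 10):
    start = gen.advance(inc)
    # Every starting offset is divisible by 4, so `offset // 4` loses nothing.
    print(inc, start, start // 4)
```

Because every increment is rounded at the source, any consumer that divides the offset by 4 sees an exact quotient regardless of which kernel advanced the state before it.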

Commit SHA: 271240a

[quant][graphmode][fx] Support preserved_attributes in prepare_fx (#5…

Commit SHA: 55ac7e5

Implement optimization bisect (#49031)

Summary: Pull Request resolved: #49031 Test Plan: Imported from OSS Reviewed By: nikithamalgifb Differential Revision: D25691790 Pulled By: tugsbayasgalan fbshipit-source-id: a9c4ff1142f8a234a4ef5b1045fae842c82c18bf

Commit SHA: 559e2d8

Fix elu backward operation for negative alpha (#49272)

Summary: Fixes #47671 Pull Request resolved: #49272

Test Plan:

```
x = torch.tensor([-2, -1, 0, 1, 2], dtype=torch.float32, requires_grad=True)
y = torch.nn.functional.elu_(x.clone(), alpha=-2)
grads = torch.tensor(torch.ones_like(y))
y.backward(grads)
```

```
RuntimeError: In-place elu backward calculation is triggered with a negative slope which is not supported. This is caused by calling in-place forward function with a negative slope, please call out-of-place version instead.
```

Reviewed By: albanD Differential Revision: D25569839 Pulled By: H-Huang fbshipit-source-id: e3c6c0c2c810261566c10c0cc184fd81b280c650
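One plausible reading of why the in-place case is rejected: after an in-place forward only the output is available, and with a negative `alpha` the sign of the output no longer tells you which branch of ELU the input took. The sketch below uses the standard scalar ELU definition in plain Python; it is an illustration of that ambiguity, not the PyTorch kernel.

```python
import math


def elu(x: float, alpha: float) -> float:
    """Scalar ELU: x for x > 0, alpha * (exp(x) - 1) otherwise."""
    return x if x > 0 else alpha * (math.exp(x) - 1.0)


for alpha in (2.0, -2.0):
    for x in (-1.0, 1.0):
        # With alpha > 0 a negative input gives a negative output;
        # with alpha < 0 it gives a positive one, like a positive input does.
        print(f"alpha={alpha:+.1f} x={x:+.1f} elu={elu(x, alpha):+.4f}")
```

With `alpha=-2` both inputs produce positive outputs, so a backward pass that only sees the result cannot tell the two branches apart.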

Commit SHA: ec51b67

Update op replacement tutorial (#50377)

Summary: Pull Request resolved: #50377 Test Plan: Imported from OSS Reviewed By: jamesr66a Differential Revision: D25870409 Pulled By: ansley fbshipit-source-id: b873b89c2e62b57cd5d816f81361c8ff31be2948

Commit SHA: 3d263d1

Add docstring for Proxy (#50145)

Summary: Pull Request resolved: #50145 Test Plan: Imported from OSS Reviewed By: pbelevich Differential Revision: D25854281 Pulled By: ansley fbshipit-source-id: d7af6fd6747728ef04e86fbcdeb87cb0508e1fd8

Commit SHA: 080a097

[JIT] Print better error when class attribute IValue conversion fails (#50255)

Summary: Pull Request resolved: #50255

**Summary** TorchScript classes are copied attribute-by-attribute from a py::object into a `jit::Object` in `toIValue`, which is called when copying objects from Python into TorchScript. However, if an attribute of the class cannot be converted, the error thrown is a standard pybind error that is hard to act on. This commit adds code to `toIValue` to convert each attribute to an `IValue` inside a try-catch block, throwing a `cast_error` containing the name of the attribute and the target type if the conversion fails.

**Test Plan** This commit adds a unit test to `test_class_type.py` based on the code in the issue that commit fixes.

**Fixes** This commit fixes #46341.

Test Plan: Imported from OSS Reviewed By: pbelevich, tugsbayasgalan Differential Revision: D25854183 Pulled By: SplitInfinity fbshipit-source-id: 69d6e49cce9144af4236b8639d8010a20b7030c0
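The per-attribute try/catch pattern can be sketched in plain Python. This is an analogy under stated assumptions, not the C++ in `toIValue`: `AttributeConversionError`, `convert_attributes`, and the `Point` class are hypothetical, and Python constructors such as `int` stand in for the target types.

```python
class AttributeConversionError(TypeError):
    """Hypothetical error that names the attribute and target type."""


def convert_attributes(obj, expected_types):
    """Convert each named attribute, wrapping failures with context."""
    converted = {}
    for name, target in expected_types.items():
        value = getattr(obj, name)
        try:
            converted[name] = target(value)
        except (TypeError, ValueError) as exc:
            # Re-raise with the attribute name and target type so the
            # caller can see which field was the problem.
            raise AttributeConversionError(
                f"Could not cast attribute '{name}' to type "
                f"{target.__name__}: {exc}"
            ) from exc
    return converted


class Point:
    """Hypothetical class whose attributes are copied one by one."""

    def __init__(self, x, y):
        self.x = x
        self.y = y


print(convert_attributes(Point("3", 1.5), {"x": int, "y": float}))
try:
    convert_attributes(Point("oops", 1.5), {"x": int, "y": float})
except AttributeConversionError as err:
    print(err)
```

Wrapping each attribute separately costs one try block per field but turns a generic cast failure into a message that points at the offending attribute.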

Commit SHA: 4d3c12d

[JIT] Update clang-format hashes (#50399)

Summary: Pull Request resolved: #50399 **Summary** This commit updates the expected hashes of the `clang-format` binaries downloaded from S3. The binaries themselves were replaced because the copies inside fbcode had been updated. **Test Plan** Uploaded new binaries to S3, deleted `.clang-format-bin` and ran `clang_format_all.py`. Test Plan: Imported from OSS Reviewed By: seemethere Differential Revision: D25875184 Pulled By: SplitInfinity fbshipit-source-id: da483735de1b5f1dab7b070f91848ec5741f00b1

Commit SHA: a48640a

.circleci: Remove CUDA 9.2 binary build jobs (#50388)

Summary: Now that we support CUDA 11 we can remove support for CUDA 9.2 Signed-off-by: Eli Uriegas <eliuriegas@fb.com> Fixes #{issue number} Pull Request resolved: #50388 Reviewed By: zhangguanheng66 Differential Revision: D25872955 Pulled By: seemethere fbshipit-source-id: 1c10bcc8f4abbc1af1b3180b4cf4a9ea9c7104f9

Commit SHA: fd09270

Add link to tutorial in Timer doc (#50374)

Summary: Because I have a hard time finding this tutorial every time I need it. So I'm sure other people have the same issue :D Pull Request resolved: #50374 Reviewed By: zhangguanheng66 Differential Revision: D25872173 Pulled By: albanD fbshipit-source-id: f34f719606e58487baf03c73dcbd255017601a09

Commit SHA: 7efc212

Commit SHA: e160362

Raise warning during validation when arg_constraints not defined (#50302)

Summary: After we merged #48743, we noticed that some existing code that subclasses `torch.distributions.Distribution` started throwing `NotImplementedError` since the constraints required for validation checks were not implemented.

```sh
File "torch/distributions/distribution.py", line 40, in __init__
    for param, constraint in self.arg_constraints.items():
File "torch/distributions/distribution.py", line 92, in arg_constraints
    raise NotImplementedError
```

This PR throws a UserWarning for such cases instead and gives a better warning message. cc. Balandat

Pull Request resolved: #50302 Reviewed By: Balandat, xuzhao9 Differential Revision: D25857315 Pulled By: neerajprad fbshipit-source-id: 0ff9f81aad97a0a184735b1fe3a5d42025c8bcdf
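The change in behavior can be sketched with a toy base class in plain Python. This is a minimal sketch under stated assumptions, not the code in `torch/distributions/distribution.py`: `ToyDistribution`, `NoConstraints`, and `Positive` are hypothetical, and constraints are modeled as simple predicates.

```python
import warnings


class ToyDistribution:
    """Hypothetical base class that validates parameters in __init__."""

    def __init__(self, **params):
        self.params = params
        try:
            constraints = self.arg_constraints
        except NotImplementedError:
            # Warn and skip validation instead of failing construction.
            constraints = {}
            warnings.warn(
                f"{type(self).__name__} does not define `arg_constraints`; "
                "skipping parameter validation.",
                UserWarning,
            )
        for name, check in constraints.items():
            if not check(self.params[name]):
                raise ValueError(f"parameter '{name}' failed validation")

    @property
    def arg_constraints(self):
        raise NotImplementedError


class NoConstraints(ToyDistribution):
    """Subclass written before constraints were required."""


class Positive(ToyDistribution):
    """Subclass that declares its constraints as predicates."""

    arg_constraints = {"scale": lambda v: v > 0}


with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    NoConstraints(scale=1.0)
print([str(w.message) for w in caught])
Positive(scale=1.0)
```

Older subclasses keep constructing and get a nudge to declare constraints, while subclasses that do declare them are still validated.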

Commit SHA: d76176c

[fix] Indexing.cu: Move call to C10_CUDA_KERNEL_LAUNCH_CHECK to make it reachable (#49283)

Summary: Fixes Compiler Warning:

```
aten/src/ATen/native/cuda/Indexing.cu(233): warning: loop is not reachable
aten/src/ATen/native/cuda/Indexing.cu(233): warning: loop is not reachable
aten/src/ATen/native/cuda/Indexing.cu(233): warning: loop is not reachable
```

Pull Request resolved: #49283 Reviewed By: zhangguanheng66 Differential Revision: D25874613 Pulled By: ngimel fbshipit-source-id: 6e384e89533c1d80f241b7b98fda239c357d1a2c

Commit SHA: bb97503

Commits on Jan 12, 2021


Automated submodule update: tensorpipe (#50369)

Summary: This is an automated pull request to update the first-party submodule for [pytorch/tensorpipe](https://github.com/pytorch/tensorpipe). New submodule commit: pytorch/tensorpipe@bc5ac93 Pull Request resolved: #50369 Test Plan: Ensure that CI jobs succeed on GitHub before landing. Reviewed By: mrshenli Differential Revision: D25867976 Pulled By: lw fbshipit-source-id: 5274aa424e3215b200dcb2c02f342270241dd77d

Commit SHA: 9a3305f

[GPU] Calculate strides for metal tensors (#50309)

Summary: Pull Request resolved: #50309 Previously, in order to unblock the dogfooding, we did some hacks to calculate the strides for the output tensor. Now it's time to fix that. ghstack-source-id: 119673688 Test Plan: 1. Sandcastle CI 2. Person segmentation results Reviewed By: AshkanAliabadi Differential Revision: D25821766 fbshipit-source-id: 8c067f55a232b7f102a64b9035ef54c72ebab4d4

Commit SHA: ba83aea

Stop using an unnecessary scalar_to_tensor(..., device) call. (#50114)

Summary: Pull Request resolved: #50114 In this case, the function only dispatches on cpu anyway. Test Plan: Imported from OSS Reviewed By: mruberry Differential Revision: D25790155 Pulled By: gchanan fbshipit-source-id: 799dc9a3a38328a531ced9e85ad2b4655533e86a

Commit SHA: b001c4c

Ensure DDP + Pipe works with find_unused_parameters. (#49908)

Summary: Pull Request resolved: #49908 As described in #49891, DDP + Pipe doesn't work with find_unused_parameters. This PR adds a simple fix to enable this functionality. This only currently works for Pipe within a single host and needs to be re-worked once we support cross host Pipe. ghstack-source-id: 119573413 Test Plan: 1) unit tests added. 2) waitforbuildbot Reviewed By: rohan-varma Differential Revision: D25719922 fbshipit-source-id: 948bcc758d96f6b3c591182f1ec631830db1b15c

Commit SHA: f39f258

Commit SHA: 5f8e1a1

[GPU] Fix the broken strides value for 2d transpose (#50310)

Summary: Pull Request resolved: #50310 Swapping the stride values is OK if the output tensor's storage stays non-contiguous. However, when we copy the result back to CPU, we expect to see a contiguous tensor.

```
>>> x = torch.rand(2,3)
>>> x.stride()
(3, 1)
>>> y = x.t()
>>> y.stride()
(1, 3)
>>> z = y.contiguous()
>>> z.stride()
(2, 1)
```

ghstack-source-id: 119692581 Test Plan: Sandcastle CI Reviewed By: AshkanAliabadi Differential Revision: D25823665 fbshipit-source-id: 61667c03d1d4dd8692b76444676cc393f808cec8
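The stride values in the session above can be reproduced without a tensor library. This is a minimal sketch in plain Python, assuming row-major layout and ignoring zero-sized dimensions; `contiguous_strides` and `transposed_view_strides` are hypothetical helper names, not functions from the Metal backend.

```python
def contiguous_strides(shape):
    """Row-major strides (in elements) for a contiguous tensor of this shape."""
    strides = []
    running = 1
    for dim in reversed(shape):
        strides.append(running)
        running *= dim
    return tuple(reversed(strides))


def transposed_view_strides(strides):
    """Strides of a 2-D transpose that is a view: just swap the two entries."""
    return (strides[1], strides[0])


x_strides = contiguous_strides((2, 3))
y_strides = transposed_view_strides(x_strides)
z_strides = contiguous_strides((3, 2))
print(x_strides, y_strides, z_strides)
```

Swapping gives the view's strides, while a result copied back as contiguous needs strides recomputed from the transposed shape, which is the distinction the commit draws.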

Commit a72c6fd

[GPU] Clean up the operator tests (#50311)

Summary: Pull Request resolved: #50311 Code clean up ghstack-source-id: 119693032 Test Plan: Sandcastle Reviewed By: husthyc Differential Revision: D25823635 fbshipit-source-id: 5205ebd8a5331c0d1825face034cca10e8b3b535

Commit 2193544

Pytorch Distributed RPC Reinforcement Learning Benchmark (Throughput and Latency) (#46901)

Summary: A Pytorch Distributed RPC benchmark measuring Agent and Observer Throughput and Latency for Reinforcement Learning Pull Request resolved: #46901 Reviewed By: mrshenli Differential Revision: D25869514 Pulled By: osandoval-fb fbshipit-source-id: c3b36b21541d227aafd506eaa8f4e5f10da77c78

Commit 09f4844

Minor Fix: Double ";" typo in transformerlayer.h (#50300)

Summary: Fix double ";" typo in transformerlayer.h Pull Request resolved: #50300 Reviewed By: zhangguanheng66 Differential Revision: D25857236 Pulled By: glaringlee fbshipit-source-id: b9b21cfb3ddbff493f6d1c616abe21c5cfb9bce0

Commit 72c1d9d

Fix warning when running scripts/build_ios.sh (#49457)

Summary:
* Fixes `cmake implicitly converting 'string' to 'STRING' type`
* Fixes `clang: warning: argument unused during compilation: '-mfpu=neon-fp16' [-Wunused-command-line-argument]`

Pull Request resolved: #49457 Reviewed By: zhangguanheng66 Differential Revision: D25871014 Pulled By: malfet fbshipit-source-id: fa0c181ae7a1b8668e47f5ac6abd27a1c735ffce

Commit bee6b0b

[MacOS] Add unit tests for Metal ops (#50312)

Summary: Pull Request resolved: #50312 Integrate the operator tests to the MacOS playground app, so that we can run them on Sandcastle ghstack-source-id: 119693035 Test Plan: - `buck test pp-macos` - Sandcastle tests Reviewed By: AshkanAliabadi Differential Revision: D25778981 fbshipit-source-id: 8b5770dfddba0ca19f662894757b2dff66df87e6

Commit 4fed585

[PyTorch] List::operator[] can return const ref for Tensor & string (#50083)

Summary: Pull Request resolved: #50083 This should supersede D21966183 (a371652) (#39763) and D22830381 (b44a10c) as the way to get fast access to the contents of a `torch::List`. ghstack-source-id: 119675495 Reviewed By: smessmer Differential Revision: D25776232 fbshipit-source-id: 81b4d649105ac9e08fc2c6563806f883809872f4

Commit c3b4b20

Fix PyTorch NEON compilation with gcc-7 (#50389)

Summary: Apply sebpop's patch to correctly inform the optimizing compiler about the side effect of missing NEON restrictions. Allow vec256_float_neon to be used even if compiled by gcc-7. Fixes #47098 Pull Request resolved: #50389 Reviewed By: walterddr Differential Revision: D25872875 Pulled By: malfet fbshipit-source-id: 1fc5dfe68fbdbbb9bfa79ce4be2666257877e85f

Commit 8c5b024

warn user once for possible unnecessary find_unused_params (#50133)

Summary: Pull Request resolved: #50133 `find_unused_parameters=True` is only needed when the model has unused parameters that are not known at model definition time or that differ due to control flow. Unfortunately, many DDP users pass this flag in as `True` even when they do not need it, sometimes as a precaution to mitigate possible errors that may be raised (such as the error we raise when not all outputs are used). While this is a larger issue to be fixed in DDP, it would also be useful to warn once if we did not detect unused parameters. The downside is that for models with control flow where the first iteration has no unused params but later iterations do, this would be a false warning. However, I think the warning's value exceeds this downside. ghstack-source-id: 119707101 Test Plan: CI Reviewed By: pritamdamania87 Differential Revision: D25411118 fbshipit-source-id: 9f4a18ad8f45e364eae79b575cb1a9eaea45a86c
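
To make "unused parameters due to control flow" concrete, here is a minimal single-process sketch. It does not use DDP and is not how DDP detects unused parameters internally; the `Branchy` module and the `unused_parameter_names` helper are hypothetical names introduced only for illustration. It relies on the fact that a parameter that did not take part in the forward pass has no gradient after `backward()`.

```python
import torch
import torch.nn as nn


class Branchy(nn.Module):
    """Hypothetical model whose used parameters depend on a runtime flag."""

    def __init__(self):
        super().__init__()
        self.shared = nn.Linear(4, 4)
        self.branch_a = nn.Linear(4, 1)
        self.branch_b = nn.Linear(4, 1)

    def forward(self, x, use_a):
        h = torch.relu(self.shared(x))
        # Only one branch participates in the graph for a given call.
        return self.branch_a(h) if use_a else self.branch_b(h)


def unused_parameter_names(model, *inputs):
    """Run one forward/backward pass and list parameters that got no gradient."""
    model.zero_grad(set_to_none=True)
    model(*inputs).sum().backward()
    return sorted(name for name, p in model.named_parameters() if p.grad is None)


model = Branchy()
unused = unused_parameter_names(model, torch.randn(2, 4), True)
print(unused)  # ['branch_b.bias', 'branch_b.weight']
```

A model like this is the case where the flag is genuinely needed; a model for which the list is always empty is the case the warning in this commit targets.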

Commit 78e71ce

[doc] fix doc formatting for `torch.randperm` and `torch.repeat_inter…

Commit 4da9ceb

Migrate some torch.fft tests to use OpInfos (#48428)

Summary: Pull Request resolved: #48428 Test Plan: Imported from OSS Reviewed By: ngimel Differential Revision: D25868666 Pulled By: mruberry fbshipit-source-id: ca6d0c4e44f4c220675dc264a405d960d4b31771

Commit fb73cc4

Cleanup unnecessary SpectralFuncInfo logic (#48712)

Summary: Pull Request resolved: #48712 Test Plan: Imported from OSS Reviewed By: ngimel Differential Revision: D25868675 Pulled By: mruberry fbshipit-source-id: 90b32b27d9a3d79c3754c4a1c0747dbe0f140192

Commit d25c673

test_ops: Only run complex gradcheck when complex is supported (#49018)

Summary: Pull Request resolved: #49018 Test Plan: Imported from OSS Reviewed By: ngimel Differential Revision: D25868683 Pulled By: mruberry fbshipit-source-id: d8c4d89c11939fc7d81db8190ac6b9b551e4cbf5

Commit 5347398

remove redundant tests from tensor_op_tests (#50096)

Summary: All these unary operators already have an entry in the OpInfo DB. Pull Request resolved: #50096 Reviewed By: zhangguanheng66 Differential Revision: D25870048 Pulled By: mruberry fbshipit-source-id: b64e06d5b9ab5a03a202cda8c22fdb7e4ae8adf8

Commit 5546a12

Fix Error with torch.flip() for cuda tensors when dims=() (#50325)

Summary: Fixes #49982 The method `flip_check_errors`, which is called from the CUDA file, threw an exception when the size of dims was <= 0. This PR changes that condition to < 0 and adds a separate condition that returns from the method when the size is zero. The early return is needed because, past that point, the method performs checks that expect dims to have a non-zero size. Also removed the comment/condition written to point to the issue. mruberry kshitij12345 please review this once. Pull Request resolved: #50325 Reviewed By: zhangguanheng66 Differential Revision: D25869559 Pulled By: mruberry fbshipit-source-id: a831df9f602c60cadcf9f886ae001ad08b137481
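
The behavior being fixed can be illustrated with a small sketch. It runs on CPU so that it works without a GPU; the commit itself concerns the CUDA path, which is assumed to behave the same way once the fix is in. With an empty `dims`, no axis is reversed, so the result should equal the input.

```python
import torch

x = torch.arange(6).reshape(2, 3)

# Empty dims: nothing is reversed, so the values are unchanged.
same = torch.flip(x, dims=())

# For comparison, reversing along the last dimension.
flipped = torch.flip(x, dims=(1,))

print(torch.equal(same, x))
print(flipped.tolist())
```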

Commit 314351d

Summary: This PR adds `torch.linalg.pinv`. Changes compared to the original `torch.pinverse`:
* New kwarg "hermitian": with `hermitian=True` eigendecomposition is used instead of singular value decomposition.
* `rcond` argument can now be a `Tensor` of appropriate shape to apply matrix-wise clipping of singular values.
* Added `out=` variant (allocates temporary and makes a copy for now)

Ref. #42666 Pull Request resolved: #48399 Reviewed By: zhangguanheng66 Differential Revision: D25869572 Pulled By: mruberry fbshipit-source-id: 0f330a91d24ba4e4375f648a448b27594e00dead
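
A short usage sketch of the function described above, assuming a PyTorch build that includes `torch.linalg.pinv`. The matrices are arbitrary random test data, not taken from the PR. It checks the Moore-Penrose identity `A @ pinv(A) @ A == A` and that the `hermitian=True` path agrees with the default path on a symmetric matrix.

```python
import torch

torch.manual_seed(0)

# Tall random matrix; float64 keeps the numerical checks tight.
A = torch.randn(5, 3, dtype=torch.float64)
P = torch.linalg.pinv(A)

# Moore-Penrose condition: A @ P @ A reproduces A.
reconstructs = torch.allclose(A @ P @ A, A, atol=1e-6)

# A symmetric (Hermitian) matrix built from A, so hermitian=True is valid.
S = A.T @ A
P_hermitian = torch.linalg.pinv(S, hermitian=True)
paths_agree = torch.allclose(P_hermitian, torch.linalg.pinv(S), atol=1e-6)

print(P.shape, reconstructs, paths_agree)
```

Passing `hermitian=True` for a matrix that is not actually Hermitian is a caller error; the sketch constructs `S` so that the precondition holds.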

Commit 9384d31

add type annotations to torch.nn.modules.normalization (#49035)

Summary: Fixes #49034 Pull Request resolved: #49035 Test Plan: Imported from GitHub, without a `Test Plan:` line. Force rebased to deal with merge conflicts Reviewed By: zhangguanheng66 Differential Revision: D25767065 Pulled By: walterddr fbshipit-source-id: ffb904e449f137825824e3f43f3775a55e9b011b

Commit 4411b5a

Disable complex dispatch on min/max functions (#50347)

Summary: Fixes #50064 **PROBLEM:** In issue #36377, min/max functions were disabled for complex inputs (via dtype checks). However, min/max kernels are still being compiled and dispatched for complex. **FIX:** The aforementioned dispatch has been disabled & we now rely on errors produced by dispatch macro to not run those ops on complex, instead of doing redundant dtype checks. Pull Request resolved: #50347 Reviewed By: zhangguanheng66 Differential Revision: D25870385 Pulled By: anjali411 fbshipit-source-id: 921541d421c509b7a945ac75f53718cd44e77df1

Commit 6420071

Enable fast pass tensor_fill for single element complex tensors (#50383)

Summary: Pull Request resolved: #50383 Test Plan: Imported from OSS Reviewed By: heitorschueroff Differential Revision: D25879881 Pulled By: anjali411 fbshipit-source-id: a254cff48ea9a6a38f7ee206815a04c31a9bcab0

Commit 5834438

Add new patterns for ConcatAddMulReplaceNaNClip (#50249)

Summary: Pull Request resolved: #50249 Add a few new patterns for `ConcatAddMulReplaceNanClip` Reviewed By: houseroad Differential Revision: D25843126 fbshipit-source-id: d4987c716cf085f2198234651a2214591d8aacc0

Commit 158c98a

[PyTorch] Devirtualize TensorImpl::sizes() with macro (#50176)

Summary: Pull Request resolved: #50176 UndefinedTensorImpl was the only type that overrode this, and IIUC we don't need to do it. ghstack-source-id: 119609531 Test Plan: CI, internal benchmarks Reviewed By: ezyang Differential Revision: D25817370 fbshipit-source-id: 985a99dcea2e0daee3ca3fc315445b978f3bf680

Commit b5d3826

[JIT] Frozen Graph Conv-BN fusion (#50074)

Summary: Pull Request resolved: #50074 Adds Conv-BN fusion for models that have been frozen. I haven't explicitly tested perf yet, but it should be equivalent to the results from Chillee's PR [here](https://github.com/pytorch/pytorch/pull/476570) and [here](#47657 (comment)). Click on the PR for details, but it's a good speedup. In a later PR in the stack I plan on making this optimization on by default as part of `torch.jit.freeze`. In a later PR I will also add a peephole so that conv->batchnorm2d doesn't generate a conditional checking # dims. Zino was working on freezing and left the team, so I'm not really sure who should be reviewing this, but I don't care too much so long as I get a review. Test Plan: Imported from OSS Reviewed By: tugsbayasgalan Differential Revision: D25856261 Pulled By: eellison fbshipit-source-id: da58c4ad97506a09a5c3a15e41aa92bdd7e9a197
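
The arithmetic behind Conv-BN fusion can be sketched in eager mode. This is not the JIT pass added by the PR; `fold_bn_into_conv` is a hypothetical helper written for illustration, and it assumes an affine `BatchNorm2d` in eval mode with tracked running statistics. In eval mode, batch norm computes `(y - mean) / sqrt(var + eps) * gamma + beta` per channel, which can be folded into the convolution's weight and bias.

```python
import torch
import torch.nn as nn


def fold_bn_into_conv(conv, bn):
    """Return a new Conv2d equivalent to bn(conv(x)) for eval-mode batch norm."""
    # Per-output-channel scale: gamma / sqrt(running_var + eps).
    scale = bn.weight / torch.sqrt(bn.running_var + bn.eps)
    conv_bias = conv.bias if conv.bias is not None else torch.zeros_like(bn.running_mean)
    fused = nn.Conv2d(
        conv.in_channels, conv.out_channels, conv.kernel_size,
        stride=conv.stride, padding=conv.padding,
        dilation=conv.dilation, groups=conv.groups, bias=True,
    )
    with torch.no_grad():
        # Scale each output filter; shift the bias by the normalized mean.
        fused.weight.copy_(conv.weight * scale.reshape(-1, 1, 1, 1))
        fused.bias.copy_((conv_bias - bn.running_mean) * scale + bn.bias)
    return fused


torch.manual_seed(0)
conv = nn.Conv2d(3, 8, kernel_size=3, padding=1).eval()
bn = nn.BatchNorm2d(8).eval()
with torch.no_grad():
    # Non-trivial statistics so the check is meaningful.
    bn.running_mean.uniform_(-1, 1)
    bn.running_var.uniform_(0.5, 2.0)
    bn.weight.uniform_(0.5, 1.5)
    bn.bias.uniform_(-1, 1)

x = torch.randn(2, 3, 16, 16)
with torch.no_grad():
    reference = bn(conv(x))
    fused_out = fold_bn_into_conv(conv, bn)(x)

max_abs_error = (reference - fused_out).abs().max().item()
print(max_abs_error)
```

The fold is only valid when the batch norm statistics are constants, which is why it pairs naturally with frozen models.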

Commit 035229c